The TOP500 organizers published their 45th twice-yearly list this week in tandem with ISC 2015 in Frankfurt, Germany. What is becoming increasingly clear, as our analysis piece covered and as many in the community have also pointed out, is that the salad days of “better-than-Moore’s” performance growth hit an inflection point in 2008.

We went into the various drivers for this slowdown in the piece — the aftershocks of recession-era spending cuts, the limits of CMOS scaling, and slowed adoption cycles from sites seeking to minimize risk. What we didn’t mention was what these trends say about the ability of the TOP500 LINPACK benchmark to keep pace with evolving system and application demands.

The debate centers on the continued utility of the High-Performance LINPACK (HPL) benchmark in an era of new architectures and different data access patterns. Discussion over the relevance of the list kicked into high gear when the NCSA Blue Waters administrators opted out of the TOP500 in 2012, and since then there has been growing community support for alternative benchmarks that better represent real-world application performance.

LINPACK creator Dr. Jack Dongarra was candid in acknowledging that application sets have migrated since HPL was first implemented. A growing base of HPC applications relies on partial differential equations. These are sparse matrix problems, but HPL only tests the solution of dense linear systems. Two years ago, at ISC 2013, Dongarra, along with Michael Heroux of Sandia National Laboratories and Piotr Luszczek, presented a new concept for ranking systems called the High Performance Conjugate Gradient (HPCG) benchmark.

The team sees HPCG “as a more relevant metric for ranking HPC systems than the High Performance LINPACK (HPL) benchmark that is currently used by the TOP500 benchmark.” According to the group’s website, the benchmark was designed “to exercise computational and data access patterns that more closely match a broad set of important applications, and to give incentive to computer system designers to invest in capabilities that will have impact on the collective performance of these applications.”
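To make the dense-versus-sparse distinction concrete, here is a minimal sketch of an unpreconditioned conjugate gradient solve on a small sparse system. It is an illustration only, not the HPCG reference code: the 1D Poisson test matrix, the compressed sparse row (CSR) layout, and every function name (`poisson_1d_csr`, `spmv`, `conjugate_gradient`) are assumptions chosen for this example, and the real benchmark is considerably more elaborate.

```python
"""Illustrative sketch only: not the HPCG reference code."""


def poisson_1d_csr(n):
    """Return an n x n tridiagonal matrix (2 on the diagonal, -1 beside it) in CSR form."""
    values, col_idx, row_ptr = [], [], [0]
    for i in range(n):
        for j, v in ((i - 1, -1.0), (i, 2.0), (i + 1, -1.0)):
            if 0 <= j < n:  # skip neighbours that fall outside the grid
                values.append(v)
                col_idx.append(j)
        row_ptr.append(len(values))
    return values, col_idx, row_ptr


def spmv(matrix, x):
    """Sparse matrix-vector product: touches only the stored nonzeros."""
    values, col_idx, row_ptr = matrix
    return [
        sum(values[k] * x[col_idx[k]] for k in range(row_ptr[i], row_ptr[i + 1]))
        for i in range(len(row_ptr) - 1)
    ]


def dot(a, b):
    """Inner product of two equal-length vectors."""
    return sum(p * q for p, q in zip(a, b))


def conjugate_gradient(matrix, b, tol=1e-10, max_iter=1000):
    """Solve matrix @ x = b for a symmetric positive definite matrix.

    Returns the approximate solution and the number of iterations used.
    """
    x = [0.0] * len(b)
    r = list(b)  # residual b - A x, with x starting at zero
    p = list(r)  # first search direction
    rs_old = dot(r, r)
    for iteration in range(max_iter):
        if rs_old ** 0.5 < tol:  # residual norm small enough: stop
            return x, iteration
        ap = spmv(matrix, p)
        alpha = rs_old / dot(p, ap)  # step length along p
        x = [xi + alpha * pi for xi, pi in zip(x, p)]
        r = [ri - alpha * api for ri, api in zip(r, ap)]
        rs_new = dot(r, r)
        # New direction: current residual plus a multiple of the old direction.
        p = [ri + (rs_new / rs_old) * pi for ri, pi in zip(r, p)]
        rs_old = rs_new
    return x, max_iter


if __name__ == "__main__":
    n = 50
    A = poisson_1d_csr(n)
    b = spmv(A, [1.0] * n)  # right-hand side whose exact solution is all ones
    x, iterations = conjugate_gradient(A, b)
    print(iterations, max(abs(xi - 1.0) for xi in x))
```

For this 50-by-50 example the CSR arrays hold 148 nonzeros, where a dense layout would store 2,500 values. Each iteration is dominated by a sparse matrix-vector product and a few vector updates, which do little arithmetic per value fetched from memory. That is, roughly, the kind of data access pattern a dense solve like HPL does not exercise.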

The team officially launched the new benchmarking scheme, HPCG Benchmark Release 1.0, at SC13. The following June, as part of ISC 2014, the very first list debuted, featuring systems built on Fujitsu, Intel, and NVIDIA technologies.

Today at ISC 2015 in Frankfurt, Germany, the HPCG list celebrated its one-year anniversary with the publication of its third iteration of rankings. It takes a while for benchmark efforts to get going, since involvement is of course optional and can be perceived as risky (no one wants to be last), but the list has already grown 180 percent (by machine count) since it was established. The first list contained 15 entries, the second had 25, and the current one contains more than 40.

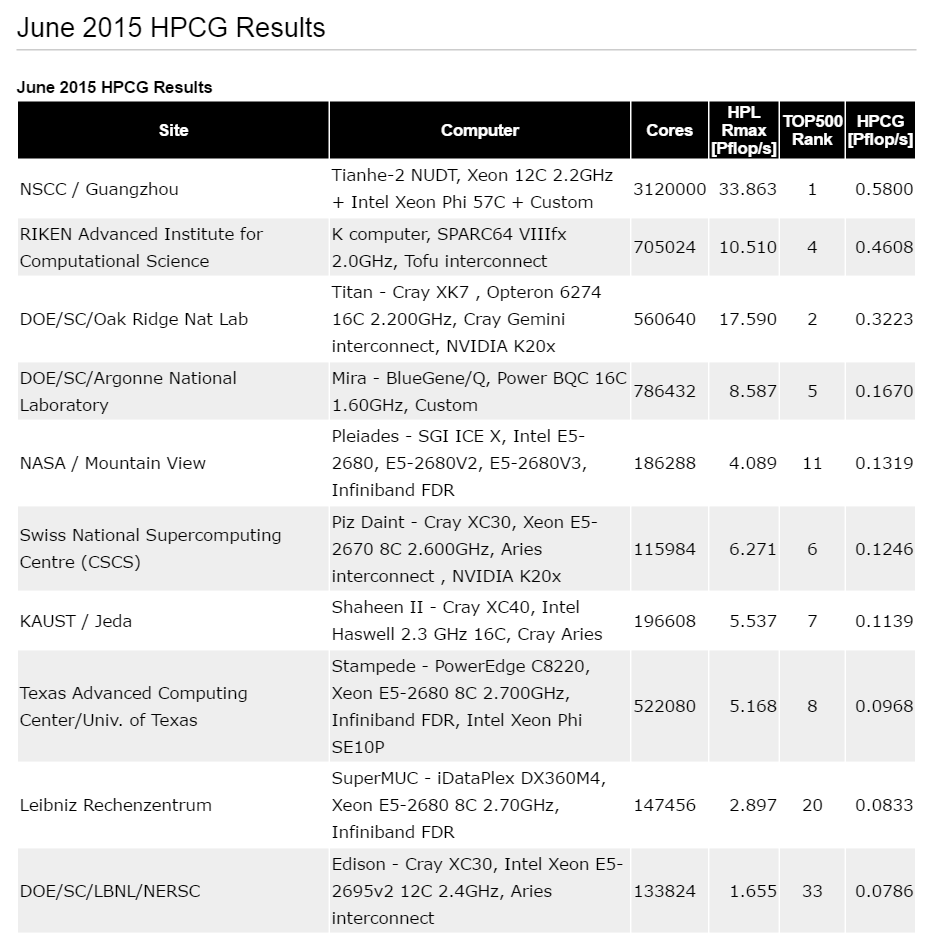

The top 10 machines from the third HPCG-based list. The full listing has 40 entries.

According to the benchmark founders, the third list “contains entries from many of the top 50 HPL systems but exhibits a significant shuffling of the HPL rankings, indicating that HPCG features are exposing different and complementary system characteristics.”

Highlights from the latest ranking:

• The HPCG list of supercomputing sites now features 40 entries, most of them from the very top of the TOP500 list.

• New supercomputers featured in this edition (and also coming to the TOP500) are Saudi Arabia's Shaheen II, built by Cray, and Lomonosov 2 (based on NVIDIA Kepler), a follow-up to Lomonosov (based on NVIDIA Fermi), from the Russian computer company T-Platforms.

• A strong showing from Japan and from the NEC SX vector computer, which achieves over 10% of its peak performance on HPCG, compared with a few percentage points for the rest of the machines.

• Updated results from TACC, with the system tested at a larger scale.

• IBM BlueGene machines make their first appearance on the list.

Dongarra and his colleagues are clear that they do not intend for the new benchmark to replace or diminish the role of the TOP500, which, owing to its reach and recognition, is a valuable metric for larger trends in supercomputing. The stated goal for HPCG is rather “to augment the TOP500 listing with a benchmark that correlates with important scientific and technical apps not well represented by HPL.”

The “companion metric” aims to do this by stressing floating-point performance together with communication bandwidth and latency, and by exercising the elements important to today’s architectures, such as messaging, memory, and parallelization.

The current HPCG Suite Release version is 2.4.