The release of three new software enhancements adds even more capabilities to Chelsio Communications’ powerful Terminator 5 (T5) ASIC.

The T5 is a fifth-generation, high-performance 2x40Gbps/4x10Gbps server adapter engine with Unified Wire capability, which allows offloaded storage, compute and networking traffic to run simultaneously. T5-based adapters are high-performance drop-in replacements for Fibre Channel storage adapters and InfiniBand RDMA adapters.

T5 is the only 40G Ethernet adapter with plug-and-play RDMA over Ethernet support that requires no extra network configuration.

The enhancements include:

- Linux 40GbE Data Plane Development Kit (DPDK) for high speed packet processing

- GPUDirect over 40GbE iWARP RDMA for high performance CUDA clustering

- NVMe over 40GbE iWARP RDMA for lower latency and higher throughput

Linux 40GbE DPDK

DPDK is a suite of packet processing libraries and NIC drivers optimized for running in user space to boost networking performance. DPDK provides a programming framework of open-source libraries to develop high speed data packet networking applications.

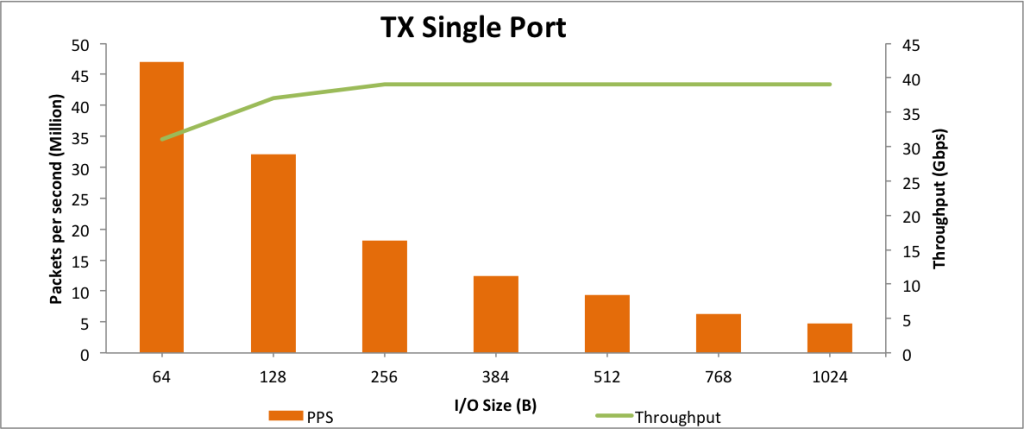

A recent report provides benchmark results that demonstrate the performance benefits of DPDK support for the T5 ASIC. The implementation allows networking applications to achieve packet processing capacity and throughput unmatched by the standard Linux kernel network stack or by other DPDK implementations.

The benchmarks, which used Chelsio’s T580-CR Unified Wire Adapter at 40GbE, show record-breaking performance, exceeding 47 million packets per second (MPPS) in unidirectional tests and 71 MPPS in bidirectional tests. In addition, the bandwidth curve shows T5’s DPDK implementation reaching line-rate throughput at an I/O size of 256B for both unidirectional and bidirectional traffic.
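To put these packet rates in context, the theoretical ceiling for a single 40GbE port can be worked out from the frame size. The sketch below is not taken from the benchmark report. It assumes that the quoted I/O size corresponds to the Ethernet frame size including the FCS, and that each frame carries the standard 20 bytes of on-wire overhead (preamble, start-of-frame delimiter and inter-frame gap). The function name `line_rate_mpps` is introduced here purely for illustration.

```python
# Standard Ethernet on-wire overhead per frame, in bytes:
# 7 (preamble) + 1 (start-of-frame delimiter) + 12 (inter-frame gap).
WIRE_OVERHEAD_BYTES = 20


def line_rate_mpps(link_gbps: float, frame_bytes: int) -> float:
    """Theoretical maximum packet rate for one port and one direction,
    in millions of packets per second.

    Assumes frame_bytes is the full Ethernet frame size, FCS included.
    """
    bits_per_frame = (frame_bytes + WIRE_OVERHEAD_BYTES) * 8
    return link_gbps * 1e9 / bits_per_frame / 1e6


if __name__ == "__main__":
    # Print the ceiling for common benchmark frame sizes on a 40GbE port.
    for size in (64, 128, 256, 512, 1024, 1518):
        rate = line_rate_mpps(40, size)
        print(f"{size:>5} B: {rate:6.2f} Mpps per port, per direction")
```

Under these assumptions, a 40GbE port tops out at roughly 59.5 MPPS with 64B frames and roughly 18.1 MPPS with 256B frames, so reaching line rate at 256B means sustaining about 18 million packets per second in each direction on each port.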

These outstanding results make T5’s DPDK solution an ideal fit for networking applications striving for the best in packet processing performance.

GPUDirect over 40GbE iWARP RDMA

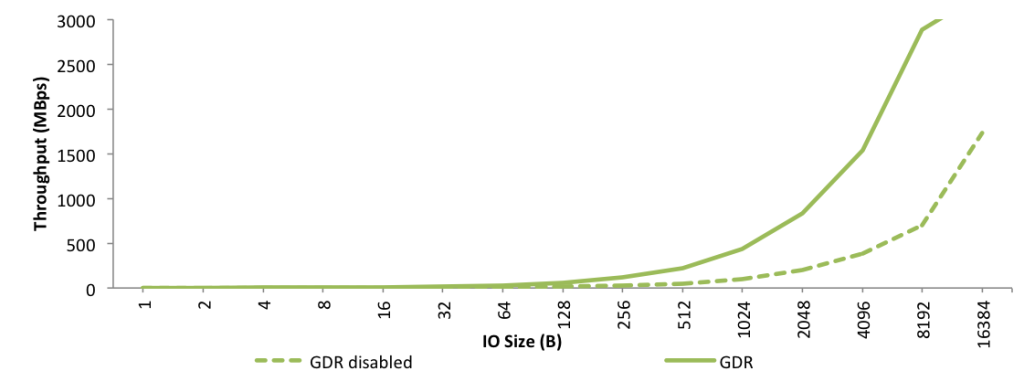

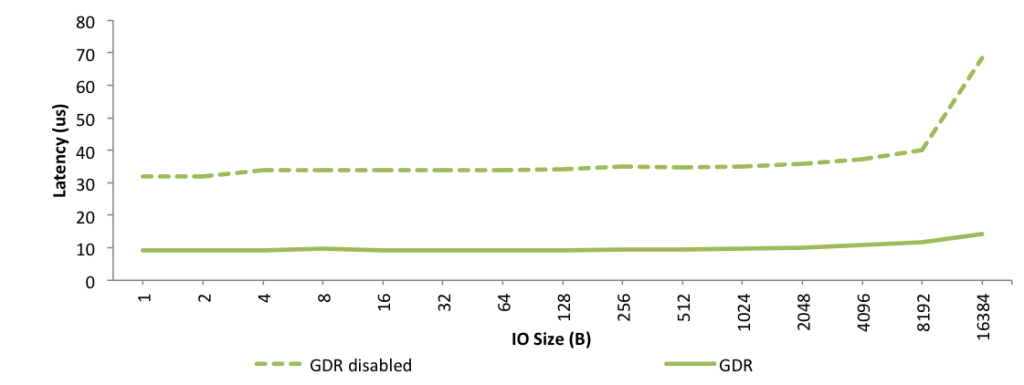

Remote DMA (RDMA) is a technology that achieves very high efficiency through direct system or application memory-to-memory communication, without CPU involvement or data copies. With RDMA-enabled adapters, all packet and protocol processing required for communication is handled in hardware by the network adapter, resulting in high performance. iWARP RDMA uses a hardware TCP/IP stack that runs in the adapter and completely bypasses the host software stack, eliminating the inefficiencies of software processing.

NVIDIA’s GPUDirect technology combined with iWARP RDMA takes full advantage of these advanced technologies. Network access to the GPU is achieved with high performance and efficiency. Since the host CPU and memory are completely bypassed, communication overheads and bottlenecks are eliminated, resulting in minimal impact on host resources, and translating to significantly higher overall cluster performance.

The use of Chelsio’s T580-CR iWARP RDMA adapter along with NVIDIA’s GPUDirect technology delivers dramatically lower latency and higher throughput for mission-critical scientific and HPC applications. The results also show that iWARP RDMA provides significantly higher bandwidth than competing RDMA over Ethernet protocols.

NVMe over 40GbE iWARP RDMA

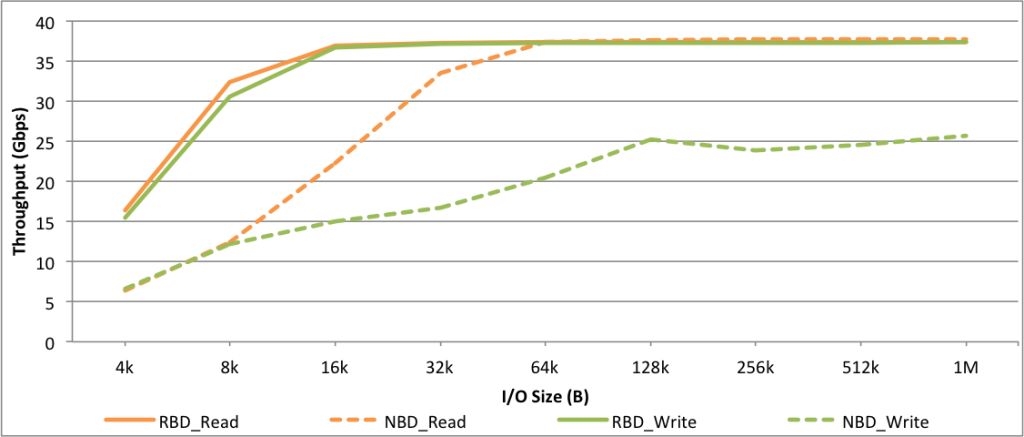

Developed by a consortium of storage and networking companies, NVM Express (NVMe) is an optimized interface for accessing PCI Express (PCIe) non-volatile memory (NVM) based storage solutions.

With an optimized stack, a streamlined register interface and a command set designed for high-performance solid-state drives (SSDs), NVMe is expected to provide significantly lower latency and higher throughput than SATA-based SSDs. The enhancement includes support for security and end-to-end data protection.

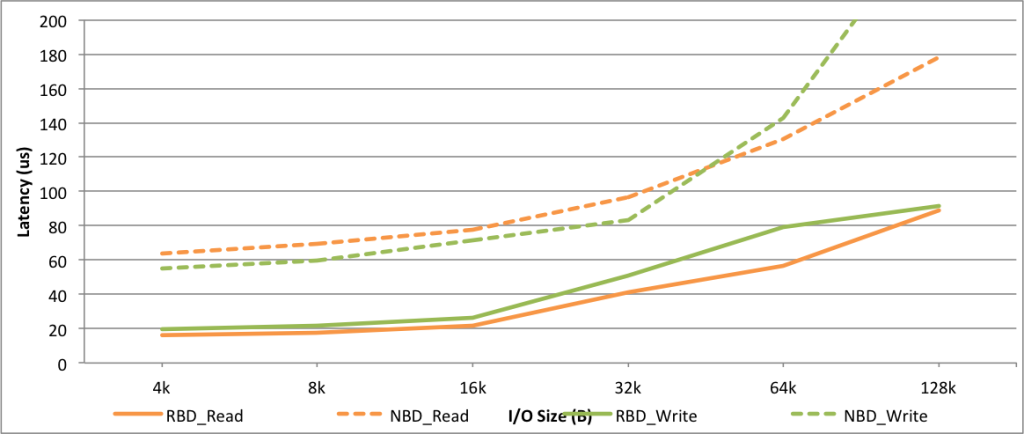

The benchmarks not only present superior throughput results, but also demonstrate the low remote storage access latency made possible by iWARP. Such latency makes it possible to exploit the full potential of ultra-low-latency SSDs and, combined with iWARP’s efficient high throughput, provides a next-generation, scalable storage network over standard, cost-effective Ethernet.

iWARP is the only RDMA over Ethernet protocol that offers the performance and scalability that storage requires, because it implements TCP/IP’s mature and proven design in hardware.

Related Links

- T5 Unified Wire Adapters

- Linux 40GbE DPDK Performance

- GPUDirect over 40GbE iWARP RDMA

- NVMe over 40GbE iWARP RDMA