With the recent launch of the Center for Advanced Technology Evaluation (CENATE), Pacific Northwest National Laboratory is well positioned to serve as a nexus for evaluating technologies that will be foundational for extreme-scale systems – all within a first-of-its-kind computing proving ground. Funded by the Department of Energy Office of Advanced Scientific Computing Research, CENATE’s mission is to deliver concrete benefits to PNNL and to the broader technology research community.

The idea is straightforward: Evaluations of early system technologies too often are conducted in isolation between individual research teams and technology providers. By creating a focal point for these evaluations, CENATE provides a needed research and development connection function. The intent is to productively impact the major advancements needed in computing technology and energy efficiency in the transition to exascale and beyond.

“It will be essential to engage a hierarchy of strategic partnerships and integrated methodologies to accelerate both numerically and data-intensive breakthrough technologies toward efficient, productive, high-end computing,” said DOE-ASCR Research Division Director William Harrod. “CENATE’s entire operations model speaks directly to this.” PNNL opened CENATE in mid-October and will be spreading the CENATE message as part of a contingent of national laboratories at the DOE booth at SC15 next week.

CENATE is a natural progression of activities within PNNL’s Performance and Architecture Lab (PAL). In the last few years, PNNL used internal investments to create a state-of-the-art measurement facility. The laboratory affords accurate measurements of performance, power, and thermal effects, from device to system level. PAL has also developed methods and tools for modeling and simulating performance and power; researchers will be able to use the infrastructure and tools to gain deeper insight into system and application performance and, hopefully, an improved ability ‘to design ahead.’

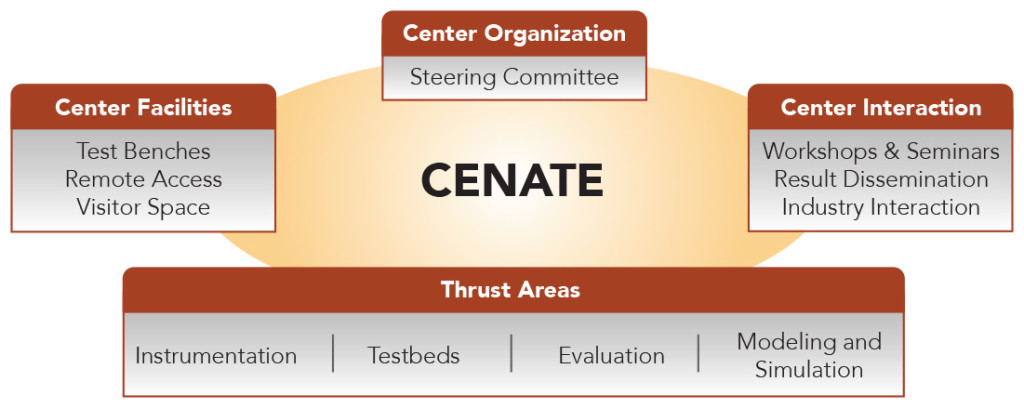

“Application workloads and technologies under investigation will cover many scientific domains of interest to the DOE,” said Adolfy Hoisie, PNNL’s chief scientist for computing and CENATE’s principal investigator and director. “CENATE facilities will be made available to the DOE laboratory community, and our findings will be disseminated among the DOE complex and to technology provider communities within NDA and IP limitations. Moreover, we will make the most of our industry connections to provide added technology evaluation capabilities, including early access to technologies, equipment, and knowledge resources.”

The CENATE Resource

CENATE is designed to provide a multi-perspective, integrated approach in its evaluation process, undertaking empirical analyses that range from sub-systems or constituent components to full nodes and small clusters with network switches that are fully populated with compute nodes. Sub-systems of interest include the processor socket (homogeneous and accelerated systems), memories (dynamic, static, memory cubes), motherboards, networks (network interface cards and switches), and input/output and storage devices.

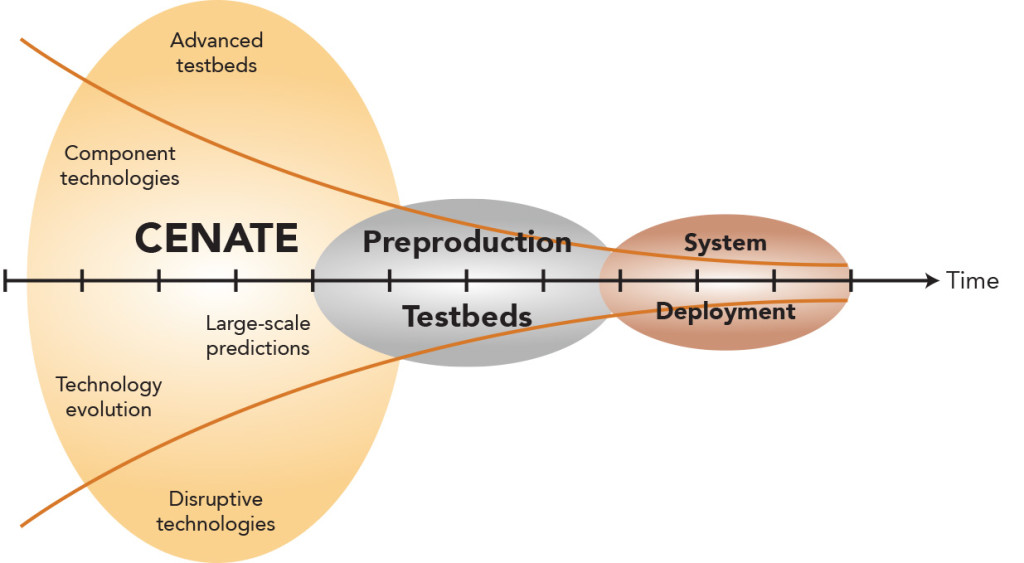

The CENATE core encompasses instrumentation, testbeds, evaluation, and modeling and simulation research areas that primarily will focus on workload applications of interest to DOE, with the notion that the broader high-performance computing community can take advantage of synergies as they develop. Hoisie emphasized that the specific ‘how, when, and what type of evaluation mechanism’ employed in the CENATE pipeline will depend completely on where the technology of interest is in its life cycle.

“Within CENATE, we can take a concept, an early idea where a hardware prototype doesn’t exist and employ modeling and simulation,” he said. “At another stage of the technology development pipeline, very early technologies may be available for measurement in the Advanced Measurement Lab at device level, such as 7nm technology. At another stage of technology maturity a subsystem such as a novel memory board would be available for measurements in AML.”

In both cases – from early measurements to assessment of the impact of such technologies on DOE applications, systems, and power performance – research will be conducted using PAL’s modeling and simulation bag of tools. This measure-model-design pipeline is just one of many potential options that CENATE capabilities afford for steering future system designs and application mapping for optimal performance under the constraints of power consumption.

Tools to Grow By

Measurement instrumentation will provide detailed power information facilitated by PNNL’s investments in technical capabilities that already include the AML. These dedicated physical labs will enable rapid evaluations of component technologies, while evaluations at small and medium scales will feed the analysis pipeline toward modeling and simulation to assess the impact of technologies at large-scale and explore how the technologies may evolve in subsequent generations.

The testbed infrastructure features numerous workbenches—with room for more—that accommodate black box, or complete systems, with standard I/O devices, network connections, and external power meters that can be evaluated in a relatively short turnaround. In addition, there are open-box, or white box, systems where carrier boards are exposed and only include the essentials, such as CPUs, memory, and storage. These systems require a longer setup but also provide added power evaluation via external digital-to-analog converters and voltage probes. CENATE also can manage gray box, or single-component, evaluations that require specific carrier boards and coarse power instrumentation to examine. Gray box evaluation times will vary by system.

“CENATE will assist in preparing applications for future technology generations by assessing the importance of components and their impact on large-scale system deployments still on the horizon,” said Darren Kerbyson, CENATE’s lead scientist. “Ultimately, our evaluations and prediction processes will weed out robustness issues associated with early hardware that can be an imposing barrier en route to production.”

CENATE leverages PNNL’s existing expertise in analytical modeling of performance and power of large-scale applications and systems, as well as work on adapting open-source, near-cycle-accurate system simulations for small scales. Hoisie and Kerbyson manage the center, and the evaluation area leads include Roberto Gioiosa, Instrumentation; Andres Marquez, Testbeds (scalability and new technology); Nathan Tallent, Evaluation; and Kevin Barker, Modeling and Simulation.

“To enable extreme-scale science and computing, especially as defined by DOE-ASCR, we need viable methods for more effectively examining the applications that pave the way to the new state of the art,” Hoisie said. “These evaluations also must provide accessible feedback in the form of useful data about performance and energy efficiencies. These data then will be employed to refine technology using co-design methods and additional optimizations. CENATE is a clear bridge that also aligns well with the current Executive Order for the National Strategic Computing Initiative.”

The long-term goal is for CENATE to become deeply enmeshed in the many computing projects funded by DOE for exascale and beyond. To that end, CENATE facilities will be made available to the DOE laboratory community via an instrument-like resource and time allocation to assure broad connectivity. A wiki is already in development to facilitate rapid dissemination of results, within the confines of non-disclosure and intellectual property agreements with industry partners, as well as to provide a users’ forum for observations, suggestions, and general comments. Workshops, webinars, and hosted onsite visits, all aimed at developing new testing methods and procedures, will further promote interaction and augment CENATE’s general expertise through contributions from experts within the HPC scientific community.
