Roughly six months ago SGI reorganized into three business units – traditional HPC; high performance data analytics (HPDA); and SGI services – each with a general manager at the head. Gabriel Broner, then vice president of software innovation, became general manager for the HPC unit.

Broner is a familiar voice in the HPC community. He joined Cray in 1991, where he led operating system design and implementation for Cray’s vector supercomputers and the massively parallel Cray T3E. After SGI’s acquisition of Cray in 1996, Broner became senior vice president and general manager at SGI for software and storage. He ventured outside of HPC for a few years, first at Microsoft (2006-2009) and then Ericsson (2010-2014), before rejoining SGI in 2014.

“We’ve been a high performance compute company for many years. Over the last year we’ve also been looking at how to take some of the products and solutions we have developed for HPC, including very big data systems, to a broader market so we created a separate group focusing on the enterprise (HPDA business unit). We also created a services BU,” said Broner in an interview with HPCwire.

Fresh from SGI’s customer advisory board meeting late last year and settling into his new role as HPC general manager, Broner spoke with HPCwire about his job and key 2016 technology trends – many gleaned from the customer council – and how they inform SGI product offerings.

“Today, the HPC business unit [still] represents the majority of SGI revenue and customers and the focus is to continue to grow the business by bringing together engineering, product management, marketing, vertical marketing, the applications team, focusing on the HPC customer,” said Broner.

The HPC GM job is pretty straightforward (most of the time), he said. “Our focus is on delivering these production supercomputing systems. The job is simple, right, understand customer needs, make sure we innovate and deliver around those needs, and ensure we align across the company in those efforts. It’s only been six months but I feel we are moving the ball forward.”

Echoing the industry-wide chorus, Broner suggests storage, memory, and data management are the new bottlenecks and customer care-abouts – replacing the traditional focus on chasing FLOPS. “What’s happening is data has been growing to the point that it is too large to move and, increasingly, compute will happen where the data is. That creates a number of challenges. You have to manage large datasets. Things such as multiple tiers of storage are a necessity.”

In-memory processing is a good example of technology being used to tackle big data analysis in both the enterprise and traditional HPC. SAP HANA has been growing in popularity for use with enterprise applications. “We are also seeing new uses [for SAP HANA] such as in the genomic space,” said Broner.

One SGI customer, The Genome Analysis Centre (TGAC), used in-memory processing to perform a complete assembly of the wheat genome; this isn’t a trivial task given that the wheat genome is large, hexaploid (the human genome is diploid), and difficult to work with. TGAC focuses on world food supply issues, and wheat accounts for about 38 percent of the world’s food. It’s a classic big data, in-memory processing project, said Broner.

“The whole space of data is a big headache for many of our customers and I view it as probably hitting an inflection point,” he said.

Flash memory, especially with its declining cost, is another increasingly important component for unclogging jammed data flows, said Broner. SGI offers “flash at the first tier in a file system and we are achieving within the box performance of 200 GBs to storage or 30 million IOPs which is half of the level of performance in supercomputing but we are able to achieve that within one box by writing to storage and flash,” he said.

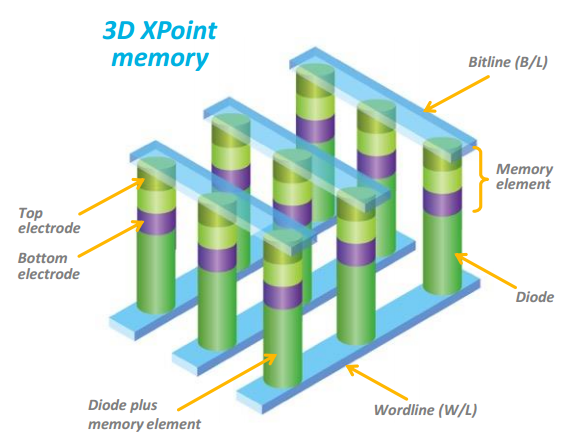

SGI also has high expectations for Intel’s and Micron’s forthcoming 3D XPoint non-volatile memory. “We’ll be able to put 1 PB of memory in our systems in nonvolatile memory. That’s going to change how people use storage and change what’s [considered] really storage and what’s really memory, and change memory hierarchies and how people manage those,” said Broner. The new technology is expected to have up to 1,000 times lower latency and exponentially greater endurance than NAND.

Power management is another much-discussed problem that has steadily risen to top-of-mind status, according to Broner. “A few customers at the SGI advisory meeting were talking about having 5 megawatts in their datacenters so the next systems will be bound by the power they have. We’ve been working on power and cooling and achieving higher density with warm water cooling in our ICE XA product. That’s something I expect we’ll see more of from ourselves and other vendors. We have also been working on power management at the software level,” he said.

Working with Altair (PBS Pro), SGI recently introduced systems management approaches to lowering power consumption, including, for example, the ability to submit a job and indicate whether an application should be run at high power or low.

“One of our customers said, ‘If I have an application that solves my problem and I have two versions of it and one code that runs at half the power of the other code, why wouldn’t I run that code?’ This was the first time I’ve heard that,” said Broner.

Users also seem more willing to accept a performance penalty for reducing power consumption, suggested Broner. Tamping down CPU speeds, for example, instead of having to shut parts of the system down is sometimes a preferred approach, though not always. It’s a matter of assessing tradeoffs.
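One way to picture that tradeoff is energy to solution, which is simply average power draw multiplied by runtime. The short Python sketch below is illustrative only: the `JobProfile` class, the `lower_energy` helper, and every number in it are hypothetical and do not come from SGI, Altair, or any customer. It shows how a run with capped clocks can take longer yet still consume less energy overall.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class JobProfile:
    """Hypothetical description of one way to run the same job."""
    name: str
    avg_power_kw: float   # assumed average power draw while the job runs
    runtime_hours: float  # assumed wall-clock time to finish

    @property
    def energy_kwh(self) -> float:
        # Energy to solution = average power x runtime.
        return self.avg_power_kw * self.runtime_hours


def lower_energy(a: JobProfile, b: JobProfile) -> JobProfile:
    """Return whichever profile uses less total energy (ties go to `a`)."""
    return a if a.energy_kwh <= b.energy_kwh else b


# Made-up numbers: capping clocks cuts power by 30% but adds 20% runtime.
full_speed = JobProfile("full speed", avg_power_kw=100.0, runtime_hours=10.0)
capped = JobProfile("capped clocks", avg_power_kw=70.0, runtime_hours=12.0)

best = lower_energy(full_speed, capped)
print(f"{full_speed.name}: {full_speed.energy_kwh:.0f} kWh")
print(f"{capped.name}: {capped.energy_kwh:.0f} kWh")
print(f"lower energy to solution: {best.name}")
```

With these assumed figures the capped run finishes two hours later but uses less energy in total. Whether that is the right call depends on how much the extra runtime costs a given site, which is the kind of assessment Broner describes.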

Another trend SGI has taken notice of and addressed is virtualization. Beginning last year, SGI incorporated the ability to do virtualization using KVM. A few customers are using it today, according to Broner. A typical use case: “A customer who is running an application in Windows on their desktop and they want to submit it to run with more memory, on more CPUs, or longer periods of time [on a central HPC resource] but don’t want to learn Linux and multi-threading,” he said.

Interest in containers is also on the rise. “We’re hearing people start to talk about containers and Docker. I think it’s happening mostly because you don’t pay the performance penalty [you do with virtual machines]; they give you the manageability without the penalty although that penalty is less and less today.”

Heading up the other two business units are Rick Rinehart, senior vice president of services and support and general manager of the services business unit, and Brian Freed, vice president and general manager of high performance data analytics. It will be interesting to watch SGI’s push into the enterprise.

SGI computers have long been well entrenched in government and research academia. Indeed, just recently SGI won an order from the National Center for Atmospheric Research (see HPCwire article, SGI and DDN Notch Big Wins at National Center for Atmospheric Research). The push into broader enterprise poses new challenges.

An important part of SGI’s enterprise initiative may turn out to be its deal with Dell to resell SGI products. Writing in a blog post when the deal was struck last July, Freed noted, “[T]he enterprise space consists of thousands of potential customers, each with unique business requirements that require a level of customer intimacy that takes significant time to develop. Accordingly, we see the Dell partnership as a key growth vector given the strength and breadth of their enterprise relationships.”

On the product side generally, said Broner, SGI’s philosophy remains to innovate around customer needs while using standard components (hardware and software), and the company is closely aligned with Intel.

“We have constantly focused on using standard components,” said Broner. “All of our stack is standard Linux. We run Red Hat and SUSE so people are able to run standard applications.”

Broner recalled the Linux decision being made back when SGI was running Irix and IBM was running AIX. “It was kind of crazy to propose to use Linux but with Linux we went to a standards-based architecture. We use standard components, they work, that’s our approach, we only add uniqueness where it matters, and have been contributing to open source for all these years.”

The introduction six months ago of the full services offering, combined with a standard architecture, should help SGI in the enterprise, where reliability is usually a key driver. About 1,000 systems currently use SGI services, which are available as an option in the warranty, according to Broner. SGI employees remotely monitor performance and alerts and can intervene or advise to solve problems when they arise.

UPDATE (1/28/16):

SGI released its second quarter financial results shortly after this article was published. Below is an excerpt from the SGI release:

Total revenue for the second quarter was $152 million compared to $138 million in the second quarter of 2015. GAAP net loss for the second quarter was $0.5 million, or $(0.01) per share compared with a net loss of $10 million, or $(0.30) per share in the second quarter of 2015. Non-GAAP net income in the second quarter was $5 million, or $0.14 per share compared with net income of $0.1 million, or breakeven per share in the same quarter a year ago.

“The second quarter results marked our return to non-GAAP profitability with improvements in both the top and bottom line,” said Jorge Titinger, President and CEO. “We made significant progress in our high performance data analytics business as we surpassed $10 million in revenue this quarter. We also continued to gain traction winning large deals in HPC such as the recent NCAR award in the weather vertical.”

Here’s a link to the full release: http://www.hpcwire.com/off-the-wire/sgi-reports-fiscal-second-quarter-2016-financial-results/ and another to the conference call transcript: http://finance.yahoo.com/news/edited-transcript-sgi-earnings-conference-044044866.html