By now you’ve likely heard that scientists reported detecting the long-sought gravitational waves; this comes roughly 100 years after their prediction by Einstein’s theory of general relativity and more than 20 years after the first funding for the Laser Interferometer Gravitational-wave Observatory (LIGO) project to find them. You can safely assume the National Science Foundation (NSF), a prime funder of LIGO, is feeling well rewarded (and relieved) for the $620 million invested. It was a big-risk, big-reward project.

The event that created these particular gravitational waves – the spiraling of two black holes into each other about 1.3 billion light-years away (or 1.3 billion years ago) – was the most energetic event ever detected. For a brief moment, the collision of these black holes generated more power than all of the stars in the universe, meaning it briefly outshone the output of 100 billion galaxies, each with 100 billion stars.

“It’s hard to overstate how historic the moment is today. Think about all of the amazing astronomical objects we’re studying with radio astronomy, x-ray astronomy, ultraviolet astronomy, infrared astronomy or optical. Those are all just parts of the electromagnetic spectrum. This is an entirely new spectrum, from end to end, with as diverse sources as the electromagnetic spectrum that we have been working on since Galileo,” said Larry Smarr, founding director of the California Institute for Telecommunications and Information Technology (Calit2), a UC San Diego/UC Irvine partnership, who holds the Harry E. Gruber professorship in Computer Science and Engineering (CSE) at UCSD’s Jacobs School.

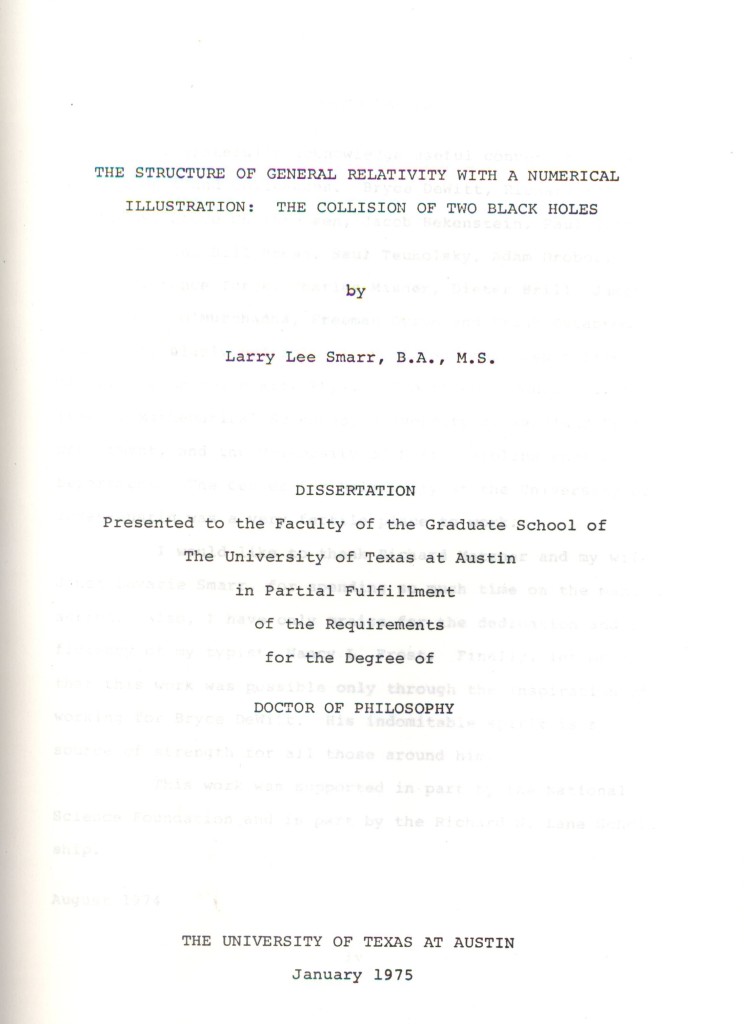

Modern science, of course, is propelled forward in large measure by advanced computing; in this case Smarr was one of the early architects of the supercomputing tools needed to solve Einstein’s equations. His Ph.D. thesis, written in 1975 and titled The Structure of General Relativity with a Numerical Illustration: The Collision of Two Black Holes, worked out the foundations of numerical relativity and began winning over theoretical physicists whose bias then favored analytic over computational methods.

First, let’s look at the remarkable LIGO project achievement itself. LIGO was originally proposed as a means of detecting gravitational waves in the 1980s by Rainer Weiss, professor of physics, emeritus, from MIT; Kip Thorne, Caltech’s Richard P. Feynman Professor of Theoretical Physics, emeritus; and Ronald Drever, professor of physics, emeritus, also from Caltech. Both Weiss and Thorne were present at the announcement.

Besides providing more proof for Einstein’s general theory of relativity, the ability to detect gravitational waves opens an entirely new window through which to observe the universe. This event was the collision of relatively modest-size black holes. As LIGO’s sensitivity is improved – it’s at one third of its spec now and expected to reach full sensitivity fairly quickly – a wealth of new and fascinating phenomena will be observed. Nobel prizes will ensue.

The achievement was announced yesterday at the annual American Association for the Advancement of Science (AAAS) meeting being held in Washington over the next few days. Gravitational waves carry information about their dramatic origins and about the nature of gravity that cannot be obtained from elsewhere. Physicists have concluded that the detected gravitational waves were produced during the final fraction of a second of the merger of two black holes to produce a single, more massive spinning black hole. This collision of two black holes had been predicted but never observed.

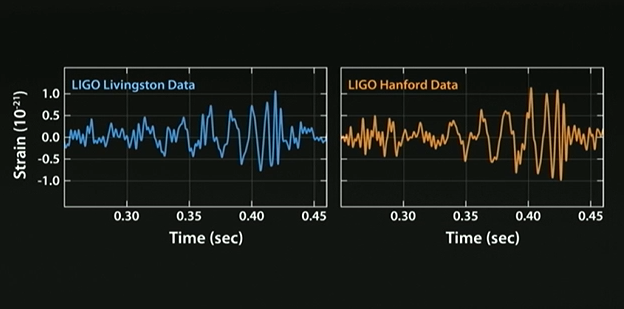

For the record, these gravitational waves were detected on Sept. 14, 2015 at 5:51 a.m. EDT (09:51 UTC) by both of the twin Laser Interferometer Gravitational-wave Observatory (LIGO) detectors, located in Livingston, Louisiana, and Hanford, Washington. The LIGO observatories were conceived, built and are operated by Caltech and MIT. The discovery, accepted for publication in the journal Physical Review Letters, was made by the LIGO Scientific Collaboration (which includes the GEO Collaboration and the Australian Consortium for Interferometric Gravitational Astronomy) and the Virgo Collaboration using data from the two LIGO detectors.

Based on the observed signals, LIGO scientists estimate that the black holes for this event were about 29 and 36 times the mass of the sun, and the event took place 1.3 billion years ago. About three times the mass of the sun was converted into gravitational wave energy in a fraction of a second — with a peak power output about 50 times that of the whole visible stellar universe. By looking at the time of arrival of the signals — the detector in Livingston recorded the event 7 milliseconds before the detector in Hanford — scientists can say that the source was located in the Southern Celestial Hemisphere.
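The figures above can be checked with some back-of-the-envelope arithmetic. The sketch below is illustrative only and is not how the LIGO collaboration analyzes its data. It applies E = mc² to the roughly three solar masses quoted above, and it uses simple straight-line geometry to show how an arrival-time difference constrains the direction of the source. The detector separation of about 3,002 km is an assumption that does not appear in this article, and the function names are hypothetical.

```python
import math

SPEED_OF_LIGHT_M_S = 299_792_458.0   # exact, by definition of the metre
SOLAR_MASS_KG = 1.98847e30           # approximate mass of the sun
# ASSUMPTION (not stated in the article): straight-line distance
# between the Livingston and Hanford detectors, about 3,002 km.
DETECTOR_SEPARATION_M = 3.002e6


def radiated_energy_joules(solar_masses):
    """Energy equivalent of the given mass, using E = m * c**2."""
    return solar_masses * SOLAR_MASS_KG * SPEED_OF_LIGHT_M_S ** 2


def max_arrival_delay_s(separation_m=DETECTOR_SEPARATION_M):
    """Largest possible delay: a wave travelling along the baseline at light speed."""
    return separation_m / SPEED_OF_LIGHT_M_S


def baseline_angle_deg(delay_s, separation_m=DETECTOR_SEPARATION_M):
    """Angle between the detector baseline and the source direction.

    A plane wave arriving at angle theta to the baseline produces a delay
    of (separation / c) * cos(theta), so one delay pins the source to a
    cone (a ring on the sky), not to a single point.
    """
    cos_theta = delay_s / max_arrival_delay_s(separation_m)
    if not -1.0 <= cos_theta <= 1.0:
        raise ValueError("delay exceeds the light travel time between detectors")
    return math.degrees(math.acos(cos_theta))


if __name__ == "__main__":
    print(f"Energy radiated: {radiated_energy_joules(3.0):.2e} J")
    print(f"Maximum delay:   {max_arrival_delay_s() * 1e3:.1f} ms")
    print(f"Cone half-angle: {baseline_angle_deg(7e-3):.0f} degrees")
```

Under these assumptions the energy comes out at about 5.4 × 10^47 joules, the maximum possible delay between the two sites is about 10 milliseconds, and a 7-millisecond delay places the source on a ring about 46 degrees from the baseline. Two detectors alone can only narrow the source to such a ring, which is why the location is given as a broad region of the sky.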

LIGO research is carried out by the LIGO Scientific Collaboration (LSC), a group of more than 1,000 scientists from universities around the United States and in 14 other countries. More than 90 universities and research institutes in the LSC develop detector technology and analyze data; approximately 250 students are strong contributing members of the collaboration. The LSC detector network includes the LIGO interferometers and the GEO600 detector. The GEO team includes scientists at the Max Planck Institute for Gravitational Physics (Albert Einstein Institute, AEI), Leibniz Universität Hannover, along with partners at the University of Glasgow, Cardiff University, the University of Birmingham, other universities in the United Kingdom and the University of the Balearic Islands in Spain.

“This detection is the beginning of a new era: The field of gravitational wave astronomy is now a reality,” says Gabriela González, LSC spokesperson and professor of physics and astronomy at Louisiana State University.

The discovery was made possible by the enhanced capabilities of Advanced LIGO, a major upgrade that increases the sensitivity of the instruments compared to the first generation LIGO detectors, enabling a large increase in the volume of the universe probed — and the discovery of gravitational waves during its first observation run. NSF is the lead financial supporter of Advanced LIGO. Funding organizations in Germany (Max Planck Society), the U.K. (Science and Technology Facilities Council, STFC) and Australia (Australian Research Council) also have made significant commitments to the project.

There are many excellent accounts of the LIGO achievement (see links at the end of this article). The New York Times has a good brief video. There’s also a paper describing the LIGO discovery published online in Physical Review Letters (Observation of Gravitational Waves from a Binary Black Hole Merger) at the same time as yesterday’s announcement. Yet another interesting thread in the LIGO success story is Smarr’s personal connection to gravitational wave research, which as mentioned earlier stretches back to his graduate school days.

“My Ph.D. dissertation was on solving Einstein’s equations for the collision of two black holes and the gravitational radiation it would generate. In those days, I started out working on a CDC 6600,” recalled Smarr.

He was at the University of Texas at Austin, which had a relativity research center, and his Ph.D. advisor was Bryce DeWitt. These were early days for supercomputing. The CDC 6600 cranked out around 3 or 4 megaflops. Smarr moved up to a CDC 7600, roughly ten times more powerful with a peak of 36 megaflops. Today, a typical smartphone is a gigaflops device, Smarr noted.

The task was to develop computational techniques to solve Einstein’s equations for general relativity in curved space-time. “I used hundreds of hours doing that. I had to do the head-on collision, which actually makes the problem axisymmetric; so imagine two black holes gravitationally attracting each other on a line and crashing into each other. You couldn’t possibly compute orbiting. We knew that’s what we needed to do for reality, but you couldn’t do it with the supercomputers of those days.”

Access to sufficient compute power wasn’t the only challenge. Many relativity experts resisted the use of computational approaches. “Most had done all of their research relying on exact analytic solutions. I was arguing for computational science and that the only way to understand the dynamics was to use computers. There were a lot of people who were the leaders of general relativity in the 60s who felt that I was besmirching their beautiful analytic solutions,” said Smarr.

Smarr credits DeWitt with the vision that you could use supercomputers to solve the Einstein equations. When Smarr completed his degree, DeWitt advised him “to work with the masters of computational science and use the supercomputers. I said, well where are those, and he said you’ll have to get a top secret nuclear weapons clearance to go work inside Livermore (National Laboratory) or Los Alamos (National Lab).”

At the time, said Smarr, it was considered a crazy notion that universities would be able to support national supercomputer centers as they do now. The prevailing view was universities couldn’t handle anything that complicated. He went to Livermore. While there Smarr met a graduate student, Michael Norman, who had just started doing summer research at Livermore. That initiated a four-decade collaboration on many areas of computational astrophysics. Mike is currently the director of the San Diego Supercomputer Center.

“I realized if you could solve something as complicated as general relativity on supercomputers you could also be solving many other problems in science and engineering that researchers in academia were working on. That’s what led me over the years to develop the idea of putting in a proposal [along those lines] to NSF.”

So it is that Smarr’s graduate school experience working to computationally describe the collision of two black holes using Einstein’s equations led to a broader interest in solving science problems with supercomputers. He also became convinced that such computers could thrive usefully in academia, and in 1983 made an unsolicited proposal to NSF that ultimately became NCSA – the National Center for Supercomputing Applications.

Smarr was awarded the Golden Goose Award in 2014, which the American Association for the Advancement of Science, the Association of American Universities, other organizations, and supporters in Congress give to people whose seemingly foolish government-funded ideas turn out to be whopping successes for society at large. “It’s given to people who are working on some totally obscure project that people would normally make fun of and that ends up changing the world,” laughed Smarr.

“When I got to NCSA I wanted to continue my personal research on colliding black holes and so I hired a bright, energetic postdoc, Ed Seidel, who is now the director of NCSA and in between was the NSF Associate Director for Math and Physical Sciences, which has the funding for LIGO. You can’t make this stuff up,” said Smarr, who served as NCSA director for 15 years.

At NCSA, Smarr formed a numerical relativity group, led by Seidel. The group quickly became a leader in applying supercomputers to black hole and gravitational wave problems. For example, in 1994 one of the first three-dimensional simulations of two colliding black holes providing computed gravitational waveforms was carried out at NCSA by this group in collaboration with colleagues at Washington University.

NCSA as a center has continued to support the most complex problems in numerical relativity and relativistic astrophysics, including working with several groups addressing models of the gravitational wave sources seen by LIGO in this discovery. Even more complex simulations will be needed for anticipated future discoveries such as colliding neutron stars and black holes or supernova explosions.

NCSA has also played a role in developing the tools needed for simulating relativistic systems. The work of Seidel’s NCSA group led to the development of the Cactus Framework, a modular and collaborative framework for parallel computing which since 1997 has supported numerical relativists as well as other disciplines developing applications to run on supercomputers at NCSA and elsewhere. Built on the Cactus Framework, the NSF-supported Einstein Toolkit developed at Georgia Tech, RIT, LSU, AEI, Perimeter Institute and elsewhere now supports many numerical relativity groups modeling sources important for LIGO on the NCSA Blue Waters supercomputer.

“This historic announcement is very special for me. My career has centered on understanding the nature of black hole systems, from my research work in numerical relativity, to building collaborative teams and technologies for scientific research, and then also having the honor to be involved in LIGO during my role as NSF Assistant Director of Mathematics and Physical Sciences. I could not be more excited that the field is advancing to a new phase,” said Seidel, who is also Founder Professor of Physics and professor of astronomy at Illinois.

Gabrielle Allen, professor of astronomy at Illinois and NCSA associate director, previously led the development of the Cactus Framework and the Einstein Toolkit. “NCSA was a critical part of inspiring and supporting the development of Cactus for astrophysics. We held our first Cactus workshop at NCSA and the staff’s involvement in our projects was fundamental to being able to demonstrate not just new science but new computing technologies and approaches,” said Allen.

Last October Smarr was awarded an NSF grant as the principal investigator for the Pacific Research Platform. PRP is a five-year award to UC San Diego and UC Berkeley to establish a science-driven, high-capacity, data-centric “freeway system” on a large regional scale. PRP’s data-sharing architecture, with connections of 10-100 gigabits per second, will enable region-wide virtual co-location of data with computing resources and enhanced security options.

PRP links most of the research universities on the West Coast (the 10 campuses of the University of California, San Diego State University, Caltech, USC, Stanford, University of Washington) via the Corporation for Education Network Initiatives in California (CENIC)/Pacific Wave’s 100G infrastructure. PRP also extends to the University of Hawaii System, Montana State University, University of Illinois at Chicago, Northwestern, and University of Amsterdam. Other research institutions in the PRP include Lawrence Berkeley National Laboratory and four national supercomputer centers. In addition, PRP will interconnect with the experimental research testbed Chameleon NSFCloud and the Chicago StarLight/MREN community.

“So yesterday’s LIGO announcement was for the first event detected. The expectation is for many more, and more regularly. We’ll be hooking up Caltech to SDSC at 10/100 Gbps to do data analysis on supercomputers as LIGO goes from just one event to many events a year,” said Smarr.

Smarr noted the data-intensive science that PRP will enable is a “completely separate world from simulation and solving Einstein’s equations” but quickly added, “I feel privileged that I started off helping figure out how to do the simulations and I’m ending up 40 years later helping LIGO do the data science.”

Looking back, Smarr said, “After my Ph.D., I realized that we were going to eventually create a new gravitational wave astronomy, and so in 1978 I organized a two-week international workshop on the potential sources of gravitational radiation and then I edited the book, which you can get on Amazon even today (Sources of Gravitational Radiation: Proceedings of the Battelle Seattle Workshop). The first paper in this collection of papers that we spent two weeks working on is from Ray Weiss, who was the inventor of LIGO and at the podium at the announcement, and the third article was by Kip Thorne [also at the ceremony]. So in ‘78 the global community was being formed and over the next four decades grew to what you see today.”

Further reading

NCSA article: Gravitational waves detected 100 years after Einstein’s prediction http://www.ncsa.illinois.edu/news/story/gravitational_waves_detected_100_years_after_einsteins_prediction

New York Times article and great video: Gravitational Waves Detected, Confirming Einstein’s Theory, http://www.nytimes.com/2016/02/12/science/ligo-gravitational-waves-black-holes-einstein.html?hp&action=click&pgtype=Homepage&clickSource=story-heading&module=second-column-region&region=top-news&WT.nav=top-news&_r=0

Physical Review Letters: Observation of Gravitational Waves from a Binary Black Hole Merger, https://journals.aps.org/prl/abstract/10.1103/PhysRevLett.116.061102

APS Physics: Viewpoint: The First Sounds of Merging Black Holes, http://physics.aps.org/articles/v9/17