In a relatively short time, storage giant Seagate Technology has amped up its push into HPC. Today, four of the top ten supercomputer sites in the world run on Seagate storage, including all of the newcomers to the top ten, says Seagate[i]. It leads the SAGE project, a Horizon 2020 storage technology program in support of Europe’s exascale effort, and it’s the storage provider (with Cray) on the second phase of the Trinity Supercomputer at Los Alamos National Lab, which, when finished, will be the fastest storage system in the world at 1.6 TB/s.

“We have only been in the [HPC] business since about 2012,” said Ken Claffey, vice president of ClusterStor HPC and big data business. “The first [big HPC] system we ever really deployed broke the speed barrier; it was a 1TB/s system that we deployed with Cray at NCSA Blue Waters. Before that the fastest system in the world was the Spider (Lustre) system at Oak Ridge National Labs at just 250GB/s. We started with these high end systems and are now expanding through the [rest of] the top 100 and so forth.”

With $13.7 billion in revenue (2015) and 50,000 employees, Seagate (NASDAQ: STX) is a goliath that can’t be ignored. It manufactures on the order of one million drives per day according to Claffey, and spends approximately $900 million yearly on R&D that spans the “physics of the magnetic recording, material science, all the way up to file system and software development.”

Motivating Seagate’s heavy HPC pivot are current and future markets. Start with the current HPC-related storage sector, roughly a $4 billion pie. “Even when you are a $14-$15 billion dollar business, that’s a significant market,” said Claffey. Yet more important, he emphasized, is the collision of HPC and traditional enterprise technology, which is expanding the market.

Gaining HPC market share won’t happen easily. DataDirect Networks and IBM currently dominate a highly fragmented HPC storage market with a long list of suppliers. All of them are chasing the fastest-growing segment in HPC, and most are also eagerly anticipating a rapid expansion of demand for high-end storage in the enterprise.

Seagate’s plunge into high performance computing was substantially driven by a series of acquisitions over the last 18 months costing more than $1 billion. (It’s nice to have resources.) Seagate acquired Xyratex (March 2014). It scooped up LSI’s broad flash portfolio (September 2014) from ASICs to PCIe cards to SSDs, and a large contingent of engineers (~600). Most recently, the acquisition of Dot Hill (October 2015) brought its portfolio of enterprise class RAID technology into Seagate.

Claffey came with the Xyratex acquisition. “People know Xyratex primarily for two areas,” said Claffey. “One was capital equipment, and initially Seagate was one of our biggest customers. The second area was a business we started focused on developing more engineered systems specifically for HPC.”

“Seagate has basically done these acquisitions all built around the HPC storage portfolio we have today,” said Claffey. Seagate’s vertical integration now spans everything from the storage media itself up to the controllers, storage servers, file/fault systems, and storage cluster management software. “We have key intellectual property at every layer.”

“You can see by the investments we are making and some of the core technology innovations [introduced] that we’re focused on HPC because we believe that the architectures and technologies that we are creating to serve this market are informing us as to where the future of the enterprise is going,” he said.

The last few months have provided a glimpse of Seagate’s plans. At SC15, Seagate debuted a substantially updated ClusterStor line with Lustre and IBM Spectrum Scale (previously GPFS) offerings. It also introduced a new archiving platform and what it calls a ClusterStor HPC drive, specifically designed to boost performance in the ClusterStor line (more product details below). Just last week, Seagate demonstrated what it says is the fastest single flash-based SSD, with throughput of 10 GB/s; it’s scheduled for release this summer and meets Open Compute Project (OCP) specifications.

Having invested in products and expertise, and having made strides integrating both into its portfolio and organization, Seagate is now actively pursuing a higher profile in HPC. One of the clearest examples of the company’s effort to be associated with the tip of the storage technology spear is its leadership of the SAGE project announced last October. This is Seagate’s first contribution to the European Commission (EC) Horizon 2020 Program. [ii]

Under program guidelines, Seagate will provide next generation object storage based technologies through new APIs designed specifically for the exascale era. The idea is to create what Seagate calls “percipient storage” – storage that is purpose-built to meet both Big Data and Extreme Compute (BDEC) requirements. Certainly that’s a familiar clarion call in HPC and the enterprise.

The project will run for three years from September 2015 and has “eight fields of research, including: the study of the 1) application use cases co-designing solutions to address 2) Percipient Storage Methods, 3) Advanced Object Storage, and 4) tools for I/O optimization, supporting 5) next generation storage media and developing a supporting ecosystem of 6) Extreme Data Management, 7) Programming techniques and 8) Extreme Data Analysis tools.”

Malcolm Muggeridge, senior engineering director at Seagate based in the U.K. and another Xyratex veteran, is leading SAGE, which is one of 15 projects recently funded under Horizon 2020. Direct funding is actually through the European Technology Platforms (ETP) organization – “industry-led stakeholder groups recognized by the European Commission as key actors in driving innovation, knowledge transfer and European competitiveness. ETPs develop research and innovation agendas and roadmaps for action at EU and national level to be supported by both private and public funding.”

It probably doesn’t hurt that Muggeridge is vice chair of ETP. “We create the strategic research agenda which is the bible, if you like, that leads the way the programs will be laid out throughout the years of the Horizon 2020 program.” Projects tend to be either technology-driven or application-centric (centers of excellence).

SAGE is focused on relieving storage IO problems and making it possible to compute wherever the data is stored. Looking beyond the burst-buffer approaches used now, the goal is to create a storage IO stack that can seamlessly accommodate next-gen NVRAM technologies, such as resistive random-access memory (RRAM or ReRAM), without being locked in to any particular one. Such an architecture, it’s expected, will ‘drastically’ reduce time-to-solution by moving compute to storage. A key to doing so will be the use of novel extensions to existing objects.

As described by Sai Narasimhamurthy, Seagate research staff engineer responsible for coordinating the technical work, the stack would “have memory at the top, various NVRAM technologies in the middle, of course you have your flash technology as well as part of the stack, and then you have scratch disks and then archival disks.”

“You could have an object, or a piece of it, lying in high speed memory, a piece of it in NVRAM, and a piece of the object lying in scratch based upon the usage profile of the object,” explained Narasimhamurthy. “The view of the object is transparent to the application, it’s just IO to an object, but on the back end you could have various types of layout which could be very interesting because you could optimize your layout for performance or for resiliency. You could do all sorts of things.”
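To make the idea concrete, here is a minimal, hypothetical Python sketch of an object whose pieces sit on different tiers while the application reads a single byte range. The tier names, the `TieredObject` class, and its `write`/`read`/`layout` methods are illustrative assumptions, not SAGE’s API.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

# Hypothetical tier names, fastest to slowest, mirroring the stack described above.
TIERS = ("memory", "nvram", "flash", "scratch", "archive")


@dataclass
class Extent:
    offset: int  # byte offset of this piece within the object
    data: bytes  # the piece's contents
    tier: str    # where this piece currently lives


@dataclass
class TieredObject:
    """A logical object whose pieces may sit on different storage tiers (illustrative only)."""
    extents: List[Extent] = field(default_factory=list)

    def write(self, offset: int, data: bytes, tier: str) -> None:
        if tier not in TIERS:
            raise ValueError(f"unknown tier: {tier}")
        self.extents.append(Extent(offset, data, tier))
        self.extents.sort(key=lambda e: e.offset)

    def read(self, offset: int, length: int) -> bytes:
        """Return bytes [offset, offset + length); the caller never sees tiers.

        Assumes pieces do not overlap; gaps between pieces are simply skipped.
        """
        out = bytearray()
        end = offset + length
        for e in self.extents:
            lo = max(offset, e.offset)
            hi = min(end, e.offset + len(e.data))
            if lo < hi:
                out += e.data[lo - e.offset:hi - e.offset]
        return bytes(out)

    def layout(self) -> List[Tuple[int, int, str]]:
        """Back-end view: (offset, length, tier) for each piece."""
        return [(e.offset, len(e.data), e.tier) for e in self.extents]


# Usage: one object spread across three tiers, read as a single stream.
obj = TieredObject()
obj.write(0, b"hot-", "memory")
obj.write(4, b"warm-", "nvram")
obj.write(9, b"cold", "scratch")
print(obj.read(0, 13))  # b'hot-warm-cold'
```

Keeping placement in per-piece metadata is what lets the back end re-lay out an object for performance or resiliency without changing the reads an application issues.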

Developing hierarchical storage management (HSM) tools is another important goal, said Muggeridge. “Currently in HPC you have some HSM tools which are very naïve and very simplistic and just work between the storage and the archive. In SAGE, we are looking to take advantage of the same concept that you can move object data, or a piece of object data, across the stack. So what are the policies that trigger these movements? There are a lot of complex parameters that guide this data movement across the stack, including all the inputs from the system administrator or equally machine-learning.”
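Muggeridge describes policies driven by many parameters, from administrator input to machine learning. The hypothetical Python sketch below reduces that to a single access-count rule to show the shape of one policy pass; the tier list, the thresholds, and the `plan_moves` function are assumptions for illustration only.

```python
from typing import Dict, List, Tuple

# Hypothetical tier ordering, fastest first.
TIERS = ["memory", "nvram", "flash", "scratch", "archive"]


def plan_moves(
    placement: Dict[str, str],
    access_counts: Dict[str, int],
    promote_at: int = 100,
    demote_below: int = 5,
) -> List[Tuple[str, str, str]]:
    """Return (piece_id, from_tier, to_tier) moves for one policy pass.

    A deliberately simple, assumed rule: pieces accessed at least `promote_at`
    times in the last window move one tier faster; pieces accessed fewer than
    `demote_below` times move one tier slower. Everything else stays put.
    """
    moves = []
    for piece, tier in sorted(placement.items()):
        level = TIERS.index(tier)
        hits = access_counts.get(piece, 0)
        if hits >= promote_at and level > 0:
            moves.append((piece, tier, TIERS[level - 1]))
        elif hits < demote_below and level < len(TIERS) - 1:
            moves.append((piece, tier, TIERS[level + 1]))
    return moves


# Usage: pieces of two objects with their current tiers and recent access counts.
placement = {"obj1:0": "flash", "obj1:1": "scratch", "obj2:0": "memory"}
counts = {"obj1:0": 250, "obj1:1": 1, "obj2:0": 40}
print(plan_moves(placement, counts))
# [('obj1:0', 'flash', 'nvram'), ('obj1:1', 'scratch', 'archive')]
```

A richer policy would replace the two thresholds with a scoring function over many inputs; the pass structure (inspect placement, emit moves) stays the same.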

Just six months old, SAGE is making good progress, said Muggeridge. Work is following two tracks: one on the design of the architecture and another on characterizing performance against different workloads. Eventually a small-scale system will be built and tested at a European supercomputing center. A nine-month review coming up in June will determine whether the project is proceeding on schedule.

It bears repeating that much of the SAGE work is aimed at accommodating big data workloads (e.g. climate and nuclear fusion use cases now being studied); as noted earlier, the fundamental idea is that the architecture will be able to handle most BDEC workflows.

If the SAGE program represents next-gen technology, Claffey said, the recently refreshed ClusterStor product line is the current state of the art. At SC15, Seagate rolled out its newest ClusterStor products, which included a Lustre appliance (L300), an IBM Spectrum Scale appliance (G200), a new archiving product (A200) that works with the ClusterStor product line, and a Multi-Level Security for Lustre Storage (MLS) offering (SL200).

Seagate also introduced its ClusterStor HPC Drive, which it says can be integrated with the ClusterStor L300 for extra-high-performance storage in big data environments. According to Seagate, the drive supports up to four terabytes in a single drive slot and has the highest sequential data rate of any hard disk drive on the market, at 300 megabytes per second.

“It’s a hybrid drive. So not only have we done special things in terms of the rotation of the drive (runs at 7200 rpm) but we have also integrated a cache within the drive both for reads and writes and you see significant improvements, for example, at 4K random IO workload,” according to Claffey.

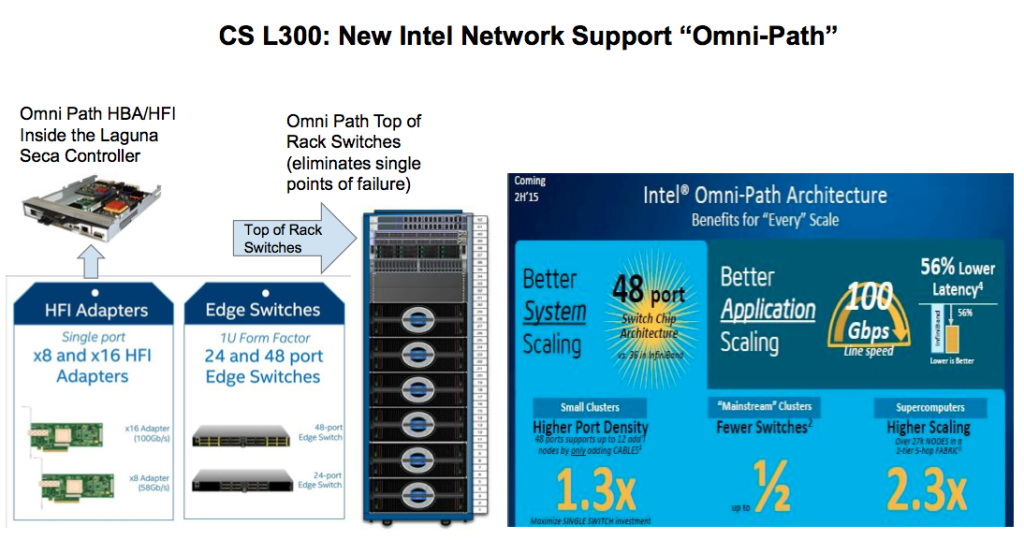

Connectivity improvements were also prominent. The L300 now supports Intel Omni-Path or Mellanox InfiniBand EDR networking (see diagram) and offers performance increments of 12 GB/s to 16 GB/s per SSU (Scalable Storage Unit). Seagate promotes it as “the industry’s fastest converged scale-out platform.”
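One rough way to read the per-SSU figures is to ask how many SSUs a given aggregate target implies. The sketch below assumes throughput adds linearly as SSUs are added, an idealization that real systems only approximate; `ssus_needed` is a hypothetical helper, not a Seagate sizing tool.

```python
import math


def ssus_needed(target_gb_s: float, per_ssu_gb_s: float) -> int:
    """Smallest whole number of SSUs whose combined throughput meets the target.

    Assumes (hypothetically) that aggregate throughput scales linearly with SSU count.
    """
    if per_ssu_gb_s <= 0:
        raise ValueError("per-SSU throughput must be positive")
    return math.ceil(target_gb_s / per_ssu_gb_s)


# A 1 TB/s (1,000 GB/s) target at the two ends of the quoted per-SSU range.
print(ssus_needed(1000, 16))  # 63
print(ssus_needed(1000, 12))  # 84
```

Under that linear-scaling assumption, a 1 TB/s target works out to 63 SSUs at 16 GB/s each or 84 at 12 GB/s each.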

The addition of the G200 offering means customers now have a ready Seagate choice between Lustre and Spectrum Scale solutions. In the last couple of years, there’s been a fair amount of jockeying between the two popular parallel file systems. Like most observers, Claffey said it’s not an either/or question.

“In traditional HPC, Lustre has obviously been very strong. All you have to do is look at the top 10 and top 20 systems. IBM (GPFS) or Spectrum Scale has been more successful in the commercial space. GPFS does a very good job where small files move around and in mixed workloads. If you are typically dealing with larger files, more sequential reads, that’s where Lustre is optimized and does a phenomenal job. If a customer application has a lot of small files and they are random, we are going to steer them towards Spectrum Scale. If the customer has a smaller number of larger files, that’s where Lustre does really well.

“What we are seeing relative to mainstream NAS systems is that the adoption of parallel file systems is growing, and both Lustre and Spectrum Scale are benefiting from that adoption. Scale-out NAS solutions are struggling with the growth in terms of their performance requirements and the capacity scalability requirements,” he said.

Given the size of the HPC storage market, the stakes are high, and Seagate has put a fair number of chips on the table. Differentiating itself and its products will depend on delivering performance and developing approaches to solve the data IO problem currently hobbling storage system performance. Virtually all suppliers offer some workaround, typically a blend of caching and traffic-monitoring techniques to make the storage ‘application aware.’

“All these options are basically caching, right, at what level, where are you doing the caching, and you are trying to get more flash into the system,” said Claffey, who contends there are really just two options: 1) you can throw a lot of flash at it, which is going to be an expensive option; or 2) you can come in with a more hybrid architecture.

“What we are proposing is a hybrid architecture. Our approach is to have multiple layers of caching but we do not want to add additional software layers into the stack. Users and storage systems builders are struggling to manage the movement of data within the software layers that are already there,” he said.

The Seagate approach is to add multiple layers of caching “within the file system itself both from a software perspective, from a hardware perspective and a system perspective without adding in an additional layer. Look at what Cray is doing. Look at what Intel is doing with the Aurora system. We think the majority of the market has already determined that that is probably the best approach rather than adding in more layers to an already complicated stack,” said Claffey.
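The general principle behind multiple caching layers can be illustrated with a generic two-level LRU read cache: a small fast tier in front of a larger, slower one, both in front of disk. This hypothetical Python sketch is not Seagate’s implementation, which Claffey describes as built into the file system rather than added as a separate layer; the class names and capacities are assumptions.

```python
from collections import OrderedDict


class LRUTier:
    """One cache level with least-recently-used eviction."""

    def __init__(self, capacity: int):
        self.capacity = capacity
        self.items = OrderedDict()

    def get(self, key):
        if key not in self.items:
            return None
        self.items.move_to_end(key)  # mark as most recently used
        return self.items[key]

    def put(self, key, value):
        """Insert or refresh a key; return the evicted (key, value) pair, if any."""
        self.items[key] = value
        self.items.move_to_end(key)
        if len(self.items) > self.capacity:
            return self.items.popitem(last=False)  # drop the least recently used
        return None


class TwoLevelCache:
    """Hypothetical read path: small fast tier over a larger slower tier over disk.

    Values must not be None, since None signals a cache miss.
    """

    def __init__(self, fast_cap: int, slow_cap: int, backing: dict):
        self.fast = LRUTier(fast_cap)
        self.slow = LRUTier(slow_cap)
        self.backing = backing  # dict standing in for the disk layer
        self.hits = {"fast": 0, "slow": 0, "disk": 0}

    def read(self, key):
        value = self.fast.get(key)
        if value is not None:
            self.hits["fast"] += 1
            return value
        value = self.slow.get(key)
        if value is not None:
            self.hits["slow"] += 1
        else:
            self.hits["disk"] += 1
            value = self.backing[key]
        # Promote into the fast tier; anything it evicts drops to the slow tier.
        evicted = self.fast.put(key, value)
        if evicted is not None:
            self.slow.put(*evicted)
        return value


# Usage: a 2-entry fast tier over a 4-entry slow tier.
cache = TwoLevelCache(2, 4, {k: k.upper() for k in "abcd"})
for key in ["a", "b", "a", "c", "b"]:
    cache.read(key)
print(cache.hits)  # {'fast': 1, 'slow': 1, 'disk': 3}
```

The final read of `b` is served from the slow tier instead of disk because it was demoted there, not discarded, when the fast tier filled; that is the payoff of a second cache level.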

According to Claffey, Seagate customers have collectively stood up more than an exabyte of total storage – to put that into perspective, the Titan supercomputer at ORNL has roughly 40 petabytes of storage. Claffey also said Seagate’s four largest storage installations are all in the oil and gas sector, although he didn’t identify them.
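Using decimal units (1 EB = 1,000 PB), the comparison works out as follows:

$$\frac{1\ \text{EB}}{40\ \text{PB}} = \frac{1000\ \text{PB}}{40\ \text{PB}} = 25$$

In other words, Seagate customers’ combined capacity is more than 25 times Titan’s storage.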

It will be interesting to see how Seagate’s expansion into HPC affects the supplier landscape. There’s no lack of competitive zeal at Seagate. Of one prominent competitor, Claffey said, “If I show them in their most favorable light, in the most optimum, downhill, wind assisted configuration, I mean that very genuinely, actually it’s pretty comparable to our previous generation the CS9000 product.” Time will tell.

[i] Top500 List, 11/2015

[ii] In addition to Seagate Systems (UK) Limited, the SAGE participants include Allinea Software (UK), BULL SAS (Atos SE) (France), Culham Centre for Fusion Energy (CCFE) (UK), French Alternative Energies and Atomic Energy Commission (CEA) (France), Deutsches Forschungszentrum für Künstliche Intelligenz (DFKI) (Germany), Diamond Light Source (UK), Forschungszentrum Jülich (Germany), Kungliga Tekniska Hoegskolan (KTH) (Sweden) and the Science and Technology Facilities Council (STFC) (UK).
