Is the National Strategic Computing Initiative in trouble? Although the initiative was launched by presidential Executive Order last July, there are still few public details of the draft implementation plan, which was delivered to the NSCI Executive Council back in October. Last week, on the final day of the HPC User Forum held in Tucson, Saul Gonzalez Martirena (OSTP, NSF) gave an NSCI update talk that contained, really, no new information beyond what was presented at SC15.

As he was heading out, and aware that an open discussion on NSCI was scheduled for later in the day, Martirena asked one of the meeting’s organizers (IDC Research VP, Bob Sorensen) to take good notes, adding “if there is any possibility send them to me by tomorrow. We are really looking for good ideas.” It’s too bad he missed the discussion.

With attendees starved for details and perhaps becoming tone-deaf to NSCI aspirations, the late afternoon discussion was wide-ranging and full of concern about NSCI’s reality. The first member of the gathered group to venture an opinion, a very senior member of the HPC community, said simply, “It’s all [BS].” What followed was candid conversation among the forty or so attendees who stuck around for the final session of the forum.

NSCI, of course, is a grand plan to ensure the U.S. maintains a leadership position in high performance computing. Its five objectives, bulleted below, represent frank recognition by the U.S. government, or at least the current administration, that HPC leadership is vital for advancing science, ensuring national defense, and maintaining national economic competitiveness. Now, nearly nine months after its start, there’s still not much known about the plan to operationalize the vision.

The five NSCI objectives, excerpted from the original executive order, are:

- “Accelerating delivery of a capable exascale computing system that integrates hardware and software capability to deliver approximately 100 times the performance of current 10 petaflop systems across a range of applications representing government needs.

- Increasing coherence between the technology base used for modeling and simulation and that used for data analytic computing.

- Establishing, over the next 15 years, a viable path forward for future HPC systems even after the limits of current semiconductor technology are reached (the “post-Moore’s Law era”).

- Increasing the capacity and capability of an enduring national HPC ecosystem by employing a holistic approach that addresses relevant factors such as networking technology, workflow, downward scaling, foundational algorithms and software, accessibility, and workforce development.

- Developing an enduring public-private collaboration to ensure that the benefits of the research and development advances are, to the greatest extent, shared between the United States Government and industrial and academic sectors.”

Sorensen was a good choice for moderator. Before joining IDC he spent 33 years in the federal government as a science and technology analyst covering HPC for DoD, Treasury, and the White House. He was involved during the early formation of NSCI and remains an advocate; that said, he has since written that more must be done to ensure success (see NSCI Update: More Work Needed on Budgetary Details and Industry Outreach).

Views were decidedly mixed in the audience, which was mostly drawn from academia, national labs, and industry. Sorensen kicked things off with a list of discussion points (see on left) but the discussion wandered extensively.

What did seem clear is that, lacking a concrete NSCI implementation plan to react to, members of the audience defaulted to ad hoc concerns and attitudes, sometimes predictably characteristic of the segment of the HPC community to which they belonged, but also often representative of the diversity of opinion within segments. Two of the more contentious issues were the picking of winners and losers by government and the challenges in creating an enduring national HPC ecosystem through public-private efforts.

Uncle Sam Not Good At Picking Winners

Every time an RFP goes out, there’s a winner and a loser, said Sorensen. One attendee recalled the government-funded effort to develop a national aerodynamic simulator in the 1980s as something less than successful. “They funded Control Data Corp. and Burroughs Corporation. Somebody asked Cray how come you’re not going after any of that. Seymour [Cray] said ‘when I build my machine they will decide that’s the machine they really want.’ And he built the Cray 2 and Burroughs dropped out of the business. [In the end] CDC supplied a dead machine and Cray won the business. The point is for years the government has tried to pick winners and losers and hasn’t been successful.”

Sorensen, in his introductory remarks, noted further that there is an inherent “dichotomy” in the program. “The folks who are doing this, DOE, NNSA, want the best HPC systems in the world [because] leadership here means greater potential for greater national security. [While] at the same time we want a vibrant HPC infrastructure that builds the best equipment in the world and sells it to anyone that has money.”

Indeed, mention was made of the recent report that China – denied Intel’s latest chips roughly one year ago by the U.S. Commerce Department – would soon bring on two 100 petaflops machines made with Chinese components and plans to benchmark one in time for the next Top500 list (June). One comment was “The Chinese are giving a gift to this program. Imagine what Trump is going to say. We are going to be portrayed as being way behind the Chinese and get out the check book because we have to catch up.”

It was hardly smooth sailing for the sprawling NSCI blueprint. Still, it would be very inaccurate to say the mood was anti-NSCI; rather, so much uncertainty remains that there was little to focus on. The devil is in the details, said one attendee. Funding, HPC training, software issues (modernization and ISV interest), big box envy, the politically charged environment, clarity of NSCI goals, and program metrics were all part of the discussion mix.

Acknowledged but not discussed at length was the fact that the NSCI might not survive the charged political atmosphere of an election year and might not be supported by the next administration. During Q&A following his earlier presentation, Gonzalez Martirena was cautiously optimistic that bipartisan support around national security and national competitiveness issues was possible.

Broadly, the difficulties of democratizing HPC dominated concerns. Buying and building supercomputers for national and academic purposes is a more traveled road where best practices (and stumbling blocks) are better known.

Here is a brief sampling of a few issues raised:

The “ISV Problem”

In a rare show of consensus, many thought enticing ISVs to embrace HPC would be a major hurdle. Indeed, software presents challenges on several fronts, from modernizing code to run on exascale machines to simply making HPC software more widely available to industry.

The general opinion was that unless ISVs see larger-scale HPC as a lucrative market, they won’t have the incentive to scale their software. Consequently, companies that are completely dependent on commercial applications would find their movement into the HPC world limited by software availability and cost.

Moreover, NSCI’s seemingly intense attention on hardware could become problematic. Throughput, at least for industrial HPC, is far more important than impressive machine specs. Perhaps, suggested one attendee, what’s needed is an X Prize of sorts to incentivize ISVs to go after ‘world’s hardest’, meaningful work.

The Big Box Syndrome

A fair amount of discussion was given to DoE and NSCI’s apparent focus on producing exascale machines. Talking about the early NSCI planning, Sorensen noted, “We talked long and hard about using exascale. It really came down to we don’t need an exascale machine, we need exascale technologies, that could be sitting on someone’s desktop. I remember the day the NSCI came out, the headline in Washington was ‘New Supercomputer’. It’s like no, don’t you understand. We are not talking about the top ten systems anymore; we need to at least deal with 100,000 technical servers out there.”

Certainly academia, national labs, and DoE do care about big machines. One person said these programs always make him wonder if there’s a hidden agenda by “people who just always want to get the faster system and NSCI is sort of being steered in that direction.”

HPC Workforce

The HPC skill shortage is a widely acknowledged problem. Young talent races to the start-up world, not HPC. Several approaches were bandied about, ranging from better use of formal training at the national labs and DoE to creation of new outreach programs. Even so, one attendee also wondered if a small company with limited engineering talent would be able or willing to allow those resources to get needed training.

Getting the word out about existing training resources is an issue, said one attendee, who noted DoE doesn’t have a marketing budget per se to alert companies that training is available at centers. “It’s not like a company that has a marketing budget like Intel and IBM that’s going out and telling people all the time about this. That’s probably a barrier to getting the word out about resources [that] are available.”

What Should Success Look Like?

For all NSCI’s grand goals, the gathering wondered what success should look like, particularly if the idea was to achieve more than incremental success in economics or science or just build an exascale computer.

Merle Giles, director of Private Sector Programs and Economic Impact at the National Center for Supercomputing Applications and co-editor of the text Industrial Applications of High-Performance Computing: Best Global Practices, said, “Look at the game-changing events that affected the economy in this country. They were all an order of magnitude of 10X to 100X changes. It was railroad, [etc]. We don’t need to extract those last ten percent of performance of the machine. We need 10X to 100X and we can be really sloppy and still be really good. The 10X to 100X is not just the technology – it’s not exascale that will change the entire nation. It is greater access [to HPC resources] for those who can take advantage of that access.”

In that vein, another attendee added that NSCI is a projection of what was done in the past. What’s needed instead is to fundamentally think differently, saying, “Probably the biggest advantage comes from miniaturization of systems, not the biggest systems.”

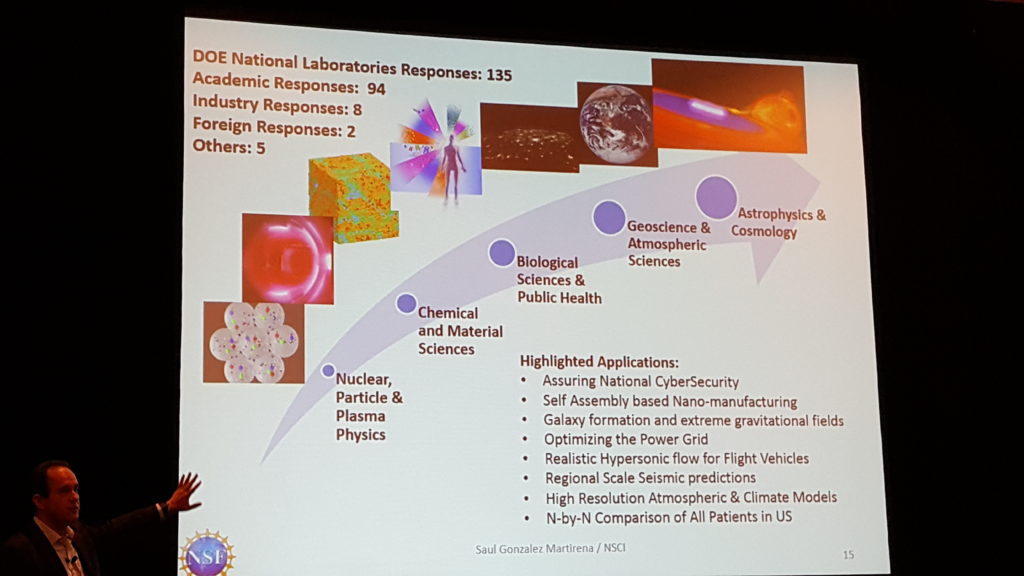

One missing element to the entire program, agreed Gonzalez Martirena after his presentation, is more extensive interaction with industry. He showed a slide of responses to the RFI issued by NSF last fall (shown here) indicating roughly 200 academia/national lab responses with just eight from industry. Perhaps industry should form a group of representatives to work with NSCI, Gonzalez Martirena suggested to HPCwire.

Sorensen indicated IDC would send along the group’s comments to NSCI and Gonzalez Martirena, who recently moved back from OSTP to his position as program director of the division of physics at NSF. It seemed clear from the breadth of the discussion that the lack of a definite NSCI plan has created something of a vacuum for the HPC community and impatience for more detail.

One attendee offered, “How many people are left in the room, 40? I’d be willing to bet there are 40 different visions about what [NSCI] success looks like. We’re having lots of conversation but I think we are down in the weeds.”

NSCI Resources

NSCI Update: More Work Needed on Budgetary Details and Industry Outreach; http://www.hpcwire.com/2016/03/10/nsci-update-more-work-needed-on-budgetary-details-and-industry-outreach/

Speak Up: NSF Seeks Science Drivers for Exascale and the NSCI; http://www.hpcwire.com/off-the-wire/sc15-releases-latest-invited-talk-spotlight-randal-bryant-and-tim-polk/

HPC User Forum Presses NSCI Panelists on Plans; http://www.hpcwire.com/2015/10/06/speak-up-nsf-seeks-science-drivers-for-exascale-and-the-nsci/

Podcast: Industry Leaders on the Promise & Peril of NSCI; http://www.hpcwire.com/2015/08/27/podcast-industry-leaders-on-the-promise-peril-of-nsci/

New National HPC Strategy Is Bold, Important and More Daunting than US Moonshot; http://www.hpcwire.com/2015/08/06/new-national-hpc-strategy-is-bold-important-and-more-daunting-than-us-moonshot/

White House Launches National HPC Strategy; http://www.hpcwire.com/2015/07/30/white-house-launches-national-hpc-strategy/

President Obama’s Executive Order ‘Creating a National Strategic Computing Initiative’; http://www.hpcwire.com/off-the-wire/creating-a-national-strategic-computing-initiative/