As we approach an Exascale future, the focus is on how to provision and use that computational capability. To realize the full societal impact of Exascale computing, the storage systems that support Exascale supercomputers are equally important; otherwise those valuable (and expensive) compute cycles will be wasted during IO operations. Thought leaders in the HPC community agree that increasing core count is clearly the direction for computation (although there are strong differences of opinion on how those increased core counts are to be implemented). However, the storage picture is more complicated.

Unlike computation or in-memory systems, data retained in storage persists for years and even decades. Further, storage systems can never risk losing data or delivering bad data at any point in the data lifecycle. This requires deep technical capabilities and experience in large-scale computing and data management. As we look to the future, past performance truly is a predictor of future success in the storage market, which is why more than two-thirds of the world’s fastest computers rely on DDN (DataDirect Networks) for their storage needs. From pre-Petascale supercomputers to the current generation of double-digit PF/s (petaflops) machines, DDN has preferentially been selected to partner with end users and technology integrators to expand the limits of HPC. Looking to an Exascale world, DDN is investing tens of millions of dollars and opening new research and development facilities to create the end-to-end storage technologies that will meet the data requirements of current users and future Exascale supercomputers. DDN storage simply works and is fast, expandable, power efficient, and cost effective, which is why DDN is the storage vendor of choice for HPC professionals and those tasked with advancing the state of the art in leadership-class supercomputing.

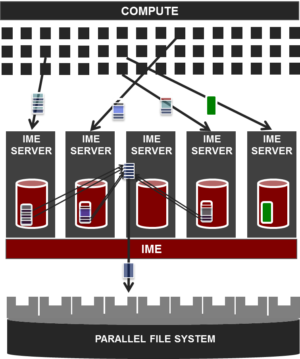

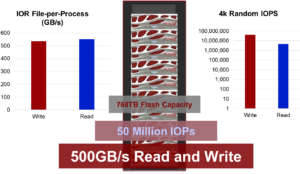

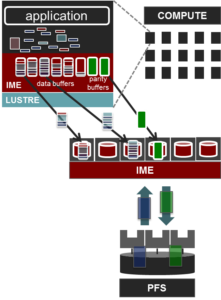

The recently announced Japanese Oakforest-PACS 25 PF/s supercomputer is the latest double-digit Petascale machine that will use a combination of DDN burst buffer, application acceleration, SSD, and file system technologies to achieve results faster than was thought possible even just two years ago. The Oakforest-PACS storage system comprises 25 DDN IME14KX caching appliances that provide 1.4 TB/s of low-latency flash-based cache. These cache devices will work in conjunction with DDN-supplied storage to deliver 400 GB/s of peak Lustre bandwidth to meet the storage bandwidth needs of this latest-generation multi-PF/s supercomputer. As can be seen in the figure below, Lustre is just one option, as tiered DDN storage works with any parallel file system.

Infinite Memory Engine

DDN’s IME (Infinite Memory Engine) represents a new IO tier for HPC that treats small IO in precisely the same manner as large sequential IO. This is a revolutionary change from existing parallel file systems, and it results in near wire-speed performance regardless of random IO patterns, IO size, and shared file access. DDN’s IME product line can also work with future storage media such as 3D XPoint and others.

IME “burst-buffers”

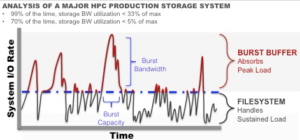

The DDN IME intelligently decouples storage performance from the traditional view of ‘storage’ to greatly accelerate HPC workloads – especially for frequently performed checkpoint/restart operations.

As can be seen in the figure below, burst bandwidth has traditionally required overprovisioning of storage to meet peak bandwidth needs. Checkpoint/restart is an example of a common IO operation that requires storage overprovisioning so that the data can be moved quickly and valuable compute cycles are not wasted. The DDN IME caches can be configured to act as burst buffers that quickly absorb bursts of extremely high IO activity. This is why the Oakforest-PACS supercomputer has been provisioned with 1.4 TB/s of DDN IME bandwidth.
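To make the overprovisioning argument concrete, the following is a rough back-of-the-envelope sketch in Python. It uses only the two Oakforest-PACS bandwidth figures quoted in this article (1.4 TB/s of IME cache and 400 GB/s of peak Lustre bandwidth). The 500 TB checkpoint size and the function name are hypothetical and chosen purely for illustration, decimal units are assumed, and real systems will not sustain ideal peak bandwidth.

```python
# Back-of-the-envelope checkpoint timing.
# Bandwidths are the Oakforest-PACS figures quoted in this article;
# the checkpoint size below is a hypothetical value, not a published one.

IME_CACHE_GBPS = 1400.0   # 1.4 TB/s of IME flash cache (decimal units assumed)
LUSTRE_PEAK_GBPS = 400.0  # 400 GB/s of peak Lustre bandwidth


def write_seconds(checkpoint_gb: float, bandwidth_gbps: float) -> float:
    """Ideal time to write a checkpoint at a given sustained bandwidth."""
    if bandwidth_gbps <= 0:
        raise ValueError("bandwidth must be positive")
    return checkpoint_gb / bandwidth_gbps


checkpoint_gb = 500_000.0  # hypothetical 500 TB checkpoint
to_cache = write_seconds(checkpoint_gb, IME_CACHE_GBPS)
to_lustre = write_seconds(checkpoint_gb, LUSTRE_PEAK_GBPS)
speedup = to_lustre / to_cache

print(f"burst buffer: {to_cache:.0f} s, file system: {to_lustre:.0f} s, "
      f"ratio: {speedup:.1f}x")
```

Under these assumptions the compute nodes are blocked for roughly six minutes instead of about twenty-one, and the cache can then drain to the parallel file system while computation resumes.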

IME positions HPC for the Exascale

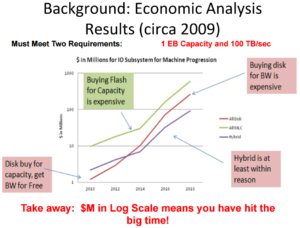

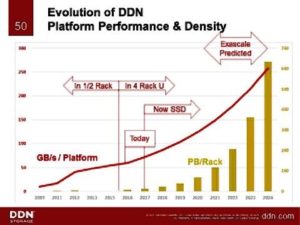

Looking ahead to the Exascale, DDN IME caches can save significant capital and operational dollars by reducing the number of devices required to achieve Exascale-capable levels of storage performance. To put this in perspective, Gary Grider famously pointed out in his 2009 presentation, Preparing Applications for Next Generation IO/Storage, that plotting Exascale storage costs in millions of dollars on a log scale means you have hit the big time!

In contrast, the Oakforest-PACS procurement only required 25 DDN IME14KX caching appliances. As the industry leader, DDN has dramatically redefined the storage landscape and costs associated with Exascale storage systems since 2009 as shown in the graphic below.

For HPC, DDN IME devices make high-performance clusters, multi-PF/s systems, and Exascale computation both possible and affordable.

The many uses of IME

Of course, IME storage works great for databases, out-of-core solvers, and a variety of other scientific and commercial HPC workloads.

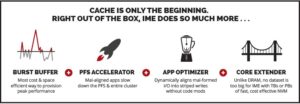

- A Write Accelerating Burst Buffer absorbing the bulk application data into the IME14K NVMe solid state cache significantly faster than the file system can absorb it.

- A File System Accelerator and Application Optimizer, as IME reorders application I/O to optimize flushing the cache to long-term storage (making it possible to purchase as little expensive cache as possible).

- A Read-optimized Application-I/O Accelerator that enables out-of-band API configuration of the IME appliance to optimize both reads and writes, allowing more simultaneous job runs, shortening the job queue, and giving the user significantly faster application run times. The API integrates IME with job schedulers and pre-stages (warms) the cache for new jobs, accelerating the first read.

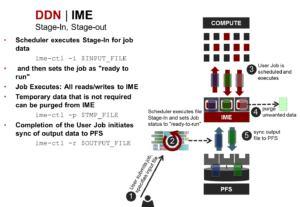

Standard script operations make it straightforward to use the capabilities of the DDN IME appliance. The following shows how to use the DDN IME as an application accelerator.

Robustness and Scalability are key!

Cost and power savings are for naught if the storage solution is not robust and scalable as well.

DDN gives the customer the option of using a technique called erasure coding to protect against storage failures. Erasure codes are primarily used in scale-out object storage systems where erasure encoded data blocks are distributed across multiple storage nodes to provide protection against both media and node failures. Erasure encoding can literally save racks of storage nodes when compared to the alternative, three- or four-way mirroring/replication.
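The capacity argument can be illustrated with a small calculation. The sketch below compares the raw capacity needed to hold the same usable data under a k+m erasure code and under n-way replication. The 1,000 TB usable figure and the function names are hypothetical, and the comparison is about capacity only: an 8+1 code tolerates one lost block per stripe, whereas three-way replication tolerates two lost copies, so the two schemes are not equivalent in fault tolerance.

```python
# Raw capacity required for a given usable capacity.
# The usable capacity used below is hypothetical, for illustration only.


def raw_for_erasure_code(usable_tb: float, data_blocks: int, parity_blocks: int) -> float:
    """Raw capacity for a k+m erasure code: each k data blocks carry m parity blocks."""
    return usable_tb * (data_blocks + parity_blocks) / data_blocks


def raw_for_replication(usable_tb: float, copies: int) -> float:
    """Raw capacity for n-way mirroring/replication: every block is stored n times."""
    return usable_tb * copies


usable_tb = 1000.0  # hypothetical usable capacity
ec_8_1 = raw_for_erasure_code(usable_tb, 8, 1)
ec_3_1 = raw_for_erasure_code(usable_tb, 3, 1)
mirror_3 = raw_for_replication(usable_tb, 3)
mirror_4 = raw_for_replication(usable_tb, 4)

print(f"8+1 erasure code: {ec_8_1:.0f} TB raw")
print(f"3+1 erasure code: {ec_3_1:.0f} TB raw")
print(f"3-way replication: {mirror_3:.0f} TB raw")
print(f"4-way replication: {mirror_4:.0f} TB raw")
```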

Option 1: Data protection is optional. The IME server and associated storage media are considered “just cache” where the data can be recreated if lost.

Option 2: Erasure coding is calculated at the client:

- Exhibits excellent scaling and can run with high client counts.

- Servers don’t get clogged up.

- There is a tradeoff: erasure coding reduces usable client bandwidth and IME capacity by an amount that depends on the IME count, from roughly 11% (in an 8+1 configuration) to 25% (in a 3+1 configuration).
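The 11% and 25% figures follow directly from the parity fraction m / (k + m) of a k+m layout. The article does not say which erasure code IME uses, so the sketch below assumes the simplest possible k+1 scheme, a single XOR parity block computed on the client, purely to illustrate the idea. All function names are hypothetical.

```python
# Illustrative k+1 erasure coding with a single XOR parity block.
# This is an assumed scheme for illustration, not DDN's actual implementation.
from functools import reduce


def xor_blocks(blocks):
    """Byte-wise XOR of a list of equal-length byte blocks."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))


def encode(data_blocks):
    """Client-side encode: return the k data blocks plus one parity block."""
    return list(data_blocks) + [xor_blocks(data_blocks)]


def recover(stripe, lost_index):
    """Rebuild the single missing block by XOR-ing all surviving blocks."""
    survivors = [block for i, block in enumerate(stripe) if i != lost_index]
    return xor_blocks(survivors)


def parity_fraction(data_blocks, parity_blocks=1):
    """Share of bandwidth and capacity consumed by parity in a k+m layout."""
    return parity_blocks / (data_blocks + parity_blocks)


data = [bytes([i] * 4) for i in range(1, 4)]  # three 4-byte blocks (3+1 layout)
stripe = encode(data)
rebuilt = recover(stripe, lost_index=1)       # pretend block 1 was lost

print(rebuilt == data[1])
print(f"8+1 overhead: {parity_fraction(8):.1%}")
print(f"3+1 overhead: {parity_fraction(3):.1%}")
```

Because the parity is computed before the data leaves the client, the servers only store blocks, which is consistent with the scaling behavior described in the list above.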

Managing the full spectrum of end-to-end data lifecycle management

Robust, scalable, and performant storage is but part of the HPC storage picture, as data archiving must also be considered along with full lifecycle data management and distributed cloud-based storage. Similarly, questions are being raised about the efficacy of POSIX-based file systems in future HPC systems. For this reason, object storage systems are undergoing rapid development.

To address current and future end-user storage needs, even at the Exascale, DDN has created a complete portfolio of end-to-end storage products that work together as an extremely flexible data lifecycle management toolset. DDN claims that these tools can be applied anywhere and at any scale.

Briefly, the DDN storage portfolio covers:

- Fast data and compute: Addressed through the DDN family of IME products.

- File-system appliances: DDN products include the GRIDScaler® and EXAScaler®.

- Persistent data: Persistent data for a variety of commercial and big data workloads are addressed via the SFA14k™ storage array products.

- Object and cloud storage: WOS® object storage for private and hybrid clouds takes DDN customers beyond traditional file systems. WOS is described in the DDN white paper, WOS® 360° full spectrum object storage.

For more information

For more information, visit http://www.ddn.com.

Rob Farber is a global technology consultant and author with an extensive background in HPC and storage technologies that he applies at national labs and commercial organizations. He can be reached at [email protected].