SC15 was sort of a muted launch party for OpenHPC – the nascent effort to develop a ‘plug-and-play’ software framework for HPC. There seemed to be widespread agreement that the idea had merit, little knowledge of the details, and some wariness because Intel was a founding member and vocal advocate. Next week, ISC16 will mark the next milestone for OpenHPC, which has since grown into a full-fledged Linux Foundation Collaborative Project and today released version 1.0.1 of OpenHPC (build and test tools).

The initial software stack includes more than 60 packages spanning tools and libraries, provisioning, and a job scheduler. The complete list is available on the project GitHub page. OpenHPC’s 25 founding members span academia, government labs, and hardware organizations. A Technical Steering Committee and a Governing Board have been established to help drive technical direction and code contributions, establish IP policies, and handle other operational needs of the open source project.

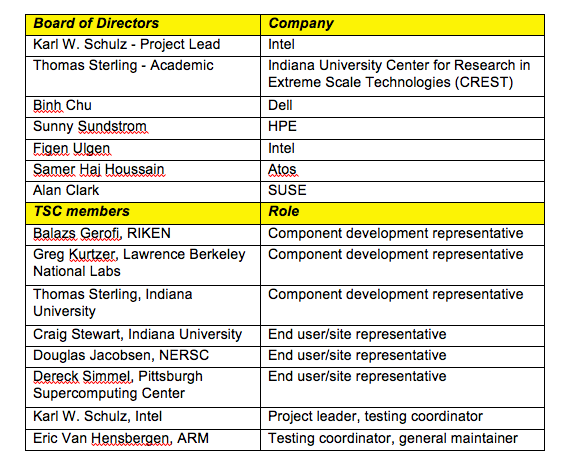

The chart above, provided by OpenHPC, “best represents OpenHPC leadership at its highest level. However, there are additional leadership roles including TSC Functional Area Maintainers, who are dedicated to specific areas of the tech (like energy efficiency and distro packages, for ex). The project also has Board of Director representatives – one seat per Silver member is granted. I can provide that information if helpful as well.” There are clearly some influential HPC folks on board, including Thomas Sterling.

A central premise of OpenHPC is that standardizing, or at least validating, HPC stack elements will greatly ease wider adoption of HPC among less computationally savvy organizations, and certainly in the enterprise. Its stated mission is set out below. In the earlier days of HPC, of course, the HPC user community (government, national labs, very big companies) tended to work hand-in-glove with technology suppliers to stand up HPC systems. As outlined in the National Strategic Computing Initiative, it’s now a specific goal to evangelize and democratize HPC.

Here’s the statement from today’s announcement:

“While HPC is often thought of as a hardware-dominant industry, the software requirements needed to accommodate supercomputing deployments and large-scale modeling requirements is increasingly more demanding. An open source framework like OpenHPC promises to close technology gaps that hardware enhancements alone can’t address. Because open source software has proven its ability to reliably test and maintain operating conditions, it is quickly becoming the de facto software choice for the world’s most complex environments – meteorology, astronomy, engineering and nuclear physics, and big data science, among others.”

Founding member organizations include: Altair, Argonne National Laboratory, ARM, Atos, Avtech Scientific, Barcelona Supercomputing Center, CEA, Center for Research in Extreme Scale Technologies (Indiana University), Cineca Consorzio Interuniversitario, Cray, Inc., Dell, Fujitsu, Hewlett Packard Enterprise, Intel, Lawrence Berkeley National Laboratory (LBNL), Lawrence Livermore National Laboratory (LLNL), Leibniz Supercomputing Centre (LRZ), Lenovo, Los Alamos National Security (LANS), ParTec Cluster Computing Center, the Pittsburgh Supercomputing Center, RIKEN, Sandia National Laboratories (SNL), SGI, SUSE, and Univa.

The roster continues to grow. It’s worthwhile to look at the mission and goals OpenHPC has set for itself. The statement and bullets below are taken from the OpenHPC web site.

Mission: OpenHPC is a Linux Foundation Collaborative Project whose mission is to provide an integrated collection of HPC-centric components that can be used to provide full-featured reference HPC software stacks. Provided components should range across the entire HPC software ecosystem including provisioning and system administration tools, resource management, I/O services, development tools, numerical libraries, and performance analysis tools.

To support this mission, the following sections highlight elements of the community vision and key values.

Vision

- to provide a collection of pre-packaged binary components that, when combined with a supported base operating system (BoS), can be used to install and manage HPC systems throughout its lifecycle to provide a stable, feature-rich development and runtime environment

- to provide HPC-centric packages that are either absent or have unacceptable lag time from leading Linux distro providers

- to support new hardware offerings from vendors in a timely fashion

- to provide distribution/installation mechanisms for leading research groups releasing open-source software

- to allow both open-source and proprietary software vendors to focus efforts on innovation

- to allow and promote multiple system configuration recipes that leverage community reference designs

- to foster development of defined interfaces between supported components that allows for simple component replacement and customization

OpenHPC also defines what it calls key values: component interoperability; system stability; performance scalability; community collaboration; traceability; validated and reproducible recipes; a knowledge base for configuration recipes; and a focus on user experience and convenience (for system administrators and end-users).

It will be interesting to see how OpenHPC plays out. Some have suggested it’s a countermove to the IBM-backed OpenPOWER Foundation, although at SC15 IBM told HPCwire it found the idea interesting and wouldn’t rule out participating until more was known about it.

With momentum building and organizational infrastructure solidifying, Michael Dolan, VP, strategic programs, The Linux Foundation, told HPCwire, “Some near term goals for the technical steering committee include establishing guidelines, procedures, and a roadmap to guide future OpenHPC releases. Example efforts in this process include obtaining alignment on packaging conventions and upgrade mechanisms, implementing a transparent component selection process to evolve the software components being made available based on community requests, identifying target hardware support, and expanding available CI test infrastructure.”

Dolan commented briefly on the fears of Intel dominance, noting that Linux Foundation Collaborative Projects are specifically intended to serve as neutral forums. “This model encourages broad participation and transparency to foster a community-based initiative and OpenHPC’s governance structures were purposefully designed to include leadership participation from academia, government labs, and industry,” he said.

OpenHPC will have a presence at ISC in Germany next week. The project will have a booth (#1224) and a few speaking tracks.