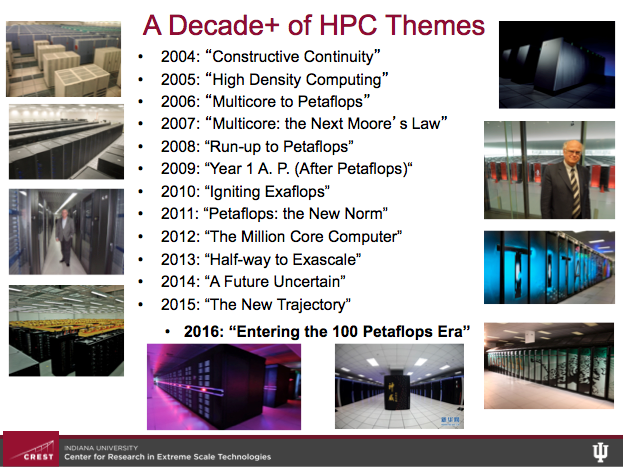

Capturing the sparkle, wit, and selective skewering of Thomas Sterling’s annual closing ISC keynote is challenging. This year’s was his 13th, which perhaps speaks to the engaging manner and substantive content he delivers. Like many in the room, Sterling is an HPC pioneer; he is also director of CREST, the Center for Research in Extreme Scale Technologies, at Indiana University. In his ISC talk, Sterling holds up a mirror to the HPC world, shares what he sees, and invites everyone to look in and see what they may.

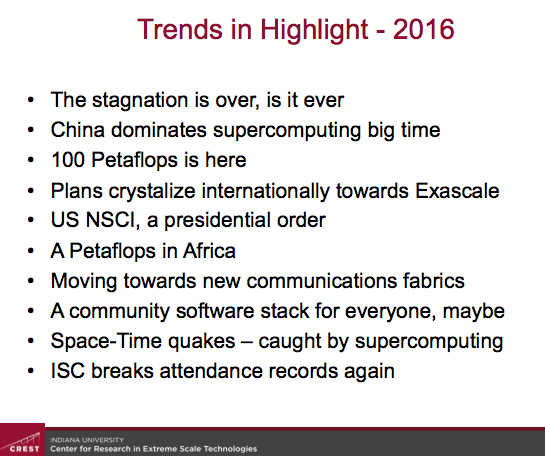

It’s perhaps no surprise that China was high on Sterling’s list this year. He also paid homage to Marvin Minsky, offered encouragement and gentle chiding to the National Strategic Computing Initiative (NSCI), and gave shout-outs to Intel, Data Vortex, and South Africa’s CHPC. He also suggested the supercomputing world may resemble an iceberg: the public systems we see and worry about are just the tip, while hidden below are huge commercial and government systems whose capabilities may dwarf those on the Top500.

You get the picture. His view is expansive. Presented here is a very brief (apologies to Prof. Sterling) random walk through Sterling’s talk and a few slides from his ISC deck. Not everything is covered, but there’s plenty to chew on. (If you bump into Sterling, you might ask about his interesting theory of why dinosaurs never made it onto the Ark, which didn’t make it into this article.)

The Elephant in the Room

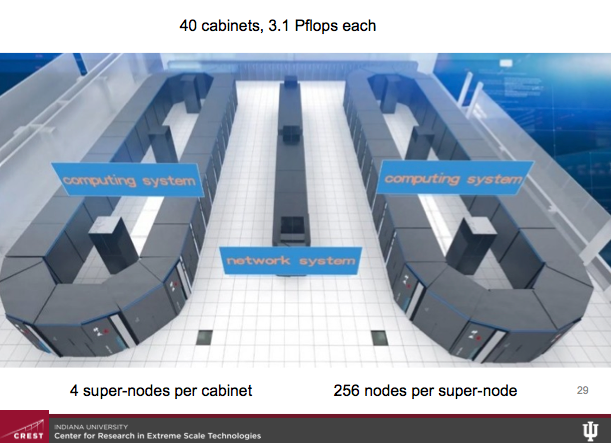

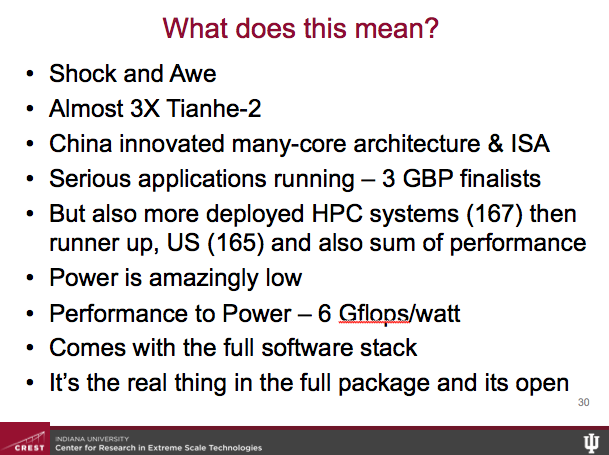

There’s no way around it, said Sterling: the Chinese now dominate supercomputing big time (see HPCwire article, China Debuts 93-Petaflops ‘Sunway’ with Homegrown Processors). The Sunway TaihuLight system achieved 93 petaflops out of a theoretical peak of 125 petaflops, giving it an efficiency of 74.15 percent. The new king of the Top500 solidifies China’s position.
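Top500 efficiency is simply the ratio of measured LINPACK performance ($R_{\max}$) to theoretical peak ($R_{\text{peak}}$). Using the rounded figures quoted above, the arithmetic works out roughly as follows; the published percentage is computed from the unrounded Top500 values, so it differs slightly.

$$
\text{efficiency} = \frac{R_{\max}}{R_{\text{peak}}} \approx \frac{93\ \text{PF}}{125\ \text{PF}} \approx 0.744
$$

In other words, about three quarters of the machine’s theoretical floating-point capability shows up in the LINPACK run.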

Instead of hand-wringing, Sterling said, “There’s a wow factor here, and let’s sit back and really enjoy this for a moment. For me, even more impressive is the fact that this is a Chinese homegrown system. They designed the architecture, the structure and instruction set; they designed and deployed the electronics; and they did the systems integration. There are some parts contributed from outside, but I’ll tell you, all of our big machines back in the States also have some memory that wasn’t homegrown.” Sunway’s power budget of only 15 MW is extraordinary, he said.
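To put the 15 MW figure in perspective, dividing the measured LINPACK performance (93 petaflops) by the power budget gives energy efficiency in flops per watt. This is a back-of-the-envelope estimate using the rounded numbers in this article, not an official Green500 measurement:

$$
\frac{93 \times 10^{15}\ \text{flop/s}}{15 \times 10^{6}\ \text{W}} \approx 6.2 \times 10^{9}\ \text{flop/s per W} = 6.2\ \text{GFLOPS/W}
$$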

There’s been a fair amount of comment about Sunway’s perceived shortcomings. Duly noted, agreed Sterling. “In terms of memory capacity, it’s pretty lightweight. With 125 PF peak performance, it had about 1/100th that amount in memory capacity, at 1.3 PB. Its bandwidth, in terms of the rate at which information is delivered from memory versus the peak rate of computing, also isn’t impressive. Its HPCG benchmark, which is proving more realistic in exercising more of the machine, is below one percent.”
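Sterling’s “about 1/100th” is a capacity-to-performance ratio: bytes of memory per flop/s of peak. With the figures he quoted, and treating a petabyte as $10^{15}$ bytes (decimal units are an assumption here; binary units would shift the result slightly), the arithmetic is:

$$
\frac{1.3\ \text{PB}}{125\ \text{PF}} = \frac{1.3 \times 10^{15}\ \text{bytes}}{125 \times 10^{15}\ \text{flop/s}} \approx 0.0104\ \text{bytes per flop/s} \approx \frac{1}{100}
$$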

That said, the world is changing. By Sterling’s count we are now in our sixth computing epoch. Technology is forcing us into a new class of optimization, one that “we are reluctant, in some sense intransigent” about pursuing, he said.

“As we have moved into the era of [multicore/manycore] with a strong emphasis on heterogeneous computing, we’ve all known that we’ve moved away from the more conventional and, frankly, more comfortable model,” he said. Floating-point efficiency, a key driver of architecture, “is not the critical objective function it was. Today it is among the easiest things to manufacture and to deploy, and so we should be making a lot of them everywhere.”

Instead, said Sterling, it’s the instruction issue rate that counts, and that should prompt changes in thinking. “We routinely waste die area by building enormous memory hierarchies and then adding speculative execution in many different forms, including pre-fetching, speculative fetching, branch prediction, and any number of other mechanisms in the architecture, to do what? To keep the ALU busy. [We] need to clean up all that mess. That’s what the Chinese machine is attempting to do. Perhaps we should be valuing FPU availability, not utilization. This machine does that,” he said.

Some critics have said that’s all well and good, but the new machine can’t do anything useful. Sterling noted that Sunway runs three Gordon Bell Prize finalist applications, clearly excellent ones, and that there are tasks it handles quite well. He also noted that China now has the largest number of deployed systems on the Top500: 167, surpassing the US’s 165.

“While the numbers don’t matter, this indicates a keen commitment, a commitment not just to having the high-stature machine but rather to having a large number of medium-scale systems doing a lot of the heavy lifting of high performance computing,” Sterling noted. The supercomputer game is hardly over, but the current round has gone to China.

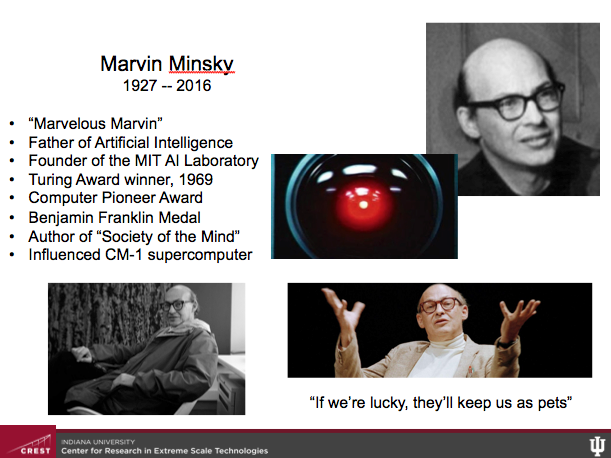

Marvelous Marvin Minsky

“This year we lost Marvin Minsky,” said Sterling. “His name is synonymous with the term artificial intelligence in the same way Seymour Cray is synonymous with the word supercomputer. In the early years, the 50s and 60s, Marvin laid [computer science] foundations and won essentially every award one can win, including the Alan Turing Award in 1969.”

Minsky, co-founder of the MIT Artificial Intelligence Laboratory, passed away in January. Part of what makes his passing notable, said Sterling, is the palpable change in the industry around deep learning, machine learning, and cognitive computing. AI may become one of the biggest applications for supercomputing, said Sterling. But rather than dense floating point, or even large data processing to do pattern recognition and clustering, it will require “symbolic computing, the creation of machines that think, more importantly, the creation of machines that understand.”

To Sterling that still seems far away. “Let’s acknowledge the fact that today machines don’t learn anything. We learn. If they learned, they wouldn’t just have data converted to information in pretty pictures. They would have data converted to knowledge and be able to manipulate that.”

Maybe that’s good. Minsky believed intelligent machines would take over. He once joked that if humans were lucky, the machines would keep us as pets, said Sterling. In a slightly more serious moment, Minsky offered, “yes, intelligent machines would take over, but they will be our children,” said Sterling.

However the AI adventure plays out, Minsky was a seminal force in developing the basic ideas around the path to AI and the computing approaches necessary to achieve it.

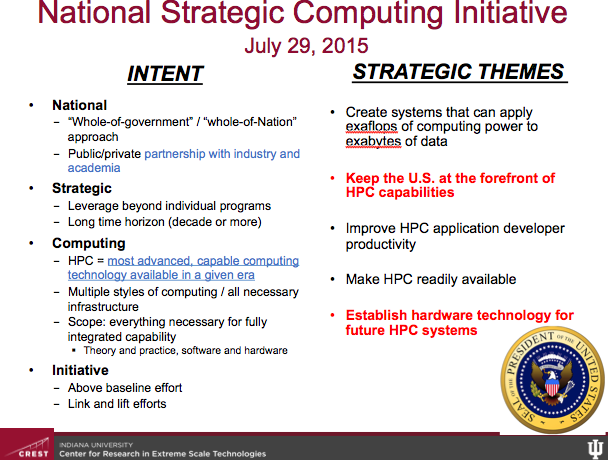

Quo Vadis NSCI

By now most of the HPC community is familiar with NSCI, the ambitious “whole of government” program to promote U.S. leadership in HPC (see HPCwire article, White House Launches National HPC Strategy). The program was launched by Presidential Executive Order late last July, but there has been some confusion in the community over how slowly it has taken shape. A detailed implementation plan was due before the end of last year; there is a draft, but few have seen it.

“One of the objectives, and it is highlighted in red [see slide], is to keep the US at the forefront of HPC capabilities. That’s what it says. Of course there’s an underlying assumption there, which I’ll leave to you…” said Sterling. “I struggled to get a phrase that would let me take a positive approach to this, and still [do], to be honest. The US was (and is) in launch mode toward exascale, and shortly after ISC 15, NSCI was declared.”

The Exascale Computing Project (ECP), which predates NSCI but was subsumed under it, is still progressing largely as planned. “DOE is very sensitive about the term project, [which is] not synonymous with program [and] not synonymous with initiative; project means a very specific, rigorous, carefully regulated, highly professional activity to lead to the end result,” he said, noting that Paul Messina of Argonne National Laboratory, the project’s leader, had run a “very lucid” session on the ECP earlier at ISC.

Shout-Outs to Intel, Data Vortex, and CHPC

A big part of ISC this week surrounded Intel’s official release of Xeon Phi/KNL to general availability and a building chorus of OEMs announcing plans to rapidly introduce products incorporating the chip and Omni-Path Architecture (OPA). Sterling had good things to say about both KNL and OPA. He also expressed optimism about new technology from Data Vortex and praise for South Africa’s supercomputing progress:

- Data Vortex. “The Data Vortex computer is a very different animal. It is created around a new and very different class of communication. [It’s] focused on lightweight, fine-grained messages, in fact very fine-grained: each payload is only 64 bits, with an available address space of 64 bits, so that’s quite on the edge. Its high-rate, high-bandwidth network [has] both low latency and [is] contention free,” said Sterling, who suggested Data Vortex may be most useful as a domain-specific or domain-narrow machine, as there is a wide range of applications particularly well suited to its architecture.

- Lengau (Cheetah). Last month the Centre for High Performance Computing (CHPC) at the Council for Scientific and Industrial Research (CSIR) unveiled the fastest computer in Africa, the Lengau system. Sterling applauded the accomplishment: “This is no small accomplishment, 15X the last CHPC system. Its Rmax is 785 TF,” he said. It was a timely piece of praise, as Team South Africa had taken the stage a short time before to collect its third HPCAC-ISC Student Cluster Competition championship.
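To make Sterling’s “very fine-grained” concrete: a message whose payload is one 64-bit word, addressed within a 64-bit address space, fits in 16 bytes. The sketch below packs and unpacks such a message with Python’s standard `struct` module. The field layout (address first, then payload, little-endian) and the names `FineGrainedMessage`, `pack_message`, and `unpack_message` are hypothetical illustrations, not Data Vortex’s actual wire format or API.

```python
import struct
from typing import NamedTuple

# Hypothetical layout: unsigned 64-bit address, then unsigned 64-bit payload,
# little-endian with no padding. This is NOT the real Data Vortex packet format.
_MSG_FORMAT = "<QQ"
MSG_SIZE = struct.calcsize(_MSG_FORMAT)  # 16 bytes


class FineGrainedMessage(NamedTuple):
    address: int  # target location in a 64-bit global address space
    payload: int  # a single 64-bit word of data


def pack_message(msg: FineGrainedMessage) -> bytes:
    """Serialize one message; struct.error is raised if a field exceeds 64 bits."""
    return struct.pack(_MSG_FORMAT, msg.address, msg.payload)


def unpack_message(raw: bytes) -> FineGrainedMessage:
    """Deserialize exactly MSG_SIZE bytes back into a message."""
    address, payload = struct.unpack(_MSG_FORMAT, raw)
    return FineGrainedMessage(address, payload)


if __name__ == "__main__":
    msg = FineGrainedMessage(address=0x0000_7F00_DEAD_BEEF, payload=42)
    raw = pack_message(msg)
    print(len(raw))                    # 16
    print(unpack_message(raw) == msg)  # True
```

In this layout half of every message is addressing overhead, which is one way to see why a network built for such traffic has to be optimized for message rate and contention, not just bulk bandwidth.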

How Big and How Fast

Towards the end of his presentation, Sterling said, “I’d like to leave us with a question, and I’d like to talk about supercomputing in the shadows. We are the HPC community, but do we in fact represent [all of] HPC? I am a big supporter of HPL (the LINPACK benchmark).” But many systems are unranked or poorly structured for the LINPACK benchmark. “There’s a whole world of high performance computing hidden in the cloud (of course I am being metaphorical here): Amazon, Google, Microsoft. Some of you may know how big those systems are. I don’t. But they are enormous, and we don’t see them through our methods of evaluation.

“Sometimes they are hidden by intent, [such as] the intelligence community, and I’ll just say this: I have been in the basement,” he said, drawing a laugh, and added that there are financial and banking systems that are “enormous, quite possibly dwarfing what we view. Finally, there are special-purpose devices being used, such as the Anton machine for n-body problems in molecular dynamics. The SKA (Square Kilometre Array project) is building a very complex configuration of multiple computer systems to process all the data. How do we run a LINPACK on a quantum computer?

“So I really leave you with this question. Do we really know how fast computing is, and if not, how should we as a community broaden our reach, our perspective to quantify and evaluate the trends in technology, the trends in architecture, the trends in applications that span the entire set of what high performance computing is and will be?”
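For readers outside the benchmarking world, the measurement Sterling is questioning boils down to this: solve a dense linear system Ax = b, check that the answer is accurate, and divide the operation count by the elapsed time. The toy below does that in pure Python, using Gaussian elimination with partial pivoting and the classic LINPACK operation count of 2n³/3 + 2n². It is only an illustration of the idea, not HPL itself (a distributed, blocked implementation tuned for large clusters), and the function names are hypothetical. Machines built around other kinds of work have no natural way to express this kernel, which is part of why they are hard to rank this way.

```python
import random
import time


def lu_solve(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting.

    Works on copies so the caller's matrix and vector are left untouched.
    """
    n = len(a)
    a = [row[:] for row in a]
    b = b[:]
    for k in range(n):
        # Partial pivoting: move the row with the largest |a[i][k]| into row k.
        p = max(range(k, n), key=lambda i: abs(a[i][k]))
        if a[p][k] == 0.0:
            raise ValueError("matrix is singular")
        a[k], a[p] = a[p], a[k]
        b[k], b[p] = b[p], b[k]
        # Eliminate column k below the pivot.
        for i in range(k + 1, n):
            m = a[i][k] / a[k][k]
            for j in range(k, n):
                a[i][j] -= m * a[k][j]
            b[i] -= m * b[k]
    # Back substitution on the resulting upper-triangular system.
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        s = sum(a[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (b[i] - s) / a[i][i]
    return x


def linpack_style_run(n, seed=0):
    """Time one dense solve of size n and return (flop/s, max residual)."""
    rng = random.Random(seed)
    a = [[rng.uniform(-0.5, 0.5) for _ in range(n)] for _ in range(n)]
    b = [rng.uniform(-0.5, 0.5) for _ in range(n)]
    start = time.perf_counter()
    x = lu_solve(a, b)
    # Guard against a zero reading from a coarse timer on tiny problems.
    elapsed = max(time.perf_counter() - start, 1e-9)
    # Classic LINPACK operation count for factorization plus solve.
    flops = (2.0 / 3.0) * n**3 + 2.0 * n**2
    # A run only "counts" if the answer is accurate, so check the residual.
    residual = max(
        abs(sum(a[i][j] * x[j] for j in range(n)) - b[i]) for i in range(n)
    )
    return flops / elapsed, residual


if __name__ == "__main__":
    rate, residual = linpack_style_run(150)
    print(f"{rate / 1e6:.1f} Mflop/s, max residual {residual:.1e}")
```

The reported rate will be tiny compared with any real system, since interpreted Python is nowhere near peak hardware speed; the point is the shape of the measurement, not the number.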