Getting to the root of how things work has informed and advanced every area of scientific discovery. Computers and applications keep growing in complexity and seem poised to enter a new phase beyond the limits of Moore’s Law and CMOS technology. Understanding how they work best is therefore paramount.

The Center for Advanced Technology Evaluation (CENATE) is a computing proving ground at Pacific Northwest National Laboratory, supported by the U.S. Department of Energy’s Office of Advanced Scientific Computing Research. With new resources from a growing list of industry partners, CENATE is rapidly expanding its capabilities to assist the high-performance computing community. It provides diverse methods for steering system designs toward optimality and enhancing overall performance. (See HPCwire article, PNNL Launches Center for Advanced Technology Evaluation)

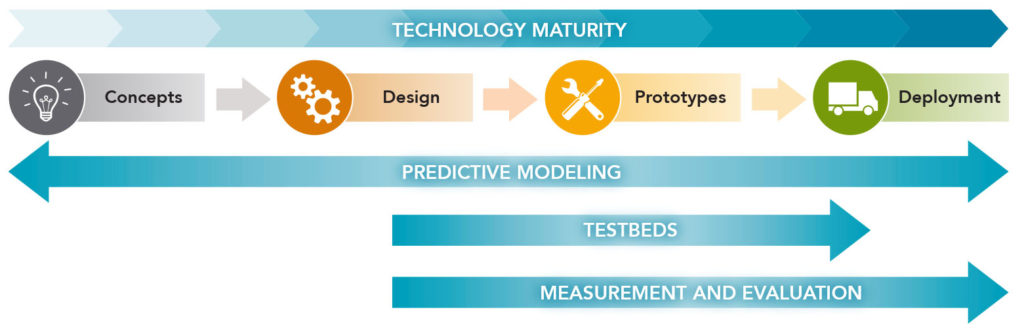

With its integrated approach to combining advanced concepts and technologies, CENATE’s measure–model–design pipeline remains unique among providers of early analysis capabilities. Adolfy Hoisie, CENATE’s director and PNNL’s chief scientist for computing, described how the CENATE technology maturity pipeline can be used to analyze advanced architectures expected to emerge from combining diverse technologies. One example is architectures designed to minimize data movement, a central concern in optimizing both performance and power.

The CENATE Technology Maturity Pipeline

“Novel networking concepts, which we currently are examining with Data Vortex, are designed for extremely high bandwidths obtained through innovative topologies, while novel memory concepts, such as stacked memory, enable memory operations at extreme bandwidths and complex patterns,” he explained. “So, memories and networks can impact performance and power. Within CENATE, we link advanced concepts to assess the impact of such hypothetical architectures on DOE applications and systems. Rather than look at these as separate technologies, we can enable the next step: we link the two through modeling and simulation, experimentation, and measurement.”

The Data Vortex work is a good example of a promising new technology that is drawing attention.

Speaking at ISC 2016, Thomas Sterling, chief scientist and associate director at the Center for Research on Extreme Scale Technologies (CREST), Indiana University, noted, “The Data Vortex computer is a very different animal. It is created around a new and very different class of communication. [It’s] focused on lightweight, fine-grained messages, in fact very fine-grained: each payload is only 64 bits, with an address space available of 64 bits, so that’s quite on the edge. Its high-rate, high-bandwidth network [has] both low latency and it’s contention-free.”

Sterling suggested the Data Vortex approach may be most useful in a domain-specific or domain-narrow machine, because a wide range of applications are particularly well suited to its architecture. Exploring new technologies such as this is a central mission for CENATE.

In addition to providing a closer look at the interface between technologies and how these interactions influence system designs, CENATE’s approach uses measurement of advanced technologies combined with modeling and simulation to explore their possible impact on future large-scale HPC deployments.

Well-Equipped Research Engine

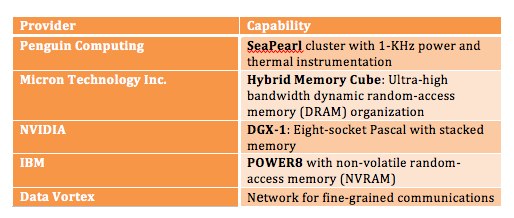

CENATE’s slate of analysis equipment and testbeds has recently expanded to include advanced technologies from industry partners, including IBM, Mellanox, NVIDIA, Micron, Penguin Computing, and the previously noted Data Vortex Technologies.

These technologies include:

CENATE is also exploring a new flexible cluster that will emulate large networks within a limited footprint. The cluster will also incorporate optical circuit switching (OCS) to support software-defined networking (SDN) and dynamic bandwidth allocation.

CENATE Seeks to Spread the Word

Access, outreach, and HPC community involvement are critical parts of CENATE’s research activities. The CENATE team is building an access portal for the center’s instrumentation and testbeds that gives priority to other DOE-sponsored researchers. The team is also evaluating methods for disseminating research to the community, with accommodations for analysis activities conducted under non-disclosure agreements. A soon-to-be-established Advisory Board will help shape best practices for these mechanisms.

CENATE also benefits from interaction with large-scale, production-level computing facilities in the Molecular Science Computing capability within the Environmental Molecular Sciences Laboratory (EMSL). EMSL is a DOE Office of Biological and Environmental Research-sponsored user facility available to the global research community. EMSL currently houses CENATE’s machine room. Efforts are ongoing to further couple CENATE research applications with PNNL-based resources and other computing systems throughout the DOE complex.

For more information or to access CENATE, contact Darren Kerbyson, CENATE’s lead scientist.