Moving large datasets around HPC networks such as those of XSEDE is often challenging. While Internet2, the most commonly used backbone, is fast at 100 Gbps, local traffic to campuses often slows to 10 Gbps. At this week’s XSEDE meeting, the leaders of the DANCES (Developing Applications with Networking Capabilities via End-to-End SDN) project reported the first successful tests of the required hardware components, along with the results of a vendor bakeoff for switches.

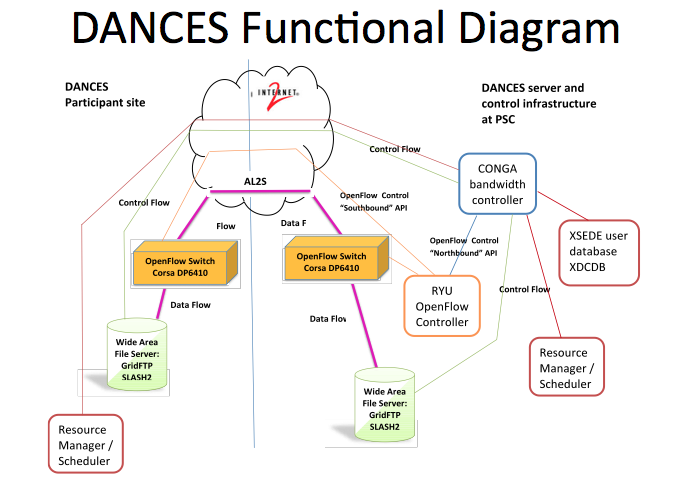

The NSF-funded DANCES[i] project is intended to investigate and develop the ability to add network bandwidth scheduling, via software-defined networking (SDN) programmability, to selected cyberinfrastructure services and applications. The goal is a software/hardware infrastructure that speeds and smooths high-speed traffic. The recent test involved transferring “Big Data” between two XSEDE sites, the Pittsburgh Supercomputing Center (PSC) and the National Institute for Computational Sciences (NICS) at the University of Tennessee.

“If you think about [high-performance computing] users and their end-to-end workflow, they need to do it by breaking their projects into pieces,” says Victor Hazlewood of NICS, principal author of the peer-reviewed article accompanying the presentation. “One of these pieces inevitably involves moving data into and out of where researchers do their computation.”

Today’s research wide-area networks (WANs) are more of a free-for-all than their compute and storage counterparts, which are scheduled and managed, according to Hazlewood. By design, the protocol software that regulates network data flow treats all data packets as equal. When the networks are not crowded, it’s like an empty freeway. When they are crowded, though, the situation becomes much like a convoy of buses getting broken apart by other traffic. For large datasets traveling across the WAN, that can mean slow movement or even a failure to move the data completely in a reasonable amount of time.
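To put rough numbers on the congestion effect, here is a back-of-the-envelope sketch in Python. It assumes an idealized link whose capacity is split evenly among all active flows, which simplifies real protocol behavior considerably. The 10 Gbps figure is the campus link speed mentioned earlier; the dataset size and flow counts are hypothetical values chosen for illustration.

```python
def transfer_hours(dataset_terabytes: float, link_gbps: float,
                   competing_flows: int = 0) -> float:
    """Idealized transfer time in hours on an evenly shared link.

    Assumption: every flow (ours plus the competitors) gets an equal
    share of the link, and there is no protocol overhead or loss.
    """
    if dataset_terabytes < 0 or link_gbps <= 0 or competing_flows < 0:
        raise ValueError("sizes and rates must be positive")
    share_gbps = link_gbps / (competing_flows + 1)  # our slice of the link
    total_bits = dataset_terabytes * 8e12           # 1 TB = 1e12 bytes
    seconds = total_bits / (share_gbps * 1e9)
    return seconds / 3600


# Hypothetical 100 TB dataset on a 10 Gbps campus link.
alone = transfer_hours(100, 10)                       # link to ourselves
crowded = transfer_hours(100, 10, competing_flows=9)  # ten flows in total
print(f"uncontended: {alone:.1f} h, with 9 competitors: {crowded:.1f} h")
```

Under these assumptions the same transfer takes ten times longer on the crowded link, which is the kind of unpredictability that bandwidth scheduling is meant to remove.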

Project leaders presented a DANCES progress update and their paper[ii] at the XSEDE meeting. In addition to reviewing the technology investigated, the paper details the evaluation of several vendors for use in the project. Corsa Technology, a Canadian technology start-up, was selected. “We were … interested in devices that supported bandwidth reservation,” said coauthor Kathy Benninger of PSC. “Part of that has to do with adoption of the newest OpenFlow versions by switch vendors.”

OpenFlow 1.3, the latest commonly deployed version of the standard network communications interface, has features that support such bandwidth management. But the problem, Benninger explains, is that “compliance” with a given version of OpenFlow doesn’t require a particular network switch to support every facet of that version’s specification. The researchers had to test a number of switch products, eventually choosing the Corsa DP6410 as most fully compatible with the DANCES requirements, successfully testing the combination in a number of data-transfer scenarios between PSC and NICS. Next, the group plans to test the full set of end-to-end system functions that will be needed to support real data transfers by XSEDE users.
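As a rough illustration of the rate limiting that a per-flow meter makes possible, the sketch below models a meter as a token bucket with a sustained rate and a burst allowance. This is a conceptual model only: it is not OpenFlow code, it does not reflect how the Corsa DP6410 or any other switch implements meters, and the class and parameter names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class TokenBucketMeter:
    """Conceptual rate limiter: packets beyond rate plus burst are dropped."""
    rate_bps: float     # sustained rate, in bits per second
    burst_bits: float   # bucket depth, i.e. the largest tolerated burst
    tokens: float = field(init=False)
    last_time: float = field(default=0.0, init=False)

    def __post_init__(self) -> None:
        self.tokens = self.burst_bits  # start with a full bucket

    def allow(self, packet_bits: float, now: float) -> bool:
        """Return True if the packet conforms, False if it would be dropped."""
        elapsed = max(0.0, now - self.last_time)
        # Refill tokens for the elapsed time, capped at the bucket depth.
        self.tokens = min(self.burst_bits,
                          self.tokens + elapsed * self.rate_bps)
        self.last_time = now
        if packet_bits <= self.tokens:
            self.tokens -= packet_bits
            return True
        return False


# Hypothetical flow limited to 1,000 bits/s with a 1,000-bit burst.
meter = TokenBucketMeter(rate_bps=1000, burst_bits=1000)
first = meter.allow(800, now=0.0)   # fits within the initial burst
second = meter.allow(800, now=0.1)  # too soon, not enough tokens yet
third = meter.allow(800, now=1.0)   # bucket has refilled by now
print(first, second, third)
```

Applying one such limiter per flow caps what each flow can consume regardless of how aggressively the sender transmits.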

Here’s an excerpt from the paper describing target device and application characteristics:

“The SDN capabilities would be provided by a network device that implements OpenFlow 1.3 including per-flow meters and per-port queuing required by DANCES. The bandwidth control and scheduling capability will be used to mitigate congestion-induced throughput problems on end site networks. The prototype environment setup has been done in two phases. The first phase was done by setting up and testing in a virtual environment and the second phase was done by creating a WAN test environment.

“The cyberinfrastructure applications chosen for investigation and potential performance enhancement by DANCES include (1) SCP and GridFTP data transfers and (2) wide area distributed file system data transfers initiated by, and integrated with, job scheduling and resource management. In addition, SLASH2, the wide area distributed file system targeted for DANCES integration, natively supports scheduled remote file replication. Coupling the SLASH2 native file transfer scheduling with the network bandwidth scheduling capability of DANCES increases the efficiency of both network and file system resources.

“The project set out to deploy a prototype environment to extend the TORQUE/Moab scheduling and management currently in use at several XSEDE supercomputing sites to support networking as a schedulable resource. The wide area distributed file systems selected for bandwidth scheduling integration were the XSEDE-wide File System built on IBM’s General Parallel File System (GPFS) and SLASH2 developed at Pittsburgh Supercomputing Center. Since the award of DANCES, XSEDE has decided to no longer support the XSEDE-wide file system based on GPFS and, therefore, DANCES modified its goals to test and prototype using GridFTP, SLASH2 and now SCP as the replacement for GPFS.”
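The idea of treating the network as a schedulable resource can be sketched as a simple admission check: a bandwidth request for a time window is accepted only if the link still has capacity throughout that window. The Python below is a minimal illustration under that assumption. It is not the TORQUE/Moab interface or the DANCES implementation, and all names in it are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Reservation:
    start: float  # window start, in seconds
    end: float    # window end (exclusive), in seconds
    gbps: float   # reserved bandwidth


class LinkSchedule:
    """Tracks bandwidth reservations on one link of fixed capacity."""

    def __init__(self, capacity_gbps: float) -> None:
        self.capacity_gbps = capacity_gbps
        self.reservations: list[Reservation] = []

    def peak_usage(self, start: float, end: float) -> float:
        """Highest total reserved bandwidth at any instant in [start, end)."""
        overlapping = [r for r in self.reservations
                       if r.start < end and r.end > start]
        # Usage can only rise where a reservation begins, so it is enough
        # to check the window start and each start inside the window.
        points = {start} | {r.start for r in overlapping if r.start > start}
        return max(sum(r.gbps for r in overlapping if r.start <= t < r.end)
                   for t in points)

    def try_reserve(self, start: float, end: float, gbps: float) -> bool:
        """Admit the request only if it never exceeds link capacity."""
        if end <= start or gbps <= 0:
            raise ValueError("need end > start and gbps > 0")
        if self.peak_usage(start, end) + gbps > self.capacity_gbps:
            return False
        self.reservations.append(Reservation(start, end, gbps))
        return True


# Hypothetical 10 Gbps link shared by several scheduled transfers.
link = LinkSchedule(capacity_gbps=10)
results = [
    link.try_reserve(0, 100, 6),    # accepted: link is empty
    link.try_reserve(50, 150, 6),   # rejected: 12 Gbps needed at t=50
    link.try_reserve(100, 200, 6),  # accepted: first window has ended
    link.try_reserve(50, 150, 4),   # accepted: peaks at exactly 10 Gbps
]
print(results)
```

A job scheduler could run a check like this before starting a transfer-heavy job, deferring the job until the requested bandwidth is available.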

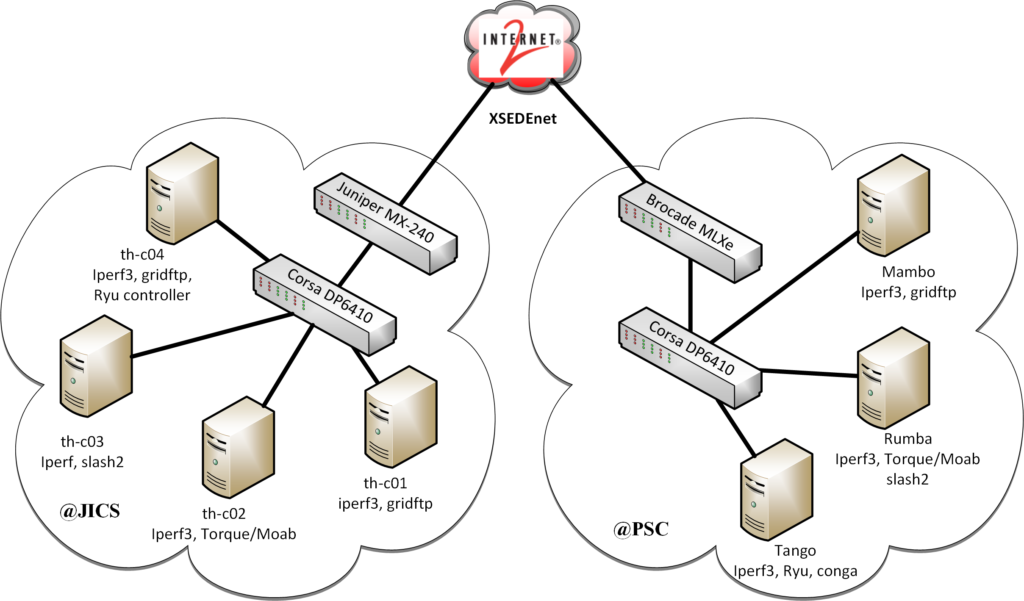

Shown above is an early test DANCES WAN configuration.

The authors note that software-defined networking has become a broadly used term that covers both open and vendor-proprietary protocols for network device control communication. Early in the project, OpenFlow was selected as the switch control protocol because it was the most widely deployed and supported, as well as being an open standard. The DANCES team identified the version 1.3.0 specification as the minimum target for switch selection in order to provide for complex quality-of-service frameworks (e.g., rate limiting) that could be built on the specification’s per-flow meters and per-port queues. The buffer space available per switch port was also an important consideration.

Product specifications from Arista, Brocade, Cisco, Dell, Noviflow, and Pica8 were assessed and found not to “sufficiently meet all the selection criteria while remaining within the project budget.” Five OpenFlow switches were selected for field testing: an early-release Netronome Network Flow Processing Platform (NFPP-1U), a Juniper EX9204, a Juniper MX80-48T, an HP5920 (now HPE), and a Corsa DP6410, which was eventually selected for the project deployment.

Hazlewood expects that the SDN technology employed by DANCES may be adopted by major coordinated HPC networks such as XSEDE within the next five years, with more mainstream use in five to 10 years.

References

[i] The Developing Applications with Networking Capabilities via End-to-End SDN (DANCES) project is a collaboration between The University of Tennessee’s National Institute for Computational Sciences (UT-NICS), Pittsburgh Supercomputing Center (PSC), Pennsylvania State University (Penn State), the National Center for Supercomputing Applications (NCSA), Texas Advanced Computing Center (TACC), Georgia Institute of Technology (Georgia Tech), the Extreme Science and Engineering Discovery Environment (XSEDE), and Internet2 to investigate and develop the ability to add network bandwidth scheduling via software-defined networking (SDN) programmability to selected cyberinfrastructure services and applications. DANCES, funded by the National Science Foundation’s Campus Cyberinfrastructure – Network Infrastructure and Engineering (CC-NIE) program award numbers 1341005, 1340953, and 1340981, has field tested five vendor network devices to determine which one implements the parts of the OpenFlow 1.3 standard that DANCES requires to provide the desired network reservation and rate-limiting capability.

[ii] Developing Applications with Networking Capabilities via End-to-End SDN (DANCES), by Victor Hazlewood, Kathy Benninger, Greg Peterson, Jason Charcalla, Benny Sparks, Jesse Hanley, Andrew Adams, Bryan Learn, Robert Budden, Derek Simmel, Joseph Lappa, Jared Yanovich; http://www.psc.edu/images/news/XSEDE16-DANCES-final.pdf