IBM Research today announced a significant advance in phase-change memristive technology, based on chalcogenide phase-change materials, that IBM says has the potential to achieve much higher neuronal circuit densities and lower power consumption in neuromorphic computing. Also, for the first time, researchers demonstrated randomly spiking neurons based on the technology and were able to detect “temporal correlations in parallel data streams and in sub-Nyquist representation of high-bandwidth signals.”

The announcement coincided with the publication of a paper, “Stochastic phase-change neurons,” in Nature Nanotechnology. The work, largely performed at IBM Research Zurich, is part of a sizable body of exciting research currently under way in the neuromorphic space.

Turning on two large-scale neuromorphic computers – SpiNNaker and BrainScaleS – this spring and making them readily available to researchers marked another major step forward in pursuit of brain-inspired computing. These machines, based on different neuromorphic architectures, mimic much more closely the way brains process information. Why this is important is perhaps best illustrated by an earlier effort to map neuron-like processing onto a traditional supercomputer, in this case Japan’s K Computer.

“They used about 65,000 processors [to create] a billion very simple neurons. Call it one percent of the brain – but it’s not really a brain, [just] a very simple network model. This machine consumes the power of 30 MW and the system runs 1,500X slower than real-time biology,” said Karlheinz Meier, a leader in the Human Brain Project whose group developed the BrainScaleS machine. In terms of energy efficiency, this K Computer effort was “ten billion times less energy efficient.”

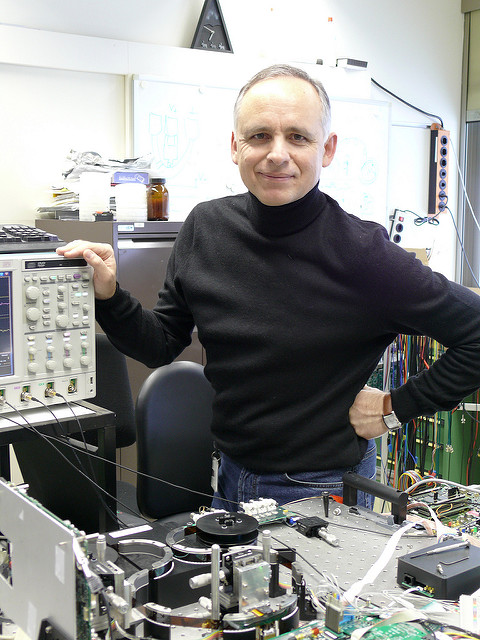

The IBM research takes substantial steps forward on several fronts. “We have been researching phase-change materials for memory applications for over a decade, and our progress in the past 24 months has been remarkable,” said IBM Fellow Evangelos Eleftheriou. “In this period, we have discovered and published new memory techniques, including projected memory, stored 3 bits per cell in phase-change memory for the first time, and now are demonstrating the powerful capabilities of phase-change-based artificial neurons, which can perform various computational primitives such as data-correlation detection and unsupervised learning at high speeds using very little energy.”

IBM scientists organized hundreds of artificial neurons into populations and used them to represent fast and complex signals. Moreover, the artificial neurons have been shown to sustain billions of switching cycles, which would correspond to multiple years of operation at an update frequency of 100 Hz. The energy required for each neuron update was less than five picojoules and the average power less than 120 microwatts. For comparison, 60 million microwatts power a 60-watt lightbulb.
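The endurance claim can be sanity-checked with simple arithmetic. The sketch below assumes that each neuron update consumes exactly one switching cycle; because the article says only “billions,” the cycle counts used here are illustrative, not measured values.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 3600  # Julian year, in seconds


def years_of_operation(switching_cycles: float, update_hz: float) -> float:
    """Lifetime in years, assuming one switching cycle per neuron update."""
    return switching_cycles / update_hz / SECONDS_PER_YEAR


def watts_to_microwatts(watts: float) -> float:
    """Unit conversion used in the lightbulb comparison."""
    return watts * 1e6


if __name__ == "__main__":
    # Illustrative endurance figures; the article only says "billions".
    for cycles in (1e9, 1e10):
        years = years_of_operation(cycles, update_hz=100.0)
        print(f"{cycles:.0e} cycles at 100 Hz: about {years:.2f} years")
    print(f"60 W = {watts_to_microwatts(60):,.0f} microwatts")
```

Under that one-cycle-per-update assumption, 10^9 cycles at 100 Hz last about 0.32 years and 10^10 cycles about 3.2 years, so “multiple years” corresponds to roughly ten billion cycles or more.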

As Eleftheriou notes, memristor technology is hardly new, and as promising as it is, it has also faced obstacles, notably variability and scaling. The IBM approach overcomes some of those issues.

Co-author Dr. Abu Sebastian, IBM Research, told HPCwire, “Variability is indeed a big issue for resistive memory technologies such as the ones based on metal-oxides (TiOx, HfOx etc.). But not so much in phase change memory (PCM) devices. We still have a certain amount of inter-device and intra-device variability even in PCM devices. Clearly this type of randomness would be undesirable for memory-type of applications. However, for neuromorphic applications, this could even be advantageous as we show in our paper. The stochastic firing response of the phase change neurons and their ability to represent high frequency signals arise from this randomness or variability.”

The mechanism behind IBM’s phase-change device technology is itself fascinating. The artificial neurons designed by IBM scientists in Zurich consist of phase-change materials, including germanium antimony telluride, which exhibit two stable states: an amorphous one (without a clearly defined structure) and a crystalline one (with structure). These materials are the basis of rewritable Blu-ray discs. However, the artificial neurons do not store digital information; they are analog, just like the synapses and neurons in our biological brain.

In the published demonstration, the team applied a series of electrical pulses to the artificial neurons, which resulted in the progressive crystallization of the phase-change material, ultimately causing the neuron to fire. In neuroscience, this function is known as the integrate-and-fire property of biological neurons. This is the foundation for event-based computation and, in principle, is similar to how our brain triggers a response when we touch something hot.
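As a rough illustration of the integrate-and-fire behavior described above, the Python sketch below treats the cell’s crystalline fraction as the membrane potential. The class name, the fixed crystallization step and the firing threshold are hypothetical simplifications, not IBM’s device model: each pulse adds a fixed increment, and once the fraction reaches the threshold the neuron fires and is reset to the amorphous state.

```python
from dataclasses import dataclass


@dataclass
class PhaseChangeNeuron:
    """Toy integrate-and-fire neuron whose 'membrane potential' is the
    crystalline fraction of a phase-change cell (0.0 = fully amorphous).

    Hypothetical parameters: each pulse crystallizes a fixed `step`, and
    the neuron fires once the fraction reaches `threshold`.
    """
    step: float = 0.125
    threshold: float = 0.5
    crystalline_fraction: float = 0.0

    def apply_pulse(self) -> bool:
        """Integrate one input pulse; return True if the neuron fires."""
        self.crystalline_fraction = min(1.0, self.crystalline_fraction + self.step)
        if self.crystalline_fraction >= self.threshold:
            # Fire, then reset: a melt-quench pulse re-amorphizes the cell.
            self.crystalline_fraction = 0.0
            return True
        return False


def spike_times(neuron: PhaseChangeNeuron, n_pulses: int) -> list[int]:
    """Indices of the input pulses at which the neuron fired."""
    return [t for t in range(n_pulses) if neuron.apply_pulse()]


if __name__ == "__main__":
    print(spike_times(PhaseChangeNeuron(), 12))
```

With the default values four pulses are needed per spike, so twelve pulses produce spikes at pulse indices 3, 7 and 11. A real device crystallizes nonlinearly and its reset is not perfectly repeatable, which is the source of the stochasticity the researchers describe.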

The authors write in the conclusion:

“The ability to represent the membrane potential in artificial spiking neurons renders phase-change devices a promising technology for extremely dense memristive neuro-synaptic arrays. A particularly interesting property is their scalability down to the nanometer scale and the fast and well-understood dynamics of the amorphous-to-crystalline transition. The high speed and low energy at which phase-change neurons operate will be particularly useful in emerging applications such as processing of event-based sensory information, low-power perceptual decision making and probabilistic inference in uncertain conditions. We also envisage that distributed analysis of rapidly emerging, pervasive data sources such as social media data and the ‘Internet of Things’ could benefit from low-power, memristive computational primitives.”

A figure from the paper, shown below, illustrates the principle.

Artificial neuron based on a phase-change device, with an array of plastic synapses at its input. Schematic of an artificial neuron that consists of the input (dendrites), the soma (which comprises the neuronal membrane and the spike event generation mechanism) and the output (axon). The dendrites may be connected to plastic synapses interfacing the neuron with other neurons in a network. The key computational element is the neuronal membrane, which stores the membrane potential in the phase configuration of a nanoscale phase-change device. Owing to their inherent nanosecond-timescale dynamics, nanometre-length-scale dimensions and native stochasticity, these devices enable the emulation of large and dense populations of neurons for bioinspired signal representation and computation.

“By exploiting the physics of reversible amorphous-to-crystal phase transitions, we show that the temporal integration of postsynaptic potentials can be achieved on a nanosecond timescale. Moreover, we show that this is inherently stochastic because of the melt-quench-induced reconfiguration of the atomic structure occurring when the neuron is reset,” write the researchers in the paper.
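The effect of a non-repeatable reset can be sketched by letting each melt-quench reset leave behind a randomly sized amorphous region. In the hypothetical model below, that region is a Gaussian-distributed “gap” that fixed-size crystallizing pulses must close before the neuron fires again; the distribution and its parameters are assumptions chosen for illustration, not values from the paper.

```python
import random
import statistics
from collections import Counter


def pulses_per_spike(n_spikes: int, step: float = 0.1, mean_gap: float = 0.5,
                     sd_gap: float = 0.1, seed: int = 0) -> list[int]:
    """Number of identical input pulses needed for each of n_spikes firings.

    Hypothetical model: after every reset, the amorphous region to be
    crystallized is drawn from a Gaussian (clipped to at least one step).
    """
    rng = random.Random(seed)
    counts = []
    for _ in range(n_spikes):
        gap = max(step, rng.gauss(mean_gap, sd_gap))  # random post-reset state
        progress, pulses = 0.0, 0
        while progress < gap:  # integrate pulses until the threshold is crossed
            progress += step
            pulses += 1
        counts.append(pulses)
    return counts


if __name__ == "__main__":
    counts = pulses_per_spike(1000)
    print("mean pulses per spike:", round(statistics.mean(counts), 2))
    print("std dev:", round(statistics.stdev(counts), 2))
    print("histogram:", sorted(Counter(counts).items()))
```

Even though every input pulse is identical, the number of pulses between spikes varies from one firing event to the next, which is the kind of stochastic firing the paper attributes to the reset process.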

Mimicking threshold-based neuronal models with traditional technology can be problematic, note the researchers. “Emulating these by means of conventional CMOS circuits, such as current-mode, voltage-mode and subthreshold transistor circuits, is relatively complex and hinders seamless integration with highly dense synaptic arrays. Moreover, conventional CMOS solutions rely on storing the membrane potential in a capacitor. Even with a drastic scaling of the technology node, realizing the capacitance densities measured in biological neuronal membranes is challenging.”

(A brief note on the phase-change device attributes is included at the end of this article.)

One interesting aspect of the work is the demonstration of stochastic behavior in artificial neurons based on phase-change technology. The authors believe they can turn this into an advantage. The stochastic behavior of neurons in nature results from many sources, such as ionic conductance noise, the chaotic motion of charge carriers due to thermal noise, inter-neuron morphologic variabilities and background noise. This complexity has “restricted the implementation of artificial noisy integrate-and-fire neurons in software simulations”, despite their importance in bio-inspired computation and applications in signal and information processing.

The phase-change devices, it turns out, also exhibit stochastic behavior for similar reasons. Think of a population of 1,000 artificial neurons based on IBM’s phase-change technology. Broadly, when fed the same signal trains, they will fire at slightly different rates that track standard probability models well. This can be extremely useful when using a population of artificial neurons for some tasks.
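A population-level version of the same kind of toy model shows why this randomness can help. In the sketch below, which is an illustration under stated assumptions rather than the experiment reported in the paper, every neuron receives the same slowly varying input, integrates it, and on firing resets into a new random state. Because the neurons fall out of step with one another, the total spike count per time step follows the input even though each neuron fires only every few steps. The function names, the Gaussian reset model and all parameter values are hypothetical.

```python
import math
import random


def population_response(signal, n_neurons=500, mean_gap=1.0, sd_gap=0.3, seed=1):
    """Spike count per time step for a population of stochastic
    integrate-and-fire neurons that all receive the same input."""
    rng = random.Random(seed)

    def new_gap():
        # Hypothetical random post-reset state (stand-in for melt-quench variability).
        return max(0.05, rng.gauss(mean_gap, sd_gap))

    gaps = [new_gap() for _ in range(n_neurons)]
    progress = [rng.uniform(0.0, g) for g in gaps]  # neurons start out of phase
    counts = []
    for s in signal:
        fired = 0
        for i in range(n_neurons):
            progress[i] += s  # integrate this time step's input
            if progress[i] >= gaps[i]:
                fired += 1
                progress[i] = 0.0  # reset ...
                gaps[i] = new_gap()  # ... into a new random state
        counts.append(fired)
    return counts


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    return cov / math.sqrt(vx * vy)


if __name__ == "__main__":
    # Illustrative input: a slow sinusoid that stays positive.
    signal = [0.2 + 0.15 * math.sin(2 * math.pi * t / 50) for t in range(200)]
    population = population_response(signal)
    single = population_response(signal, n_neurons=1)
    print("population/input correlation:", round(pearson(signal, population), 3))
    print("single-neuron spikes in 200 steps:", sum(single))
```

Raising `n_neurons` makes the population count follow the input more smoothly. A single neuron fires at most once per step and only every few steps, so on its own it cannot trace the waveform at this time resolution.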

“The relatively complex computational tasks, such as Bayesian inference, that stochastic neuronal populations can perform with collocated processing and storage render them attractive as a possible alternative to von-Neumann-based algorithms in future cognitive computers.”


Note on Phase-Change Device Attributes Excerpted From the Paper

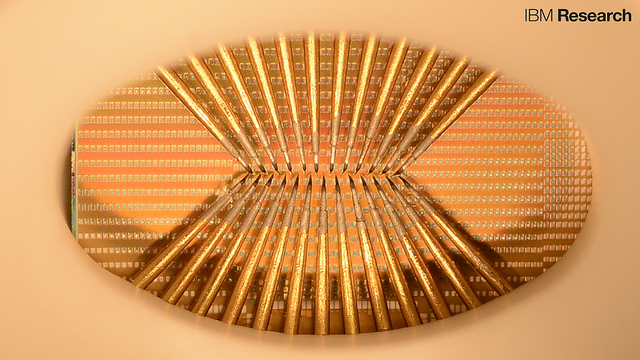

The mushroom-type phase-change devices used in the experiments were fabricated in the 90 nm technology node with the bottom electrode created using a sublithographic key-hole process (ref. 27). The phase-change material was doped Ge2Sb2Te5. All experiments were conducted on phase-change devices that had been heavily cycled. The bottom electrode had a radius of 20 nm and a length of 65 nm. The phase-change material was 100 nm thick and extended to the top electrode, the radius of which was 100 nm. For the single-neuron experiments, the phase-change device was operated in series with a resistor of 5 kΩ. The experiments using multiple neurons and experiments with neuronal populations were based on a crossbar topology in which 100 phase-change devices were interconnected in a 10 × 10 array unit, with a lateral field-effect transistor used as the access device. We used multiple array units to reach population sizes of up to 500 neurons.
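The single-neuron setup, a phase-change device in series with a 5 kΩ resistor, is a simple voltage divider. The sketch below shows how the current and the share of the applied voltage across the device change as the device resistance falls during crystallization. The applied voltage and device resistances in the example are hypothetical values, not figures from the paper.

```python
R_SERIES_OHM = 5_000.0  # series resistor used in the single-neuron experiments


def cell_current_ua(v_applied: float, r_device_ohm: float,
                    r_series_ohm: float = R_SERIES_OHM) -> float:
    """Current through the series pair, in microamperes (Ohm's law)."""
    return v_applied / (r_series_ohm + r_device_ohm) * 1e6


def device_voltage_fraction(r_device_ohm: float,
                            r_series_ohm: float = R_SERIES_OHM) -> float:
    """Fraction of the applied voltage that drops across the phase-change cell."""
    return r_device_ohm / (r_series_ohm + r_device_ohm)


if __name__ == "__main__":
    v = 0.2  # hypothetical applied voltage, in volts
    # Hypothetical device resistances, from resistive (amorphous) to conductive (crystalline).
    for r_dev in (1_000_000.0, 100_000.0, 10_000.0):
        print(
            f"R_device = {r_dev:>11,.0f} ohm: "
            f"I = {cell_current_ua(v, r_dev):6.2f} uA, "
            f"V_device/V = {device_voltage_fraction(r_dev):.3f}"
        )
```

As the cell becomes more conductive, the current rises and a growing share of the voltage falls across the fixed resistor, so the resistor caps the current at the applied voltage divided by 5 kΩ.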

Link to paper: http://www.nature.com/nnano/journal/v11/n8/full/nnano.2016.70.html