What will computing look like in the post-Moore’s Law era? That’s probably a bad way to pose the question, and certainly there’s no shortage of ideas. A new federal white paper – A Federal Vision for Future Computing: A Nanotechnology-Inspired Grand Challenge – tackles the ‘what’s next’ question, spells out seven specific research and development priorities, and identifies the federal entities responsible.

The document, roughly a year in the making, is from the National Nanotechnology Initiative (NNI). The NNI, you may know, has its roots in discussions arising in the late 1990s and was formally created by the 21st Century Nanotechnology Research and Development Act in 2003. NNI encompasses a large number of activities and has a $1.4B budget request for FY2017.

Intended from the start to be a long-term program with long-term R&D horizons, NNI released the new vision paper on the first anniversary of the National Strategic Computing Initiative (NSCI) – perhaps as encouragement to the NSCI community. Specifically, the vision paper supports the Nanotechnology-Inspired Grand Challenge, announced last fall by the Obama Administration, to develop “transformational computing capabilities by combining innovations in multiple scientific disciplines.”

As described in the latest paper, the Grand Challenge addresses three Administration priorities: the National Nanotechnology Initiative (NNI); the National Strategic Computing Initiative (NSCI); and the Brain Research through Advancing Innovative Neurotechnologies (BRAIN) Initiative. Its aim is to “create a new type of computer that can proactively interpret and learn from data, solve unfamiliar problems using what it has learned, and operate with the energy efficiency of the human brain.”

Somewhat soberly, the report says, “While it continues to be a national priority to advance conventional digital computing—which has been the engine of the information technology revolution—current technology falls far short of the human brain in terms of the brain’s sensing and problem-solving abilities and its low power consumption. Many experts predict that fundamental physical limitations will prevent transistor technology from ever matching these characteristics.”

NNI has categorized research and development needed to achieve the Grand Challenge into seven focus areas:

- Materials

- Devices and Interconnects

- Computing Architectures

- Brain-Inspired Approaches

- Fabrication/Manufacturing

- Software, Modeling, and Simulation

- Applications

Nanotechnology, of course, is already an area of vigorous R&D. As the list of focus areas illustrates, the program covers a wide swath of technologies. Though brief, much of the directional discussion is fascinating. Here’s an excerpt from the materials section:

“The scaling limits of electron-based devices such as transistors are known to be on the order of 5 nm due to quantum-mechanical tunneling. Smaller devices can be made if information-bearing particles with mass greater than the mass of an electron are used. Therefore, new principles for logic and memory devices, scalable to ~1 nm, could be based on “moving atoms” instead of “moving electrons;” for example, by using nanoionic structures. Examples of solid-state nanoionic devices include memory (ReRAM) and logic (atomic/ionic switches).”
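The mass argument in that excerpt can be made concrete with a standard textbook approximation. This is a sketch under stated assumptions, not a derivation taken from the white paper: a rectangular energy barrier of width $a$ and height $E_b$, a particle of mass $m$, and the WKB estimate for the tunneling probability $T$. All of these symbols are introduced here for illustration.

$$
T \approx \exp\!\left(-\frac{2a\sqrt{2mE_b}}{\hbar}\right)
$$

Holding the tolerable leakage $T$ fixed and solving for the barrier width gives

$$
a_{\min} \approx \frac{\hbar\,\ln(1/T)}{2\sqrt{2mE_b}} \;\propto\; \frac{1}{\sqrt{m}}
$$

so, under this simple model, the minimum barrier width set by tunneling shrinks with the square root of the particle mass. An ion is thousands of times heavier than an electron, which is why tunneling stops being the limiting factor for “moving atoms” devices and other constraints, such as the size of the atoms themselves, take over.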

Despite the diversity of topics covered, the goal of emulating human brain-like capabilities runs throughout the document. Indeed, brain-inspired computing R&D is hot right now and making substantial progress.

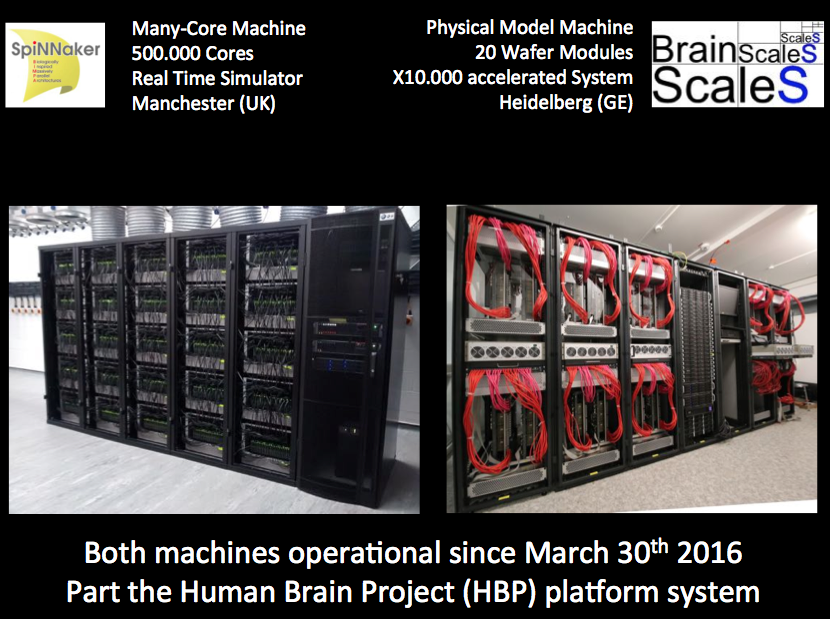

In late spring of this year, IBM and Lawrence Livermore National Laboratory announced a collaboration in which LLNL would receive a 16-chip TrueNorth system representing a total of 16 million neurons and 4 billion synapses. At almost the same time in Europe, two large-scale neuromorphic computers, SpiNNaker and BrainScaleS, were put into service and made available to the wider research community.

LLNL will also receive an end-to-end ecosystem to create and program energy-efficient machines that mimic the brain’s abilities for perception, action and cognition. The ecosystem consists of a simulator; a programming language; an integrated programming environment; a library of algorithms as well as applications; firmware; tools for composing neural networks for deep learning; a teaching curriculum; and cloud enablement.

“Lawrence Livermore computer scientists will collaborate with IBM Research, partners across the Department of Energy complex and universities to expand the frontiers of neurosynaptic architecture, system design, algorithms and software ecosystem,” according to a project description on the LLNL web site.
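For a sense of scale, the totals quoted above for the 16-chip TrueNorth system can be broken down per chip and per neuron. The short Python sketch below uses only the rounded figures from this article, so its results are approximate; the variable names are illustrative.

```python
# Rounded totals quoted in the article for the 16-chip TrueNorth system.
chips = 16
total_neurons = 16_000_000
total_synapses = 4_000_000_000

# Simple integer division; results inherit the rounding of the quoted totals.
neurons_per_chip = total_neurons // chips
synapses_per_chip = total_synapses // chips
synapses_per_neuron = total_synapses // total_neurons

print(f"neurons per chip:    {neurons_per_chip:,}")     # 1,000,000
print(f"synapses per chip:   {synapses_per_chip:,}")    # 250,000,000
print(f"synapses per neuron: {synapses_per_neuron:,}")  # 250
```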

The SpiNNaker project, run by Steve Furber, one of the inventors of the ARM architecture and a researcher at the University of Manchester, has roughly 500K ARM processors. ARM was selected, said Karlheinz Meier, a leader in the Human Brain Project (HBP) whose group developed the BrainScaleS machine, because ARM cores are cheap, at least if you make them very simple (integer operations). The challenge is achieving the required scaling. “Steve implemented a router on each of his chips, which is able to very efficiently communicate action potentials, called spikes, between individual ARM processors,” Meier said.
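The idea of simple cores exchanging spikes through a router can be illustrated with a toy model. The Python sketch below is not SpiNNaker code and does not use its software stack; it assumes a basic leaky integrate-and-fire neuron and a table-driven router, and every class, function, and parameter name in it (`LIFNeuron`, `Router`, `simulate`, `threshold`, `leak`) is hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class LIFNeuron:
    """Toy leaky integrate-and-fire neuron (illustrative parameters)."""
    threshold: float = 1.0
    leak: float = 0.9
    potential: float = 0.0

    def step(self, input_current: float) -> bool:
        # Decay the stored potential, then add this step's input.
        self.potential = self.potential * self.leak + input_current
        if self.potential >= self.threshold:
            self.potential = 0.0  # reset after emitting a spike
            return True
        return False


@dataclass
class Router:
    """Maps a spiking source neuron to (target neuron, weight) pairs."""
    table: Dict[int, List[Tuple[int, float]]] = field(default_factory=dict)

    def route(self, spikes: List[int]) -> Dict[int, float]:
        # Accumulate the weighted input each target receives next step.
        inputs: Dict[int, float] = {}
        for src in spikes:
            for dst, weight in self.table.get(src, []):
                inputs[dst] = inputs.get(dst, 0.0) + weight
        return inputs


def simulate(neurons: List[LIFNeuron], router: Router,
             external: Dict[int, float], steps: int) -> List[List[int]]:
    """Return, for each time step, the ids of the neurons that spiked."""
    pending: Dict[int, float] = {}
    history: List[List[int]] = []
    for _ in range(steps):
        spikes = []
        for nid, neuron in enumerate(neurons):
            current = external.get(nid, 0.0) + pending.get(nid, 0.0)
            if neuron.step(current):
                spikes.append(nid)
        pending = router.route(spikes)  # delivered on the next step
        history.append(spikes)
    return history


# Neuron 0 gets a constant external drive and projects to neuron 1.
neurons = [LIFNeuron(), LIFNeuron()]
router = Router(table={0: [(1, 1.0)]})
history = simulate(neurons, router, external={0: 0.6}, steps=4)
print(history)  # [[], [0], [1], [0]]
```

Only the spike events, not the neurons' internal state, cross between units here, which is the property that makes small, cheap integer cores workable in the design Meier describes.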

The BrainScaleS effort, led by Meier, “makes physical models of cell neurons and synapses. Of course we are not using a biological substrate. We use CMOS. Technically it’s a mixed-signal CMOS approach. In reality it is pretty much how the real brain operates. The big thing is you can automatically scale this by adding synapses. When it is running you can change the parameters,” Meier said.

It will be interesting to track neuromorphic computing’s advance and observe how effective various government programs are (or are not) at moving it forward.

Besides including discussion of technical challenges and promising approaches for each of the seven focus areas, the white paper lays out 5-, 10-, and 15-year goals for each focus area. Here’s a partial excerpt from the brain-inspired computing section:

“High-performance computing (HPC) has traditionally been associated with floating point computations and primarily originated from needs in scientific computing, business, and national security. On the other hand, brain-inspired approaches, while at least as old as modern computing, have traditionally aimed at what might be called pattern recognition applications (e.g., recognition/understanding of speech, images, text, human languages, etc., for which the alternative term, knowledge extraction, is preferred in some circles) and have exploited a different set of tools and techniques.

“Recently, convergence of these two computing paths has been mandated by the National Strategic Computing Initiative Strategic Plan, which places due emphasis on brain-inspired computing and pattern recognition or knowledge extraction type applications for enabling inference, prediction, and decision support for big data applications. DOE and NSF have demonstrated significant scientific advancements by investing and supporting HPC resources for open scientific applications. However, it is becoming apparent that brain-like computing capabilities may be necessary to enable scientific advancement, economic growth, and national security applications.

- 5-year goal: Translate knowledge from biology, neuroscience, materials science, physics, and engineering into useable information for computing system designers.

- 10-year goal: Identify and reverse engineer biological or neuro-inspired computing architectures, and translate results into models and systems that can be prototyped.

- 15-year goal: Enable large-scale design, development, and simulation tools and environments able to run at exascale computing performance levels or beyond. The results should enable development, testing, and verification of applications, and be able to output designs that can be prototyped in hardware.”

The new document is a fairly quick read and has a fair amount of technical detail. Here’s a link to the white paper: http://www.nano.gov/node/1635