At the HPC User Forum in Austin, Texas, Dr. Yutaka Ishikawa, project lead for RIKEN AICS, confirmed that Japan’s next-generation supercomputer, the Post-K computer, has been delayed by one to two years, slipping from its original 2020 target to 2021 or 2022. According to sources, the additional time is needed to ensure sufficient processor volume. With the adjusted schedule, Japan’s exascale horizon has moved closer to the US goal of standing up a productive exascale computer no later than 2023.

To be clear, Japan does not use the term exascale with respect to Post-K. Instead, it has set a goal for the machine to deliver a 100x speedup (with algorithm and code optimizations) over the nation’s current top number-cruncher, the K computer (an 8.2-petaflops system). The US Department of Energy (DOE) has a similar objective: it defines a “capable exascale machine” as a supercomputer that solves science problems 50 times faster than today’s 20-petaflops systems.
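
As a rough illustration of how the two targets compare, the sketch below scales each baseline’s quoted petaflops figure by its speedup goal. This is a naive assumption made only for illustration: both targets are defined in terms of application speedup, not peak or Linpack flops, so the resulting “petaflops-equivalent” numbers are not official figures.

```python
# Naive comparison of the Post-K and DOE "capable exascale" targets.
# ASSUMPTION: the speedup factor is applied directly to each baseline's
# quoted petaflops figure. Both targets actually refer to application
# speedup, so these are rough petaflops-equivalents, not official numbers.

K_COMPUTER_PF = 8.2      # K computer, as quoted in the article
DOE_BASELINE_PF = 20.0   # "today's 20 petaflops systems"


def implied_pf(baseline_pf: float, speedup: float) -> float:
    """Scale a baseline performance figure (petaflops) by a target speedup."""
    return baseline_pf * speedup


post_k_pf = implied_pf(K_COMPUTER_PF, 100)  # Japan: 100x over the K computer
doe_pf = implied_pf(DOE_BASELINE_PF, 50)    # DOE: 50x over 20 PF systems

print(f"Post-K target: ~{post_k_pf:.0f} PF-equivalent")
print(f"DOE target:    ~{doe_pf:.0f} PF-equivalent")
```

Under this simplification, the two goals land within about 20% of each other, both in the neighborhood of one exaflops-equivalent.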

Updated timetable for Japan’s Post-K computer, from Dr. Ishikawa’s presentation at the HPC User Forum (Sept. 7, 2016)

The Japanese government has so far budgeted 110 billion yen (about 0.91 billion USD) for the Post-K computer, covering research, development and acquisition, as well as application development. The complete project cost currently stands at 130 billion yen, with the difference (20 billion yen) funded by Fujitsu. However, trusted sources tell us that Fujitsu is currently negotiating to increase its compensation. A final budget is expected to be announced in 2017.
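
The budget figures above can be cross-checked with simple arithmetic. The sketch below derives Fujitsu’s share of the current 130-billion-yen total and, assuming the same exchange rate implied by the quoted 110 billion yen ≈ 0.91 billion USD conversion, a rough USD figure for the full project. These are derived estimates, not reported numbers, and they would change if Fujitsu’s negotiations alter the split.

```python
# Cross-check of the Post-K budget figures quoted in the article.
# ASSUMPTION: the full project cost converts to USD at the same rate
# implied by the article's 110 billion yen ~ 0.91 billion USD figure.

TOTAL_YEN_B = 130        # complete project cost, billions of yen
GOVERNMENT_YEN_B = 110   # government budget so far, billions of yen
GOVERNMENT_USD_B = 0.91  # quoted USD equivalent of the government budget

fujitsu_yen_b = TOTAL_YEN_B - GOVERNMENT_YEN_B     # Fujitsu's contribution
fujitsu_share = fujitsu_yen_b / TOTAL_YEN_B        # fraction of total cost
yen_per_usd = GOVERNMENT_YEN_B / GOVERNMENT_USD_B  # implied exchange rate
total_usd_b = TOTAL_YEN_B / yen_per_usd            # rough total in USD

print(f"Fujitsu contribution: {fujitsu_yen_b} billion yen ({fujitsu_share:.1%})")
print(f"Implied rate: ~{yen_per_usd:.0f} yen/USD")
print(f"Estimated total: ~{total_usd_b:.2f} billion USD")
```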

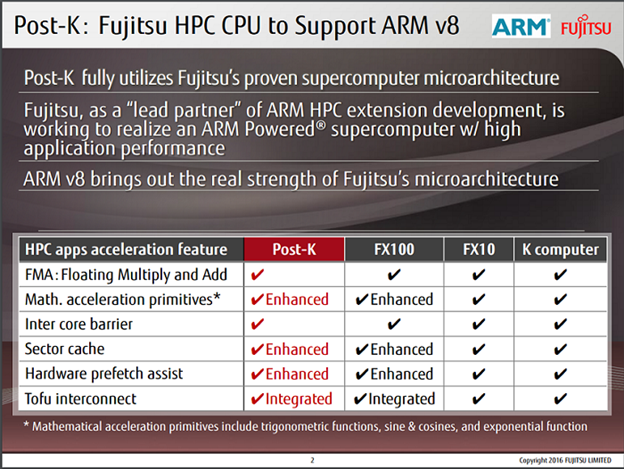

At ISC16, Fujitsu announced plans to adopt the ARMv8-A architecture for Post-K, a switch from the SPARC64 chips used in the K computer. Dr. Ishikawa believes this is absolutely the right direction. “We think the usability will be improved over the K computer by changing the architecture,” he said. “The K computer uses the SPARC architecture but now the community for the SPARC architecture is very small and we don’t have as much software for SPARC architecture. With the ARM architecture, we expect wider community support, leading to mature tools, compilers and systems software.”

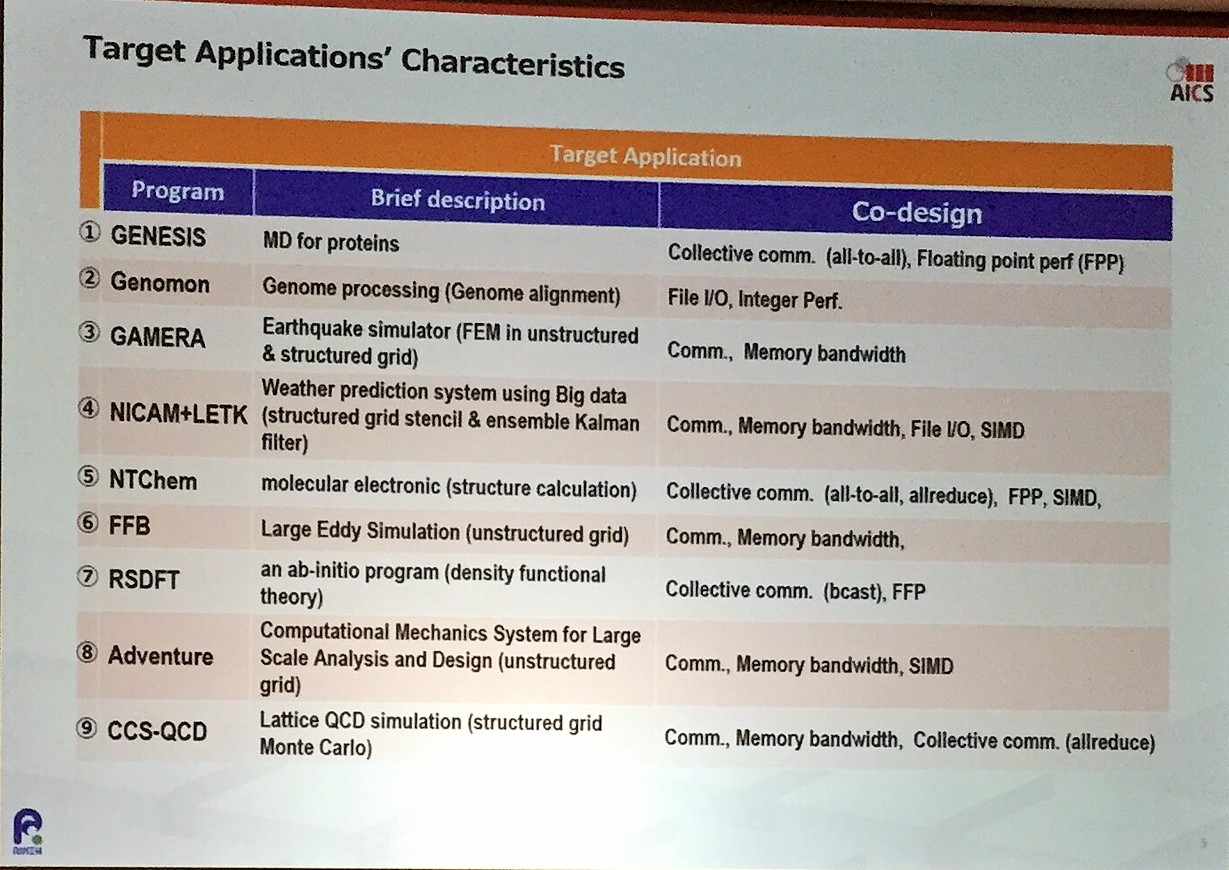

The Japanese government selected nine high-priority application targets for the new supercomputer, segmented into five areas: societal/health benefits; disaster preparedness (including climate research); energy security; industrial competitiveness; and basic science.

Despite the ISA switch, the microarchitecture will remain almost the same, said Dr. Ishikawa, which gives his team a baseline for predicting how fast applications will run.

“We expect that problems taking a year to solve on the current system will only take a few days on the new one,” Dr. Ishikawa shared on the Flagship2020 website. “As a result, we will also be able to address a broader range of problems and make use of the new supercomputer in novel, innovative ways. The new system will become an essential tool for solving problems in the areas of bioscience, disaster prevention, environmental issues, energy, and manufacturing.”
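
Dr. Ishikawa’s “a year to a few days” framing lines up with the 100x target. The sketch below simply divides a one-year baseline runtime by a given speedup; it assumes the speedup applies uniformly to the whole run, which real applications rarely achieve exactly.

```python
# Convert a baseline runtime into an estimated runtime on a faster system.
# ASSUMPTION: the speedup applies uniformly to the entire run.

DAYS_PER_YEAR = 365


def runtime_days(baseline_days: float, speedup: float) -> float:
    """Return the estimated runtime (days) after applying a speedup factor."""
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    return baseline_days / speedup


# Compare a 50x speedup (the DOE's factor) with Post-K's 100x goal.
for speedup in (50, 100):
    days = runtime_days(DAYS_PER_YEAR, speedup)
    print(f"{speedup:>3}x speedup: a one-year job takes ~{days:.1f} days")
```

At 100x, a one-year job shrinks to under four days, consistent with “a few days.”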

Like its US and European counterparts, Japan is very much focused on real-world application performance as well as on the “co-design” concept. These similarities are not exactly accidental, US Exascale Computing Project (ECP) lead Paul Messina told HPCwire. A number of efforts, at both grassroots and official levels, have laid the groundwork for these commonalities.

For the last three years, Argonne National Laboratory and RIKEN have worked together under a Memorandum of Understanding intended to facilitate mutually beneficial collaboration. The relationship enables Japanese students to spend months at Argonne, and vice versa.

Another internationally focused group, the Joint Laboratory on Extreme Scale Computing (JLESC), was founded by INRIA and the University of Illinois at Urbana-Champaign to help its members bridge the gap between petascale and extreme-scale computing. JLESC has since grown to include Argonne National Laboratory, Barcelona Supercomputing Center, Jülich Supercomputing Centre, and RIKEN/AICS. The 6th JLESC Workshop will be held Nov. 30 – Dec. 2 at RIKEN/AICS.