Researchers from the Barcelona Supercomputing Center (BSC) today presented the big data roadmap commissioned by the EU as part of the RETHINK big project. The roadmap is intended to identify technology goals, obstacles and actions for developing a more effective big data infrastructure and a stronger competitive position for Europe over the next ten years. Not surprisingly, the leading position of non-European hyperscalers was duly noted as a major roadblock.

Paul Carpenter, senior researcher at BSC and a member of the RETHINK big team, presented the project's results at the European Big Data Congress being held at BSC this week. Like so many EU technology efforts in recent years, the report is tightly focused on industry: developing a stronger technology supplier base and promoting the use of advanced-scale technology by commercial end users. A major objective is to bring academic expertise together with industry to move the needle.

To a significant extent, the key findings are a warning:

- Europe is at a strong disadvantage with respect to hardware/software co-design. The European ecosystem is highly fragmented, while media and internet giants such as Google, Amazon, Facebook, Twitter, Apple and others (also known as hyperscalers) are pursuing verticalization and designing their own infrastructures from the ground up. European companies that are not closely considering hardware and networking technologies as a means of cutting costs and offering better future services run the risk of falling further and further behind. Hyperscalers will continue to take risks and transform themselves because they are the "ecosystem", moving everybody else in their trail.

- The dominance of non-European companies in the server market complicates the possibility of new European entrants in the area of specialized architectures. Intel is currently the gatekeeper for new data center architectures; moreover, Intel is spearheading the effort to increase integration into the CPU package, which can only exacerbate this problem.

The report notes pointedly that even today big data is a capricious and arbitrary term: no one has adequately defined it, perhaps because it's a moving target. What is nevertheless clear is that the avalanche of data is real, and managing and mining it will be increasingly important throughout society. What is not clear, at least to most European companies according to the report, is how much to spend on advanced technology infrastructure to pursue the opportunity.

Many European companies, according to the report, are skeptical of the ROI and remain "extremely price-sensitive" with regard to adopting advanced hardware. That wariness is compounded by "the fact that there is no clean metric or benchmark for side-by-side comparisons for heterogeneous architectures." As a result, "the majority of the companies were not convinced that the investment in expensive hardware coupled with the person months required to make their products work with new hardware were worthwhile."

One reason for the more laissez-faire attitude, the report suggests, is that the industry does not yet see big data problems, only big data opportunities: "This is largely the case because the industry is not yet mature enough for most companies to be trying to do that kind of analytics and all-encompassing Big Data processing that leads to undesirable bottlenecks."

According to the roadmap, companies are also still working out how to extract value from their data and are still looking for the right business model to turn that value into profit. Consequently, they are not focused on processing (and storage) bottlenecks, let alone on the underlying hardware.

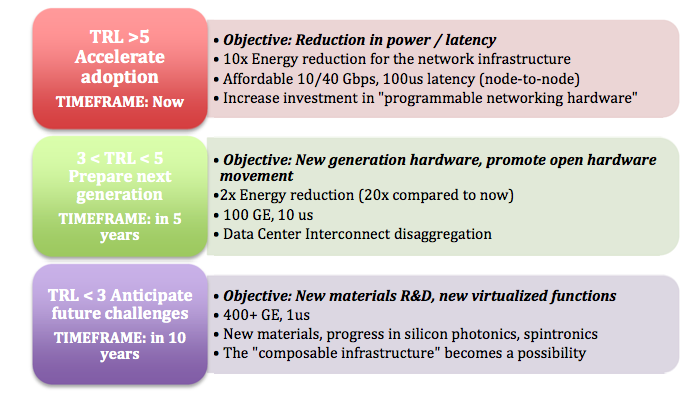

The plan suggests a timetable for many objectives, identifies a “technology readiness level” (TRL) for the goal, as well achievable elements. An example around networking technology is shown below.

Many of the report's findings were gleaned from interviews or surveys with more than 100 companies across a broad spectrum of big data-related industries, including "major and up-and-coming players from telecommunications, hardware design and manufacturers as well as a strong representation from health, automotive, financial and analytics sectors." A fair portion of the report also addresses the technology innovation needed to handle big data going forward.

The RETHINK big roadmap, said Carpenter, helps provide guidance on gaining leadership in big data through "stimulation of research into new hardware architectures for application in artificial intelligence and machine learning, and encouraging hardware and software experts to work together for co-design of new technologies."

The report tackles a long list of topics: disaggregation of the datacenter; the rise of heterogeneous computing, with an emphasis on FPGAs; the use of software-defined approaches; high-speed networking and network appliances; and trends toward growing integration inside the compute node (System on a Chip versus System in a Package). Interestingly, quantum computing is deemed not yet ready, but neuromorphic computing is thought to be on the cusp of readiness and represents an opportunity for Europe, which has supported active research through its Human Brain Project.

The RETHINK big roadmap bullets out 12 action items, which constitute a good summary:

- Promote adoption of current and upcoming networking standards. Europe should accelerate the adoption of the current and upcoming standards (10 and 40Gb Ethernet) based on low-power consumption components proposed by European companies, and connect these companies to end users and data-center operators so that they can demonstrate their value compared to the bigger players.

- Prepare for the next generation of hardware and take advantage of the convergence of HPC and Big Data interests. In particular, Europe must take advantage of its strengths in HPC and embedded systems by encouraging dual-purpose products that bring these different communities together (e.g. HPC / Big Data hardware that can be differentiated in software). This would allow new companies to sell to a bigger market and decrease the risk associated with developing new products.

- Anticipate the changes in Data Center design for 400Gb Ethernet networks (and beyond). This includes paying special attention to photonics-on-silicon integration and novel Data Center interconnect designs.

- Reduce risk and cost of using accelerators. Europe must lower the barrier to entry of heterogeneous systems and accelerators; collaborative projects should bring together end users, application providers and technology providers to demonstrate significant (10x) increase in throughput per node on real analytics applications.

- Encourage system co-design for new technologies. Europe must bring together end users, application providers, system integrators and technology providers to build balanced system architectures based on silicon-in-package integration of new technologies, I/O interfaces and memory interfaces, driven by the evolving needs of big data.

- Improve programmability of FPGAs. Europe should also fund research projects involving providers of tools, abstractions and high-level programming languages for FPGAs or other accelerators with the aim of demonstrating the effectiveness of this approach using real applications. Europe should also encourage a new entrant into the FPGA industry.

- Pioneer markets for neuromorphic computing and increase collaboration. For neuromorphic computing and other disruptive technologies, the principal issue is the lack of a market ecosystem, with insufficient appetite for risk and few European companies with the size and clout to invest in such a risky direction. Europe should encourage collaborative research projects that bring together actors across the whole chain: end users, application providers and technology providers to demonstrate real value from neuromorphic computing in real applications.

- Create a sustainable business environment including access to training data. Europe should address access to training data by encouraging the collection of open anonymized training data and encouraging the sharing of anonymized training data inside EC-funded projects. To address the lack of information sharing, Europe should encourage interaction between hardware providers and Big Data companies using the network-of-excellence instrument or similar.

- Establish standard benchmarks. It is difficult for industry to assess the benefits of using novel hardware. We propose establishing benchmarks to compare current and novel architectures using Big Data applications.

- Identify and build accelerated building blocks. We propose to identify often-required functional building blocks in existing processing frameworks and to replace these blocks with (partially) hardware-accelerated implementations.

- Investigate intelligent use of heterogeneous resources. With edge computing and cloud computing environments calling for heterogeneous hardware platforms, we propose the creation of dynamic scheduling and resource allocation strategies.

- Continue to ask the question – Do companies think that hardware and networking optimizations for Big Data can solve the majority of their problems? As more and more companies learn how to extract value from Big Data as well as determine which business models lead to profits, the number of service offerings and products based on Big Data analytics will grow sharply. This growth will likely lead to an increase in consumer expectations with respect to these Big Data-driven products and services, and we expect companies to run into more and more undesirable performance bottlenecks that will require optimized hardware.

Clearly Europe, like the U.S., is mobilizing efforts to turn high performance technology into a general competitive strength and is also turning its attention to big data. Increasingly, that means blending HPC capabilities with big data analytics to turn the growing gush of data into scientific insight and commercial advantage. The latest report, roughly 50 pages, is a quick but worthwhile read for anyone seeking the EU's directional thinking.

Link to BSC press release: https://www.bsc.es/about-bsc/press/bsc-in-the-media/bsc-highlights-need-european-research-big-data-hardware-and

Link to the RETHINK big roadmap: http://www.rethinkbigproject.eu/sites/default/files/u273/D5.3RoadmapV23_0.pdf