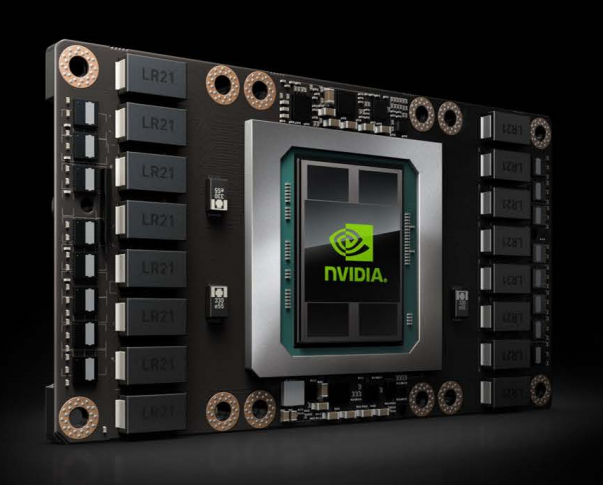

In September, the Center for Advanced Technology Evaluation (CENATE) at Pacific Northwest National Laboratory (PNNL) took possession of NVIDIA’s DGX-1 GPU-based (Pascal 100) supercomputer. More on what they are doing with it later. Soon, IBM will deliver its Con Tutto memory technology. Data Vortex’s advanced switch technology is already in-house, along with products (and ideas) from a handful of other technology heavyweights. Now entering its second year, CENATE already has some potent equipment and ambitious ideas.

“We have established the lofty goal for us to even design some neuromorphic technologies that are doing machine learning natively and not as you can do machine learning for example on a GPU in which you sort of come in from behind and map machine workload to the architecture of the GPU,” says Adolfy Hoisie, PNNL’s chief scientist for computing and CENATE’s principal investigator and director. All things (time and money) being available, “We would like those neuromorphic systems chips, whatever, to actually cast them in silicon.”

This is perhaps getting ahead of the story. Launched last fall and funded by the Department of Energy (DOE) Office of Advanced Scientific Computing Research (ASCR), CENATE is envisioned as a proving ground for advanced technology that is making its way into the market – the DGX-1 and Data Vortex platforms are good examples – and as a lens for keeping a selective eye on technologies further out. A core goal is to assess these technologies for DOE workloads and to influence emerging architectures, including those headed into leadership-class systems.

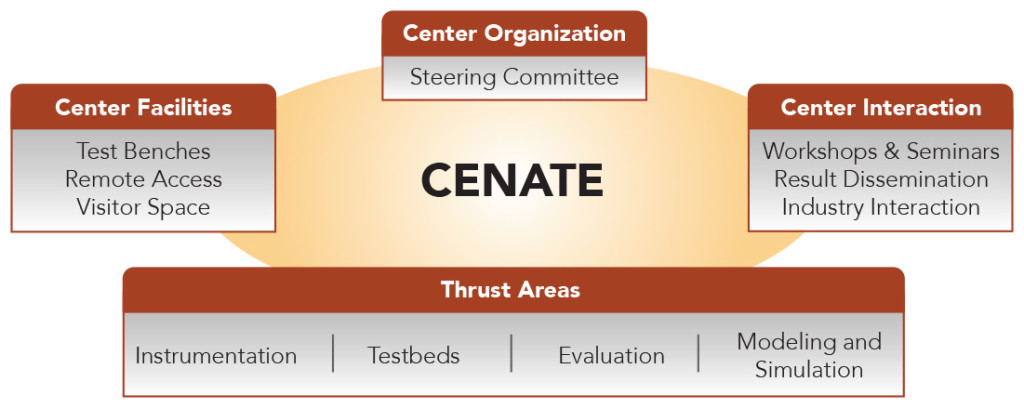

In CENATE taxonomy, the center has what Hoisie labels as “four thrusts:”

- Enablement of Test Beds. “Test beds don’t have a uniform definition of what they are because in CENATE even the notion of a technology pipeline, technology maturity pipeline [varies]. We are going to tackle technologies that range from very early concepts or blueprints all the way to pre-production machines.”

- Extensive Instrumentation and Measurement. “For a national laboratory, we have unique instrumentation and measurement capability. This applies not only to measuring time, which is performance, but also to measurement of power and thermal effects, and we are contemplating venturing into reliability as well. At this time our lab has the capability to measure performance, power and thermal at very high resolutions and very high frequency for dynamic measurements. We measure both static and dynamic [behavior of] these technologies.”

- Technology Evaluation. “Not all these technologies apply equally well to all the application workloads that ASCR may be interested in. What evaluation means is to determine what applications map well onto various architectures that are being contemplated and studied within CENATE then go the extra step in trying to guide future development of those activities within the applications.”

- Modeling and Simulation. “[This] is a big forte of ours. It is a very exciting capability because if we had machines from vendor X from vendor Y and we have the capability to measure things, to validate the initial models, our modeling and simulation [expertise] allows us to ‘place’ this system into the future not just doing simple statistical extrapolations but really guiding the architecture to maximize the positive impact on the applications.”

Work on any technology may cross all CENATE domains and evaluation of NVIDIA’s DGX-1, CENATE’s latest addition, is a good example.

“Firstly we are interested in ways to accelerate computation. We are interested in Pascal as the next generation of GPU. We’ll see what we learn through measurements on Pascal, running benchmarks, and pushing it forward with what-if scenarios, [looking for] when Pascal goes from where it is right now to double precision, to increased memory, to possibly modification in the SIMD characteristics and so forth,” says Hoisie.

Part of the interest, not surprisingly, stems from DOE’s forthcoming Summit supercomputer, to be based at the Oak Ridge Leadership Computing Facility (OLCF). Summit will be based on IBM Power technology and NVIDIA GPUs. Ian Buck, vice president of accelerated computing at NVIDIA, says the DGX-1, like Summit, is based on a strong node architecture rather than a weak node architecture, in which you have “a single node with maybe one or two cores, some memory, and then you replicated that en masse, hundreds of thousands, and you relied on the network for kind of MPI scalability to achieve performance at scale.”

The large infrastructure requirements (cabling, power, networks) inherently limited the weak node approach and complicated programming, he argues. “The vision we have been pursuing for exascale and in general for supercomputing is building these strong node systems like DGX-1 where we put a lot of horsepower into a single node and minimize the number of nodes you have to scale up to,” says Buck.

As described on the OLCF website: “Summit will deliver more than five times the computational performance of Titan’s 18,688 nodes, using only approximately 3,400 nodes when it arrives in 2017. Like Titan, Summit will have a hybrid architecture, and each node will contain multiple IBM POWER9 CPUs and NVIDIA Volta GPUs all connected together with NVIDIA’s high-speed NVLink. Each node will have over half a terabyte of coherent memory (high bandwidth memory + DDR4) addressable by all CPUs and GPUs plus 800GB of non-volatile RAM that can be used as a burst buffer or as extended memory. To provide a high rate of I/O throughput, the nodes will be connected in a non-blocking fat-tree using a dual-rail Mellanox EDR InfiniBand interconnect.”
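The node counts in that description imply a large jump in per-node performance, which is the strong node argument in numbers. A minimal sketch of the arithmetic follows; the function name is made up for illustration, and because OLCF says “more than five times,” the result should be read as a rough lower bound rather than a specification.

```python
# Figures quoted in the OLCF description above.
TITAN_NODES = 18_688
SUMMIT_NODES = 3_400     # "approximately 3,400"
SYSTEM_SPEEDUP = 5.0     # "more than five times", so a lower bound


def per_node_speedup(system_speedup, old_nodes, new_nodes):
    """Average per-node performance ratio implied by a system-level speedup."""
    return system_speedup * old_nodes / new_nodes


ratio = per_node_speedup(SYSTEM_SPEEDUP, TITAN_NODES, SUMMIT_NODES)
print(f"Each Summit node must deliver at least ~{ratio:.1f}x a Titan node")
```

In other words, with roughly 5.5 times fewer nodes, each Summit node has to do the work of more than two dozen Titan nodes to hit the stated system target.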

The DGX-1 is a mini-version of sorts, with eight P100 GPUs and an NVLink interconnect. Buck says NVIDIA is looking to CENATE for “insight into scalability with a strong node architecture and to help us define where the bottlenecks are in power and these workloads so we can better optimize.” There’s no shortage of questions. “Maybe we should be focusing more on 32-bit floating point and not 64-bit floating point for some of these workloads. Also programmability is a challenge. Hoisie and PNNL are users of PGI and OpenACC and may have ideas on how we can improve programmability and compiler ability.”

Power consumption, of course, is a major worry in the race to exascale and an area that CENATE, with its unique measurement abilities, can help address. “We are in the single gigaflop per watt era now with these strong node supercomputers,” says Buck. “We should get into the double digit category relatively soon and [to meet] the goals for exascale we’ve got to get upwards of 25 Gflops per watt.” Buck is interested to see what new ideas CENATE might offer.
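To see why the efficiency figures Buck cites matter, it helps to translate them into the power draw of a full exaflop machine. The sketch below is back-of-the-envelope arithmetic only: it assumes a sustained 10^18 floating-point operations per second and ignores cooling, storage, and other facility overhead. The function name is hypothetical.

```python
EXAFLOP = 1e18  # floating-point operations per second


def system_power_megawatts(flops, gflops_per_watt):
    """Power needed to sustain `flops` at a given energy efficiency."""
    watts = flops / (gflops_per_watt * 1e9)
    return watts / 1e6


# Single-digit, double-digit, and the 25 Gflops/W figure from the quote.
for efficiency in (1, 10, 25):
    megawatts = system_power_megawatts(EXAFLOP, efficiency)
    print(f"{efficiency:>2} Gflops/W -> {megawatts:,.0f} MW for one exaflop")
```

At 1 Gflops per watt an exaflop system would need about a gigawatt; at 25 Gflops per watt the same system needs about 40 MW.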

Besides learning more about overall acceleration, Hoisie says understanding and assessing the DGX-1’s machine learning capability is an important objective.

“We have developed here, not part of CENATE but part of PNNL, very important scalable algorithms for machine learning. Those libraries exist today and they are open source and we want, frankly, to assess them on the DGX-1. NVIDIA represents this as a machine learning box, and we believe that there is some truth in that, but you know as researchers in CENATE we are going to ask the question, ‘OK let’s quantify that. What does it mean for a box to be a machine learning box?’” he says.

“Part of that is looking at how it compares to others, how does DGX-1 compare to Knights Landing [systems] for example. Again these DGX-1 and KNL systems are offerings you can go and buy now, or they are very close to being in that stage (the sweet spot for CENATE). So we are going to look not only at where DGX-1 may go in the future, where Pascal may go in the future, and what would that do to our workloads, but also how machine learning performs today on the DGX-1 and how it compares with other top-of-the-line systems,” Hoisie says. “We are also looking at new algorithms for machine learning.”

Hoisie expects CENATE’s modeling and simulation capabilities will be valuable in this portion of the DGX-1 work. “If we measure something on a current machine and we validate and calibrate a model, we get enough confidence to predict what the performance of a different algorithm is, sometimes drastically different, sometimes only marginally different.”
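The calibrate-then-predict workflow Hoisie describes can be illustrated with a deliberately simple sketch. Nothing here is CENATE’s actual model: it assumes runtime is a linear function of floating-point work and memory traffic, fits the two coefficients by least squares, and then predicts the runtime of a different workload. The “measurements” are synthetic placeholders generated from assumed coefficients, not data from any real machine, and all names are hypothetical.

```python
def fit_two_term_model(samples):
    """Least-squares fit of t = a*gflop + b*gbyte (2x2 normal equations).

    samples: iterable of (gflop, gbyte, seconds) tuples.
    Returns (a, b) in seconds per Gflop and seconds per GB.
    """
    sff = sum(f * f for f, _, _ in samples)
    sfb = sum(f * b for f, b, _ in samples)
    sbb = sum(b * b for _, b, _ in samples)
    sft = sum(f * t for f, _, t in samples)
    sbt = sum(b * t for _, b, t in samples)
    det = sff * sbb - sfb * sfb
    if det == 0:
        raise ValueError("samples do not separate compute from memory cost")
    a = (sft * sbb - sfb * sbt) / det
    b = (sff * sbt - sfb * sft) / det
    return a, b


def predict_seconds(model, gflop, gbyte):
    """Apply the calibrated model to a workload that was never measured."""
    a, b = model
    return a * gflop + b * gbyte


# Synthetic "measurements": assumed 0.001 s/Gflop and 0.002 s/GB (placeholders).
TRUE_A, TRUE_B = 0.001, 0.002
workloads = [(100, 10), (200, 80), (50, 200)]  # (Gflop, GB) per kernel
samples = [(f, b, TRUE_A * f + TRUE_B * b) for f, b in workloads]

model = fit_two_term_model(samples)
# A hypothetical alternative algorithm: more arithmetic, less memory traffic.
predicted = predict_seconds(model, gflop=400, gbyte=20)
print(f"calibrated model: {model}, predicted runtime: {predicted:.3f} s")
```

A real model would be validated against held-out measurements before being trusted for what-if questions, which is the step the instrumentation work is meant to support.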

Yet a third project with the DGX-1 is work by distinguished PNNL researcher Ruby Leung and her team with portions of their climate modeling code. In particular, says Hoisie, they are looking at portions of the code already running on GPUs and are benchmarking the code’s performance on the DGX-1: “We are going to be able to say the DGX-1 is this much faster or it is not or whatever the case may be and look at what needs to be done to improve the performance as the specs of the machine allow.” Leung is the chief scientist of the Department of Energy’s Accelerated Climate Modeling for Energy (ACME) project.

There’s obviously lots going on inside CENATE. One important mission element is diffusing the knowledge it gleans into the broader HPC community. Given all of the IP involved and the center’s capacity to identify shortcomings as well as strengths, sharing information is tricky.

“We would like the researchers from all of the national laboratories and from academia to have the opportunity to access these resources and we are very much committed to that; however it’s not a simple exercise. Vendors are legitimately concerned about cross-pollination of ideas. We at the national laboratory are equipped to deal with that but academia is less so,” says Hoisie. As a general rule, all of the national labs have NDAs “with all the vendors that are in the HPC orbit.”

For good or ill, Hoisie says, “If we saw something wrong, we wouldn’t go and publish a paper on that attempting to talk down that product; instead we would point it out for the benefit of the vendor and for ourselves the ways in which that particular architecture or architectural issue can be improved.”

Part of the effort to share learnings will occur at regular CENATE workshops. “We are planning to have the first CENATE symposium in early spring next year. We want it to have enough information [available] so we can discuss ideas with the users, [we] want to have systems all set up, machines on the floor that people can access, both locally and remotely, and then we are going to organize that. It is something very important for us to do.” The frequency of the meetings hasn’t yet been determined.

Clearly CENATE does have lofty goals and its list of projects is growing. That said, Hoisie is quick to emphasize CENATE has “no interest in hoarding technology,” wanting instead to use the resources it has in a focused way that produces more than just incremental advances. One early-stage CENATE project is development of a scalability test capability that combines optical and electronic technology for the network.

“Imagine that you have, for example, an InfiniBand network that connects a cluster of nodes – these are yet to be determined – and the network is also comprised of optical technology that allows you to basically re-cable the machine in seconds or less without literally having to move any cable. This allows us to create enclaves within the system that isolate jobs from the rest of the activity on the system, which is a problem that plagues say many of these very large scale machines in which the nodes are dedicated to a job but not a network path.”

The design of this system for this project is far along, says Hoisie, and the project is now in the procurement process. “It’s generated a lot of interest within the vendor community – Penguin Computing, Cray, DDN, Mellanox, and optical switch vendor Calient. These vendors are all so interested in this concept and its potential for future commercial uses that in some cases, for example Mellanox, is donating the entire InfiniBand gear for it. That’s an indication of CENATE’s growing success,” says Hoisie.

As has been the case in the past few years, the national labs do not have individual SC booths, but are represented in the DOE booth. CENATE will have a presence there and Hoisie expects several participating vendors, perhaps NVIDIA for example, to also have CENATE materials or demos at their booths.