The programming language Fortran has been in existence since 1957, and a large percentage of software that runs on HPC systems worldwide is written, at least in part, in Fortran. Since it has been in existence for so long, and so many scientists and engineers learned it early in their careers and have utilized it extensively, much of this code is not up to modern standards of software development.

Although there is no doubt that most of that code works well and runs fast, as codes have grown more complex and involve more developers, errors can easily be introduced. As I’ll discuss in greater detail next week at SC16, these types of errors can be greatly reduced with a more disciplined approach to software development.

Fortunately, the Fortran language has continued to evolve and has incorporated many of the most successful paradigms of more modern languages such as C++ and Java, while still retaining its overall speed and general simplicity. For example, the 2003 standard, which greatly enhanced Fortran 95, introduced pointers to procedures, object-oriented programming, and better interoperability with C among other things.

The new object-oriented paradigm is particularly useful when refactoring existing Fortran code to make it more modular and robust. It is also fairly straightforward to implement!

It is fair to ask, “Why change something that’s not broken?” In some cases, it may not make any sense to refactor legacy code, but if the code is still being developed actively, and there are multiple contributors to the code base, refactoring with OOP standards makes a lot of sense. Perhaps the most important reason to refactor is to reduce code duplication. There is a tendency among many programmers to simply copy a subroutine or function that does 90% of what is needed and then modify the new routine to fit the rest. This can lead to lots of headaches if something in the original routine needs to be changed or added, and often leads to discrepancies that are hard to trace. Fortunately, using the classes, inheritance, and polymorphism now available in Fortran 2003, this dilemma can be almost completely avoided.

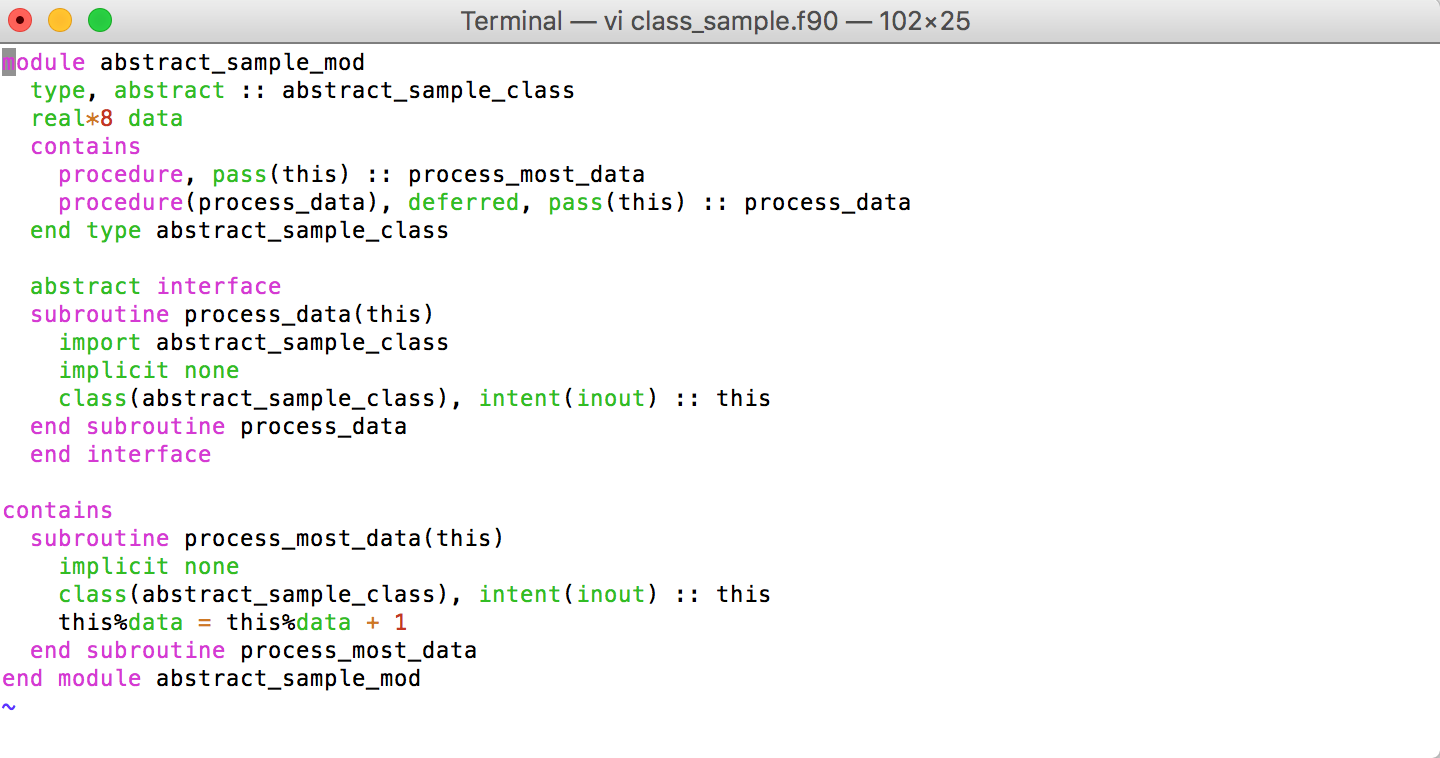

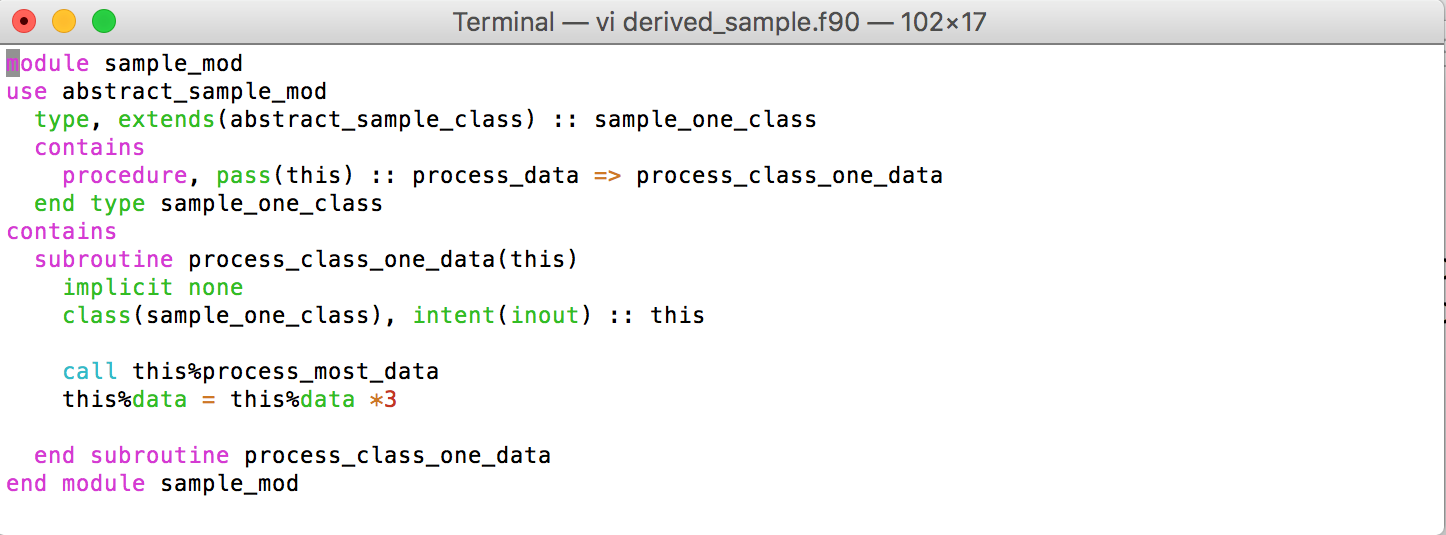

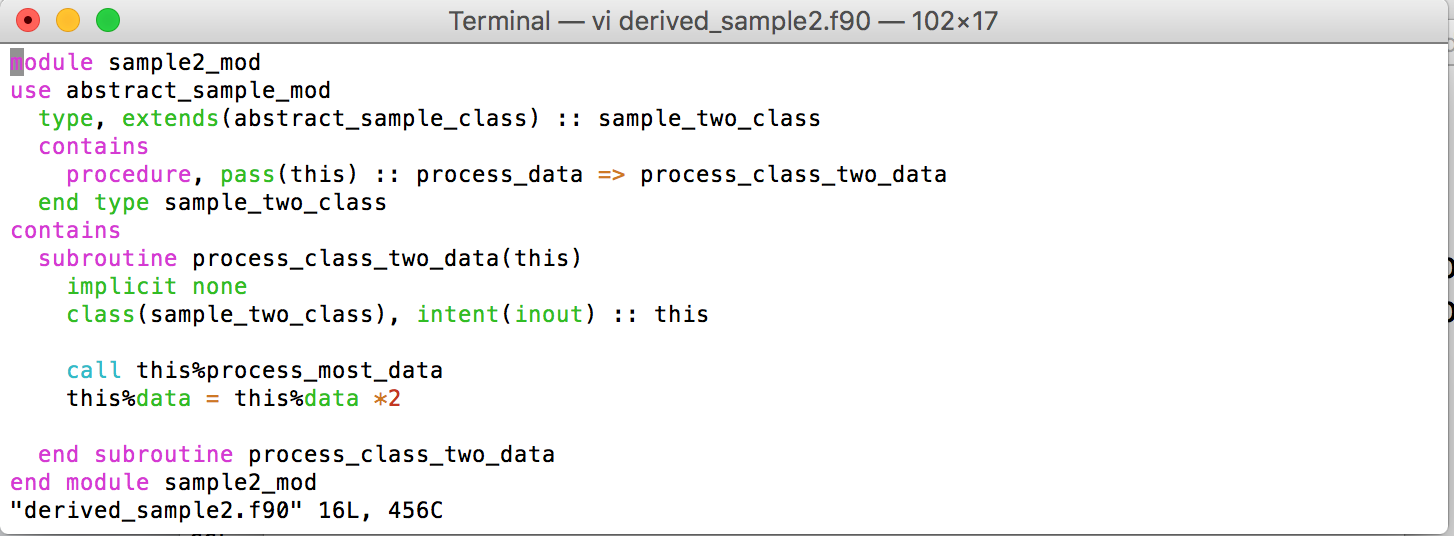

When two or more files contain very similar routines, it is usually possible to combine the shared functionality in a base class and then add derived classes that “finish off” the calculation in their own unique manner. With the copied code eliminated, the new classes all rely on the same core computation, which can be modified for every class at once. This structure also lends itself very well to unit testing, a key component of any modern software design. The figures below show a very simple example of this sort of combination using an abstract base class along with two derived classes.

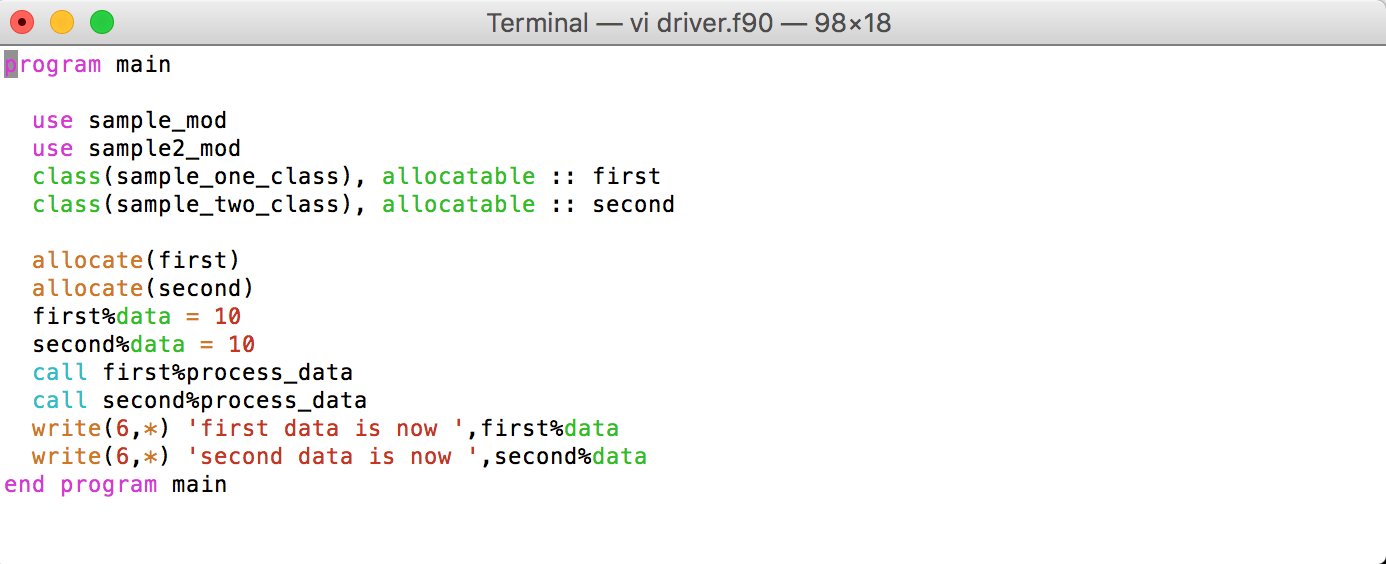

Both derived classes inherit the process_most_data procedure from the abstract base class, and both call that procedure directly in their own version of process_data. The “=>” in the binding that follows each extends declaration maps the process_data name required by the base class to the specific procedure that implements it in the derived class. Figure 3 below shows a sample driver routine that allocates an instance of each class, sets the initial data value for each, and then has each instance process the data according to its own version of the procedure.
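For readers without access to the figures, the arrangement can be sketched in a single file as follows. This is a minimal reconstruction under stated assumptions, not the code from the figures: only process_data, process_most_data, sample_one_class, and the instance names first and second come from the text, while every other name (sample_base, sample_two, process_iface, dp, and so on) is hypothetical. The shared core step of adding 1.0 is also an assumption, chosen because it is consistent with a starting value of 10.0 and the reported results.

```fortran
! Sketch only: names other than process_data, process_most_data, and
! sample_one_class are assumed, as is the "+ 1.0" core step.
module sample_base_class
   implicit none
   private
   public :: sample_base, dp

   integer, parameter :: dp = kind(1.0d0)

   type, abstract :: sample_base
      real(dp) :: data = 0.0_dp
   contains
      procedure :: process_most_data                      ! shared core, inherited as is
      procedure(process_iface), deferred :: process_data  ! each derived class must supply this
   end type sample_base

   abstract interface
      subroutine process_iface(this)
         import :: sample_base
         class(sample_base), intent(inout) :: this
      end subroutine process_iface
   end interface

contains

   subroutine process_most_data(this)
      class(sample_base), intent(inout) :: this
      this%data = this%data + 1.0_dp   ! assumed common calculation
   end subroutine process_most_data

end module sample_base_class

module sample_one_class
   use sample_base_class, only: sample_base, dp
   implicit none
   private
   public :: sample_one

   type, extends(sample_base) :: sample_one
   contains
      procedure :: process_data => process_data_one   ! binds the deferred name to a local routine
   end type sample_one

contains

   subroutine process_data_one(this)
      class(sample_one), intent(inout) :: this
      call this%process_most_data()     ! common core first
      this%data = this%data * 3.0_dp    ! then the class-specific step
   end subroutine process_data_one

end module sample_one_class

module sample_two_class
   use sample_base_class, only: sample_base, dp
   implicit none
   private
   public :: sample_two

   type, extends(sample_base) :: sample_two
   contains
      procedure :: process_data => process_data_two
   end type sample_two

contains

   subroutine process_data_two(this)
      class(sample_two), intent(inout) :: this
      call this%process_most_data()
      this%data = this%data * 2.0_dp
   end subroutine process_data_two

end module sample_two_class

program sample_driver
   use sample_one_class, only: sample_one
   use sample_two_class, only: sample_two
   implicit none
   type(sample_one), allocatable :: first
   type(sample_two), allocatable :: second

   allocate(first, second)
   first%data = 10.0d0
   second%data = 10.0d0
   call first%process_data()
   call second%process_data()
   print *, 'first data is now', first%data
   print *, 'second data is now', second%data
   deallocate(first, second)
end program sample_driver
```

Saved under a hypothetical name such as sample.f90, this should build with any Fortran 2003 compiler, for example `gfortran sample.f90 -o sample`. In a real code base each module would more likely live in its own file so that the derived classes can be built and tested separately.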

When compiled and run, the output looks as follows:

first data is now 33.000000000000000 second data is now 22.000000000000000

Both instances (first and second) start with data equal to 10.0 when process_data is called. Each then runs through the common core functionality provided by the abstract base class and performs its own individual calculation: multiplication by 3.0 for first, and multiplication by 2.0 for second.

While this is clearly a trivial example of combining common calculations into a single procedure, it provides a template for doing so in an object-oriented manner using Fortran. Because abstract classes cannot be instantiated, the use of an abstract base class in this instance also ensures that no future developer will accidentally try to use it rather than a fully defined derived class.

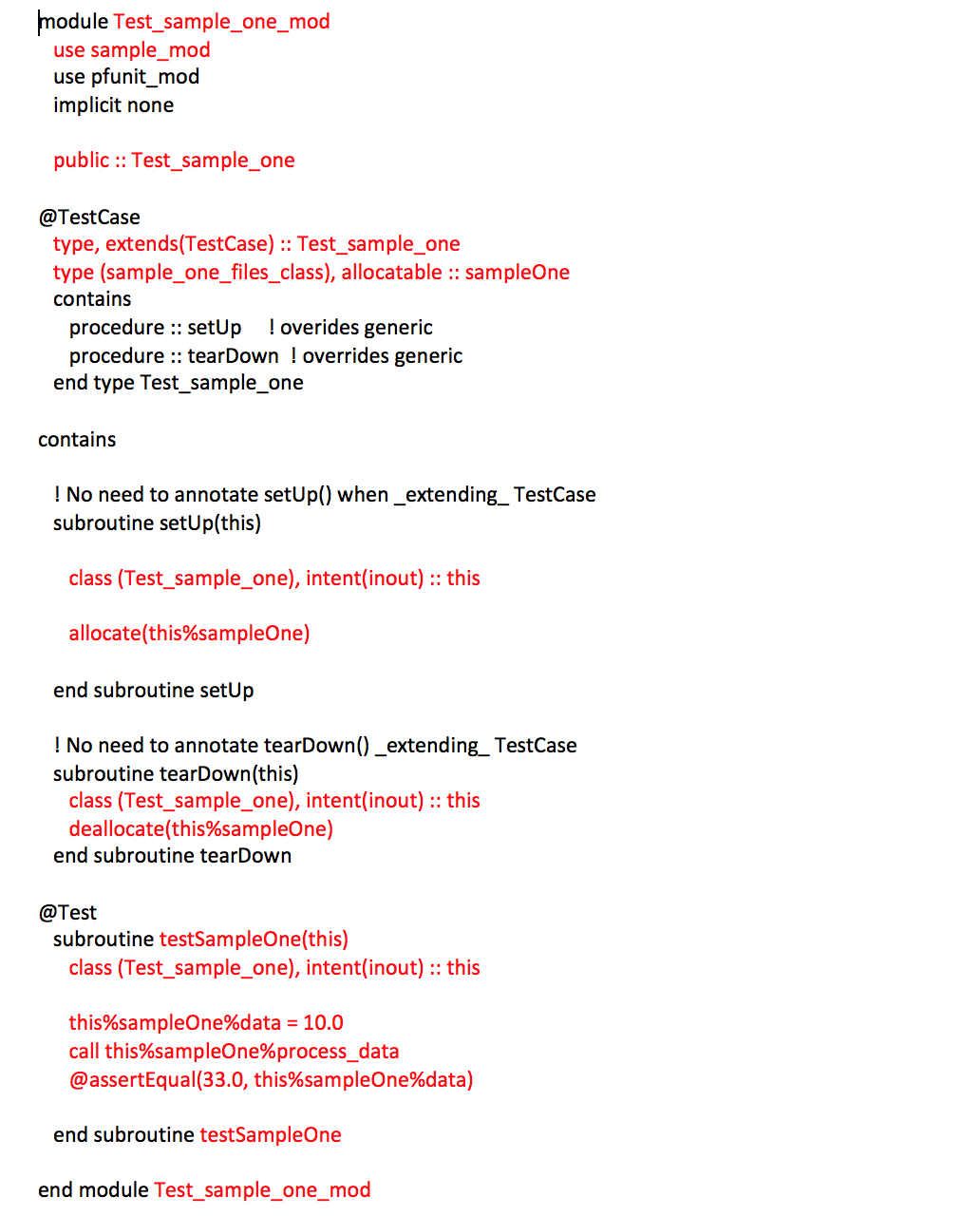

Extending this idea even further, unit tests can now be added to these new classes. Using pFUnit, an open-source unit-testing framework for Fortran, a unit test for sample_one_class would look something like Figure 4.

In the sample unit test shown in Figure 4, most of the text is boilerplate; only the text highlighted in red is tailored to the sample class. The class is included at the top of the file, declared under @TestCase, allocated in setup, and deallocated in tearDown, all with very simple additions to the boilerplate. The actual test takes place in testSampleOne, where the process_data procedure is called after starting with an initial data value of 10.0. The AssertEqual command checks that the value of data matches 33.0, as it should once process_data is complete. This sort of test runs extremely quickly and provides an early warning if the base class is changed without all derived classes being updated appropriately.
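A test along the lines described above might look roughly like the following. This is a hedged sketch, not the contents of Figure 4: it is written in the annotated .pf form that pFUnit's preprocessor turns into ordinary Fortran, so it will not compile on its own, and it follows pFUnit 3 conventions (`use pfunit_mod`; pFUnit 4 uses `use funit` instead). The type name sample_one, the fixture name, and the component name obj are assumptions. The test also presumes that sample_one_class exposes a data component and a process_data binding, and that the shared step plus the class-specific step turn 10.0 into 33.0.

```fortran
! test_sample_one.pf (hypothetical file name): input for the pFUnit preprocessor
module test_sample_one
   use pfunit_mod            ! pFUnit 3.x; assumed version
   use sample_one_class      ! module under test; type name sample_one is assumed
   implicit none

   @TestCase
   type, extends(TestCase) :: test_sample_one_fixture
      type(sample_one), allocatable :: obj
   contains
      procedure :: setUp
      procedure :: tearDown
   end type test_sample_one_fixture

contains

   ! Runs before each test: create a fresh instance
   subroutine setUp(this)
      class(test_sample_one_fixture), intent(inout) :: this
      allocate(this%obj)
   end subroutine setUp

   ! Runs after each test: release the instance
   subroutine tearDown(this)
      class(test_sample_one_fixture), intent(inout) :: this
      deallocate(this%obj)
   end subroutine tearDown

   @Test
   subroutine testSampleOne(this)
      class(test_sample_one_fixture), intent(inout) :: this
      this%obj%data = 10.0d0
      call this%obj%process_data()
      @assertEqual(33.0d0, this%obj%data)
   end subroutine testSampleOne

end module test_sample_one
```

A matching test for the second derived class would differ only in the type under test and the expected value, which is what makes this kind of coverage cheap to extend as more derived classes are added.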

I’ll be presenting on this topic at SC16 on Wednesday, Nov. 16 at 4 p.m., and would be happy to answer your questions. Please stop by!

About the Author:

Mark Potts, Ph.D., is a Senior Computational Scientist at RedLine Performance Solutions, LLC. Dr. Potts has over 20 years of software development experience, including more than 15 years of work in research and application development using HPC systems. He joined RedLine Performance Solutions in 2015 and has since been working with NOAA to accelerate and modernize their Gridpoint Statistical Interpolation (GSI) analysis code used to assimilate data into operational weather models.