In virtually every way, precision medicine (PM) is the poster child for the HPC Matters mantra and was a good choice for the Monday panel opening SC16 (HPC Impacts on Precision Medicine: Life’s Future–The Next Frontier in Healthcare). PM’s tantalizing promise is to touch all of us, not just writ large but individually – effectively fighting disease, enhancing health and lifestyle, extending life, and necessarily contributing to basic science along the way. All of this can only happen with HPC.

Moderated by Steve Conway of IDC, five distinguished panelists from varying disciplines painted a powerful picture of PM’s prospects and challenges. Rather than dwell down-in-the-weeds on HPC technology minutiae, the panel tackled the broad sweep of data-driven science, mixed workload infrastructure, close collaboration across domains and organizations, and the need to make use of incremental advances while still pursuing transformational change.

It was a wide-ranging conversation and difficult to summarize. Here are the panelists and a sound bite from each of their opening comments:

- Mitchell Cohen, director of surgery, Denver Health Medical Center; Professor, University of Colorado School of Medicine. “If you get shot, stabbed, or run over I am your guy – a good person not to need,” quipped Cohen, momentarily underplaying his equal strength in basic medical research.

- Warren Kibbe, director, Center for Biomedical Informatics and Information Technology (CBIIT); CIO, acting deputy director, National Cancer Institute. “[ACS] estimates there will be 1.7M new cases of cancer in the U.S. alone and 14M worldwide this year. Six hundred thousand will die. [However] the mortality rate in cancer has been declining [each] year since about 2000 so we are doing something right but it’s clear we need to understand more about basic biology,” said Kibbe.

- Steve Scott, chief technology officer, Cray Inc. “I’m the computer guy. We tend to talk about Pflops [and the like]. The real disconnect is between the computational science world and clinical scientists and physicians. We need [to] build solutions those people can use,” said Scott, who dove a bit deeper into the simulation and analytics technologies and the computer architecture required to deliver PM.

-

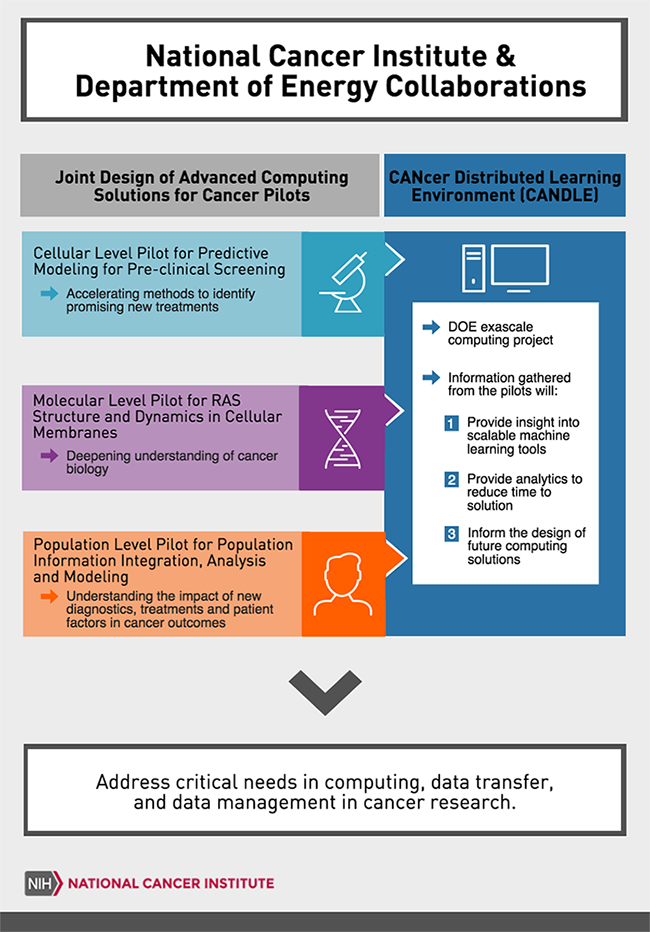

Fred Streitz, LLNL Fred Streitz, chief computational scientist and director of the High Performance Computing Innovation Center at Lawrence Livermore Lab.[i] Talking about a population scale data collection pilot that’s part of the CANcer Distributed Learning Environment (CANDLE), said Streitz: “[It’s] where rubber hits the roads. It’s focused on [establishing] an effective national cancer surveillance program that takes advantage of all of the data we currently have and are already collecting in different ways and states – [and will first use] natural language processing to makes sense of the data and regularize the data, and, then use machine learning to extract the information in a useful way.”

- Martha Head, senior director, The Noldor; acting head, Insights from Data at GlaxoSmithKline Pharmaceuticals. She tackled the lengthy and problematic drug R&D cycle (a decade) from hypothesis to therapy. “We have to go faster [and not] with the same processes and just rushing ever faster. We need transformation, a new approach that combines simulation HPC and data analytics with experiment – a new engineering paradigm that almost treats an experiment as a subroutine or a function in a larger algorithm that we are running in our drug discovery process,” said Head.

Setting the stage, Conway emphasized that zeroing in on the most appropriate care and preventative treatment is also a financial imperative. The U.S. spent about $3 trillion on healthcare in 2014 and is headed to $4.8 trillion in 2021. Other countries do a bit better, with healthcare spending claiming 9-11 percent of GDP, yet that too is alarming.

PM, he said, will not only help save lives but also curb costs. It’s also becoming an important HPC market, so much so that IDC is tracking dozens of healthcare initiatives around the world and will add PM as a new market segment it tracks within commercial analytics. Clearly the stakes are high.

Kibbe, a key player in NCI’s Moonshot program, is a powerful advocate of HPC tools’ capacity to advance medicine through database creation, machine learning-based techniques, and a variety of simulations. That said, he cautioned, the biggest hurdle remains unknown biology: we simply do not know enough basic biology. This point was echoed by a few others. Basic research as part of PM overall will help.

The Cancer Moonshot, he noted, has been carefully road-mapping what it thinks can be impactful and achievable. CANDLE is one of those efforts. He noted that a blue ribbon NCI panel has spelled out clear objectives in a publicly available report. Its directional findings are laid out in a set of working group reports:

- Tumor Evolution and Progression Working Group Report

- Clinical Trials Working Group Report

- Precision Prevention and Early Detection Working Group Report

- Pediatric Cancer Working Group Report

- Enhanced Data Sharing Working Group Report

- Cancer Immunology Working Group Report

- Implementation Science Working Group Report

These and other efforts, driven by HPC, will pay off over time. One example is the creation of the NCI Genomic Data Commons, intended to provide the cancer research community with a unified data repository that enables data sharing across cancer genomic studies in support of precision medicine. “I want to give a shout out to Bob Grossman and his team at the University of Chicago,” said Kibbe of the project. The idea is “to help take data out of existing repositories and get it into the cloud so people can use cloud computing more effectively.”

Kibbe offered a realistically measured view of the Cancer Moonshot’s goal. It will make significant, meaningful progress, but it’s a long road towards whatever it is that actually constitutes a cure for all cancers. Head of GSK agreed and emphasized the value of public-private collaborations like the one GSK has with NCI.

As described by NCI, “Department of Energy, NCI, and GlaxoSmithKline are forming a new public–private partnership designed to harness high-performance computing and diverse biological data to accelerate the drug discovery process and bring new cancer therapies from target to first in human trials in less than a year. This partnership will bring together scientists from multiple disciplines to advance our understanding of cancer by finding patterns in vast and complex datasets to accelerate the development of new cancer therapies.”

Given the wealth of genomics data and the relative paucity of mechanistic information, pattern recognition and database analysis have been primary tools in pursuing PM. Recent advances in these data-driven science techniques and their increasing use on HPC infrastructure are well aligned with PM purposes, said Scott. The emerging HPC system model, which emphasizes memory and data movement as well as intense computation (lots of flops), is a good fit for PM.

“Computational demands and algorithm complexity are pushing us to build larger and larger machines, like Cori at NERSC, but they are fortunately pushing us in the direction of broader HPC. Computation tends to get all of the attention, [but] the real way to build a SC today depends upon the memory systems and interconnect,” said Scott. A mixed workload environment is what’s needed and also where supercomputing is trending.

“On the software side common HPC techniques like simulation done on molecular dynamics or finite element analysis or image processing can be brought to bear fairly successfully on PM problems [while similarly] areas like large scale graph analytics and machine learning are also critical.”

Streitz reviewed the directions of CANDLE’s three pilot projects (see figure below), one of which seeks to unravel the role of RAS mutations, present in about 30 percent of cancers including some of the toughest, by zeroing in on how RAS behaves on the cell membrane. RAS is involved in growth, and when it gets stuck in the on position, cancer can be the result.

Just today, it was announced that NVIDIA will join the project. Here’s an excerpt from the release:

“AI will be essential to achieve the objectives of the Cancer Moonshot,” said Rick Stevens, associate laboratory director for Computing, Environment and Life Sciences at Argonne National Laboratory. “New computing architectures have accelerated the training of neural networks by 50 times in just three years, and we expect more dramatic gains ahead.”

“GPU deep learning has given us a new tool to tackle grand challenges that have, up to now, been too complex for even the most powerful supercomputers,” said Jen-Hsun Huang, founder and chief executive officer, NVIDIA.

“Together with the Department of Energy and the National Cancer Institute, we are creating an AI supercomputing platform for cancer research. This ambitious collaboration is a giant leap in accelerating one of our nation’s greatest undertakings, the fight against cancer.” (See the full release: http://nvidianews.nvidia.com/news/nvidia-teams-with-national-cancer-institute-u-s-department-of-energy-to-create-ai-platform-for-accelerating-cancer-research )

One of the most interesting observations came from Cohen. To some extent, PM is trying to capture the knowledge experienced clinicians already have, codify it, and make it available. Think of the time required to train a complicated neural network, matching answers to desired outcomes based on experience, as akin to clinical training and experience. Some clinicians still push back against this idea, calling it autonomous medicine that will claim or erode their jobs, said Cohen.

This was clearly not his view. The objection is also less about how PM can contribute to progress and more about its implementation. Still, it suggests that creating physician-friendly tools and changing physician attitudes are at least part of the challenge.

Capturing the full scope of the SC16 panel is a tall order. PM is a broad undertaking with many components. The NCI Cancer Moonshot is making progress daily, as demonstrated by today’s NVIDIA announcement. Precision medicine, which depends critically on HPC, matters.

[i] Streitz filled in for Dimitri Kusnezov, Chief Scientist & Senior Advisor to the Secretary, U.S. Department of Energy, National Nuclear Security Administration, who was stuck in San Francisco because of travel problems.