Paul Messina, director of the U.S. Exascale Computing Project, provided a wide-ranging review of ECP’s evolving plans last week at the HPC User Forum in Santa Fe, NM. The biggest change, of course, is ECP’s accelerated timetable, with delivery of the first exascale machine now scheduled for 2021. While much of the material Messina covered wasn’t new, there were a few fresh details on the long-awaited Path Forward hardware contracts and on progress to date on other ECP fronts.

“We have selected six vendors to be primes, and in some cases they have had other vendors involved in their R&D requirements. [We have also] been working on detailed statements of work because the dollar amounts are pretty hefty, [and] the approval process [reaches] high up in the Department of Energy,” said Messina of the Path Forward awards. Five of the contracts are signed and the sixth is not far off. His slide even listed COB April 14, 2017, as the target date for the announcement. “It would have been great to announce them at this HPC User Forum but it was not meant to be.” He said the awards will be made public soon.

The duration of ECP has been shortened from ten years to seven, although there’s a 12-month schedule contingency built in to accommodate changes, said Messina. Interestingly, during the Q&A, Messina was asked about U.S. willingness to include ‘individuals’ not based in the U.S. in the project. The question was a little ambiguous, as it wasn’t clear whether ‘individuals’ was intended to encompass foreign interests broadly, but Messina answered directly: “[For] people who are based outside the U.S. I would say the policy is they are not included.”

Presented here are a handful of Messina’s slides updating the U.S. march toward exascale computing (most of the talk dwelled on software-related challenges), but first it’s worth stealing a few Hyperion Research (formerly IDC) observations on the global exascale race that were also presented during the forum. The rise of national and regional competitive zeal in HPC and the race to exascale is palpable, as evidenced by Messina’s comment on U.S. policy.

China is currently ahead in the race to stand up an exascale machine first, according to Hyperion. That’s perhaps not surprising given its recent dominance of the Top500 list. Japan is furthest along in settling on a design, key components, and a contractor. Here are two Hyperion slides summing up the world race. (See the HPCwire article “Hyperion (IDC) Paints a Bullish Picture of HPC Future” for a full rundown of HPC trends.)

Messina emphasized that the three-year Path Forward R&D projects are intended to deliver improvements at the node level, in memory, at the system level, in energy consumption, and in programmability. Moreover, ECP is looking past the initial exascale systems. “The idea is that after three years hopefully the successful things will become part of [vendors’] product lines and result in better HPC systems for them, not just for the initial exascale systems,” he said. The RFPs for the exascale systems themselves will come from the labs doing the buying.

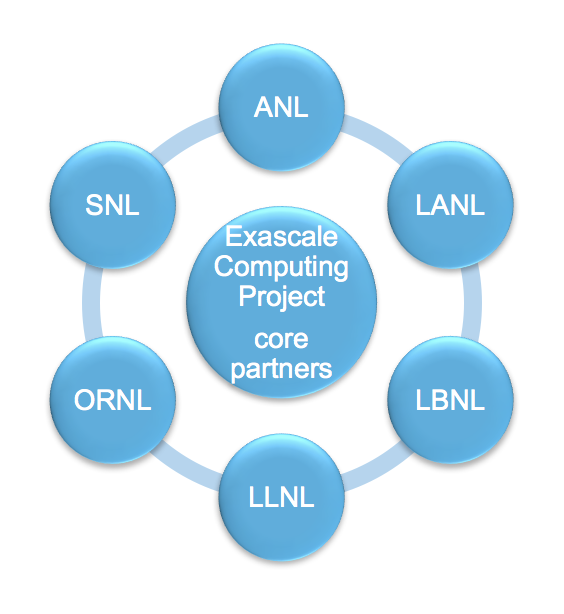

The ECP is a collaborative effort of two U.S. Department of Energy organizations, the Office of Science (DOE-SC) and the National Nuclear Security Administration (NNSA). Sixteen of the seventeen national labs are participating in ECP, and the six that have traditionally fielded leadership HPC systems (Argonne, Oak Ridge, Lawrence Livermore, Sandia, Los Alamos, and Lawrence Berkeley National Laboratories) form the core partnership and have signed a memorandum of agreement on cooperation defining roles and responsibilities.

Under the new schedule, “We will have an initial exascale system delivered in 2021 ready to go into production in 2022 and which will be based on advanced architecture which means really that we are open to something that is not necessarily a direct evolution of the systems that are currently installed at the NL facilities,” explained Messina.

“Then the ‘Capable Exascale’ systems, which will benefit from the R&D we do in the project, we currently expect them to be delivered in 2022 and available in 2023. Again these are at the facilities that normally get systems. Lately it’s been a collaboration of Argonne, Oak Ridge and Livermore, that roughly every four years establish new systems. Then [Lawrence] Berkeley, Los Alamos and Sandia, which during the in between years installs systems.” Messina again emphasized, “It is the facilities that will be buying the systems. [The ECP will] be doing the R&D to give them something that is hopefully worth buying.”

Four key technical challenges are being addressed by the ECP to deliver capable exascale computing:

- Parallelism a thousand-fold greater than that of today’s systems.

- Memory and storage efficiencies consistent with increased computational rates and data movement requirements.

- Reliability that enables system adaptation and recovery from faults in much more complex system components and designs.

- Energy efficiency beyond current industry roadmaps, whose projected power consumption would be prohibitively expensive at this scale.

Another important ECP goal, said Messina, is to kick the development of U.S. advanced computing onto a new, higher trajectory (see slide below).

From the beginning the exascale project has steered clear of FLOPS and Linpack as the best measure of success. That theme has only grown stronger with attention focused on defining success as performance on useful applications and the ability to tackle problems that are intractable on today’s petaflops machines.

“We think of 50x that performance on applications [as the exascale measure of merit], unfortunately there’s a kink in this,” said Messina. “The kink is people won’t be running today’s jobs on these exascale systems. We want exascale systems to do things we can’t do today and we need to figure out a way to quantify that. In some cases it will be relatively easy, just achieving much greater resolutions, but in many cases it will be enabling additional physics to more faithfully represent the phenomena. We want to focus on measuring capable exascale based on full applications tackling real problems compared to what they can do today.”

“This list is a bit of an eye chart (above) and represents the 26 applications that are currently supported by the ECP. Each of them, when selected, specified a challenge problem. For example, it wasn’t just a matter of saying they’ll do better chemistry but here’s a specific challenge that we expect to be able to tackle when the first exascale systems are available,” said Messina.

One example is GAMESS (General Atomic and Molecular Electronic Structure System), a widely used ab initio quantum chemistry package. The team working on GAMESS has spelled out specific problems to be attacked. “It’s not only very good code but they have ambitious goals; if we can help that team achieve its exascale goals for the GAMESS community code, the leverage is huge because it has all of those users. Now not all of them need exascale to do their work but those that do will be able to do it quickly and more easily,” said Messina.

GAMESS is also a good example of a traditional FLOPS-heavy numerical simulation application. Messina reviewed four other examples (earthquake simulation, wind turbine applications, energy grid management optimization, and precision medicine). “The last one that I’ll mention is a collaboration between DOE and NIH and NCI on cancer, as you might imagine,” said Messina. “It is extremely important for society and also quite different from traditional partial differential equation solving because this one will rely on deep learning and use of huge amounts of data: being able to use millions of patient records on types of cancer and the treatments they received and what the outcome was, as well as millions of potential cures.”

Data analytics is a big part of these kinds of precision medicine applications, said Messina. When pressed on whether the effort to combine traditional simulation with deep learning would inevitably lead to diverging architectures, Messina argued the contrary: “One of our second-level goals is to try to promote convergence as opposed to divergence. I don’t know that we’ll be successful in that but that’s what we are hoping. [We want] to understand that better because we don’t have a good understanding of deep learning and data analytics.”

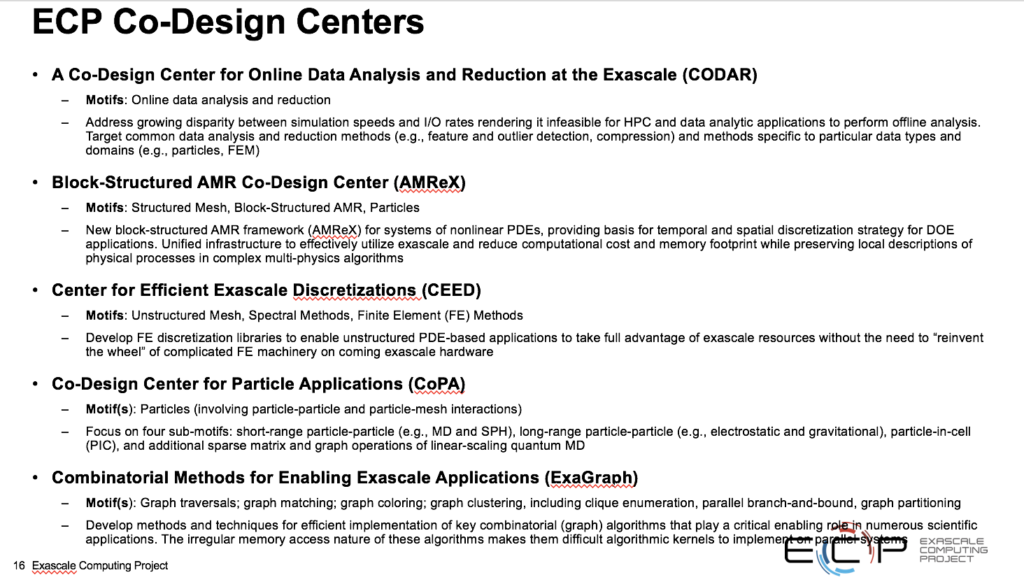

Co-design has also been a priority and has received a fair amount of attention. Doug Kothe, ECP director of applications development, is spearheading those efforts. Currently there are five co-design centers, including a new one focused on graph analytics. All of the teams have firm milestones, including some shared milestones with other ECP efforts to ensure productive cooperation.

Messina noted, “Although we will be measuring our success based on whole applications, in the meantime you can’t always deal with the whole application, so we have proxies and subprojects. The vendors need this and we will need it to guide our software development.”

Ensuring resilience is a major challenge given the complexity of exascale systems. “On average the user should notice a fault on the order of once a week. There may be faults every hour but the user shouldn’t see them more than once a week,” said Messina. This will require, among other things, a robust, capable software stack, “otherwise it’s a special purpose system or a system that is very difficult to use.”

Messina showed a ‘notional’ software stack slide (below). “Resilience and workflows are on the side because we believe they influence all aspects of the software stack. In a number of areas we are investing in several approaches to accomplish the same functionality. At some point we will narrow things down. At the same time we feel we probably have some gaps, especially in the data issues, and are in the process of doing a gap analysis for our software stack,” he said.

Clearly it’s a complex project with many parts. Integration of all these development activities is an ongoing challenge.

“You have to work together so the individual teams have shared milestones. Here’s one that I selected simply because it was easy to describe. By the beginning of next calendar year [we should have] new prototype APIs to have coordination between MPI and OpenMP runtimes because this is an issue now in governing the threads and messages when you use both programming models, which a fair number of applications do. How is this going to work? So the software team doing this will interact with a number of application development teams to make sure we understand their runtime requirements. We can’t wait until we have the exascale systems to sort things out.”

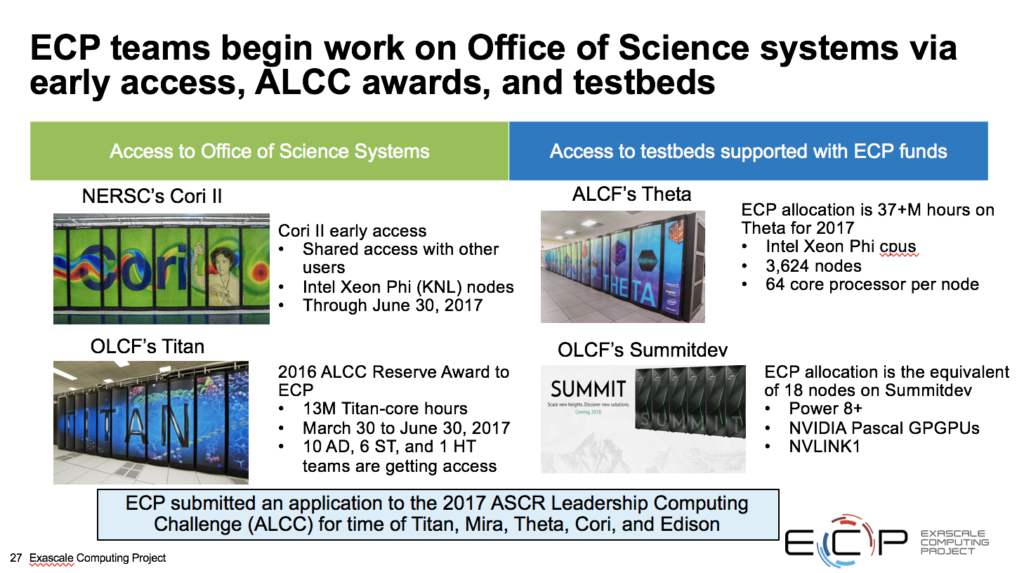

“We also want to be able to measure how effective the new ideas are likely to be and so we have also launched a design space evaluation effort,” said Messina. ECP has actively sought early access to several existing resources for use during development.

These are just a few of the topics Messina touched on. Workforce training is another key issue that ECP is tackling. It is also increasing its communications and outreach efforts, as shown below. There is, of course, an ECP website, and ECP recently launched a newsletter expected to be published roughly monthly.

With the Path Forward awards coming soon, several working group meetings already held, and a newly solidified plan, the U.S. effort to reach exascale computing is starting to feel concrete. It will be interesting to see how well ECP hits its various milestones. The last slide below depicts the overall program. Hyperion indicated it will soon post all of Messina’s slides (and other presentations from the HPC User Forum) on the HPC User Forum site.