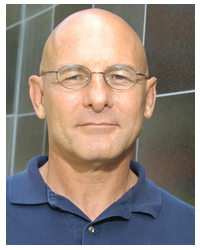

Retirement, it seems, isn’t for everyone. Indeed, you may already know that David Patterson joined Google’s Tensor Processing Unit (TPU) development effort after a 40-year career in academia at the University of California at Berkeley. On Saturday, CNBC posted an engaging account of Patterson’s leap into his next career.

“Four years ago they (Google) had this worry and it went to the top of the corporation,” said Patterson, 69, while sporting a T-shirt for Google Brain, the company’s research group. The fear was that if every Android user had three minutes of conversation translated a day using Google’s machine learning technology, “we’d have to double our data centers,” Patterson is quoted as saying in the article* by Ari Levy, who covered a talk Patterson gave last week on roughly the one-year anniversary of his UC Berkeley retirement.

The short piece is a fun ride tracing the indefatigable Patterson’s path forward. It’s also an interesting reminder of shifting dynamics in computer technology development. Today’s big cloud providers – Google, Amazon, and Microsoft, to name three – are far from mere technology consumers; they are in fact leading-edge developers. It’s no overstatement to say that the big system and semiconductor suppliers take many of their cues from hyperscalers’ efforts and directions.

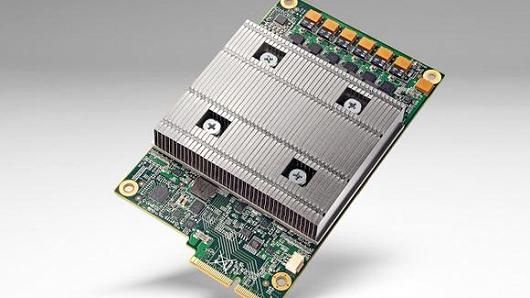

Patterson is one of the lead authors on a report from Google last month on the TPU’s performance. The report concludes that the TPU runs 15 to 30 times faster and is 30 to 80 times more efficient than contemporary processors from Intel and Nvidia. The paper, written by 75 engineers, will be delivered next month at the International Symposium on Computer Architecture in Toronto.

According to the article, Google says the TPU is being tested broadly across the company. It’s used for every search query as well as for improving maps and navigation, and it was the technology used to power DeepMind’s AlphaGo victory over Go legend Lee Sedol last year in Seoul.

Levy writes “It’s still very early days for the TPU. Thus far, the processor has proven effective at what’s called inference, or the second phase of deep learning. The first phase is training and — as far as we know — for that Google still counts on off-the-shelf processors.”

* Link to CNBC article (Meet the 69-year-old professor who left retirement to help lead one of Google’s most crucial projects): http://www.cnbc.com/2017/05/06/googles-tpu-for-machine-learning-being-evangelized-by-david-patterson.html