Higher Return on Investment of Up to 250 Percent Demonstrated on Various High-Performance Computing Applications; InfiniBand Delivers Up to 55 Percent Higher Performance vs. Omni-Path Using Half the Infrastructure

The latest revolution in high-performance computing (HPC) is the move to a co-design architecture: a collaborative effort among industry thought leaders, academia, and manufacturers to reach Exascale performance by taking a holistic, system-level approach to fundamental performance improvements. Co-design architecture improves system efficiency and performance by creating synergies between hardware and software, as well as among the different hardware elements within the data center.

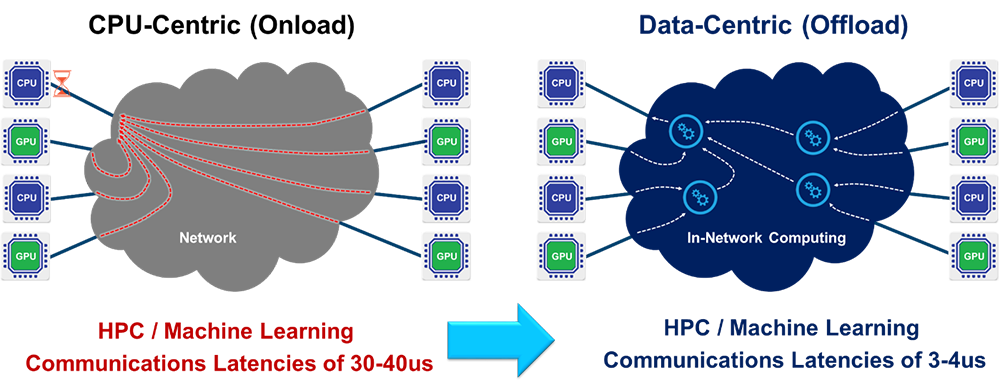

Industry-wide, it is recognized that the CPU has reached the limits of its scalability. This has created a need for the intelligent network to act as a “co-processor,” sharing responsibility for handling and accelerating application workloads. By placing computation for data-related algorithms on an intelligent network, it is possible to dramatically improve both data center and application performance, as well as scalability.

The new generation of smart interconnect solutions is based on a data-centric architecture that can offload all network functions from the CPU to the network and perform computation in transit, freeing up CPU cycles and thereby increasing system efficiency. With this architecture, the interconnect manages and executes more data algorithms within the network itself, so users can run algorithms on data as it moves through the system interconnect rather than waiting for it to reach the CPU. Smart interconnect solutions can now deliver both In-Network Computing and In-Network Memory, representing the industry’s most advanced approach to achieving performance and scalability in high-performance cluster systems.
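To make "computation in transit" more concrete, the Python sketch below models a sum reduction carried out by a tree of aggregating switches: each simulated switch adds the partial sums arriving on its ports and forwards a single value upward, so the result is complete when it reaches the root instead of after every raw value has reached one CPU. This is a conceptual model under stated assumptions, not a model of any specific Mellanox product or API; the `in_network_reduce` function and the switch radix of 4 are hypothetical.

```python
from typing import List, Tuple


def in_network_reduce(values: List[float], radix: int = 4) -> Tuple[float, int]:
    """Sum `values` the way a tree of aggregating switches would.

    Each level of the (hypothetical) tree groups up to `radix` partial sums,
    adds them, and forwards one value to the next level up.
    Returns (total, number_of_switch_levels).
    """
    if radix < 2:
        raise ValueError("radix must be at least 2")
    if not values:
        return 0.0, 0
    level = list(values)
    levels = 0
    while len(level) > 1:
        # One simulated switch per group of `radix` inputs at this level
        level = [sum(level[i:i + radix]) for i in range(0, len(level), radix)]
        levels += 1
    return level[0], levels


# 64 nodes each contribute their rank; the switches aggregate on the way up
total, levels = in_network_reduce([float(rank) for rank in range(64)], radix=4)
print(total, levels)  # 2016.0 3
```

With 64 contributing nodes and a radix of 4, the data is fully reduced after three switch levels (64 → 16 → 4 → 1), and no single endpoint ever has to receive all 64 values.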

Mellanox hardware-based acceleration technologies such as SHARP (Scalable Hierarchical Aggregation and Reduction Protocol) for offloading data reduction and data aggregation protocols, hardware-based MPI tag matching, and MPI rendezvous offload are just a few of the solutions that work together to offload a significant amount of inter-process communication-related computation, enabling data algorithm processing as the data moves.
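Hardware MPI tag matching offloads bookkeeping that an MPI library would otherwise perform on the CPU for every incoming message. The Python sketch below models the standard MPI matching rules in software, to show what that work consists of: an arriving message is compared, in posting order, against the outstanding receives on communicator, source, and tag (source and tag may be wildcards); if nothing matches, the message is queued as "unexpected" until a matching receive is posted. It illustrates the work being offloaded, not how the hardware performs it; the class and function names, and the `-1` values used for the wildcards, are illustrative assumptions rather than the internals of any MPI implementation.

```python
from collections import deque
from dataclasses import dataclass
from typing import Deque, Optional

# Illustrative stand-ins for MPI_ANY_SOURCE / MPI_ANY_TAG (real values are implementation-defined)
ANY_SOURCE = -1
ANY_TAG = -1


@dataclass
class Envelope:
    """Matching fields carried by an incoming message."""
    comm: int
    source: int
    tag: int
    payload: bytes = b""


@dataclass
class PostedRecv:
    """A receive posted by the application, possibly using wildcards."""
    comm: int
    source: int
    tag: int
    buffer: Optional[bytes] = None


def matches(recv: PostedRecv, msg: Envelope) -> bool:
    # Communicator must match exactly; source and tag may be wildcards
    return (recv.comm == msg.comm
            and recv.source in (ANY_SOURCE, msg.source)
            and recv.tag in (ANY_TAG, msg.tag))


class TagMatcher:
    def __init__(self) -> None:
        self.posted: Deque[PostedRecv] = deque()    # receives waiting for data
        self.unexpected: Deque[Envelope] = deque()  # data waiting for a receive

    def post_recv(self, recv: PostedRecv) -> bool:
        """Post a receive; return True if an already-arrived message satisfied it."""
        for i, msg in enumerate(self.unexpected):
            if matches(recv, msg):  # oldest matching message wins
                del self.unexpected[i]  # safe: we return before iterating again
                recv.buffer = msg.payload
                return True
        self.posted.append(recv)
        return False

    def on_arrival(self, msg: Envelope) -> Optional[PostedRecv]:
        """Deliver an incoming message to the first matching posted receive, if any."""
        for i, recv in enumerate(self.posted):
            if matches(recv, msg):
                del self.posted[i]
                recv.buffer = msg.payload
                return recv
        self.unexpected.append(msg)
        return None


m = TagMatcher()
m.on_arrival(Envelope(comm=0, source=3, tag=7, payload=b"early"))  # no receive yet: unexpected
r = PostedRecv(comm=0, source=ANY_SOURCE, tag=7)
print(m.post_recv(r), r.buffer)  # True b'early'
```

Every arrival and every posted receive triggers a linear search like these two loops; moving that search into the network adapter is what frees the CPU from it.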

The performance and scalability advantages of Mellanox interconnect solutions over Intel’s Omni-Path-based solutions have been demonstrated across various applications. Testing was conducted at different sites on production systems, comparing an InfiniBand EDR cluster to an Omni-Path-connected cluster. The InfiniBand cluster includes servers with dual-socket 16-core Intel Xeon E5-2697A v4 CPUs at 2.60 GHz. The Omni-Path cluster includes servers with dual-socket 18-core Intel Xeon E5-2697 v4 CPUs at 2.30 GHz. Although the CPU frequencies and core counts differ slightly, the scaling performance of the two clusters can still be meaningfully compared. As the following two cases demonstrate, InfiniBand offers dramatically higher performance and lower total cost of ownership.
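One rough way to check that the two node configurations are comparable is to multiply sockets, cores, and base clock for each. The sketch below does that arithmetic with the CPU figures given for the two clusters. It is a crude capacity figure under stated assumptions: it uses nominal base clocks only and ignores turbo frequencies, cache sizes, instructions per cycle, and memory bandwidth, so it is not a performance model. The `nominal_core_ghz` helper is hypothetical.

```python
def nominal_core_ghz(sockets: int, cores_per_socket: int, base_ghz: float) -> float:
    """Sockets x cores x base clock for one node: a crude capacity figure, not a benchmark."""
    return sockets * cores_per_socket * base_ghz


ib_node = nominal_core_ghz(sockets=2, cores_per_socket=16, base_ghz=2.60)   # InfiniBand cluster node
opa_node = nominal_core_ghz(sockets=2, cores_per_socket=18, base_ghz=2.30)  # Omni-Path cluster node

print(f"InfiniBand node: {ib_node:.1f} core-GHz")
print(f"Omni-Path node:  {opa_node:.1f} core-GHz")
print(f"Difference: {100 * (ib_node - opa_node) / opa_node:.1f}%")
```

The result is about 83.2 versus 82.8 core-GHz per node, a difference of under 1 percent: the higher clock of one configuration roughly offsets the extra cores of the other.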

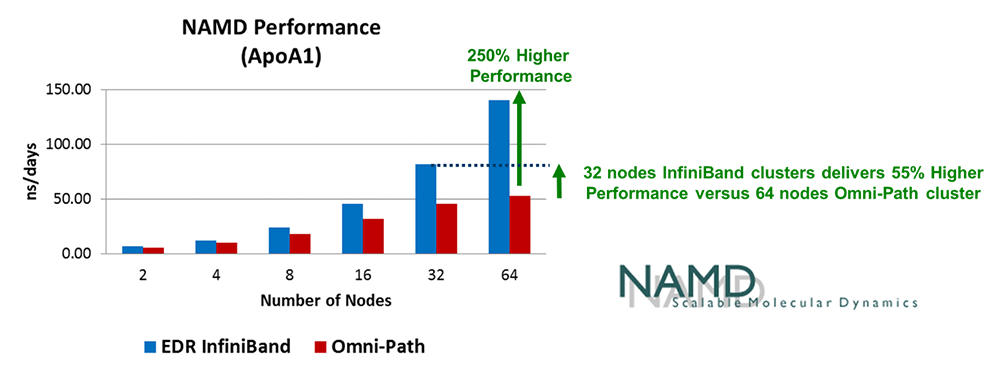

Case I: NAMD

NAMD is a molecular dynamics application for chemistry and chemical biology. Figure 1 below shows test results for the standard ApoA1 benchmark of NAMD. A 64-node InfiniBand cluster delivered 250 percent higher performance than a 64-node Omni-Path cluster. Furthermore, when the same benchmark was run on an InfiniBand cluster with half the number of servers (32 nodes), it still delivered 55 percent higher performance than the 64-node Omni-Path cluster.

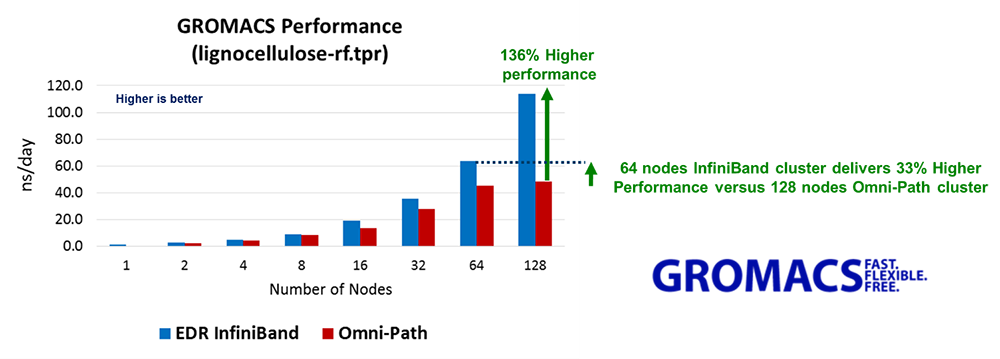

Case II: GROMACS

GROMACS is a molecular dynamics package used for simulations of proteins, lipids, and nucleic acids. Figure 2 below shows test results for an industry-standard benchmark simulation of lignocellulose. A 128-node InfiniBand cluster delivered 136 percent higher performance than a 128-node Omni-Path cluster. Furthermore, when the same benchmark was run on an InfiniBand cluster with half the number of servers (64 nodes), it still delivered 33 percent higher performance than the 128-node Omni-Path cluster.

Both applications require fast and efficient inter-process communication. InfiniBand’s ability to run a large portion of the MPI communication layer within the network greatly boosts the performance and scalability attainable from the HPC infrastructure. In both test cases, InfiniBand delivered higher performance than Omni-Path for the same-sized cluster job: 250 percent higher for NAMD and 136 percent higher for GROMACS. Equally important, in both cases InfiniBand delivered higher performance with only half the number of servers. For NAMD, a 32-node InfiniBand cluster delivered 55 percent higher performance than a 64-node Omni-Path cluster; for GROMACS, a 64-node InfiniBand cluster delivered 33 percent higher performance than a 128-node Omni-Path cluster.
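The "half the infrastructure" results can also be read per node. The sketch below converts each quoted "X percent higher performance" figure into a speedup ratio and then normalizes by node count. It assumes that "X percent higher" means 1 + X/100 times the Omni-Path result, and that the benchmark metric is one where higher is better; the `speedup` and `per_node_ratio` helpers are hypothetical.

```python
def speedup(percent_higher: float) -> float:
    """Convert an 'X percent higher performance' claim into a ratio (assumes 1 + X/100)."""
    return 1.0 + percent_higher / 100.0


def per_node_ratio(percent_higher: float, ib_nodes: int, opa_nodes: int) -> float:
    """Performance-per-node advantage of the InfiniBand run over the Omni-Path run."""
    return speedup(percent_higher) * opa_nodes / ib_nodes


# Figures quoted in the two test cases: (percent higher, InfiniBand nodes, Omni-Path nodes)
cases = {
    "NAMD, 64 vs 64 nodes": (250, 64, 64),
    "NAMD, 32 vs 64 nodes": (55, 32, 64),
    "GROMACS, 128 vs 128 nodes": (136, 128, 128),
    "GROMACS, 64 vs 128 nodes": (33, 64, 128),
}

for name, (pct, ib, opa) in cases.items():
    print(f"{name}: {speedup(pct):.2f}x job performance, "
          f"{per_node_ratio(pct, ib, opa):.2f}x per node")
```

Under these assumptions, the half-size InfiniBand runs correspond to about 3.10x (NAMD) and 2.66x (GROMACS) the Omni-Path performance per node. Normalizing by node count is only one simple reading of the infrastructure claim; it does not account for differences in node price, power, or how each application scales.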

Mellanox has more than 17 years of experience designing high-speed communication fabrics. Today, Mellanox is a leading supplier of end-to-end Ethernet and InfiniBand intelligent interconnect solutions and services for servers, storage, and hyper-converged infrastructure. Mellanox intelligent interconnect solutions increase data center efficiency by providing the highest throughput and lowest latency, delivering data faster to applications and unlocking system performance. For more information, please visit: http://www.mellanox.com/solutions/hpc/.