Increasing wattage of CPUs and GPUs has been the trend in HPC for many years. So why is it that in the run-up to ISC17, there is an increasing amount of buzz about this increased wattage? Many sense that an inflection point has been reached in the relationship between server density, the wattage of key silicon components and heat rejection.

This is becoming critical first in HPC, where sustained computing throughput is paramount for the applications run on high-density racks and clusters. Cutting-edge HPC requires the highest-performance versions of the latest CPUs and GPUs. As ISC17 approaches, we are seeing the highest-frequency offerings of Intel’s Knights Landing, Nvidia’s P100 and Intel’s Skylake (Xeon) processors. With that come high wattages at the node level, with even higher wattages coming in the near future. HPC cooling requirements mean that good-enough levels of heat removal are no longer sufficient.

For HPC, the cooling target needs to not only assure reliability but be able to prevent down-clocking and throttling of nodes running at 100% compute for sustained periods. This recent inflection point means that to cool high wattage nodes, there is little choice other than node-level liquid cooling to maintain reasonable rack densities. Assuming that massive air heat sinks with substantially higher air flows could cool some of the silicon on the horizon, the result would be extremely low compute density. 4U nodes, partially populated racks taking up expensive floor space, and costly data center build outs and expansions would become the norm.

OEMs and a growing number of TOP500 HPC sites such as JCAHPC’s Oakforest-PACS, Lawrence Livermore National Laboratory, Los Alamos National Laboratory, Sandia National Laboratories and the University of Regensburg have addressed both the near term and anticipated cooling needs using Asetek RackCDU D2C™ hot water liquid cooling.

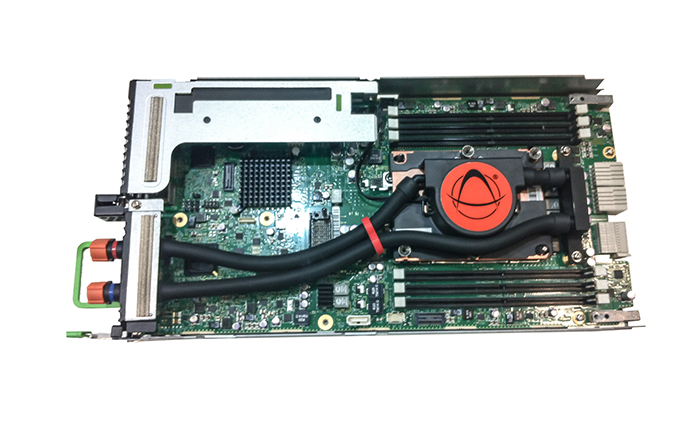

Asetek’s direct to chip distributed cooling architecture addresses the full range of heat rejection scenarios. The distributed pumping architecture is based on low pressure, redundant pumps and closed loop liquid cooling within each server node. This approach allows for a high level of flexibility.

The lowest data center impact is with the Asetek ServerLSL server-level liquid-enhanced air cooling solution. ServerLSL replaces less efficient air coolers in the servers with redundant coolers (cold plate/pumps) and exhausts 100% of the captured heat as hot air into the data center. It can be viewed as a transitional stage in the introduction of liquid cooling or as a tool for HPC sites to instantly incorporate the highest performance computing into the data center. At a site level, all the heat is handled by existing CRACs and chillers with no changes to the infrastructure.

While ServerLSL isolates the liquid cooling system within each server, the wattage trend is pushing the HPC industry toward all liquid-cooled nodes and racks. Enter Asetek’s RackCDU system, which is rack-level focused and enables a much greater impact on cooling costs for the data center. In fact, RackCDU is used by all of the current sites in the TOP500 using Asetek liquid cooling.

Asetek RackCDU provides the answer both at the node level and for the facility overall. As with ServerLSL, RackCDU D2C (Direct-to-Chip) utilizes redundant pumps/cold plates atop server CPUs & GPUs, cooling those components and optionally memory and other high heat components. The heat collected is moved via a sealed liquid path to heat exchangers in the RackCDU for transfer of heat into facilities water. RackCDU D2C captures between 60 percent and 80 percent of server heat into liquid, reducing data center cooling costs by over 50 percent and allowing 2.5x-5x increases in data center server density.

The remaining heat in the data center air is removed by existing HVAC systems in this hybrid liquid/air approach. When there is unused cooling capacity available, data centers may choose to cool facilities water coming from the RackCDU with existing CRACs and cooling towers.
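To make the hybrid split concrete, here is a minimal Python sketch of the arithmetic behind the 60 to 80 percent capture range quoted for RackCDU D2C. The 30 kW rack power and the function name `split_rack_heat` are assumptions chosen purely for illustration; they are not Asetek figures or part of any Asetek tooling.

```python
def split_rack_heat(rack_power_kw, liquid_capture_fraction):
    """Split a rack's heat load between the liquid loop and room air.

    rack_power_kw: total rack heat load in kW (hypothetical input).
    liquid_capture_fraction: share of heat captured into liquid (0.0 to 1.0).
    Returns (heat_to_liquid_kw, heat_to_air_kw).
    """
    if not 0.0 <= liquid_capture_fraction <= 1.0:
        raise ValueError("liquid_capture_fraction must be between 0 and 1")
    heat_to_liquid_kw = rack_power_kw * liquid_capture_fraction
    # Whatever the liquid loop does not capture is left for the existing HVAC.
    heat_to_air_kw = rack_power_kw - heat_to_liquid_kw
    return heat_to_liquid_kw, heat_to_air_kw


if __name__ == "__main__":
    RACK_POWER_KW = 30.0  # assumed example value, not from the article
    # The two ends of the capture range quoted in the article.
    for fraction in (0.60, 0.80):
        liquid_kw, air_kw = split_rack_heat(RACK_POWER_KW, fraction)
        print(f"capture {fraction:.0%}: "
              f"{liquid_kw:.1f} kW to liquid, {air_kw:.1f} kW to air")
```

Under these assumptions, the air-side load that existing CRACs must handle drops to between one fifth and two fifths of the rack's total heat, which is the headroom that makes denser racks feasible.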

Asetek’s distributed pumping method of cooling at the server, rack, cluster and site levels delivers flexibility in the areas of heat capture, coolant distribution and heat rejection that centralized pumping cannot.

As HPC in 2017 and beyond demands more efficient cooling, Asetek continues to demonstrate global leadership in liquid cooling as its OEM and installation bases grow. To learn more about Asetek liquid cooling, stop by booth J-600 at ISC 2017 in Germany.

Appointments for in-depth discussions about Asetek’s data center liquid cooling solutions at ISC17 may be scheduled by sending an email to [email protected].