I’ve seen the future this week at ISC. It’s on display in prototype or PowerPoint form, and it’s going to dumbfound you. The future is an AI neural network designed to emulate and compete with the human brain. In this game, the brain doesn’t stand a chance.

Scoff at such talk as farfetched or far off in a hazy utopic/dystopic future. Roll your eyes and say we’ve heard the hype before (some of us remember a supercomputer company 25 years ago with the inflated name of “Thinking Machines,” long defunct). But it’s neither futuristic nor hype, it’s happening now, the technology pieces are taking shape, and the implications for business, for the work world and for our everyday lives – for good or ill – are as staggering as they are real.

Aside: It’s somewhat unsettling that conference attendees here in Frankfurt don’t seem particularly interested in those implications. For the moment, ISC is the gathering point of computer scientists bringing about massive technological change, but nearly all the talk here is about the “how” of AI systems, not the “what then?” But there’s one anecdote making the rounds that has raised eyebrows: when Google engineers were asked how their AlphaGo machine arrived at the winning move against the world champion of Go (the world’s most complex board game), the answer was: “We don’t know” (more on this below).

Quite consciously, engineers are architecting HPC systems along the lines of our brain. The new architecture is an emerging style of computing called “data intensive” or “data centric.” It replaces processing with memory (i.e., data) at the center of the computing universe. Combined with advanced algorithms, new memory and processor technologies are coming on line to make the new architecture a practical reality. Once the pieces are in place, the next step will be to scale these systems beyond all measure of human brain capacity.

What does data centric computing mean? How does it work? Why does it represent a major shift in advanced scale computing?

Let’s start answering those questions by first looking at how data-centric systems are measured. The benchmark for new AI systems isn’t how fast they solve linear algebra problems (i.e., Linpack). That’s how processor-centric systems have been measured for decades, and considering the capabilities of data-centric systems under development, that benchmark seems wholly inadequate.

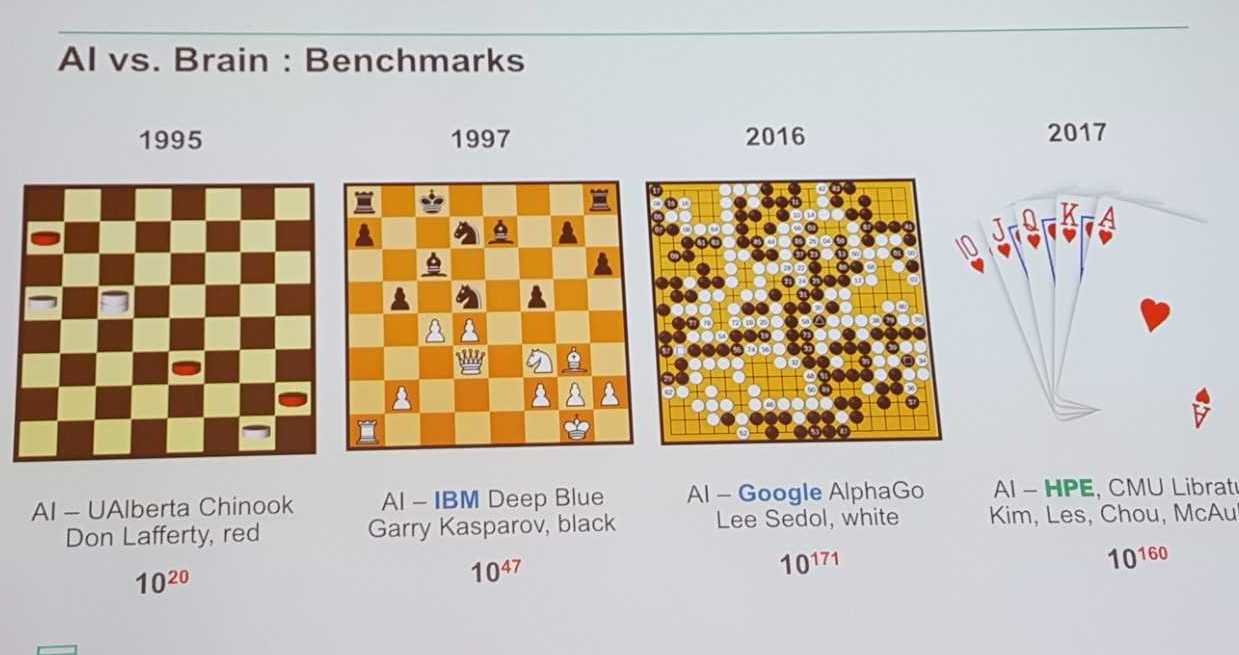

Rather than throughput, AI-based systems are measured in relation to people: their ability to compete with humans at our most intellectually challenging games of reason – checkers, chess, Go, poker. The standard of success isn’t training the system to become perfect at it, or to “solve” the game (i.e., work out every possible combination of moves). The benchmark is playing the game better than any human.

That’s the objective. Once the system is better than any of us, it’s ready to move into an advisory role, providing guidance and suggestions, augmenting our capabilities. For now. In a decade or so, these systems will take over tasks for us altogether.

Driving is a prime example. If driving were a game, humans would still beat machines – even though statistics show we’re getting worse at it (according to Dr. Pradeep Dubey, Intel Fellow, Intel Labs & Director, Parallel Computing Lab, who presented at ISC on autonomous vehicle technology). Around the world, two people are killed in car accidents each minute. In the U.S., 40,000 people are killed annually and 2 million suffer permanent injuries.

Meanwhile, AI is enabling machines to get better at driving. A convergence point is coming. For now, the car’s intelligence is limited to navigating, warning us about traffic conditions and setting off beepers when we get close to curbs and other cars.

The next step: our roads will have special lanes where we’ll temporarily hand over operation of the car to itself. A few years after that, we won’t drive at all. Driving is a game in which machines will soon be much better than we are.

Dr. Eng Lim Goh, Vice President of HPE and an industry visionary for decades, is a prime driver of new AI system development. At ISC this week, he discussed why AI in all its forms – machine learning, deep learning, strategic reasoning, etc. – is the driving force bringing about “data intensive” computing architectures.

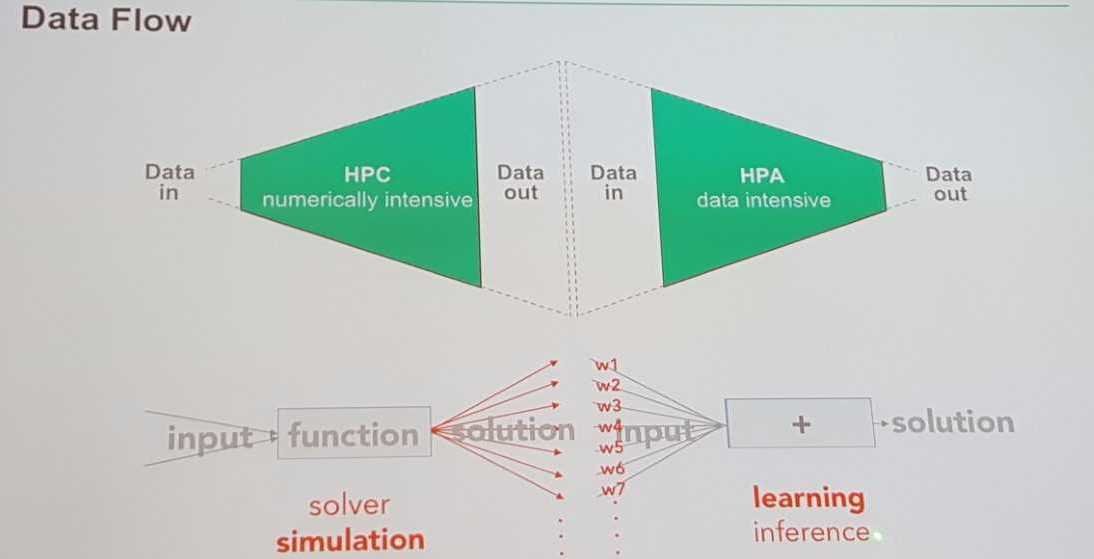

Here’s his schema for the data intensive computer:

The left side of the diagram is old-style, LINPACK-benchmarked, processor-centric computing. That’s where HPC happens. The processor is at the center. Data is sent to the CPU, simulations are run, and new, and more, data comes out. These systems have hit a wall of their own making. The problem occurs when HPC systems run their simulations, generating exponentially more machine-generated data than they started with. They’re producing data beyond the capability of data scientists to analyze. Big data isn’t big enough.

“For 30 years we’ve lived in this world where small amounts of data go in, and we apply supercomputing power onto our partial differential equations, or our models, to generate lots of data,” he said.

Already, Goh pointed out, there aren’t enough data scientists to meet demand for today’s data analytics requirements. For the torrents of machine-generated data to come, there’s an overwhelming need to automate how data is analyzed.

Take for example seismic exploration.

For exploration of energy reserves at sea, ships drag cables with hydrophones, fire shots into the ocean floor and collect the echo on sensors. Goh said for every 10TB of data collected by the sensors, 1PB of simulation data is produced – 100X the original data.

That’s where the right side of the diagram comes in: high performance analytics (HPA), self-learning AI systems that can take voluminous amounts of data produced by HPC, put it in memory, and work up answers to questions.

The key to the data-centric system of the future is the border area in the middle of the diagram. That’s where memory (i.e., data) resides, like a queen bee. It will be surrounded by a variety of processors (CPUs, GPUs, FPGAs, ASICs, assigned jobs appropriate for their capabilities) operating around the data, like drones.

Looked at this way, in a world where most companies have analyzed only about 3 percent of their data on average, traditional HPC systems seem glaringly incomplete. Combining the left side of the diagram and the right, integrating HPC with HPA – that takes supercomputing somewhere new. That’s a machine with a new soul.

But Goh conceded there are barriers to HPC and HPA joining forces.

“The two worlds are very different,” Goh said. “The HPC world where I lived, I’m guilty of this. All these years we assumed data movement was free. Guess what? When Linpack started 20 years ago we didn’t consider data movement. Yet we’re still ranking our Top500 systems that way. We’re still guilty that way.

“But the data scientists of the world also have something to say about us,” he added. “They assume compute is free. Take Hadoop. Hadoop is a technique where you map your data out onto compute nodes, then do your computation, then you reduce the data you bring back. The data world called this MapReduce. So we have to bring the two worlds together. More and more now, people should be investing in one system of left and right, not just the left.”
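
Goh’s three-step description (map the data out to nodes, compute there, reduce what comes back) can be sketched in a few lines. This is a minimal single-process illustration of the pattern, not Hadoop’s actual API. The function names `map_phase` and `reduce_phase`, the word-count task and the two-“node” split are all assumptions made for the example.

```python
from collections import Counter
from functools import reduce

def map_phase(chunk):
    """Runs on one 'node': count the words in the local chunk of data."""
    return Counter(chunk.split())

def reduce_phase(partials):
    """Combine the small per-node results into a single answer."""
    return reduce(lambda a, b: a + b, partials, Counter())

# Hypothetical data, already split across two 'nodes'.
chunks = ["data moves to compute", "compute moves to data"]

partials = [map_phase(c) for c in chunks]  # computation happens where the data sits
totals = reduce_phase(partials)            # only the compact counts travel back

print(totals["data"], totals["compute"], totals["moves"])
```

The point of the pattern is visible in the last two lines: the bulky input never leaves its node, and only the much smaller partial counts are brought back and merged.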

Goh pointed to the middle of his diagram and said that’s where the big architectural challenge lies. “If you have to move an exabyte of data between system A and B, if they are two different systems, it will be impractical. The world will come to this (integration of HPC and HPA).”
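
A back-of-envelope calculation suggests why moving an exabyte between two separate systems looks impractical. The link speeds below are illustrative assumptions, not figures from Goh’s talk, and the helper `transfer_days` is a hypothetical name; the estimate also ignores protocol overhead and storage limits, so real transfers would be slower.

```python
EXABYTE_BYTES = 10**18  # decimal exabyte

def transfer_days(num_bytes, link_gbit_per_s):
    """Days needed to move num_bytes over an ideal link of the given speed."""
    bytes_per_second = link_gbit_per_s * 1e9 / 8
    return num_bytes / bytes_per_second / 86_400

# Assumed, illustrative link speeds in gigabits per second.
for gbit in (100, 1_000, 10_000):
    print(f"{gbit:>6} Gbit/s: {transfer_days(EXABYTE_BYTES, gbit):,.0f} days")
```

Under these assumptions, a sustained 100 Gbit/s link would need roughly two and a half years to move one exabyte, which is the kind of cost the HPC world ignored when it treated data movement as free.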

That’s why the U.S. effort to develop a “capable” exascale computer by the early 2020s puts as much emphasis on memory capacity as on compute power. A mission document issued by the Exascale Computing Project stated its intent to build a system not just with an exaflop of processing power but one that also can handle an exabyte of data in memory.

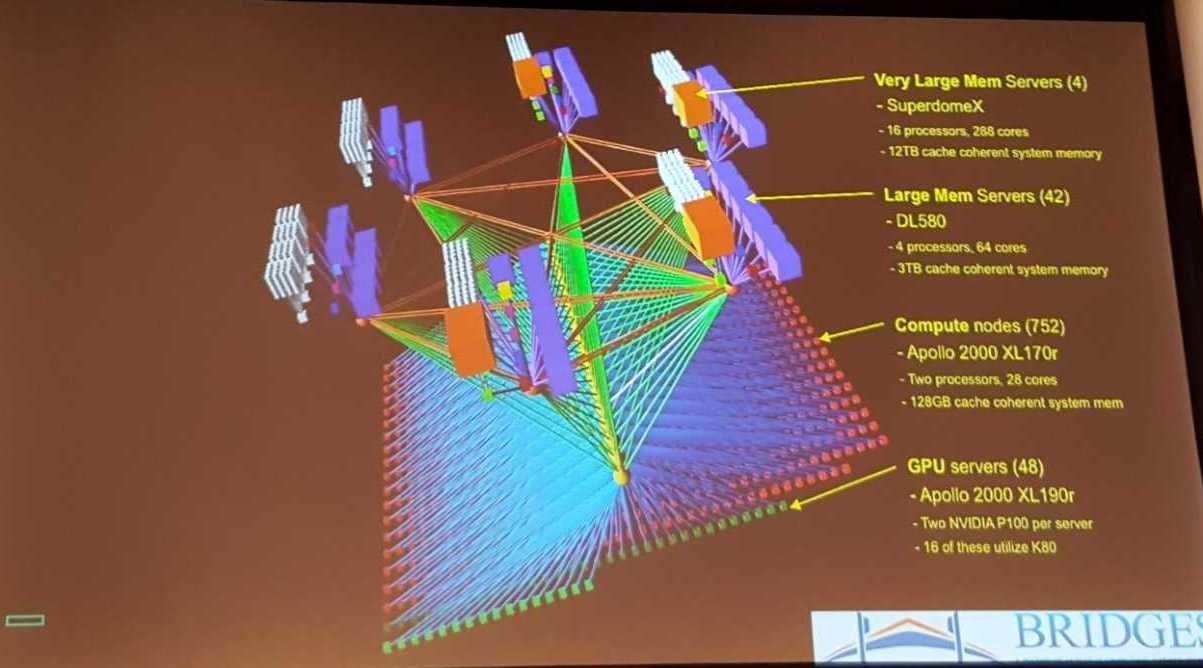

Goh described HPE’s “Bridges” system at the Pittsburgh Supercomputing Center as a data-centric supercomputer that incorporates HPC and HPA, designed specifically for “scalable deep learning.”

“Essentially, it’s a bandwidth machine,” Goh said. “It’s a supercomputer, but really it’s a data mover. Not only are NVLinks all connected, they’re also GPU-connected, so clumps of four GPUs can talk to other clumps of four GPUs directly. Then we have four OPA’s coming out of each node, giving one OPA per GPU. So this is really a data machine.”

The Bridges supercomputer pulled off one of the most impressive game wins of the emerging AI era when it defeated four of the world’s top poker players earlier this year. Actually, the competition stretched across two years, Goh said, with the AI system losing $700,000 to the players the first year they played. The second year, with 10X more compute from the Bridges computer, the AI system (“Libratus”) took the four humans for $1.7 million, a classic hustle.

While IBM Deep Blue (chess) and Google’s AlphaGo have grabbed most of the machine-defeated-human headlines of late, it’s less well known that machines have beaten humans at checkers, which has 10^20 “naïve” (or possible) combinations, since the early 1990s, several years before IBM beat the world’s top chess player. Chess has 10^47 naïve combinations. How big is 10^47? An exascale machine running for 100 years would complete only 10^28 combinations. The point being that without integrated AI techniques, processing only gets you so far.
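
The exascale arithmetic can be checked in a few lines. This is a rough sketch that assumes one operation evaluates one combination, which is generous to the machine; the constant names are illustrative, and the chess figure is the naïve 10^47 count quoted above.

```python
import math

OPS_PER_SECOND = 10**18             # one exaflop; one operation = one combination (assumption)
SECONDS_PER_YEAR = 365 * 24 * 3600
CHESS_COMBINATIONS = 10**47         # naive figure quoted in the article

# How many combinations an exascale machine gets through in a century.
done_in_century = OPS_PER_SECOND * SECONDS_PER_YEAR * 100
print(f"combinations in 100 years: about 10^{math.log10(done_in_century):.1f}")

# How long the same machine would need to enumerate all of chess.
years_for_chess = CHESS_COMBINATIONS / (OPS_PER_SECOND * SECONDS_PER_YEAR)
print(f"years to enumerate chess:  about 10^{math.log10(years_for_chess):.1f}")
```

The century total lands within an order of magnitude of the 10^28 figure, leaving a gap of roughly 19 to 20 orders of magnitude to the full chess count. Brute force alone does not close that gap.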

Go, meanwhile, has 10^171 combinations. Poker, with “only” 10^160 combinations, offers up the added complexity of “incomplete information.” By contrast with the three board games, in which you can see the pieces held by your opponent, in poker, you don’t know what your opponents have in their hands.

“So we didn’t solve chess, machines didn’t solve chess,” Goh said, “all they did was be good enough to be superhuman – to beat any human. That’s a term we’re going to hear more and more now.”

After Goh’s presentation, he was asked to respond to Google not understanding how AlphaGo won the Go tournament. The issue, he said, is overcoming opacity.

“We’re working very hard to increasing transparency,” he said. “Some people have discussed the idea that there are many stages in a neural network, to intercept it in between those stages, and take its output and see if you can make sense of it.”
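
The idea of intercepting a network between its stages can be illustrated with a toy example. This is a minimal sketch in plain Python, not a description of how AlphaGo or any production system is instrumented. The two-stage network, its made-up weights and the names `dense` and `forward_with_taps` are all hypothetical.

```python
import math

def dense(inputs, weights, biases):
    """One fully connected stage followed by a tanh nonlinearity."""
    return [math.tanh(sum(w * x for w, x in zip(row, inputs)) + b)
            for row, b in zip(weights, biases)]

# Hypothetical two-stage network with made-up weights.
STAGES = [
    ([[0.5, -0.2], [0.1, 0.8]], [0.0, 0.1]),  # stage 1: 2 inputs -> 2 units
    ([[1.0, -1.0]], [0.0]),                   # stage 2: 2 units -> 1 output
]

def forward_with_taps(inputs):
    """Run the network and keep every stage's output for inspection."""
    taps = []
    activation = inputs
    for weights, biases in STAGES:
        activation = dense(activation, weights, biases)
        taps.append(activation)  # intercept the signal between stages
    return activation, taps

output, taps = forward_with_taps([1.0, 2.0])
for i, tap in enumerate(taps, start=1):
    print(f"stage {i} output: {[round(v, 3) for v in tap]}")
```

Capturing the intermediate values is the easy part. Making sense of them, as Goh’s “see if you can make sense of it” implies, is the open problem.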

Leaving a strong role for human supervision also is important. He pointed out that since the Industrial Revolution, workers get promoted from first operating a machine to supervising machines.

He also discussed the distinction between the “correct” and the “right” answer. An AI-based system may deliver a correct answer, but whether it’s “right” – acceptable within human social mores, the bounds of business ethics, or even an aesthetic judgment – is something only humans can decide.

“Societal values need to be applied, human values need to be applied,” he said.