Neural networks offer a powerful new resource for analyzing large volumes of complex, unstructured data. However, most of today’s Artificial Intelligence (AI) deep learning frameworks rely on in-core processing, which means that all the relevant data must fit into main memory. As the size and complexity of a neural network grow, cost becomes a limiting factor. DRAM is simply too expensive.

Of course, memory bottlenecks are hardly new in intensive-computing environments such as High Performance Computing (HPC). Transferring large data sets to large numbers of high-performance cores has been an increasing challenge for decades. Fortunately, that is beginning to change. New Intel memory and storage technologies are being integrated into the Intel® Scalable System Framework (Intel® SSF) to help reverse this trend. They do this by moving high volume data closer to the processing cores, and by accelerating data movement at each tier of the memory and storage hierarchy.

Moving Data Closer to Compute

To accelerate the flow of data into the compute cores, Intel is integrating high-speed memory directly into Intel® Xeon® Phi™ processors and future Intel® Xeon® processors. By moving memory closer to compute resources, these solutions help to optimize core utilization. They also help to improve workload scaling. Intel Xeon Phi processors, for example, have demonstrated up to 97 percent scaling efficiency for deep learning workloads on up to 32 nodes [1].
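To put the scaling figure in context, here is a minimal sketch of how scaling efficiency is commonly computed: the achieved speedup divided by the ideal, linear speedup. The source does not say which definition was used for the cited result, so the strong-scaling form below is an assumption, and the function name and timings are hypothetical.

```python
def scaling_efficiency(t_single: float, t_parallel: float, nodes: int) -> float:
    """Strong-scaling efficiency: (t_single / t_parallel) / nodes.

    Assumed definition; the cited measurement may have used another one.
    """
    if nodes < 1 or t_single <= 0 or t_parallel <= 0:
        raise ValueError("nodes must be >= 1 and timings must be positive")
    speedup = t_single / t_parallel
    return speedup / nodes


# Hypothetical timings (seconds per epoch), chosen only for illustration.
eff = scaling_efficiency(t_single=3200.0, t_parallel=103.1, nodes=32)
print(f"scaling efficiency: {eff:.1%}")
```

Under this definition, 97 percent efficiency on 32 nodes corresponds to a speedup of roughly 31x over a single node.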

Transforming the Economics of Memory

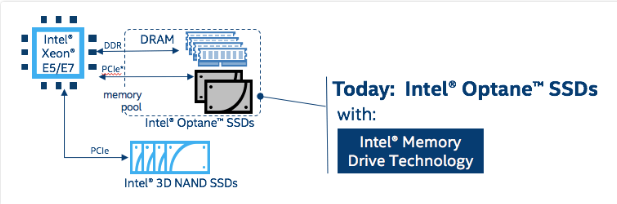

Intel® Optane™ technology provides even more far-reaching advantages for data movement. This groundbreaking, non-volatile memory technology combines the speed of DRAM with the capacity and cost efficiency of NAND. Based on Intel® Optane™ technology, Intel® Optane™ SSDs are designed to provide 5-8x faster performance than Intel’s fastest NAND-based SSDs [2]. Intel Optane SSDs can be combined with Intel® Memory Drive Technology to extend memory and provide cost-effective, large-memory pools.

When connected over the PCIe bus, an Intel Optane SSD provides an efficient extension to system memory. Behind the scenes, the Intel Memory Drive Technology transparently integrates the SSD into the memory subsystem and orchestrates data movement. “Hot” data is automatically pushed onto the DRAM to maximize performance. The OS and applications see a single high-speed memory pool, so no software changes are required.
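The general idea of keeping hot data in a small fast tier while colder data lives in a larger, slower tier can be illustrated with a toy sketch. This is not how Intel Memory Drive Technology is implemented; the class name, the least-recently-used eviction policy, and the dictionary-based tiers are all assumptions made for illustration.

```python
from collections import OrderedDict


class TwoTierStore:
    """Toy two-tier store: a small fast tier backed by a large slow tier."""

    def __init__(self, fast_capacity: int):
        if fast_capacity < 1:
            raise ValueError("fast_capacity must be at least 1")
        self.fast_capacity = fast_capacity
        self.fast = OrderedDict()  # stands in for DRAM, kept in LRU order
        self.slow = {}             # stands in for the SSD tier

    def put(self, key, value):
        """Write to the fast tier; drop any stale copy in the slow tier."""
        self.slow.pop(key, None)
        self.fast[key] = value
        self.fast.move_to_end(key)
        self._evict()

    def get(self, key):
        """Read a value, promoting it to the fast tier if it was cold."""
        if key in self.fast:
            self.fast.move_to_end(key)  # mark as most recently used
            return self.fast[key]
        value = self.slow.pop(key)      # raises KeyError if the key is unknown
        self.fast[key] = value
        self._evict()
        return value

    def _evict(self):
        """Demote least recently used entries until the fast tier fits."""
        while len(self.fast) > self.fast_capacity:
            cold_key, cold_value = self.fast.popitem(last=False)
            self.slow[cold_key] = cold_value


store = TwoTierStore(fast_capacity=2)
for name in ("a", "b", "c"):
    store.put(name, name.upper())
store.get("a")  # "a" was demoted earlier; reading it promotes it again
```

Callers only use `put` and `get` and never choose a tier, which mirrors the point that the OS and applications see a single memory pool.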

How good is performance? Based on Intel internal testing, the DRAM + Intel Optane SSD combination provides roughly 75 to 80 percent of the performance of a comparable DRAM-only solution [3]. The outlook may be even better for deep learning applications. Intel engineers found that the DRAM + Intel Optane SSD combination can optimize data locality and minimize cross-socket traffic, which could result in better performance [4] than the DRAM-only solution. This applies to large datasets that are distributed across all system memory, where every application thread has access to all of the data. One example is the General Matrix Multiplication (GEMM) benchmark, which represents a portion of the core algorithms used in deep learning.
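For readers unfamiliar with GEMM, the operation computes C = alpha * A * B + beta * C. The sketch below is a plain-Python reference version for illustration only. It is not the Intel MKL routine used in the testing, and the helper that estimates the working-set size (with its hypothetical name and 4-byte single-precision default) is an assumption added to show why large problems can outgrow DRAM.

```python
def gemm(alpha, a, b, beta, c):
    """Reference GEMM on list-of-lists matrices: alpha * (a x b) + beta * c."""
    m, k, n = len(a), len(b), len(b[0])
    if any(len(row) != k for row in a):
        raise ValueError("inner dimensions of a and b do not match")
    if len(c) != m or any(len(row) != n for row in c):
        raise ValueError("c must have shape m x n")
    return [
        [
            alpha * sum(a[i][p] * b[p][j] for p in range(k)) + beta * c[i][j]
            for j in range(n)
        ]
        for i in range(m)
    ]


def sgemm_footprint_bytes(m, k, n, bytes_per_element=4):
    """Bytes needed to hold A (m x k), B (k x n) and C (m x n) at once."""
    return (m * k + k * n + m * n) * bytes_per_element


result = gemm(2.0, [[1, 2], [3, 4]], [[5, 6], [7, 8]], 1.0, [[1, 1], [1, 1]])
footprint = sgemm_footprint_bytes(100_000, 100_000, 100_000)
print(result, footprint / 1e9, "GB")
```

With square matrices of 100,000 rows and columns, the three single-precision operands alone occupy about 120 GB, which is the kind of working set that spans most or all of a system's memory.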

Accelerating Storage

With today’s exploding data volumes, transferring data from bulk storage to local storage to cluster memory can lead to operational bottlenecks at any point. Intel Optane SSDs can be used as high-speed buffers to break through these barriers. A relatively small number of Intel® Optane™ SSDs can dramatically reduce data transfer times. They can also improve performance for applications that are constrained by excessive storage latency or insufficient storage bandwidth.

Simplifying Integration with Intel® Scalable System Framework (Intel® SSF)

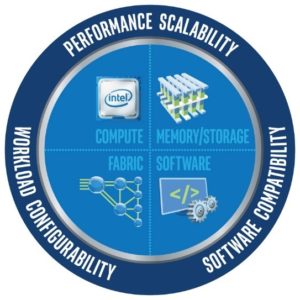

By accelerating data movement, Intel Optane SSDs—and future Intel products based on Intel Optane technology—will help to transform many aspects of HPC and AI. Their inclusion in Intel SSF will make it easier for organizations to take advantage of emerging memory and storage solutions based on this new technology.

As deep learning emerges as a mainstream HPC workload, these balanced, large-memory cluster solutions will help organizations deploy massive neural networks to analyze some of the world’s largest and most complex datasets. Intel SSF provides a scalable blueprint for efficient clusters that deliver higher value through increased integration and balanced designs. This system-level focus helps Intel synchronize innovation across all layers of the HPC and AI solution stack, so new technologies can be integrated more easily by system vendors and end-user organizations.

Stay tuned for additional articles focusing on the benefits Intel SSF brings to AI at each level of the solution stack through balanced innovation in compute, fabric, storage, and software technologies.

1 https://syncedreview.com/2017/04/15/what-does-it-take-for-intel-to-seize-the-ai-market/

2 https://www.intel.com/content/www/us/en/solid-state-drives/optane-ssd-dc-p4800x-brief.html

3 Based on Intel internal testing using the SGEMM routine from the Intel® Math Kernel Library (MKL). System under test (DRAM + SSD): 2 x Intel® Xeon® processor E5-2699 v4, Intel® Server Board S2600WT, 128 GB DDR4 memory + 4 x Intel® Optane™ SSD (SSDPED1K375GA), CentOS 7.3.1611. Baseline system (all DRAM): 2 x Intel® Xeon® processor E5-2699 v4, Intel® Server Board S2600WT, 768 GB DDR4 memory, CentOS 7.3.1611.

4 Achieving higher performance while using less DRAM was made possible by Intel® Memory Drive Technology, which automatically takes advantage of NUMA technology in Intel processors to enhance data placement not only across the hybrid memory space, but also within the available DRAM.