On Monday, the U.S. Department of Energy’s (DOE’s) ASCR Leadership Computing Challenge (ALCC) program awarded 24 projects a total of 2.1 billion core-hours at the Argonne Leadership Computing Facility (ALCF). The one-year awards are set to begin July 1. Several of the 2017-2018 ALCC projects will be the first to run on the ALCF’s new 9.65 petaflops Intel-Cray supercomputer, Theta, when it opens to the full user community July 1.

Theta is based on the second-generation Intel Xeon Phi processor (a more detailed system description appears at the end of this article). Projects in the Theta Early Science Program performed science simulations on the system, but those runs also served to stress-test and evaluate Theta’s capabilities. The new projects are focused on the science research itself.

Each year, the ALCC program selects projects with an emphasis on high-risk, high-payoff simulations in areas directly related to the DOE mission and on broadening the community of researchers capable of using leadership computing resources. In 2017, the ALCC program awarded 40 projects totaling 4.1 billion core-hours across the three ASCR facilities. More 2017-2018 projects may be announced at a later date, as ALCC proposals can be submitted throughout the year.

The 24 projects awarded time at the ALCF are noted below. Some projects received additional computing time at OLCF and/or NERSC.

- Thomas Blum from University of Connecticut received 220 million core-hours for “Hadronic Light-by-Light Scattering and Vacuum Polarization Contributions to the Muon Anomalous Magnetic Moment from Lattice QCD with Chiral Fermions.”

- Choong-Seock Chang from Princeton Plasma Physics Laboratory received 80 million core-hours for “High-Fidelity Gyrokinetic Study of Divertor Heat-Flux Width and Pedestal Structure.”

- John T. Childers from Argonne National Laboratory received 58 million core-hours for “Simulating Particle Interactions and the Resulting Detector Response at the LHC and Fermilab.”

- Frederico Fiuza from SLAC National Accelerator Laboratory received 50 million core-hours for “Studying Astrophysical Particle Acceleration in HED Plasmas.”

- Marco Govoni from Argonne National Laboratory received 60 million core-hours for “Computational Engineering of Electron-Vibration Coupling Mechanisms.”

- William Gustafson from Pacific Northwest National Laboratory received 74 million core-hours for “Large-Eddy Simulation Component of the Mesoscale Convective System Climate Model Development and Validation (CMDV-MCS) Project.”

- Olle Heinonen from Argonne National Laboratory received 5 million core-hours for “Quantum Monte Carlo Computations of Chemical Systems.”

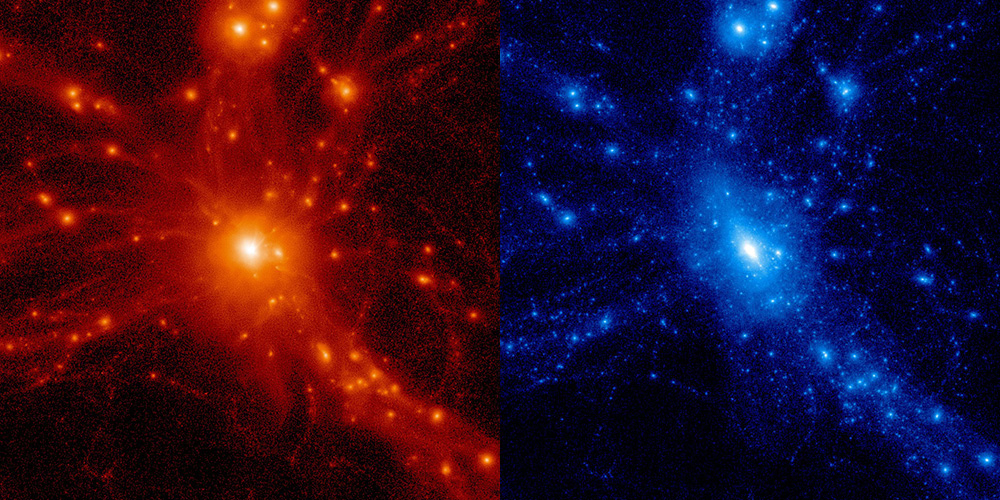

- Katrin Heitmann from Argonne National Laboratory received 40 million core-hours for “Extreme-Scale Simulations for Multi-Wavelength Cosmology Investigations.”

- Phay Ho from Argonne National Laboratory received 68 million core-hours for “Imaging Transient Structures in Heterogeneous Nanoclusters in Intense X-ray Pulses.”

- George Karniadakis from Brown University received 20 million core-hours for “Multiscale Simulations of Hematological Disorders.”

- Daniel Livescu from Los Alamos National Laboratory received 60 million core-hours for “Non-Boussinesq Effects on Buoyancy-Driven Variable Density Turbulence.”

- Alessandro Lovato from Argonne National Laboratory received 35 million core-hours for “Nuclear Spectra with Chiral Forces.”

- Elia Merzari from Argonne National Laboratory received 85 million core-hours for “High-Fidelity Numerical Simulation of Wire-Wrapped Fuel Assemblies.”

- Paul Messina from Argonne National Laboratory received 530 million core-hours for “ECP Consortium for Exascale Computing.”

- Aleksandr Obabko from Argonne National Laboratory received 50 million core-hours for “Numerical Simulation of Turbulent Flows in Advanced Steam Generators – Year 3.”

- Mark Petersen from Los Alamos National Laboratory received 25 million core-hours for “Understanding the Role of Ice Shelf-Ocean Interactions in a Changing Global Climate.”

- Benoit Roux from the University of Chicago received 80 million core-hours for “Protein-Protein Recognition and HPC Infrastructure.”

- Emily Shemon from Argonne National Laboratory received 44 million core-hours for “Elimination of Modeling Uncertainties through High-Fidelity Multiphysics Simulation to Improve Nuclear Reactor Safety and Economics.”

- Ilja Siepmann from University of Minnesota received 130 million core-hours for “Predictive Modeling of Functional Nanoporous Materials, Nanoparticle Assembly, and Reactive Systems.”

- Tjerk Straatsma from Oak Ridge National Laboratory received 20 million core-hours for “Portable Application Development for Next-Generation Supercomputer Architectures.”

- Sergey Syritsyn from RIKEN BNL Research Center received 135 million core-hours for “Nucleon Structure and Electric Dipole Moments with Physical Chirally-Symmetric Quarks.”

- Sergey Varganov from University of Nevada, Reno received 42 million core-hours for “Spin-Forbidden Catalysis on Metal-Sulfur Proteins.”

- Robert Voigt from Leidos received 110 million core-hours for “Demonstration of the Scalability of Programming Environments By Simulating Multi-Scale Applications.”

- Brian Wirth from Oak Ridge National Laboratory received 98 million core-hours for “Modeling Helium-Hydrogen Plasma Mediated Tungsten Surface Response to Predict Fusion Plasma Facing Component Performance.”
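As a quick arithmetic check on the list above, the following Python sketch sums the per-project allocations as transcribed from this article. The dictionary keys are the principal investigators' surnames, used here only as illustrative labels, and all figures are in millions of core-hours.

```python
# Allocations in millions of core-hours, transcribed from the list above.
# Keys are PI surnames, used only as illustrative labels.
awards_m = {
    "Blum": 220, "Chang": 80, "Childers": 58, "Fiuza": 50,
    "Govoni": 60, "Gustafson": 74, "Heinonen": 5, "Heitmann": 40,
    "Ho": 68, "Karniadakis": 20, "Livescu": 60, "Lovato": 35,
    "Merzari": 85, "Messina": 530, "Obabko": 50, "Petersen": 25,
    "Roux": 80, "Shemon": 44, "Siepmann": 130, "Straatsma": 20,
    "Syritsyn": 135, "Varganov": 42, "Voigt": 110, "Wirth": 98,
}

project_count = len(awards_m)
total_m = sum(awards_m.values())

print(f"{project_count} projects, {total_m:,} million core-hours")
print(f"about {total_m / 1000:.1f} billion core-hours")
```

The 24 entries sum to 2,119 million core-hours, which is consistent with the 2.1 billion core-hours quoted at the top of the article.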

Managed by the Advanced Scientific Computing Research (ASCR) program within DOE’s Office of Science, the ALCC program provides awards of computing time that range from a few million to several-hundred-million core-hours to researchers from industry, academia, and government agencies. These allocations support work at the ALCF, the Oak Ridge Leadership Computing Facility (OLCF), and the National Energy Research Scientific Computing Center (NERSC), all DOE Office of Science User Facilities.

Theta Description (from ALCF web site):

Designed in collaboration with Intel and Cray, Theta will serve as a stepping stone to the ALCF’s next leadership-class supercomputer, Aurora. Both Theta and Aurora will be massively parallel, many-core systems based on Intel processors and interconnect technology, a new memory architecture, and a Lustre-based parallel file system, all integrated by Cray’s HPC software stack.

Theta is equipped with 3,624 nodes, each containing a 64-core processor with 16 gigabytes (GB) of high-bandwidth in-package memory (MCDRAM), 192 GB of DDR4 RAM, and a 128 GB SSD. Theta’s initial parallel file system is 10 petabytes.

Theta has several features that will allow scientific codes to achieve higher performance, including:

- High-bandwidth MCDRAM (300 – 450 GB/s depending on memory and cluster mode), with many applications running entirely in MCDRAM or using it effectively with DDR4 RAM

- Improved single-thread performance

- Potentially much better vectorization with AVX-512

- Large total memory per node (208 GB on Theta vs. 16 GB on Mira)

Theta System Configuration

- 20 racks

- 3,624 nodes

- 231,936 cores

- 56 TB MCDRAM

- 679 TB DDR4

- 453 TB SSD

- Aries interconnect with Dragonfly configuration

- 10 PB Lustre file system

- Peak performance of 9.65 petaflops
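The aggregate figures in the configuration list follow from the per-node specifications given earlier (3,624 nodes, each with 64 cores, 16 GB of MCDRAM, 192 GB of DDR4, and a 128 GB SSD). The short Python sketch below works through that arithmetic. It assumes, and this is not stated in the source, that the memory and SSD totals use 1 TB = 1,024 GB and are truncated to whole terabytes; the constant and function names are illustrative.

```python
# Per-node figures taken from the Theta description in this article.
NODES = 3_624
CORES_PER_NODE = 64
MCDRAM_GB = 16
DDR4_GB = 192
SSD_GB = 128

GB_PER_TB = 1024  # assumption: binary terabytes, not stated in the source


def system_total_tb(per_node_gb: int, nodes: int = NODES) -> int:
    """Aggregate a per-node capacity across all nodes, truncated to whole TB."""
    return (per_node_gb * nodes) // GB_PER_TB


total_cores = NODES * CORES_PER_NODE       # product of nodes and cores per node
mcdram_tb = system_total_tb(MCDRAM_GB)
ddr4_tb = system_total_tb(DDR4_GB)
ssd_tb = system_total_tb(SSD_GB)
memory_per_node_gb = MCDRAM_GB + DDR4_GB   # MCDRAM plus DDR4 on one node

print(f"cores:           {total_cores:,}")
print(f"MCDRAM:          {mcdram_tb} TB")
print(f"DDR4:            {ddr4_tb} TB")
print(f"SSD:             {ssd_tb} TB")
print(f"memory per node: {memory_per_node_gb} GB")
```

Under those assumptions the sketch gives 56 TB of MCDRAM, 679 TB of DDR4, and 453 TB of SSD, and 208 GB of memory per node, in line with the figures quoted above.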

Link to ALCF Article: http://www.alcf.anl.gov/articles/alcc-program-awards-alcf-computing-time-24-projects

Link to ASCR Leadership Computing Challenge: https://science.energy.gov/ascr/facilities/accessing-ascr-facilities/alcc/alcc-current-awards/

Source: Argonne Leadership Computing Facility