Last week we reported from ISC on an emerging type of high performance system architecture that integrates HPC and HPA (High Performance Analytics) and incorporates, at its center, exabyte-scale memory capacity, surrounded by a variety of accelerated processors. Until the arrival of quantum computing or another new computing paradigm, this is the architecture that could enable the “scalable learning machine.” It will handle and be trained on, literally, decades of accumulated data and foster Advanced AI, the ultimate manifestation of the Big Data of today and the highest form of AI. It will co-exist with, augment, guide and, soon enough, outperform humans.

This week, we’re reporting on a startling, scholarly white paper recently issued by researchers from Yale, the Future of Humanity Institute at Oxford and the AI Impacts think tank that adumbrates the AI world to come.

The white paper – “When Will AI Exceed Human Performance?”, based on a global survey of 352 AI experts – reinforces the truism that technology is always at a primitive stage. Impressive as current Big Data and machine learning innovations are, they are embryonic compared with Advanced AI in the decades to come.

High-Level Machine Intelligence (HLMI) will transform the life we know. According to the study, it’s not just conceivable but likely that all human work will be automated within 120 years, and many specific jobs much sooner.

Some of the study’s findings are not surprising. We know, for example, that the days of jobs like truck driving are numbered. But the study predicts that a surprising number of occupations considered to be “value add,” creative and nonfungible, such as producing a Top 40 pop song, will be done by machines within 10 years. (Actually, truck driving also will be automated within 10 years, the survey found, which may say less about truck driving than it does about pop music.)

The study asked respondents to forecast automation milestones for 32 tasks and occupations, 20 of which, they predict, will happen within 10 years. Some of the more interesting findings: language translator, seven years; retail salesperson, 12 years; writing a New York Times bestseller and performing surgery, approximately 35 years; conducting math research, 45 years.

The researchers point to two watersheds in the AI revolution that will have a profound impact. The first is the attainment of HLMI, “achieved when unaided machines can accomplish every task better and more cheaply than human workers.”

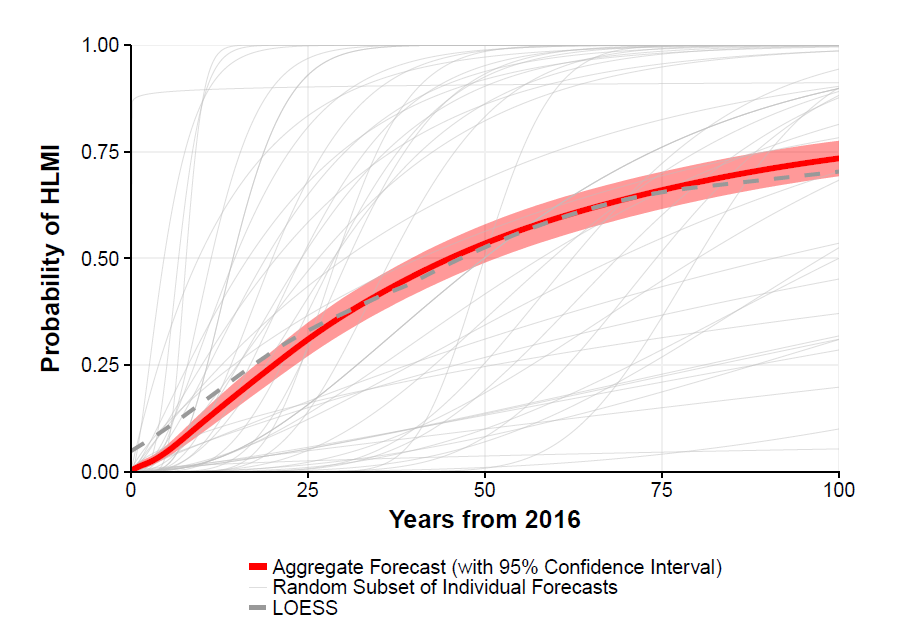

The researchers reported that the “aggregate forecast” gave a 50 percent chance for HLMI to occur within 45 years (and a 10 percent chance within eight years). Interestingly, respondents from Asia are more sanguine about the HLMI timeframe than those from other regions – Asian respondents expect HLMI within about 30 years, whereas North Americans expect it in 75 years.

According to the survey, AI research itself is expected to be automated by HLMI within 90 years, and this in turn could contribute to the second major watershed, what the AI community calls an “intelligence explosion.” This is defined as AI performing “vastly better than humans in all tasks,” a rapid acceleration in AI machine capabilities.

All of this, of course, has incredibly potent social and economic implications, which is why the researchers conducted the study.

“Advances in artificial intelligence (AI) will transform modern life by reshaping transportation, health, science, finance, and the military,” the study’s authors state. “To adapt public policy, we need to better anticipate these advances…

“Self-driving technology might replace millions of driving jobs over the coming decade,” they continue. “In addition to possible unemployment, the transition will bring new challenges, such as rebuilding infrastructure, protecting vehicle cyber-security, and adapting laws and regulations. New challenges, both for AI developers and policy-makers, will also arise from applications in law enforcement, military technology, and marketing.”

In preparing the survey, the authors targeted all researchers who published at the 2015 Neural Information Processing Systems (NIPS) and International Conference on Machine Learning (ICML) conferences, two prominent peer-reviewed machine learning research conclaves. Of the 1,634 authors contacted, 21 percent responded to the survey.

Among the issues explored by the study:

“Will progress in AI become explosively fast once AI research and development itself can be automated? How will HLMI affect economic growth? What are the chances this will lead to extreme outcomes (either positive or negative)? What should be done to help ensure AI progress is beneficial?”

While the authors state there will be “profound social consequences if all tasks were more cost effectively accomplished by machines,” the weight of survey sentiment about the ultimate impact of HLMI is optimistic. The median probability for a “good” or “extremely good” outcome was 45 percent, whereas the probability was 15 percent for a “bad” or “extremely bad” outcome (such as human extinction).

The study did not delve into details of what those outcomes – good or bad – will look like, but the authors stated that “Society should prioritize research aimed at minimizing the potential risks of AI.”