AI compute cycles are expected to grow by a factor of 20 within the next three years as the intelligence revolution goes mainstream. Intel is working to fuel this growth at every level, delivering a major leap in AI performance with new Intel® Xeon® Scalable processors and targeting a game-changing 100X increase in machine learning performance by 2020 with the Intel® Nervana™ Platform.

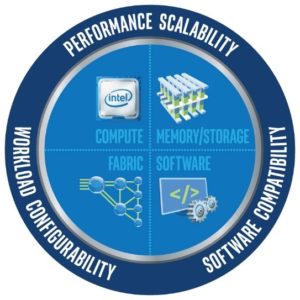

These leaps in performance will need to be accompanied by comparable leaps in scalability to support growing data volumes and larger neural networks. To address this need, Intel is driving innovation across the entire HPC solution stack through the Intel® Scalable System Framework (Intel® SSF). Tight integration and synchronized innovation across compute, memory, storage, fabric, and software will help organizations scale cost-effectively as their own AI revolution unfolds.

Superior Performance Today

The move toward faster, more scalable machine learning is already under way. Intel is working with vendors and the open source community to optimize popular software frameworks and algorithms for higher performance on Intel architecture. The performance benefits can be transformative[1]. Choosing the right processors for specific workloads is important. Current options include:

- Intel® Xeon® Scalable processors for inference engines and for some training workloads. Intel Xeon processors already support 97 percent of all AI workloads[2]. With more cores, more memory bandwidth, an option for integrated fabric controllers, and ultra-wide 512-bit vector support, the new Intel Xeon Scalable processors (formerly code name Skylake) provide major advances in performance, scalability, and flexibility. They are ideal for deploying AI inference engines at scale and, in many cases, for tackling the heavier demands of neural network training. With their broad interoperability, they also provide a powerful and agile foundation for integrating AI solutions into other business and technical applications.

- Intel® Xeon Phi™ processors for training large and complex neural networks. With up to 72 cores, 288 threads, and 512-bit vector support—plus integrated high bandwidth memory and fabric controllers—these processors offer performance and scalability advantages versus GPUs for neural network training. Since they function as host processors and run standard x86 code, they simplify implementation and eliminate the inherent latencies of PCIe-connected accelerator cards. An AI-optimized upgrade to the Intel Xeon Phi processor family (code name Knights Mill) will be in production in the fourth quarter of 2017, and is expected to provide a significant increase in deep learning training performance to help unleash a new wave of innovation.

- Optional accelerators for agile infrastructure optimization. Intel offers a range of workload-specific accelerators, including programmable Intel FPGAs that can evolve along with workloads to meet changing requirements. These optional add-ons for Intel Xeon processor-based servers bring new flexibility and efficiency for supporting AI and many other critical workloads. They open new doors for innovation, and can help organizations reduce data center power and space requirements for their most demanding workloads.

Unprecedented Performance Tomorrow

If today’s Intel processors push the boundaries of machine learning performance, tomorrow’s will shatter them. The Intel Nervana Platform is a complete solution stack that is designed for the sole purpose of delivering unprecedented performance and density for neural networks. High-speed memory and powerful interconnects are built into each chip to deliver extreme performance that can be scaled across multiple chips and multiple chassis without performance loss.

At the same time, Intel SSF is evolving to help enable cutting-edge performance at every scale, from small workgroup clusters to the world’s largest supercomputers. In combination with rapid, ongoing advances in the x86 software ecosystem (including applications, frameworks, and optimized Intel libraries), this ramp-up in computing capability will provide the foundation for a tidal wave of AI innovation. In relatively short order, this groundbreaking new technology will become a mainstream resource that can be deployed with confidence by virtually every organization.

Learn more: Read previous and future articles about the benefits Intel SSF brings to AI through synchronized innovation across the complete HPC solution stack: overview, memory, compute, fabric, storage, and software.

[1] For more information on the value of running optimized AI software on Intel Architecture, read the Intel article: “Intel® Xeon Phi™ Delivers Competitive Performance for Deep Learning—And Getting Better Fast,” September 26, 2016. https://software.intel.com/en-us/articles/intel-xeon-phi-delivers-competitive-performance-for-deep-learning-and-getting-better-fast

[2] Based on Intel internal estimates.