For about a year the Renaissance Computing Institute (RENCI) has been assembling best practices and open source components around data-driven scientific research to create a rapidly deployable ‘cross-disciplinary Data CyberInfrastructure’ dubbed xDCI. Funded in part by NSF, and quite far along in its first two test cases, xDCI could become a powerful enabler of research in the age of growing datasets and the proliferation of data sources that often turn even small research projects into big data management and analysis challenges.

“xDCI was really driven by our experience working with different groups of scientists and different disciplines. We saw there were many common components needed and there were also sort of unique capabilities needed depending upon the domain. So RENCI has been putting together these domain-specific cyberinfrastructures for many years,” said xDCI project lead Ashok Krishnamurthy, who is also deputy director of RENCI.

“The idea of xDCI was to have a collection of components that we have experience with and that we know interoperate well. Then, depending upon the particular need of the group that wants to stand up a community data sharing and collaborative analysis facility, we would put these components together into a solution that is particular to that community. We would also work with them in terms of how do you actually take it out into the community and bring other researchers to start using those services.”

Such thinking is in line with RENCI’s mission to be a “leader in data science and an essential catalyst for data-driven discoveries leading to better health, a safer environment, and improved economic and business successes.” These are certainly expansive goals.

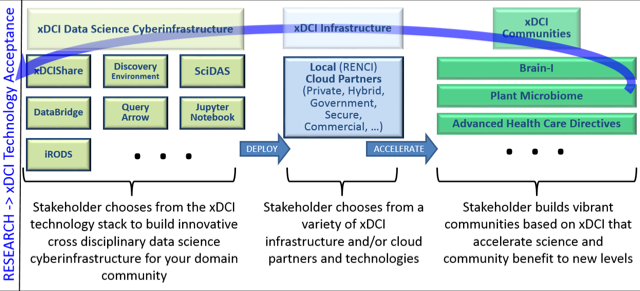

RENCI describes xDCI as a “technology framework that enables their research communities to rapidly deploy robust cyberinfrastructure that can easily ingest, move, share, analyze and archive scientific data in all its varieties.” Besides open source and specific RENCI-developed technologies, the xDCI team also leverages tools such as Docker and HTCondor. The xDCI stack elements at this writing are listed here, and the deployment process is sketched out below:

- iRODS – Open source data management, providing automated data virtualization, data discovery and metadata templates via an integrated rules engine.

- CyVerse DE – Workflows, apps, and analysis environment.

- xDCIshare – Data sharing among team members and with the larger community.

- Jupyter Notebook – Creation and sharing of live code, equations, visualizations and text.

- Query Arrow – Semantically unified SQL and NoSQL query and update system.

- DataBridge – Discovery of relevant datasets and “dark” data.

- SciDAS – Fluid and flexible infrastructure for working with and analyzing large-scale data.

It’s important to note that xDCI offers considerably more than just a set of tools. RENCI is formalizing the effort to help researchers stand up xDCI environments with what it calls a set of “concierge” services. Here is a snapshot of the concierge services, taken from the xDCI web page; bear in mind the process is still taking shape:

- Technology Concierge – The xDCI Information Technology (IT) Concierge staff will work with your team to move your project from concept to a productive and efficient combination of select xDCI technologies, in effect creating a unique xDCI-based architecture for your project.

- Software Concierge – The xDCI Software Concierge staff will serve as technical project managers and work with your team to deploy your project on the appropriate cloud infrastructure. Once deployed, they will work with your team to instill sustainable software best practices to ensure efficient and persistent continuation of your project over time.

- Data Science Concierge – The xDCI Data Science Concierge staff will offer data science expertise in how to extract maximum scientific and community benefit from your deployment of xDCI. These staff are experts in data science analytics and visualization using the latest technologies and methods that will accelerate your community to new levels of achievement.

- Sustainability Concierge – The xDCI Sustainability Concierge staff will assist in the identification of requirements necessary to support future scenarios including migration to new cloud infrastructure, business plans for identifying future funding models, and identification of technology resulting from use of xDCI which may feed back into xDCI itself.

The BRAIN-I project is the most advanced xDCI pilot; it is essentially a cyberinfrastructure for dealing with large image files and supports work by Jason Stein, an assistant professor in the department of genetics at UNC-Chapel Hill and a researcher at UNC’s Neuroscience Center.

The research itself is fascinating. A few years back, researchers developed a method for removing lipid (fatty) tissue from the brain and replacing it with a more transparent gel, producing a kind of “see-through brain.” The work is done with mice. Using light sheet microscopy, researchers can scan thin layers, or sections, of the brain, and different stains highlight different cell types. Obtaining images this way makes it much easier to align sections, trace neuron paths correctly, and create composite 3D images of the brain. If you do this for a set of mice bred to have a particular disease, say Alzheimer’s disease, you can compare the results with images from a set of normal (wild type) mice.

It also turns out, not surprisingly, that handling and processing mouse brain image data is a huge challenge.

“Each of the 3-D images for a mouse brain turns out to be 2-to-4 terabytes and there are multiple images for each experiment. In a typical experiment, they may have bred mice, let’s say 24 bred mice, that have genetic characteristics that they could image. They would have another 24 mice for control wild type mice that they would image. Suddenly for a single experiment you would have several hundred of these images, each of which is 2-4 TB in size. That causes significant problems in terms of data management, in terms of how you do computation on it, how do you analyze on it,” said Krishnamurthy.
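To make that scale concrete, here is a back-of-the-envelope calculation using the figures Krishnamurthy quotes: 24 bred mice, 24 wild-type controls, and 2 to 4 TB per 3-D image. The article does not say how many images are taken per mouse; the value of 6 below is an assumption chosen only so the total lands in the "several hundred" range he describes, and the helper name is hypothetical.

```python
def experiment_footprint(mice_per_group, groups, images_per_mouse, tb_per_image):
    """Return (image count, total terabytes) for one experiment (illustrative model)."""
    n_images = mice_per_group * groups * images_per_mouse
    return n_images, n_images * tb_per_image


# From the quote: 24 bred mice and 24 wild-type controls (2 groups),
# each 3-D image 2 to 4 TB. images_per_mouse=6 is an ASSUMPTION, not a reported figure.
for tb in (2, 4):
    n, total_tb = experiment_footprint(24, 2, 6, tb)
    print(f"{n} images x {tb} TB = {total_tb} TB (about {total_tb / 1000:.1f} PB)")
```

Under these assumptions a single experiment yields 288 images and roughly 0.6 to 1.2 PB of raw data.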

“The images we are analyzing are 1 micron thick, and a cell is about 10 microns, so we have many images with very detailed resolution,” said Stein. “All that data has to go somewhere, but it can’t fit onto individual hard drives. If you want to share an image with a colleague the process can take days or weeks.”
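Stein's point that sharing an image can take days or weeks follows from simple arithmetic. The sketch below uses a hypothetical helper that assumes decimal terabytes (10^12 bytes) and a link running at a stated fraction of its nominal speed; real transfers are often slower still because of protocol overhead and shared bandwidth.

```python
SECONDS_PER_DAY = 86_400


def transfer_days(size_tb, link_mbps, efficiency=1.0):
    """Days to copy size_tb decimal terabytes over a link_mbps megabit/s link.

    efficiency is the fraction of nominal bandwidth actually achieved
    (1.0 means an ideal, fully utilized link, which is optimistic).
    """
    bits = size_tb * 1e12 * 8
    seconds = bits / (link_mbps * 1e6 * efficiency)
    return seconds / SECONDS_PER_DAY


# One 4 TB image over links of increasing nominal speed.
for mbps in (100, 1_000, 10_000):
    ideal = transfer_days(4, mbps)
    half = transfer_days(4, mbps, efficiency=0.5)
    print(f"4 TB at {mbps} Mbit/s: {ideal:.2f} days ideal, {half:.2f} days at 50% efficiency")
```

At 100 Mbit/s a single 4 TB image needs about 3.7 days even under ideal conditions, and roughly a week at half efficiency; multiplied across several hundred images, moving data around becomes the bottleneck.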

Enter RENCI and xDCI.

The BRAIN-I system replicates the 3D microscopy images onto a RENCI server running the integrated Rule-Oriented Data System (iRODS). Once the data is ingested into an iRODS data grid, it is validated, metadata tags are assigned to it, and the relevant inputs and processes are documented to provide a historical record of the data and its origins. Using iRODS, each image can be linked to its biological sample, tracked from its creation in the lab through final analysis, and made discoverable and reproducible for future research.
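The ingest steps described above (validate, tag with metadata, record provenance, link each image to its biological sample) can be sketched in plain Python. This is an illustrative model only, not the iRODS API and not the actual BRAIN-I workflow: the class and function names, the choice of SHA-256 checksums, the logical path, and the sample identifiers are all assumptions. Metadata is modeled as attribute-value-unit triples, mirroring the AVU form iRODS uses for metadata.

```python
import hashlib
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class DataObject:
    """Hypothetical record for one ingested image in the data grid."""
    logical_path: str
    checksum: str
    # Metadata as (attribute, value, unit) triples; unit may be empty.
    avus: list = field(default_factory=list)
    # Ordered log of what was done to the object, and when.
    provenance: list = field(default_factory=list)


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def ingest(logical_path, data, source_checksum, sample_id, extra_avus=()):
    """Validate a replicated copy, tag it, and start its provenance record."""
    # 1. Validate: the replica's checksum must match the one computed at the source.
    replica_checksum = sha256_hex(data)
    if replica_checksum != source_checksum:
        raise ValueError(f"checksum mismatch for {logical_path}")

    obj = DataObject(logical_path, replica_checksum)

    # 2. Tag: link the image to its biological sample, plus any extra metadata.
    obj.avus.append(("sample_id", sample_id, ""))
    obj.avus.extend(extra_avus)

    # 3. Provenance: document the ingest step with a UTC timestamp.
    obj.provenance.append({
        "event": "ingest",
        "time": datetime.now(timezone.utc).isoformat(),
        "checksum": replica_checksum,
    })
    return obj


# Usage with placeholder bytes and a hypothetical path and sample ID.
raw = b"placeholder bytes standing in for a light-sheet volume"
image = ingest(
    "/exampleZone/brain-i/exp01/mouse07.tif",
    raw,
    sha256_hex(raw),  # checksum that would be computed where the image was acquired
    "mouse-07",
    extra_avus=[("section_thickness", "1", "um")],
)
print(image.avus)
```

Keeping validation, tagging, and provenance in one ingest step means no image enters the collection without a verified checksum and a link to the sample it came from.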

Analysis and visualization tools can be used in the BRAIN-I system thanks to a collaboration with CyVerse, a cyberinfrastructure initiative based at the University of Arizona that offers an easy-to-use, web-based interface for handling computing and data analysis. Using the CyVerse Discovery Environment (DE), BRAIN-I users can launch analysis codes that are packaged as Docker images. Docker is a platform that packages software into a lightweight container that includes everything needed to run the software and is guaranteed to work the same regardless of who runs it, their location or the type of computer they are using.[i]

xDCI also helped BRAIN-I researchers develop a custom microscopy data ingest workflow. Image analysis takes place on RENCI HPC resources and leverages GPUs there, but in principle an xDCI community could be stood up anywhere and work with its preferred compute resources. RENCI also has access to a duplicate system at UNC’s Information Technology Services that has more GPUs. “We have the two locations but technically we could reach into any place that computing resources are available,” said Krishnamurthy.

RENCI director Stan Ahalt and Krishnamurthy emphasize that the long-term goals for xDCI are broad. Think of xDCI as a software stack for data-driven science that lets users select what suits their community’s needs. It’s even possible for experienced users to simply download xDCI components. “That has happened. As an example, the National Cancer Institute is using some of the components to manage their data internally and use them,” said Ahalt.

The use cases vary. The BRAIN-I infrastructure includes automated data ingest, image analysis and analytics, automated data management, data discovery, data publication, and cross-institutional collaboration. For another xDCI pilot, My Health Peace of Mind (MyHPOM), an online system to support sharing advanced health care directives, the primary use cases include document sharing, document versioning, individual and group access controls, comments and ratings on documents, secure storage and archiving, and cross-institutional collaboration.

While these efforts are life-sciences-centric, the intent is to accommodate any domain. In fact, along those lines, RENCI borrowed work from another NSF-funded project, HydroShare, a physical sciences effort, for use in xDCI. HydroShare[ii] is part of the NSF’s Software Infrastructure for Sustained Innovation (SI2) program; its goal is “to enable scientists to easily discover and access hydrologic data and models, retrieve them to their desktop, or perform analyses in a distributed computing environment that may include grid, cloud, or high performance computing.”

Ray Idaszak, RENCI HydroShare project lead and co-leader of xDCI, said, “The HydroShare software has about a half million lines of code, required quite a bit of NSF funding, and had ten teams contribute to it. We’re taking that code base and we are generalizing it and we are making it part of the xDCI technology stack. So for example the advanced healthcare project will be based on HydroShare code that has been repurposed so it can serve a completely different orthogonal community.”

NSF funding for the BRAIN-I pilot is scheduled to run for another year, and RENCI is seeking additional funding for xDCI. One open question is how large xDCI’s ambitions should be. Much of the user base may end up being in the North and South Carolina region that RENCI frequently serves, but, as Ahalt noted, there’s no reason xDCI couldn’t be used widely, perhaps even by commercial entities. RENCI’s core mission[iii] is more academic and includes developing and deploying advanced computing to support research as well as conducting research itself.

“This idea of doing things as a service is a little bit outside of our wheelhouse; however, we have sought guidance from our leadership and they are willing to have us at least consider that as a different kind of model. That’s kind of where we’re headed. We don’t necessarily want to claim we’re there,” said Ahalt. “If Exxon, and I am making this up, if Exxon talked to us and asked us to stand up the infrastructure to manage all of their data, I’m not sure we’d be in a position to be able to service that request very quickly, although with some time lag we could start thinking about that. In every case what we are looking for first is a research engagement.”

“If there was a reason why Exxon wanted us to do it and they were also interested in having us look at some of their seismic data, for example, then it is a research engagement. It’s just this pure service model is doable but it’s not the typical thing an academic institution would do.”

Broadly, xDCI is part of the shift in advanced computing that combines HPC, data analytics, and deep learning, all of which frequently require different infrastructure capabilities. Efforts to harmonize them are ongoing, spanning everything from the most demanding efforts, such as the U.S. Exascale Computing Project, to traditional enterprise systems, cloud computing, and IoT.

“I don’t want to go too far philosophical but I do think that we are entering a new phase of how computation interacts with data and it’s a little bit hard to explain exactly what I am thinking. In the past, HPC, a lot of HPC not all, has focused on most extensive core use, simulation. I think that will continue, but I also think increasingly the simulation will be in the context of newly minted data and by newly minted I mean data that is both incoming because it is being collected by sensors or by other types of surveillance or whatever and data that is discovered as the computation proceeds, so almost as a consequence of steering the computation. I think this ecosystem of data and HPC are going to coexist in some interesting ways,” said Ahalt.

One stark example, noted Ahalt, is the massive computational effort mounted to help deal with Hurricane Harvey and its aftermath in Texas.

“What if all those were interlinked and you could do some centralized steering among all of those simulations, you know discover where to put bottles of water, how to reroute ships to find alternative ports to offload sugar cane, identify escape routes for individuals. All of these things are at least technically plausible. I think we are increasingly moving to a system that allows us to think about that type of highly interconnected HPC and rich data sources where the programmer may not have said ‘I also need to go find out about the weather in Wisconsin’ because it just happens it’s a feeder to the Mississippi. What I am saying it is an interconnected universe.”

“If you think about knowledge networks, it is not just deep learning although deep learning is also a central part, but it is the literal discovery process of relationships between disparate datasets that is starting to emerge as a force in science.”

[i] Explanation excerpted from the RENCI article on the BRAIN-I project, http://renci.org/news/brain-i-project-uses-renci-cyberinfrastructure-called-xdci-to-manage-brain-microscopy-images/

[ii] The HydroShare project will expand the data sharing capabilities of the Hydrologic Information System (HIS) of the Consortium of Universities for the Advancement of Hydrologic Science, Inc. (CUAHSI), a nonprofit research organization representing about 130 U.S. universities and international water science-related organizations.

[iii] RENCI (Renaissance Computing Institute) develops and deploys advanced technologies to enable research discoveries and practical innovations. RENCI partners with researchers, government, and industry to engage and solve the problems that affect North Carolina, our nation, and the world. An institute of the University of North Carolina at Chapel Hill, RENCI was launched in 2004 as a collaboration involving UNC Chapel Hill, Duke University, and North Carolina State University.