Unicorn is a parallel programming framework that provides a simple way to program multi-node clusters with CPUs and GPUs, and potentially other compute devices. Its innovative runtime allows these programs to run efficiently even when connected by a network with limited capabilities. Unicorn supports heterogeneous clusters, allowing incremental and gradual assembly of clusters. At the same time it exposes a unified abstract programming model that easily adapts to such an evolving cluster.

Unicorn enables a universal shared address space, which need not be partitioned in a user-visible way. The user program remains agnostic to the data placement or the address space location and its distribution, making for a familiar programming style. In fact, it is possible to simply “plug in” already optimized and tested pre-existing sequential code, multithreaded code, MPI code or CUDA kernel into the Unicorn framework, assuming “locally available” memory. Even new code development can occur mainly in a local sandbox before plugging it into the parallel Unicorn framework and distributing the computation across the cluster. The user code never contains explicit data transfers or task scheduling. Under the hood, Unicorn simply makes it happen with the help of an efficient distributed data directory, pre-fetching data and overlapping the communication with other computation. However, the current implementation does rely on explicitly user hints about which section of the universal address space is of interest to which part of the code. On-demand fetching of address space is also possible but less efficient. This association between address space segments and subtasks can be inferred automatically in the future.

The other important innovation in Unicorn is to defer synchronizations in a well defined, user controlled, isolated step in a bulk-synchronous manner. As a result, the usual pain associated with non-deterministic shared memory access and data races is simply goes away. Unicorn makes this possible with the help of a check-in and check-out memory semantics with explicit conflict resolution in the synchronization step. This synchronization step includes a rich set of primitive for the conflict resolution and data agglomeration.

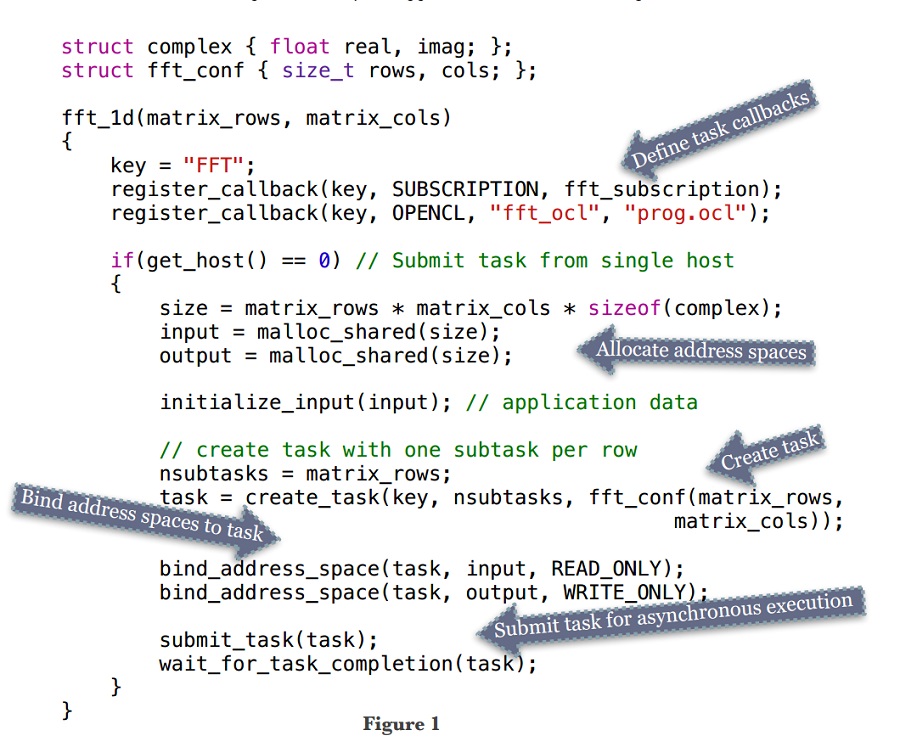

In the Unicorn framework an application is organized into multiple tasks that can have complex dependencies among them. Each task is in turn organized into many independent subtasks. A subtask is implemented by the application — and can be sequential code, OpenCL or CUDA kernel, or other parallel code. Unicorn schedules each subtask dynamically on a subset of available devices capable of computing that subtask, while balancing device load and monitoring network latency. A subtask may contain multiple code variants, each optimized for a specific subset of devices in the cluster, and Unicorn chooses the right variant for the device it eventually schedules the subtask on. As an example, Figure 1 demonstrates what a simple Unicorn application looks like.

Unicorn runtime is optimized for relatively coarse-grained subtasks, which compute more often (even though the data may be anywhere in the universal address space) and synchronize only occasionally. It uses many ideas like hierarchical pro-active load stealing, lock-free directory management, network load prediction, memory-compute matching, etc. This work is a part of the PhD thesis of Tarun Beri. More details are available at http:// www.cse.iitd.ac.in/~subodh/unicorn.html.

Subodh Kumar, Professor, IIT Delhi, is presenting at GTC18 in San Jose this week. His session, “S8565 – Programming a Hybrid CPU-GPU Cluster using Unicorn,” takes place Thursday, March 29, at 2pm.