Don’t look now, but GPUs are gobbling up big workloads as massive data-crunching AI use cases proliferate around the world. That news couldn’t be sweeter for Nvidia, which is hosting its ninth annual GPU Technology Conference (GTC) this week in San Jose, California.

GPUs have come a long way from the early 1990s, when Jensen Huang, Chris Malachowsky, and Curtis Priem founded Nvidia. At the time, rendering lifelike graphics was one of the toughest computational challenges, so the trio decided to tackle the development of a massively multicore processor that could offload the graphics work from the main CPU.

The company’s GPUs became hot commodities among a certain demographic, i.e. young male video game enthusiasts. But making first-person shooters pop doesn’t explain how Nvidia’s stock has risen 1,900 percent over the past five years and made the Santa Clara company a $150 billion giant.

Rise of GPUs

We’ve known for some time that GPUs are good at things other than driving high-quality graphics for video games. In the mid-2000s, the high-performance computing (HPC) crowd discovered how much faster GPUs are at math than CPUs, and so they started building them into supercomputers as accelerators.

More recently, Web giants like Google have discovered that GPUs are good at something else: deep learning.

By training neural networks on massive amounts of data, hyperscalers discovered they could get an iterative improvement on machine learning tasks like image recognition and natural language processing (NLP). GPUs aren’t required for training neural networks, but they sure can make the training run faster.

Nvidia isn’t the only player in the emerging deep learning world, but it’s one of the central figures that have made the deep learning revolution possible. What’s more, its GTC conferences are showcases for what’s becoming possible with the combination of GPUs and AI.

The rise of deep learning has given artificial intelligence (AI) a shot in the arm and opened the door to using computers to solve problems that previously were deemed unsolvable. Without GPU-powered deep learning, we would not have had a mad rush to develop the first truly autonomous car. The number of other use cases for deep learning — in radiology, pharmacology, bioinformatics, security, and more — continues to grow on a weekly basis.

Not every session at GTC this week is about AI, but it’s safe to say that a majority of them are. “This has become one of the premier conferences in the world around AI,” Greg Estes, vice president of developer programs at Nvidia, said in a press briefing yesterday.

More than 8,000 people have registered for the four-and-a-half-day GTC conference, which is taking place at the San Jose McEnery Convention Center, a few miles from Nvidia’s headquarters. According to Estes, the number could exceed 9,000, which would blow away last year’s attendance of 6,500.

‘Meteoric Rise’

Ian Buck, vice president and general manager of accelerated computing at Nvidia, shared some impressive figures about the rise of GPUs around the world.

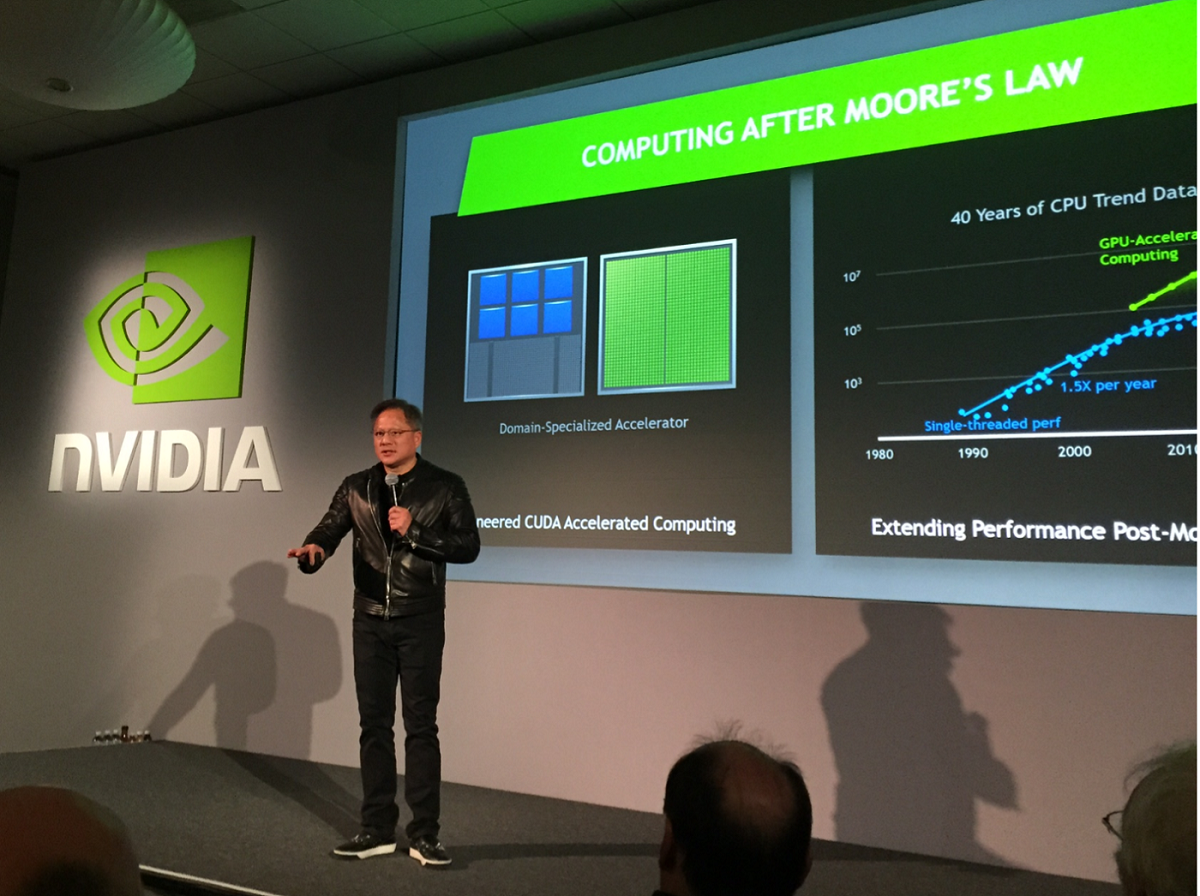

When Nvidia launched its first datacenter product in 2006, a single Nvidia GPU boasted a 4x performance advantage over a comparable CPU. With the “Volta” V100s installed in supercomputers today, the advantage has widened considerably.

“We are now 20x faster than a comparable CPU node. That’s 1.7x faster year-over-year performance, clearly outstripping Moore’s law,” he said.

What’s more, GPUs will power 50 percent of the floating point computational horsepower for the top 50 supercomputers in the world. “That’s a 15x increase in five years from where we were,” he continued. “We’re obviously experiencing a meteoric rise in GPU computing and acceleration.”
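For a sense of scale, the growth figures Buck quotes can be put on a common per-year footing. The short Python sketch below is only an illustration, not Nvidia's own calculation: it assumes steady compound growth, and the helper names `annualized_factor` and `compounded` are made up for this example. The Moore's law line assumes the common "doubling every two years" reading, which the article itself does not specify.

```python
def annualized_factor(total_factor: float, years: float) -> float:
    """Constant yearly multiplier that compounds to total_factor over `years`.

    Assumes steady compound growth, which is a simplification.
    """
    return total_factor ** (1.0 / years)


def compounded(yearly_factor: float, years: float) -> float:
    """Total multiplier after `years` of growth at a constant yearly_factor."""
    return yearly_factor ** years


if __name__ == "__main__":
    # The quoted 15x increase over five years, expressed per year.
    print(f"15x over 5 years  ~ {annualized_factor(15, 5):.2f}x per year")
    # The quoted 1.7x year-over-year figure, compounded over five years.
    print(f"1.7x/year, 5 years ~ {compounded(1.7, 5):.1f}x total")
    # Assumed Moore's law pace: 2x every two years, expressed per year.
    print(f"2x every 2 years  ~ {annualized_factor(2, 2):.2f}x per year")
```

Under those assumptions, a 15x gain in five years works out to roughly 1.7x per year, while a doubling every two years is closer to 1.4x per year.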

That type of growth would not have been possible if Nvidia hadn’t gambled on big changes in how it develops hardware and the software that exploits that hardware, Buck said.

“It really speaks to how we are innovating and how we think about our products and how we think about … the market,” Buck said. “We change our architectures. We change our instruction sets. We’re not afraid to do so because we’re actually developing a new kind of … accelerated computing model, that’s breaking the old rules and running this … faster than Moore’s law.”

Deep learning is delivering huge gains on AI workloads. According to Buck, deep learning has delivered a 190x performance boost for image recognition compared to traditional machine learning methods. A neural language translator saw a 50x boost. Speech recognition is up 60x, while voice synthesis (or voice generation) has improved 36x.

While the HPC market may have given Nvidia enterprise credibility, it’s the rise of AI and deep learning that seems to have investors excited. The company is working closely with nearly every major tech company, from IBM and SAP to Facebook and Google, to exploit the advantages that its GPU architecture provides for powering neural networks.

The first generation of deep learning systems revolved around two main problems: image recognition and NLP. We’re now seeing deep learning being applied to a broader range of challenges, and Nvidia is at the forefront in making it happen with its GPUs and software frameworks.

“As these neural networks are getting smarter, they’re doing more things. They’re applying new use cases, they’re getting larger,” Buck said. “Naturally they have to do more and get more accurate. They’re growing.”

Nvidia CEO Huang takes the stage this morning to deliver his highly anticipated keynote address. In the past, Nvidia has used the keynote to make major announcements, like unveiling new GPUs. But far from just selling super-fast GPUs, the company is positioning itself to be a full-stack platform provider, and the shepherd of an ecosystem that’s emerging around AI and deep learning. With hundreds of partners, Nvidia is poised to be a key player as AI use cases expand, and GTC is its showcase for that work.

It’s all about creating “a different kind of computing,” Buck said. “We don’t try to build a processor that tries to run a fixed instruction set. We’re building an entire platform and innovating in all layers of that stack.”

A version of this article originally appeared on our sister site, Datanami.