Nvidia unveiled a raft of new products at its annual technology conference in San Jose today, and as expected, there was no major new silicon announcement (or any mention of what’s next on the roadmap). Chip consumers can only handle so many refreshes and the V100 just came out in September – but Nvidia did have a few surprises in store for HPC hardware aficionados. The de facto server maker is announcing a higher-memory V100, an upgraded DGX server and, most impressively, Nvidia’s first-ever switch technology.

In front of nearly 8,000 attendees at the San Jose Convention Center, in a two-and-a-half-hour keynote, Nvidia CEO Jensen Huang announced that the company is upgrading its Tesla V100 products (SXM and PCIe modules) to 32 GB of memory each, a 2X boost, to help data scientists train deeper, larger models and to boost the performance of memory-constrained HPC applications.

The original V100 was a 16 GB GPU with a 4-hi stack of HBM2 memory; now the chip has an 8-hi stack of HBM2. All the other specs are the same (floating point performance, CUDA cores, thermals, electricals), which will be a relief to channel partners and end users who just made investments in the V100 and related components. The larger-memory GPU helps with larger networks, enabling larger batch sizes and more training in parallel. Nvidia said it’s seeing neural machine translation and large-scale FFTs, the latter commonly used in the oil and gas industry and signal processing, executing about 50 percent faster.

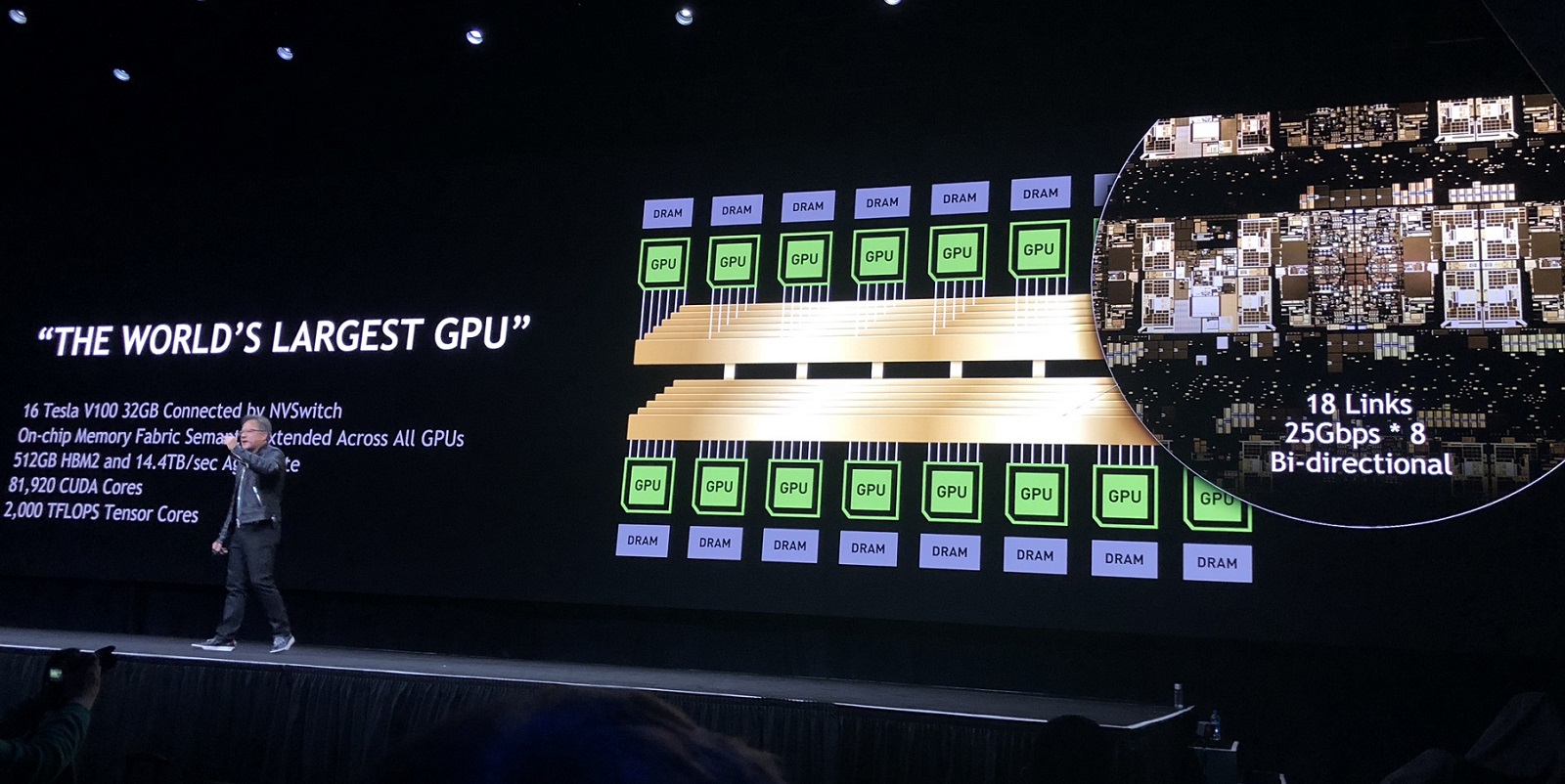

Huang also unveiled the 16-GPU DGX-2 server – and like the ‘2’ in the name, the box delivers two petaflops at half-precision (FP16), twice the computational amperage of the first-iteration eight-GPU DGX-1 unit. So how did Nvidia pack 16 NVLinked GPUs into one server if the V100 GPUs only have 6 ports? Well, that brings us to the next part of the hardware reveal: the NVLink Switch, or NVSwitch.

The NVSwitch extends the innovations of Nvidia’s NVLink interconnect and offers 5x higher bandwidth “than the best PCIe switch,” according to Nvidia. It’s an 18-port fully connected crossbar switch (comprised of 12 switch ASICs) that allows users to build an NVLink fabric. Each port delivers 50 GB/sec for a total of 900 GB/sec of aggregate NVLink bi-directional bandwidth in a single device. “It is a fully-connected crossbar internally – at every port connected to every other port at full speed,” said Ian Buck in a pre-announcement press briefing yesterday. “We spared no expense on the design of this to make sure we would never be limited by GPU to GPU communication.”
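The aggregate figure follows directly from the per-port number. A minimal sketch of that arithmetic, using only the port count and per-port rate quoted above (the function and constant names are illustrative, not anything from Nvidia):

```python
# Figures quoted in the article for a single NVSwitch.
PORTS_PER_SWITCH = 18
GB_PER_SEC_PER_PORT = 50  # bi-directional rate per port


def aggregate_bandwidth_gb_per_sec(ports: int, per_port: int) -> int:
    """Sum the per-port bandwidth across every port on one switch."""
    return ports * per_port


total = aggregate_bandwidth_gb_per_sec(PORTS_PER_SWITCH, GB_PER_SEC_PER_PORT)
print(f"{total} GB/sec aggregate per switch")
```

Because the crossbar is described as non-blocking, the sum of the port rates is a reasonable way to read the headline number; it says nothing about what a real workload will sustain.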

The DGX-2 is a monster. The 10U, 350 lb mega-server houses two boards, each with eight 32 GB V100s and six NVSwitches, enabling the GPUs to communicate at a record 2.4 TB per second. All those GPUs and switches consume a lot of power, and the entire machine can burn a turbine-spinning 10,000 watts.
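One plausible way to arrive at the 2.4 TB per second figure is to read it as bisection bandwidth, that is, the traffic that can cross between the two eight-GPU boards at once. That reading is an assumption, as is the premise that each GPU's NVLink ports run at the same 50 GB/sec bi-directional rate quoted for the switch ports. Under those assumptions the arithmetic works out as follows (names are illustrative):

```python
GPUS_PER_BOARD = 8         # from the article: eight V100s on each of two boards
NVLINK_PORTS_PER_GPU = 6   # from the article: each V100 has six NVLink ports
GB_PER_SEC_PER_PORT = 50   # assumption: GPU ports match the quoted switch port rate


def bisection_bandwidth_tb_per_sec(gpus_per_half: int,
                                   ports_per_gpu: int,
                                   per_port_gb: int) -> float:
    """Bandwidth available if every link on one half talks to the other half."""
    return gpus_per_half * ports_per_gpu * per_port_gb / 1000


bisection = bisection_bandwidth_tb_per_sec(
    GPUS_PER_BOARD, NVLINK_PORTS_PER_GPU, GB_PER_SEC_PER_PORT
)
print(f"{bisection} TB/sec across the two boards")
```

Summing all 16 GPUs instead of one half would double the result, which is why the bisection reading is the one that matches the quoted number.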

The server supports either InfiniBand or 100G Ethernet and increases the system memory to 1.5 TB (LRDIMM), up from 512 GB. It has two Intel Xeon Platinum (Skylake-SP) CPUs and 30 TB of NVMe SSDs, expandable up to 60 TB. Since not every user wants to take advantage of all 16 GPUs all the time, and to make the product cloud-friendly, Nvidia is announcing full KVM support, so the system can either run all 16 GPUs with NVSwitch or be segmented down to a single GPU.

Nvidia announced that with DGX-2 it has taken the training time of FAIRSeq, a neural machine translation model, from 15 days (on the V100-equipped DGX-1) down to 1.5 days, a 10x improvement in six months. Nvidia claims that it would take the equivalent of about 300 Skylake servers to match the performance of that single server.

The price tag for the DGX-2 server is $399,000 and availability is scheduled for the third quarter.

The 32GB V100 GPU is available immediately across Nvidia’s entire DGX portfolio and it will also be available from major computer manufacturers, including IBM, Cray, Hewlett Packard Enterprise, Lenovo, Supermicro and Tyan. Oracle announced that it will offer Tesla V100 32GB in Oracle Cloud Infrastructure in the second half of 2018.

Nvidia also announced it has updated its deep learning stack with new versions of Nvidia CUDA, TensorRT, NCCL and cuDNN. Nvidia said it has crossed the 8 million mark for total number of CUDA downloads, more than half of those in the last year.