On Monday, the United States Department of Energy announced its intention to procure up to three exascale supercomputers at a cost of up to $1.8 billion with the release of the much-anticipated CORAL-2 request for proposals (RFP). Although funding is not yet secured, the anticipated budget range for each system is significant: $400 million to $600 million per machine including associated non-recurring engineering (NRE).

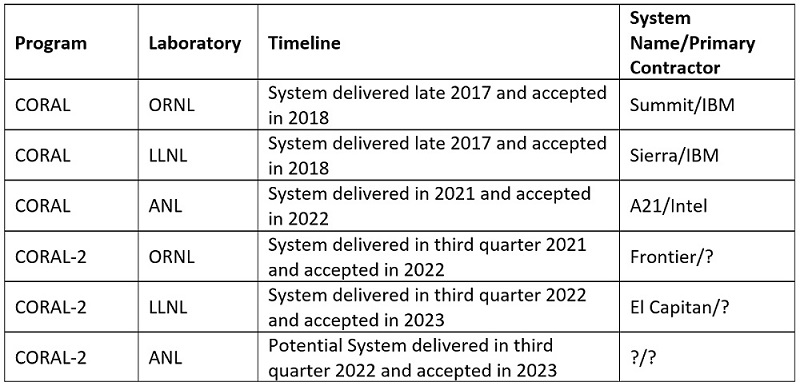

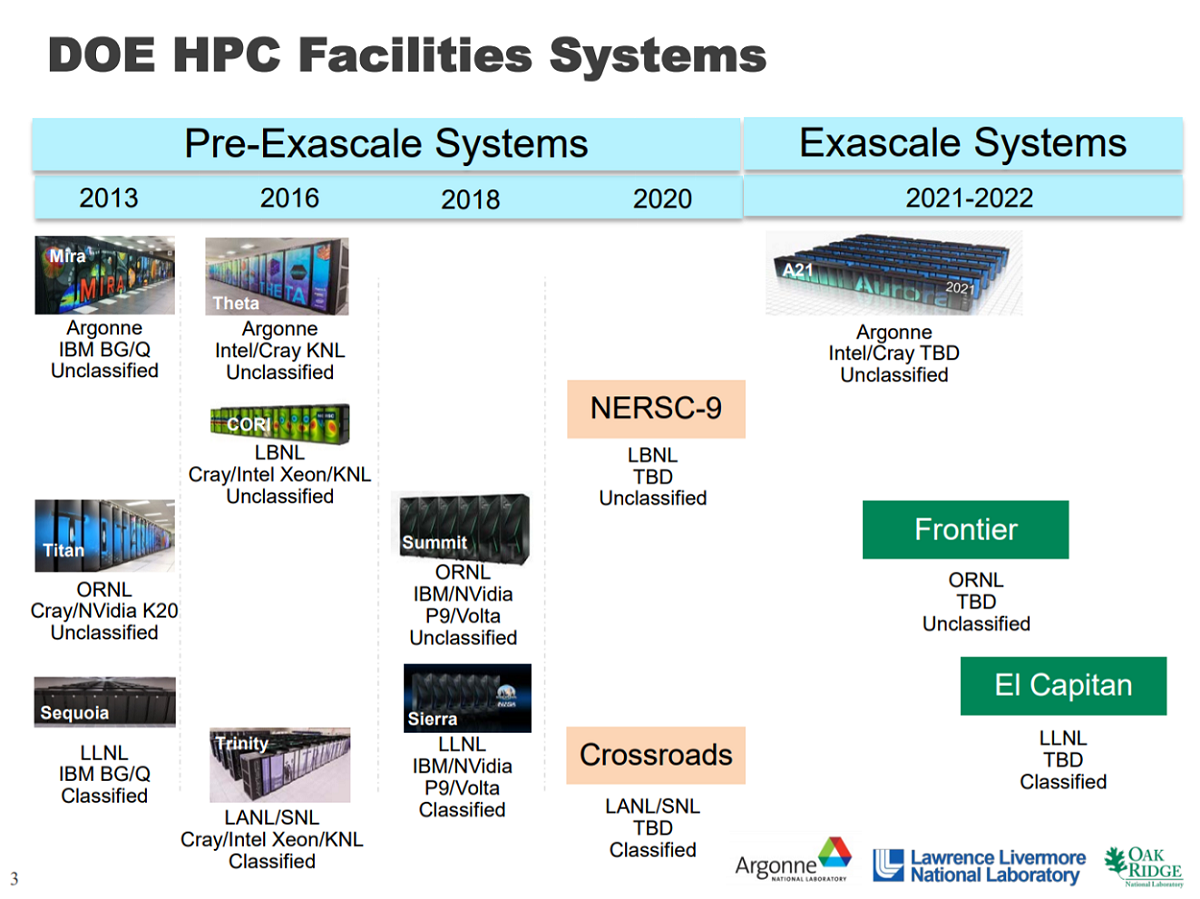

CORAL of course refers to the joint effort to procure next-generation supercomputers for the Department of Energy’s National Laboratories at Oak Ridge, Argonne, and Livermore. The fruits of the original CORAL RFP include Summit and Sierra, ~200 petaflops systems being built by IBM in partnership with Nvidia and Mellanox for Oak Ridge and Livermore, respectively, and “A21,” the retooled Aurora contract with prime Intel (and partner Cray), destined for Argonne in 2021 and slated to be the United States’ first exascale machine.

The heavyweight supercomputers are required to meet the mission needs of the Advanced Scientific Computing Research (ASCR) Program within the DOE’s Office of Science and the Advanced Simulation and Computing (ASC) Program within the National Nuclear Security Administration.

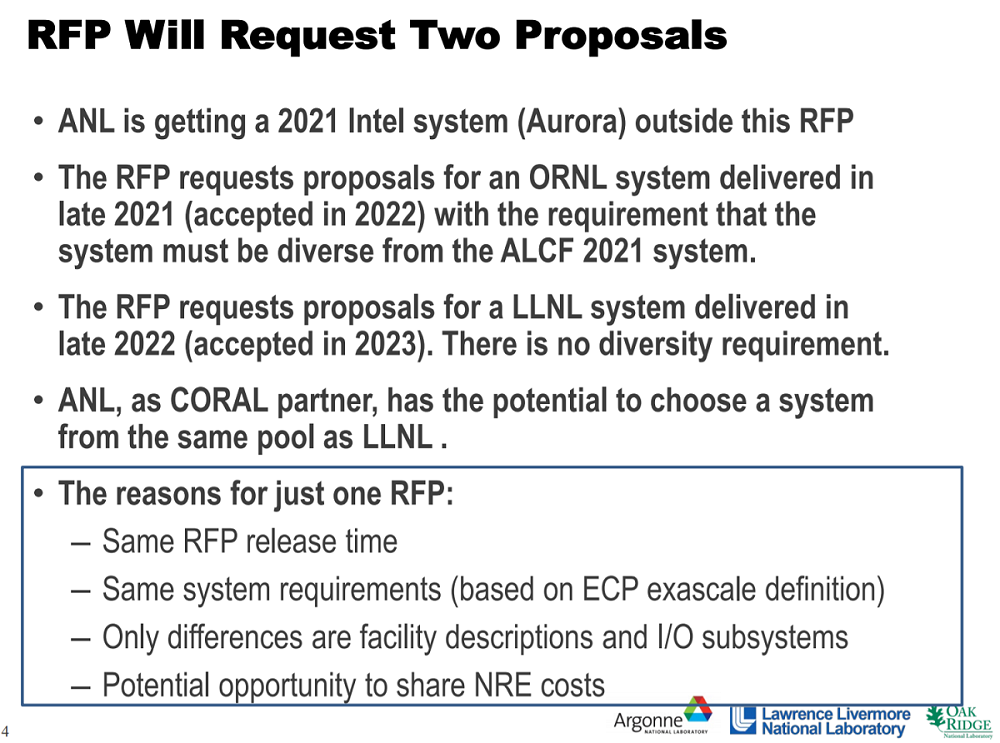

The CORAL-2 collaboration specifically seeks to fund non-recurring engineering and up to three exascale-class systems: one at Oak Ridge, one at Livermore and a potential third system at Argonne if it chooses to make an award under the RFP and if funding is available. The Exascale Computing Project (ECP), a joint DOE-NNSA effort, has been organizing and leading R&D in the areas of the software stack, applications, and hardware to ensure “capable,” i.e., productively usable, exascale machines that can solve science problems 50x faster (or solve more complex problems) than today’s ~20-petaflops DOE systems (i.e., Sequoia and Titan). In terms of peak Linpack, 1.3 exaflops is the “desirable” target set by the DOE.

Like the original CORAL program, which kicked off in 2012, CORAL-2 has a mandate to field architecturally diverse machines in a way that manages risk during a period of rapid technological evolution. “Regardless of which system or systems are being discussed, the systems residing at or planned to reside at ORNL and ANL must be diverse from one another,” notes the CORAL-2 RFP cover letter [PDF]. Sharpening the point, that means the Oak Ridge system must be distinct from A21 and from a potential CORAL-2 machine at Argonne. It is conceivable, then, that this RFP may result in one, two or three different architectures, depending of course on the selections made by the labs and whether Argonne’s CORAL-2 machine comes to fruition.

“Diversity,” according to the RFP documents, “will be evaluated by how much the proposed system(s) promotes a competition of ideas and technologies; how much the proposed system(s) reduces risk that may be caused by delays or failure of a particular technology or shifts in vendor business focus, staff, or financial health; and how much the proposed system(s) diversity promotes a rich and healthy HPC ecosystem.”

Here is a listing of current and future CORAL machines:

Proposals for CORAL-2 are due in May with bidders to be selected later this year. Acquisition contracts are anticipated for 2019.

If Argonne takes delivery of A21 in 2021 and deploys an additional machine (or upgrade) in the third quarter of 2022, it would be fielding two exascale machines/builds in less than two years.

“Whether CORAL-2 winds up being two systems or three may come down to funding, which is ‘expected’ at this point, but not committed,” commented HPC veteran and market watcher Addison Snell, CEO of Intersect360 Research. “If ANL does not fund an exascale system as part of CORAL-2, I would nevertheless expect an exascale system there in a similar timeframe, just possibly funded separately.”

Several HPC community leaders we spoke with shared more pointed speculation on what the overture for a second exascale machine at Argonne so soon on the heels of A21 may indicate, insofar as there may be doubt about whether Intel’s “novel architecture” will satisfy the full scope of DOE’s needs. Given the close timing and the reality of lengthy procurement cycles, the decision on a follow-on will have to be made without the benefit of experience with A21.

Rick Stevens, Argonne’s Associate Laboratory Director for Computing, Environment and Life Sciences, commenting for this piece, underscored the importance of technology diversity and shed light on Argonne’s thinking. “We are very interested in getting as broad range of responses as possible to consider for our planning. We would love to have multiple choices to consider for the DOE landscape including exciting options for potential upgrades to Aurora,” he said.

If Intel, working with Cray, is able to fulfill the requirements for a 1-exaflops A21 machine in 2021, the pair may be in a favorable position to fulfill the more rigorous “capable exascale” requirements outlined by ECP and CORAL-2.

The overall bidding pool for CORAL-2 is likely to include IBM, Intel, Cray and Hewlett Packard Enterprise (HPE); upstart system-maker Nvidia may also have a hand to play. HPE could come in with a GPU-based machine or an implementation of its memory-centric architecture, known as The Machine. In IBM’s court, the successor architectures to Power9 are no doubt being looked at as candidates.

And while it’s always fun dishing over the sexy processing elements (with flavors from Intel, Nvidia, AMD and IBM on the tasting menu), Snell pointed out it is perhaps more interesting to prospect the interconnect topologies in the field. “Will we be looking at systems based on an upcoming version of a current technology, such as InfiniBand or OmniPath, or a future technology like Gen-Z, or something else proprietary?” he pondered.

Stevens weighed in on the many technological challenges still at hand, ranging from memory capacity and power consumption to systems balance, but he noted that, fortunately, the DOE has been investing in these areas for many years, through the PathForward program and its predecessors, created to foster the technology pipeline needed for extreme-scale computing. It’s no accident or coincidence that we’ve already run through all the names in the current “Forward” program: AMD, Cray, HPE, IBM, Intel, and Nvidia.

“Hopefully the vendors will have some good options for us to consider,” said Stevens, adding that Argonne is seeking a broad set of responses from as many vendors as possible. “This RFP is really about opening up the aperture to new architectural concepts and to enable new partnerships in the vendor landscape. I think it’s particularly important to notice that we are interested in systems that can support the integration of simulation, data and machine learning. This is reflected in both the technology specifications as well as the benchmarks outlined in the RFP.”

Other community members also shared their reactions.

“It is good to see a commitment to high-end computing by DOE, though I note that the funding has not yet been secured,” said Bill Gropp, director of the National Center for Supercomputing Applications (NCSA) at the University of Illinois at Urbana-Champaign (home to the roughly 13-petaflops Cray “Blue Waters” supercomputer). “What is needed is a holistic approach to HEC; this addresses the next+1 generation of systems but not work on applications or algorithms.”

“What stands out [about the CORAL-2 RFP] is that it doesn’t take advantage of the diversity of systems to encourage specialization in the hardware to different data structure/algorithm choices,” Gropp added. “Once you decide to acquire several systems, you can consider specialization. Frankly, for example, GPU-based systems are specialized; they run some important algorithms very well, but are less effective at others. Rather than deny that, make it into a strength. There are hints of this in the way the different classes of benchmarks are described and the priorities placed on them [see page 23 of the RFP’s Proposal Evaluation and Proposal Preparation Instructions], but it could be much more explicit.

“Also, this line on page 23 stands out: ‘The technology must have potential commercial viability.’ I understand the reasoning behind this, but it is an additional constraint that may limit the innovation that is possible. In any case, this is an indirect requirement. DOE is looking for viable technologies that it can support at reasonable cost. But this misses the point that using commodity (which is how commercial viability is often interpreted) technology has its own costs, in the part of the environment that I mentioned above and that is not covered by this RFP.”

Gropp, who is awaiting the results of the NSF Track 1 RFP that will award the follow-on to Blue Waters, also pointed out that NSF has only found $60 million for the next-generation system, and has (as of November 2017) cut the number of future Track 2 systems to one. “I hope that DOE can convince Congress to not only appropriate the funds for these systems, but also for the other science agencies,” he said.

Adding further valuable insight into the United States’ strategy to field next-generation leadership-class supercomputers, especially with regard to the “commercial viability” precept, is NNSA Chief Scientist Dimitri Kusnezov. Interviewed at the Supercomputing Frontiers Europe 2018 conference in Warsaw, Poland, last month, Kusnezov characterized DOE and NNSA’s $258 million funding of the PathForward program as “an investment with the private sector to buy down risk in next-generation technologies.”

“We would love to simply buy commercial,” he said. “It would be more cost-effective for us. We’d run in the cloud if that was the answer for us, if that was the most cost-effective way, because it’s not about the computer, it’s about the outcomes. The $250 million [spent on PathForward] was just a piece of ongoing and much larger investments we are making to try and steer, on the sides, vendor roadmaps. We have a sense where companies are going. They share with us their technology investments, and we ask them if there are things we can build on those to help modify it so they can be more broadly serviceable to large scalable architectures.

“$250 million dollars is not a lot of money in the computer world. A billion dollars is not a lot of money in the computer world, so you have to have measured expectations on what you think you can actually impact. We look at impacting the high-end next-generation roadmaps of companies where we can, to have the best output. The best outcome for us is we invest in modifications, lower-power processors, memory closer to the processor, AI-injected into the CPUs in some way, and, in the best case, it becomes commercial, and there’s a market for it, a global market ideally because then the price point comes down and when we build something there, it’s more cost-effective for us. We’re trying to avoid buying special-purpose, single-use systems because they’re too expensive and it doesn’t make a lot of sense. If we can piggyback on where companies want to go by having a sense of what might ultimately have market value for them, we leverage a lot of their R&D and production for our value as well.

“This investment we are doing buys down risk. If other people did it for us that would even be better. If they felt the urgency and invested in the areas we care about, we’d be really happy. So we fill in the gaps where we can. …But ultimately it’s not about the computer, it’s really about the purpose…the problems you are solving and do they make a difference.”