Precisely modeling climate and weather is a crucial first step in understanding how to mitigate and adapt to climate change – for example, researchers are using state-of-the-art supercomputers to simulate the hurricanes of a warmer world. But a new paper by a team of European researchers claims that even as scientists’ conceptual and mathematical grasp of climate modeling rapidly accelerates, a discrepancy is developing that threatens their practical ability to perform those calculations.

The looming chasm

Weather and climate models are labyrinthine beasts – massively complex pieces of software that sometimes employ millions of lines of code, retooled and jerry-rigged by highly specialized teams of researchers over many years. These models have historically been designed to run on very specific hardware in order to maximize performance – and until recently, that was a viable strategy.

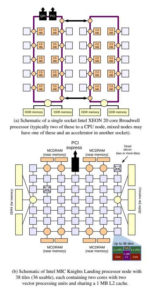

For a long time, the computing environment for climate modeling was stable, primarily consisting of systems with multicore x86 processors using MPI for parallelism. When researchers needed more power for their increasingly complex climate models, they leaned on the ever-faster clock speeds of next-generation processors.

But with the loss of Dennard scaling and the slowdown in Moore’s law, the physical limits of silicon and ever-growing power consumption costs are forcing manufacturers to find those performance gains elsewhere. As a result, that comfortable history of computational similarity is giving way to an increasingly heterogeneous computing environment that involves ARM server chips, GPU-based architectures, FPGAs, and much more.

Thus we approach what the paper’s authors, led by Dr. Bryan N. Lawrence, term a “chasm” in climate modeling: the gap between the rapidly iterated, hardware-specific code that currently exists and the new, powerful technology that it cannot exploit. Incremental improvements in the existing code will not be sufficient – in most climate models, the code has been optimized at the expense of adaptability.

This “chasm” leaves climate model developers in an apparent catch-22. Developers could begin work on a more adaptable climate model – but the process would take many years and come at the expense of critical ongoing science. Alternatively, developers could continue to iterate the existing code – but they would be using increasingly obsolete and inefficient hardware. Even national-level climate model programs, the paper suggests, are unlikely to be able to pursue both goals independently.

Bridging the gap

The solution, the authors suggest, is an open-source, collaborative approach based on a separation of concerns: essentially, dividing the work between a number of cooperating organizations and institutions. This would be a drastic process change – they note that historically, the weather and climate communities have done a poor job of sharing tools, and many of the existing models use code that leans on the in-house shorthand of their developers.

The new system would be highly modularized, with discrete tools and libraries that could be developed by separate teams and which would be able to evolve independently of one another. This, they say, would reduce the “chasm” to a series of coding leaps between “stable platforms.” Developing a model with such a strong separation of concerns would require many other adjustments – a robust sharing infrastructure, a clear taxonomy, and so on.

Critically, it would also require domain-specific languages (DSLs). Much of the existing code relies on libraries and compiler directives (such as OpenMP) because those tools are easier to adjust for climate modeling, while generic parallelization tools are less optimized. The construction of a DSL would allow scientific programmers to interact with a leaner, more intuitive language designed specifically to handle the calculations common in climate and weather modeling.
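To make the separation-of-concerns idea concrete, here is a minimal sketch in plain Python. It is not taken from the paper and is not a DSL; it only illustrates the underlying principle that the scientific calculation can be written once while the execution strategy is swapped independently. The names `diffuse_point`, `run_serial`, and `run_threaded`, as well as the toy one-dimensional diffusion step, are hypothetical and chosen purely for illustration.

```python
from concurrent.futures import ThreadPoolExecutor


# "Science" layer (hypothetical): what to compute at a single grid point.
# A toy 1-D diffusion update; it knows nothing about how the grid is traversed.
def diffuse_point(left, center, right, alpha=0.1):
    return center + alpha * (left - 2.0 * center + right)


# "Backend" layer, option 1: a plain serial loop over the interior points.
def run_serial(kernel, field):
    out = list(field)  # boundary values are copied unchanged
    for i in range(1, len(field) - 1):
        out[i] = kernel(field[i - 1], field[i], field[i + 1])
    return out


# "Backend" layer, option 2: the same kernel, with each interior point
# dispatched to a thread pool. Only the execution strategy differs.
def run_threaded(kernel, field, workers=4):
    if len(field) < 3:
        return list(field)  # no interior points to update

    def apply(i):
        return kernel(field[i - 1], field[i], field[i + 1])

    with ThreadPoolExecutor(max_workers=workers) as pool:
        interior = list(pool.map(apply, range(1, len(field) - 1)))
    return [field[0]] + interior + [field[-1]]


if __name__ == "__main__":
    field = [0.0, 0.0, 1.0, 0.0, 0.0]
    # The science code is identical; only the backend changes.
    print(run_serial(diffuse_point, field))
    print(run_threaded(diffuse_point, field))
```

In this toy setup, the team that maintains `diffuse_point` never has to touch the traversal code, and a new backend (for a GPU, say) could be added without editing the science. A real DSL would go further by generating the hardware-specific code from a high-level description, but the division of labor is the same in spirit.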

The authors acknowledge that their proposed way forward depends on “institutions, projects, and individuals adopting new interdependencies and ways of working.” The paper identifies many key characteristics of ideal institutions, but it particularly emphasizes the value of rewarding collaboration at all levels. As the “chasm” looms, they argue, the barriers have to drop.

About the paper

The paper discussed in this article, “Crossing the chasm: how to develop weather and climate models for next generation computers?”, was authored by Bryan N. Lawrence, Michael Rezny, Reinhard Budich, Peter Bauer, Jörg Behrens, Mick Carter, Willem Deconinck, Rupert Ford, Christopher Maynard, Steven Mullerworth, Carlos Osuna, Andrew Porter, Kim Serradell, Sophie Valcke, Nils Wedi, and Simon Wilson. It can be found as a publicly available publication of the European Geosciences Union.