Software Defined Visualization (SDVis), an open source initiative from Intel and industry collaborators, delivers breathtaking visual impact and interactivity for scientific and photorealistic data at all scales, and has been designed to support the massive data sizes expected on future exascale-capable machines.

“Our ability to generate data is increasing faster than our ability to store it,” explains Professor Hank Childs, recipient of the Department of Energy’s Early Career Award to research visualization with exascale computers and Associate Professor in the Department of Computer and Information Science at the University of Oregon.

As Dr. Childs implies, dramatically reducing or eliminating data movement will become a requirement for discovery through visual analysis, because computational capability is leaping ahead of data I/O speeds, not only to permanent storage but even across local peripheral buses like PCIe. SDVis enables applications to perform in-situ visualization, in which simulation data is visualized directly from the simulation’s output memory on the same compute nodes that run the model or simulation. In-situ visualization represents the ultimate in HPC performance and scalability because time-consuming, massive data transfers are not required.
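To put rough numbers on the data-movement argument, the following back-of-the-envelope sketch divides a data size by a link bandwidth. The data size and both bandwidth figures are assumptions chosen only for illustration; they do not come from the article or from any measurement.

```python
def transfer_seconds(data_bytes: float, bandwidth_bytes_per_s: float) -> float:
    """Time needed to move data_bytes over a link with the given sustained bandwidth."""
    if bandwidth_bytes_per_s <= 0:
        raise ValueError("bandwidth must be positive")
    return data_bytes / bandwidth_bytes_per_s


TB = 1e12  # bytes in a terabyte (decimal)
GB = 1e9   # bytes in a gigabyte (decimal)

# Hypothetical workload: one simulation time step held in node memory.
timestep_bytes = 1 * TB

# Hypothetical sustained bandwidths, assumed for illustration only.
links = {
    "local peripheral bus (assumed 16 GB/s)": 16 * GB,
    "share of permanent storage (assumed 1 GB/s)": 1 * GB,
}

for name, bandwidth in links.items():
    seconds = transfer_seconds(timestep_bytes, bandwidth)
    print(f"{name}: {seconds:.1f} s to move one time step")
```

Under these assumed figures, every time step costs roughly a minute or more just to move before any rendering starts. An in-situ renderer reads the data where the simulation left it, so this cost does not arise.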

As a result, visualizations run faster. SDVis application users can also realize huge performance gains from the efficient algorithms in the Intel SDVis libraries, which exploit both the larger memory capacity of CPUs and the massive parallelism of Intel® processors and compute clusters.

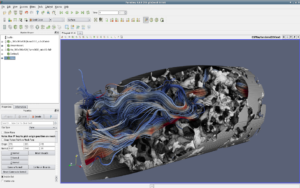

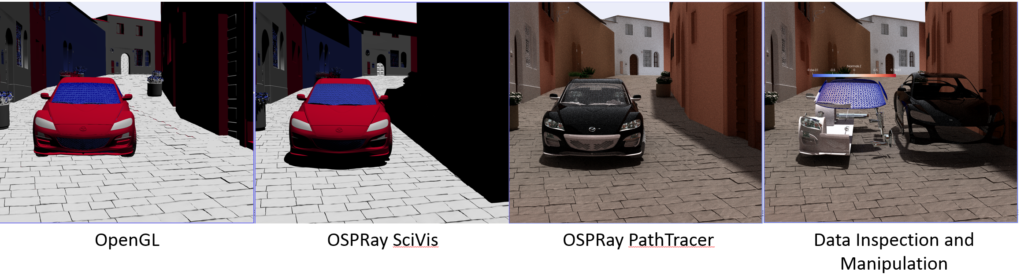

David DeMarle, visualization luminary and lead Visualization Toolkit (VTK*) engineer at Kitware, makes this concrete: “We are entering the era, based on the data size, where the scalability and constant runtime of Software Defined Visualization often wins over GPUs for visualization.” He bases this statement on Kitware’s experience integrating the open-source, high-performance parallel software rendering libraries OpenSWR, Embree, and OSPRay into VTK and the ParaView* visualization application.


“Massive data poses a problem as it simply becomes impractical from a runtime point of view to move it around or keep multiple copies,” explains Jim Jeffers (Sr. Director and Sr. PE, Visualization Solutions at Intel). “It just takes too much time and memory capacity.” This makes in-situ visualization a “must-have for exascale.”

Big is good, but big and interactive is even better!

Local and cloud-based demonstrations have shown that CPU rendering to an in-memory framebuffer, with only a display-only device at the desktop or client, is all that is required to interactively visualize even the most complex photorealistic ray-traced images. [i]

Jeffers notes that a small, local eight-node CPU cluster can deliver high-resolution, interactive frame rates for even photorealistic ray-traced images. Further, these same images can be interactively viewed on a laptop in Denver even when the rendering occurs remotely at the Texas Advanced Computing Center. Jeffers points out that scaling to 128 or more nodes enables frame rates as high as 100 fps: “The 128-node images are fully interactive with photorealistic, ray traced quality, with no discernible rendering artifacts.”

Trillions of triangles

Raster-based OpenGL codes gain the same SDVis advantages of performance, scalability, and the ability to run anywhere. Essentially, all that is needed is to change the library path so the application loads the Mesa library with OpenSWR instead of a GPU-accelerated library.
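As a minimal sketch of that library-path switch on Linux, the helper below builds an environment that puts a Mesa library directory first on `LD_LIBRARY_PATH` and sets Mesa's `GALLIUM_DRIVER` variable to `swr`. It assumes a Mesa build that includes the OpenSWR (`swr`) Gallium driver. The function names, the install path, and the application command are hypothetical and not taken from the article.

```python
import os
import subprocess
from typing import Mapping, Optional, Sequence


def openswr_env(mesa_lib_dir: str,
                base_env: Optional[Mapping[str, str]] = None) -> dict:
    """Return a copy of the environment that prefers Mesa with the swr driver.

    mesa_lib_dir is a hypothetical directory holding a Mesa libGL built
    with the OpenSWR Gallium driver.
    """
    env = dict(os.environ if base_env is None else base_env)
    existing = env.get("LD_LIBRARY_PATH", "")
    # Put the Mesa directory first so the loader finds it before any GPU library.
    env["LD_LIBRARY_PATH"] = (
        mesa_lib_dir + os.pathsep + existing if existing else mesa_lib_dir
    )
    # Ask Mesa to use the OpenSWR software rasterizer.
    env["GALLIUM_DRIVER"] = "swr"
    return env


def run_with_openswr(command: Sequence[str], mesa_lib_dir: str) -> int:
    """Launch an unmodified OpenGL application with the adjusted environment."""
    completed = subprocess.run(list(command), env=openswr_env(mesa_lib_dir))
    return completed.returncode


# Hypothetical usage (path and application are assumptions):
# run_with_openswr(["paraview"], "/opt/mesa-swr/lib")
```

The application binary itself is untouched; only the environment it starts in changes, which is why the same OpenGL code can run on nodes without a GPU.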

DeMarle explains why SDVis OpenGL is so fast: “Eliminating the need to transfer data to the GPU is the reason why OpenSWR can compete so effectively against GPU-accelerated libraries.” He also observes that “scalability is another reason to consider OpenSWR,” as “OpenGL performance does not trail off even when rendering meshes containing one trillion (10^12) triangles on the Trinity leadership-class supercomputer.”


Not everyone is using ray tracing … yet! SDVis also provides a path from OpenGL-only rendering to the creation of visually compelling, photorealistic ray-traced images using only free, production-quality open-source software like ParaView.


[i] Demonstrated at both SC’17 and the Intel® HPC Developer Conference in Denver, Colorado. SDVis performance and scalability have been confirmed by third parties such as the Beckman Institute, the University of Utah, and the University of Stuttgart.