HPC in the cloud is one of those “insanely great” ideas that, failing to catch fire, has receded before our expectations year after year. Until last year, that is: according to industry watcher Addison Snell, CEO of Intersect360 Research, 2017 was a “break-out year” for HPC in the cloud.

At Intersect360’s annual HPC market update at ISC18 in Frankfurt yesterday, Snell also stated that:

- Commercial sectors represent more than 55 percent of 2017 HPC technology revenues.

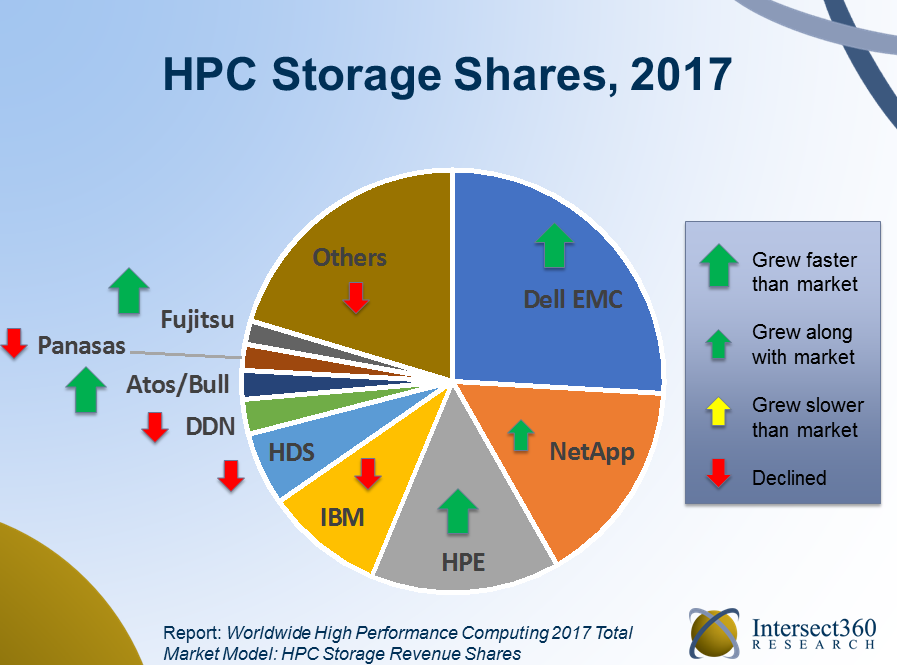

- Pure-play high performance storage had an off year as IT planners showed a preference for integrated storage within server offerings.

- As “technology disaggregation” (Snell’s phrase for the rapid proliferation of non-x86 processors and accelerators) continues apace, risk-averse buyers are increasingly concerned that today’s advanced-scale technology purchases may not fit tomorrow’s workloads. The upshot, according to Snell, is a user community yearning for vendors that deliver a diversity of technology solutions along with sound guidance on implementing them.

Total worldwide HPC market revenues (servers, storage, software, etc.) reached $35.4 billion in 2017, according to Intersect360, up 1.6 percent from 2016. Curiously, that anemic growth contradicts the generally positive indicators on the “demand side” (buyers). The reason, Snell said, is that a significant share of customer budgets went to rising power and cooling costs at commercial sites. “Facility spending was consuming a larger portion of budgets,” he said, “and not all of the demand-side budget increase made it out into the market in terms of products and services.”

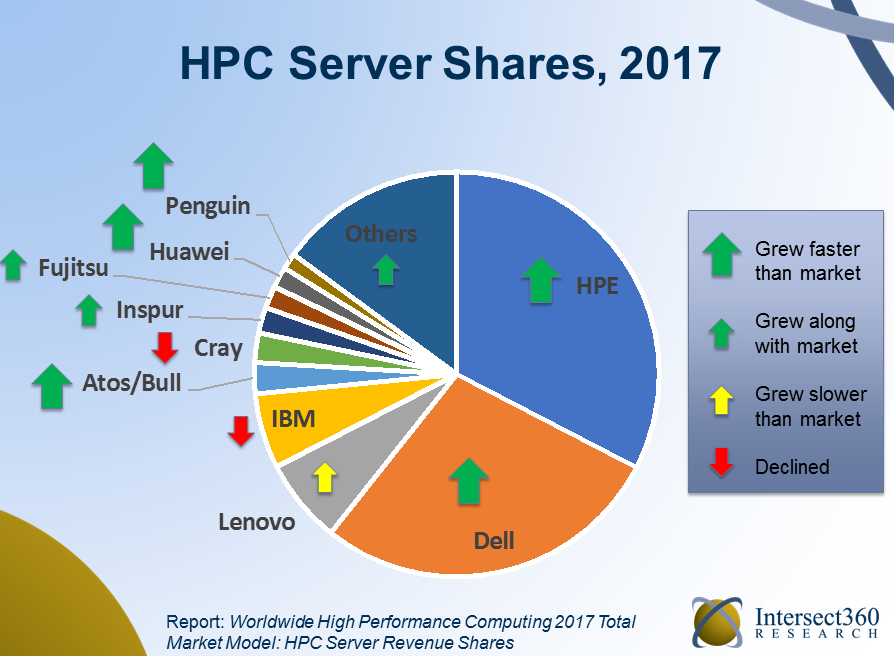

Intersect360 found HPE to be the top HPC server provider, followed closely by Dell EMC. Both companies had positive years in 2017, extending their market leadership positions. Meanwhile, Cray and IBM had down years, despite IBM having delivered the world’s first- and third-most powerful supercomputers.

“IBM fell back a little bit,” Snell said. “This has been disappointing. It’s a company having a great show at ISC here. There’s the new Top500 list with ‘Summit’ and ‘Sierra’ numbers one and three…. But in 2017 they did not have good traction with the Power9 servers, they’re going to really need to get that going and figure out how to execute in this environment.”

In a conversation after his presentation, Snell said he believes IBM emphasizes AI as the target workload for its high-end servers – at the expense of their broader HPC applicability.

“IBM has a lot of the right pieces in place to gain market share across high performance computing, with not only their own products but all their partnerships,” he said. “They have under-performed so far in their Power division against the (potential) of what they’re capable of doing. They could turn that around, but it would be a matter of strategically placing emphasis on HPC again and not just AI. They’re clearly weighting their messaging toward AI.” He noted that at a recent IBM conference, Big Blue described even Sierra and Summit, built for the U.S. national labs, as AI systems.

“There’s a tremendous potential for Summit and Sierra to have a ripple effect for selling more Power9-GPU-InfiniBand systems across the HPC landscape,” he said, “but IBM would have to have an intentional strategy to go do that, instead of just talking about AI.”

Having said that, machine learning is a growing aspect of the HPC firmament. By Intersect360’s calculation, there was roughly $4.5 billion in dedicated machine learning spending worldwide last year, “but 95 percent is coming from the hyperscale market, where you have the Googles, Facebooks and Amazons of the world.”

Of the remaining measurable spending on ML, most is happening in financial services, which Snell said is aggressively adopting AI.

“This is the vertical furthest ahead in HPC in terms of real use cases for dedicated spending for deep learning,” said Snell. “We love talking about self-driving cars and personalized medicine. But I promise you’re going to have personalized interest rates long before you have personalized medicine. That is where the money is, and it’s the areas that have money in them, like finance, advertising, and retail, and hyperscale, that are driving a lot more of the immediate investment in machine learning.”

The hot growth segment of the industry in 2017 was cloud HPC. Though starting from a relatively small base, HPC in the cloud grew by 44 percent, to $1.1 billion, according to Intersect360, exceeding the billion-dollar mark for the first time.

“HPC cloud computing really had a break-out year,” Snell said, “which we’ve been waiting for.” Intersect360 expects cloud HPC to continue its hot pace, reaching $2 billion next year and $3 billion in 2022.

This growth stems from two factors, Snell said. The first is machine learning and the migration of machine learning applications to the cloud. “The ML types of applications have a great deal of cloud affinity, and have their roots in the hyperscale segment, so it’s natural to migrate those.”

The second, and more important, factor is the maturation of licensing models, particularly for ISV codes in the high performance computing space. “It got easier in 2017 to migrate your Abaqus license to the cloud, or Fluent, and that caused a lot of the spike for the higher value components, like SaaS.”

Meanwhile, high performance storage revenues were weak relative to server spending, Snell said, “and that’s because people were buying more computational, link-heavy configurations, mostly with GPUs, predominantly because of the influx of machine learning.” In other words, with GPUs commanding high prices, less money was left in budgets for storage.

Intersect360 also reported that high performance storage vendors, such as DDN and Panasas, had off years in 2017.

“More of the storage revenues flowed away from dedicated storage companies and toward server companies that were also selling storage,” he said. “That goes along with that theme that people were buying things that are more integrated.”

Looking ahead, Intersect360 forecasts renewed growth for the HPC industry.

“In the forecast, we see a return to steady growth, primarily because the budget outlooks have improved across all three sectors (industry, government, academia),” Snell said, “particularly government… it has the best forward-looking outlook it’s had in the last five years. That’s been flat, and it’s finally starting to reengage again. So that’s going to unlock some of the growth that we see here.”

But for all the optimism about the industry’s future, problems remain. Snell cited “technology disaggregation,” the emergence of non-x86 processors and accelerators such as GPUs, FPGAs, Arm and others.

“The big challenge is on the one hand end users are facing (a) diversifying set of workloads,” he said. “I’ve got all my traditional HPC stuff, and I’ve got analytics and now I’ve also got ML. On the other hand, I’ve got a diversifying set of technologies, where I’ve got to choose what processor type, what interconnect type, how many tiers of storage, and so forth. And the user is really afraid of getting burnt. I’m going to go buy a bunch of stuff in this arch, and then in four years that’s not going to be what I want for this workload.”

The problem, he said, is “there are precious few technology vendors addressing this head on. Everyone’s selling their own solutions.”

But a vendor that not only has a spectrum of available solutions but also the ability to guide customers on the best use of those solutions to address the customer’s workload portfolio “would have a major competitive advantage in this space. That’s what we’re waiting for – either from a major system vendor or from a major cloud vendor that can offer different types of instances.”