Reducing the “Time to Science”

The phrase “time is money” is not limited to the world of business. Getting to results faster is also an urgent requirement in the sciences. Science costs money, after all. This was the issue confronting Dr. Lance Wilson, the Characterisation Virtual Laboratory (CVL) Coordinator and Senior HPC Consultant at Australia’s Monash University.

“Researchers have come to expect ‘bigger, better and faster,’” said Dr. Wilson. In Australia, a “category one peak grant” can award up to $1 million to scientists, who need the stack as soon as they receive the funds. However, award winners do not always give the HPC team advance notice, primarily because of the highly competitive nature of grants. Nevertheless, they need to begin their compute work right away, so the Monash Research department may have to scramble to supply capabilities for huge projects. Dr. Wilson has answered this challenge by supporting his colleagues with a curated range of open source tools and services for data analysis in the cloud, including those built using OpenStack. He prioritizes requests and ongoing mandates for computing so he can “reduce the time to science.”

“We must improvise and provide them with what they need, balanced against the very short lead times,” he said. As he explained, “Effective research computing is all about partnerships between experts in their research fields and experts in the research computing space. We make it work and OpenStack is essential to delivery.”

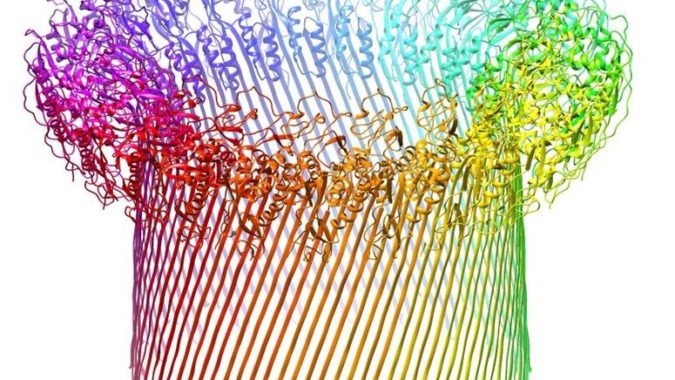

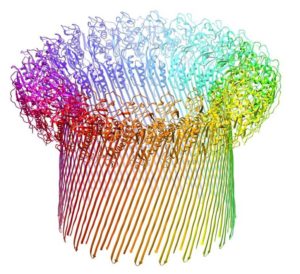

Compared with commercial clouds, the Monash on-premises cloud (built with Dell EMC hardware) costs about 50% less, mainly due to the scale of operation and high utilisation of resources. It is also much faster. Dr. Wilson was tasked with supporting cryo-electron microscopy, which produces a monumental 2 to 4 terabytes of data each day. “Using the stack, it took researchers four months to get through the collected data. It may have taken years if the cloud were not available,” he noted. Figure 1 shows an example of the 3D output that can be produced by the virtual laboratory solution running on Dell EMC systems with OpenStack.

His use of OpenStack spares scientists from having to develop an additional skillset. “The benefit of working with Dell EMC leads to achieving more with available resources,” Dr. Wilson said. “When the research computing works to the researchers’ needs, they perform better across productivity and increase their quality of work.”

Matching HPC to Research Workloads

V.K. Cody Bumgardner, Director of Pathology Informatics and Assistant Professor of Pathology and Laboratory Medicine Computer Science at the University of Kentucky (UKY) in Lexington, offers another perspective on the benefits of OpenStack for HPC in a research environment. Bumgardner, previously Director of Research Computing, works in the medical field, using HPC for genomics and biomedical informatics. Specifically, his group uses virtual HPC for cancer diagnostic panels.

As Bumgardner sees it, until recently the HPC landscape was dominated by computational physics, chemistry, and other scientific disciplines, where fundamental research was accomplished through model simulation. The first three decades of HPC primarily made use of shared monolithic and symmetric multiprocessing (SMP) systems. “In the late nineties we shifted to commodity-based clusters using high-speed interconnects and software to tie together system components,” Bumgardner said. “By 2010, many of the traditional HPC users had shifted their workloads from general purpose processors to GPUs, where in many cases, there were orders of magnitude performance gains.” On that front, the OpenStack Queens release includes significant enhancements for managing accelerators, including Intel FPGAs, which are increasingly being added to the compute nodes in OpenStack clusters.

Bumgardner’s group was under pressure from its user community to refresh its traditional HPC cluster as usual. As he put it, “We knew that general purpose processing performance was not increasing like it had in the past and our workloads had also changed. Over the course of a 19-month study[1] we collected over 30 billion time-series metrics in relation to over 200 thousand scheduled jobs. The goal of our analysis was to characterize existing workloads as they relate to traditional HPC environments and identify workloads that would be served better by an alternative architecture.”

The study found that bioinformatic research was a major consumer of HPC resources, but the workloads were not well suited to the environment. Many users’ workloads didn’t require an entire bare-metal node, while other users’ workloads required a larger node than is typically provided. “What we found was that 91% of our jobs ran on a single node, which accounted for 63% of the cluster runtime,” said Bumgardner. “These single-node jobs didn’t take advantage of our low-latency interconnect, and many didn’t require a traditional bare-metal HPC environment. In addition, 75% of jobs ran in a ‘long’ queue, which means we had many single-node jobs running for long durations, which is the opposite of what you would want to see in traditional HPC.”
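The characterization Bumgardner describes comes down to a few ratios computed over scheduler job records. The sketch below computes them for a toy workload, assuming a hypothetical `Job` record holding node count, runtime in hours, and queue name. It is an illustration only, not the tooling used in the study[1], which worked from time-series resource metrics.

```python
from dataclasses import dataclass


@dataclass
class Job:
    """One scheduled job (hypothetical record format)."""
    nodes: int        # number of nodes allocated to the job
    runtime_h: float  # wall-clock runtime in hours
    queue: str        # scheduler queue name, e.g. "short" or "long"


def summarize(jobs):
    """Return the share of single-node jobs, their share of total runtime,
    and the share of jobs submitted to the 'long' queue."""
    if not jobs:
        raise ValueError("no jobs to summarize")
    total_jobs = len(jobs)
    total_runtime = sum(j.runtime_h for j in jobs)
    single = [j for j in jobs if j.nodes == 1]
    return {
        "single_node_job_share": len(single) / total_jobs,
        "single_node_runtime_share": sum(j.runtime_h for j in single) / total_runtime,
        "long_queue_share": sum(1 for j in jobs if j.queue == "long") / total_jobs,
    }


if __name__ == "__main__":
    # Toy data only; these numbers are not from the UKY study.
    jobs = [
        Job(nodes=1, runtime_h=10.0, queue="long"),
        Job(nodes=1, runtime_h=20.0, queue="long"),
        Job(nodes=1, runtime_h=10.0, queue="long"),
        Job(nodes=8, runtime_h=40.0, queue="short"),
    ]
    print(summarize(jobs))
    # {'single_node_job_share': 0.75, 'single_node_runtime_share': 0.5, 'long_queue_share': 0.75}
```

With real accounting data in place of the toy list, a high single-node job share combined with a large long-queue share is the pattern that suggested those jobs would be better served by something other than a bare-metal cluster.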

UKY received a $2.2 million grant[2] to build the Kentucky Research Informatics Cloud (KyRIC), which makes use of OpenStack and Ceph[3] components. The team had been using OpenStack for enterprise use cases such as VM provisioning; now they apply it to scientific workloads, where it has worked well and proved flexible. Storage was another concern: over 80% of the data on the HPC clustered file system had not been accessed in over a year. Using Ceph, a multi-protocol distributed storage system, they were able to provide shared filesystem, object, and block storage from the same platform, allowing data to be stored and accessed based on workload demands. They have created the equivalent of internal storage clouds using OpenStack, Ceph, and Dell EMC servers and storage.
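A figure like “over 80% of the data had not been accessed in over a year” can be estimated by comparing each file’s last access time with a cutoff. The sketch below is a minimal illustration, assuming a POSIX filesystem where `st_atime` is maintained; on filesystems mounted with `noatime` access times are not updated and this heuristic does not apply. The function name `cold_data_fraction` is hypothetical, and this is not how UKY measured its data.

```python
import os
import stat
import sys
import time

SECONDS_PER_YEAR = 365 * 24 * 3600


def cold_data_fraction(root, now=None, age_s=SECONDS_PER_YEAR):
    """Return the fraction of bytes in regular files under `root` whose
    last access time (st_atime) is more than `age_s` seconds before `now`."""
    now = time.time() if now is None else now
    total = cold = 0
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            try:
                st = os.lstat(os.path.join(dirpath, name))
            except OSError:
                continue  # file vanished or is unreadable; skip it
            if not stat.S_ISREG(st.st_mode):
                continue  # ignore symlinks, sockets, device files, etc.
            total += st.st_size
            if now - st.st_atime > age_s:
                cold += st.st_size
    return cold / total if total else 0.0


if __name__ == "__main__":
    root = sys.argv[1] if len(sys.argv) > 1 else "."
    print(f"{cold_data_fraction(root):.1%} of bytes not accessed in over a year")
```

Weighting by bytes rather than file count matches the question that matters for storage planning: how much capacity is holding data that could live on a cheaper, object-backed tier.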

To learn more about running HPC on OpenStack, visit:

OpenStack for Research Computing – A University of Cambridge Perspective, http://technodocbox.com/Data_Centers/72202840-Openstack-for-research-computing-a-university-of-cambridge-perspective.html

The Crossroads of Cloud and HPC: OpenStack for Scientific Research, https://www.openstack.org/assets/marketing/OpenStackandHPCforscientificresearch-printformat.pdf

University of Kentucky: HPC tailored to research needs with OpenStack cloud, https://www.emc.com/collateral/customer-profiles/university-of-kentucky-hpc-openstack-case-study.pdf

[1] Bumgardner, VK Cody, Victor W. Marek, and Ray L. Hyatt. “Collating time-series resource data for system-wide job profiling.” Network Operations and Management Symposium (NOMS), 2016 IEEE/IFIP. IEEE, 2016.

[2] National Science Foundation, Award No. 1626364, https://nsf.gov/awardsearch/showAward?AWD_ID=1626364&HistoricalAwards=false