Introducing the Niagara supercomputer

The need to analyze massive amounts of data and solve complex computational problems drives the increasing use of high-performance computing (HPC) systems and is leading to innovation in networking technology. Academics and scientists across Canada process their data on supercomputers at SciNet, Canada’s largest supercomputer center, located at the University of Toronto. Researchers run big data workloads in areas such as artificial intelligence, biomedical sciences, aerospace engineering, astrophysics and climate science that require major data processing time, up to thousands of hours.

SciNet recently installed a new high-performance computing system named Niagara, shown in Figure 1, that is now Canada’s fastest supercomputer. Niagara uses the latest innovations developed by an ecosystem of partners to deliver high performance at a competitive cost. Lenovo, Mellanox and Excelero partnered to build the system, which is based on the world’s first implementation of the DragonFly+ architecture.

According to Scot Schultz, Sr. Director, HPC / Artificial Intelligence and Technical Computing – Mellanox Technologies, “Niagara runs on a Lenovo designed supercomputer using the latest Mellanox EDR InfiniBand technology, DragonFly+ network topology and InfiniBand-based burst buffer leveraging Mellanox interconnect accelerations and Excelero’s NVMesh solution. Niagara was designed using multiple technology innovations and networking accelerations to deliver state-of-the-art performance to tackle the most compute intense processing tasks.”

Lenovo—powering many of the world’s fastest supercomputers

Lenovo is now the global leader in HPC systems, with the most supercomputers on the Top500 list. The Niagara supercomputer is a Lenovo ThinkSystem SD530 system built with input from SciNet, the University of Toronto, Compute Ontario and Compute Canada. The system contains 1,500 dense Lenovo ThinkSystem SD530 high-performance compute nodes and uses Lenovo Scalable Infrastructure (LeSI). Each node contains two 20-core Intel® Xeon® Gold 6148 (2.4 GHz) CPUs, and the system fits into 21 racks along with Lenovo DSS-G high-performance storage. Currently, Niagara ranks among the top 10 percent of the fastest publicly benchmarked computers in the world, with a peak theoretical speed of 4.61 petaflops and a Linpack Rmax of 3 petaflops.
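The peak theoretical figure can be reproduced with simple arithmetic. The sketch below assumes two CPUs per node and 32 double-precision floating-point operations per core per cycle (AVX-512 with two fused multiply-add units); that per-cycle value is an assumption not stated in this article, and the function name is illustrative.

```python
# Back-of-the-envelope peak performance estimate for Niagara.
NODES = 1500            # compute nodes (from the article)
CPUS_PER_NODE = 2       # assumed: two Xeon Gold 6148 CPUs in each node
CORES_PER_CPU = 20      # from the article
CLOCK_HZ = 2.4e9        # nominal clock, 2.4 GHz (from the article)
FLOPS_PER_CYCLE = 32    # assumed: AVX-512, two FMA units, double precision


def peak_petaflops(nodes, cpus_per_node, cores_per_cpu, clock_hz, flops_per_cycle):
    """Return the theoretical peak in petaflops (1e15 FLOP/s)."""
    total_cores = nodes * cpus_per_node * cores_per_cpu
    return total_cores * clock_hz * flops_per_cycle / 1e15


peak = peak_petaflops(NODES, CPUS_PER_NODE, CORES_PER_CPU, CLOCK_HZ, FLOPS_PER_CYCLE)
linpack_rmax = 3.0  # petaflops, from the article
efficiency = linpack_rmax / peak

print(f"Peak: {peak:.2f} PF, Linpack efficiency: {efficiency:.0%}")
```

Under these assumptions the estimate comes to about 4.61 petaflops, which matches the quoted peak, and the Linpack result corresponds to roughly 65 percent of that peak.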

DragonFly+ networking topology enables high levels of compute performance

Current research and big data processing drive the need for higher data throughput to enable detailed analysis, and network topology has a significant impact on distributed application performance. In the most common networking scenario, servers are connected in a fat-tree topology. While this topology maximizes throughput for a variety of communication patterns, it is relatively costly because of the large number of routers and links it requires.

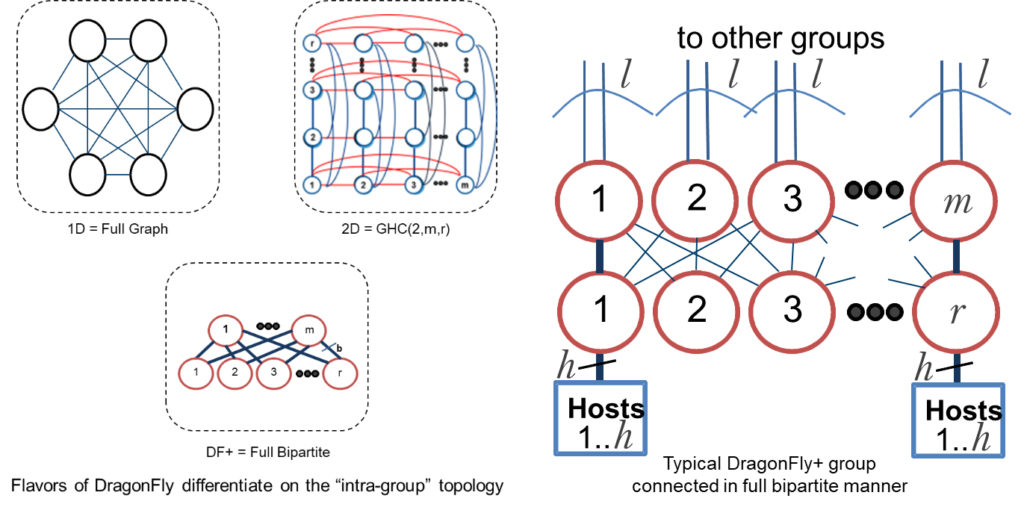

The Dragonfly topology was originally introduced as an alternative that performs well across various traffic patterns while reducing both cost and the number of long links compared to other topologies. Mellanox introduced DragonFly+, a cost-effective, performance-oriented topology that allows a larger number of hosts to be connected to the network. DragonFly+ connects groups of compute nodes in an all-to-all way, where each group has at least one direct link to each other group, as shown in Figure 2.
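The all-to-all group connectivity can be illustrated with a small sketch. It assumes one direct link per pair of groups, and the group count of 5 is hypothetical, chosen only for illustration; it is not Niagara's actual configuration.

```python
from itertools import combinations


def global_links(num_groups, links_per_pair=1):
    """List inter-group links when every pair of groups is directly connected."""
    return [
        (a, b)
        for a, b in combinations(range(num_groups), 2)
        for _ in range(links_per_pair)
    ]


def neighbors(group, links):
    """Return the set of groups directly linked to the given group."""
    return {b if a == group else a for a, b in links if group in (a, b)}


NUM_GROUPS = 5  # hypothetical group count
links = global_links(NUM_GROUPS)

print(f"{NUM_GROUPS} groups need {len(links)} inter-group links")
for g in range(NUM_GROUPS):
    # Every group reaches each of the other groups over one direct link.
    print(f"group {g} -> {sorted(neighbors(g, links))}")
```

With g groups, this pattern needs g(g-1)/2 inter-group links at minimum, so any group can reach any other group across a single long link.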

DragonFly+ also implements Fully Progressive Adaptive Routing (FPAR), in which routing decisions are re-evaluated at every switch along a packet’s path. In addition, it implements Adaptive Routing Notification (ARN), in which routers send each other messages about distant congestion so that it can be resolved before it becomes an issue.
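The idea behind per-switch adaptive routing can be sketched as a toy port-selection function. This is not Mellanox's implementation: the queue-depth metric, the port names, and the way a remote congestion notice is represented are all assumptions made for illustration.

```python
def choose_port(queue_depth, candidates, notified_congested=frozenset()):
    """Pick an output port for a packet at one switch.

    queue_depth: dict mapping port name -> packets currently queued (local view).
    candidates: ports that can reach the packet's destination.
    notified_congested: ports whose downstream path was reported congested by a
        (hypothetical) notification from a remote switch.
    """
    # Prefer ports not flagged by a remote notice; fall back to all candidates
    # if every port has been flagged.
    usable = [p for p in candidates if p not in notified_congested] or list(candidates)
    # Choose the least-loaded port; the port name breaks ties deterministically.
    return min(usable, key=lambda p: (queue_depth[p], p))


# Hypothetical local state at one switch.
depths = {"direct": 12, "via_group_2": 3, "via_group_3": 5}
ports = list(depths)

first_choice = choose_port(depths, ports)
after_notice = choose_port(depths, ports, notified_congested={"via_group_2"})
print(first_choice, after_notice)
```

Because the decision is repeated at every switch, a packet can be steered around congestion that develops after it has left its source, and a remote notice lets a switch avoid a path whose local queue still looks short.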

DragonFly+ uses an open-standard interconnect to provide low-latency, high-bandwidth communication, which improves expandability and reduces cost. DragonFly+ also allows the cluster to be extended without reserving ports in advance (reserving ports on standard DragonFly reduces the available bandwidth). In a more traditional fat-tree topology, expanding the cluster almost always requires significant re-cabling, and existing cables are often cut and discarded while new ones are installed. According to Schultz, “The DragonFly+ system installed in the Niagara system has a reduced number of switches and provides a better value between performance and cost, and allows for flexible growth of the system.”

Excelero NVMesh burst buffers achieve unprecedented bandwidth

HPC systems use checkpointing and checkpoint restart during processing so that compute jobs can recover from interruptions instead of starting over. While a system is writing a checkpoint, it is not computing, which slows overall performance. Niagara combines Excelero’s NVMesh burst buffer with Mellanox’s world-leading, end-to-end InfiniBand networking solution to reduce this overhead.

A burst buffer is a fast intermediate storage layer between the non-persistent memory of the compute nodes and persistent storage (the parallel file system). Niagara uses its built-in low-latency network fabric, and the solution adds commodity flash drives and NVMesh software to compute nodes. NVMesh provides redundancy without impacting the CPUs of the target nodes, which enables standard servers to also act as file servers. This lets Niagara use the full performance capacity and processing power of the underlying servers and storage.
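A rough model shows why a faster intermediate layer matters for checkpointing. All numbers below (checkpoint size, bandwidths, checkpoint interval) are hypothetical and are not Niagara measurements; the model also assumes computation stalls completely while a checkpoint is written.

```python
def checkpoint_seconds(checkpoint_bytes, bandwidth_bytes_per_s):
    """Time to write one checkpoint at a given sustained bandwidth."""
    return checkpoint_bytes / bandwidth_bytes_per_s


def compute_fraction(interval_s, stall_s):
    """Share of wall-clock time spent computing when each compute interval
    is followed by a stall of stall_s seconds for the checkpoint write."""
    return interval_s / (interval_s + stall_s)


TB = 1e12
GB = 1e9

checkpoint_size = 100 * TB   # hypothetical checkpoint size
interval = 3600.0            # hypothetical: one checkpoint per hour of compute
pfs_bandwidth = 50 * GB      # hypothetical parallel file system bandwidth
burst_bandwidth = 200 * GB   # hypothetical burst buffer bandwidth

pfs_stall = checkpoint_seconds(checkpoint_size, pfs_bandwidth)
burst_stall = checkpoint_seconds(checkpoint_size, burst_bandwidth)

print(f"Direct to parallel file system: {compute_fraction(interval, pfs_stall):.1%} computing")
print(f"Through burst buffer:           {compute_fraction(interval, burst_stall):.1%} computing")
```

In this model the burst buffer absorbs the checkpoint quickly so the job can resume sooner, and the data can then be drained to the parallel file system in the background.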

“In supercomputing any unavailability wastes time, reduces the availability score of the system and impedes the progress of scientific exploration. In working with partners at Lenovo and Mellanox, we were able to provide SciNet and its researchers with important storage functionality that achieves the highest performance available in the industry at a significantly reduced price – while assuring vital scientific research can progress swiftly,” said Lior Gal, CEO and co-founder at Excelero.

Summary

Niagara, Canada’s fastest supercomputer, is located at the SciNet computing center and uses innovative networking technology from a partnership of Lenovo, Mellanox and Excelero. Niagara is a Lenovo ThinkSystem SD530 system and currently ranks among the fastest supercomputers in the world. It uses the world’s first implementation of the Mellanox DragonFly+ networking architecture, which provides hyperscale processing capabilities while reducing the need to buy additional hardware; the solution uses industry-standard hardware together with commercial and open source software. Excelero’s NVMesh burst buffers allow Niagara to use the full performance capacity and processing power of the underlying servers and storage. The result is high bandwidth and fast performance at a low cost.

Resources

The SciNet Niagara system is already being used in research, as described in “SciNet Launches Niagara, Canada’s Fastest Supercomputer,” Tabor Communications, March 5, 2018.

https://www.hpcwire.com/2018/03/05/scinet-launches-niagara-canadas-fastest-supercomputer/