Much attention has been paid to the sheer quantity of water consumed by supercomputers’ cooling towers, and rightly so: they can require thousands of gallons per minute. But in the background, another factor can quietly bottleneck efficiency and raise costs: water quality.

Water quality is the obstacle facing Los Alamos National Laboratory’s (LANL) Strategic Computing Complex (SCC), a supercomputing facility that models and simulates nuclear weapons in support of the National Nuclear Security Administration’s (NNSA) Advanced Simulation and Computing (ASC) program. Currently, the SCC needs around 45 million gallons a year (MGY) of water for cooling its Trinity supercomputer, a Cray system benchmarked at 14.137 Linpack petaflops.

There is, of course, a slew of water quality standards governing the water discharged from the facility. Furthermore, beyond certain contamination levels, the cooling water can damage system components through corrosion or “scaling”, and the water at Los Alamos is very high in silica. To remove dissolved solids and combat these effects, the SCC’s cooling towers use wastewater treated by LANL’s Sanitary Effluent Recovery Facility (SERF).

SERF’s up (but too often, it’s down)

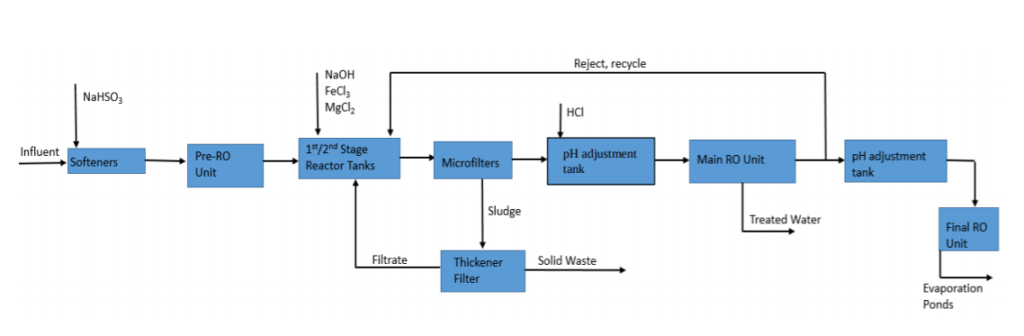

To remove impurities from the water, SERF combines chemical coagulation (introducing chemicals that bond to contaminants and settle them out as sludge at the bottom of the tank) with reverse osmosis (passing the water through a membrane that separates it into a stream of clean water and a stream of concentrated wastewater).

However, SERF is deeply flawed. In a water treatment feasibility study recently published by LANL, author William Ira Bennett wrote: “The original designers of SERF didn’t do their due diligence […] and check their calculations before construction.” He enumerated a series of design flaws in the final report:

- In the chemical coagulation tank, the chemicals are released at the bottom rather than the top, decreasing the speed and efficiency of the reaction, and leaving it to continue in the reverse osmosis tank, which harms the membranes.

- The sludge formed by the chemical coagulation is fragile, and the mixing blades in the tank destroy it, leaving much of the material in the solution as it progresses to reverse osmosis.

- When SERF recycles its wastewater, it feeds it back into the reverse osmosis tank, bypassing the chemical coagulation stage entirely.

- Finally, the system was designed with no redundancies, so any malfunction at SERF forces the SCC to cool its facilities with costly and limited potable water.

Such malfunctions are a real problem. SERF has been operating ever closer to its full capacity due to increased demand, necessitating constant replacements and repairs. When new parts need to be ordered, SERF can be shut down for 6–10 weeks while the parts ship from Berlin. All of this comes on top of SERF’s staggering cost: three million dollars a year to operate, with over half of that spent solely on the chemicals used in the inefficient coagulation process.

SERF is currently being modified to address many of these concerns. The modifications eliminate the recycled stream and change the injection point of the chemicals, decreasing the quantity of chemicals necessary and substantially cutting annual costs.

However, the modifications aren’t the end of the story for one simple reason: SERF won’t be the SCC’s only filtration facility.

Cooling LANL’s future supercomputer infrastructure

The SCC’s future supercomputer infrastructure will require about 80 MW of peak power and cooling, along with approximately 125 MGY of water, up from the 45 MGY demanded by its current infrastructure. With SERF approaching its capacity limit, LANL will need to construct a new filtration system. Bennett asked: even with its upcoming modifications, is SERF really the ideal model for supplying this extra filtration capacity?

In short? No.

Bennett evaluated alternatives based on different technologies, conducting a cost-benefit analysis of each. He considered removing the pre-treatment system entirely and relying on reverse osmosis alone, but concluded that the resulting, substantially larger waste stream would be impractical.

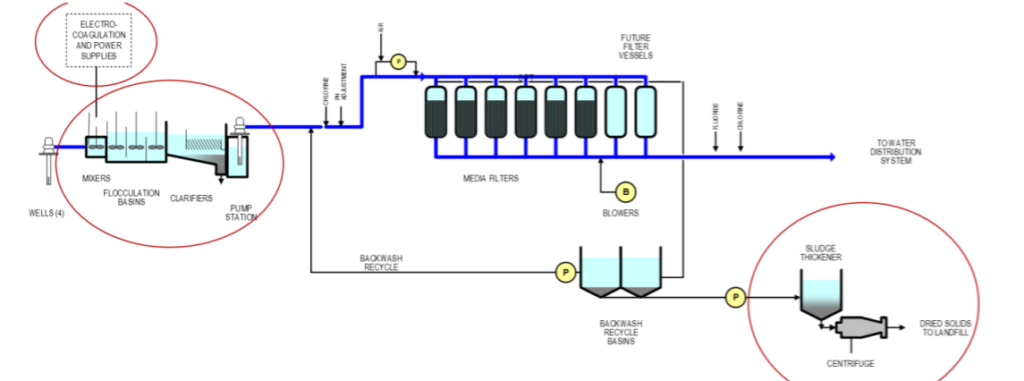

Ultimately, Bennett recommended a modified version of SERF that uses electrocoagulation in place of chemical coagulation. In electrocoagulation, an electric current applied through metal electrodes, rather than added chemicals, destabilizes the contaminant particles and causes them to precipitate out. He argued that by eliminating the need for chemicals (far and away SERF’s highest cost), the new system would operate at much greater cost efficiency than even the modified SERF design, saving an estimated $800,000 or so per year.

Keeping cool and carrying on

Bennett’s LANL report isn’t the end of the conversation: follow-up studies with electrocoagulation vendors are required, as is benchtop experimentation at LANL itself. SERF’s operators are also looking at other options, including eliminating the chemical coagulation stage by adding another reverse osmosis unit and introducing ion exchange (which uses a filtering resin to replace contaminants with an acceptable constituent).

LANL’s work to improve the efficiency of the SCC’s cooling infrastructure as it moves toward expansion highlights the importance of treating water as an integral consideration in system design. Seemingly ancillary design considerations, in this case water filtration, can have significant impacts on a system’s efficiency in terms of time, energy, effort, and money.

About the report

The report discussed in this article, “Water Quality for High Performance Computing,” was authored by William Ira Bennett and published by Los Alamos National Laboratory.