AWS, a macrocosm of the emerging high-performance technology landscape, wants to be everywhere you want to be and offer everything you want to use (or at least try out). As such, the cloud computing services giant has furthered the spirit of technology disaggregation, in this case processor diversity, with new Amazon Elastic Compute Cloud (EC2) A1 instances powered by AWS Graviton processors based on the Arm architecture, a first for the cloud industry. The Graviton processors feature 16 64-bit Arm Neoverse cores and custom silicon designed by AWS’s chip development group, Annapurna Labs.

With the new instances, AWS promises cost reductions of up to 45 percent for scale-out jobs in which the load is shared across a group of smaller instances, such as containerized microservices, web servers, development environments and caching fleets.

Monday’s announcement at AWS’s annual re:Invent conference in Las Vegas adds to AWS instances based on GPUs, FPGAs and, more recently, AMD Epyc CPUs, also a cloud first.

In addition, AWS announced 100 Gbps networking capability in new P3dn and C5n instances for machine learning and for high-performance computing applications, such as computational fluid dynamics, weather simulation, video encoding and other scale-out, distributed workloads.

The Arm architecture, of course, has been on the cusp of broader adoption seemingly for years, and at least one industry analyst said Graviton A1 instances — powered by the Arm Neoverse-based CPU — could be a significant step forward in that process.

“I see this announcement as a big breakthrough for general purpose Arm processor use in the datacenter server,” said Patrick Moorhead, president/principal analyst, Moor Insights & Strategy. “Many buyers consider AWS the gold standard for identification of the right compute options and I believe we could see more Arm at Azure and other cloud players.”

AWS said emerging scale-out workloads, such as containerized microservices and web tier applications that do not rely on the x86 instruction set, can gain cost and performance advantages from smaller 64-bit Arm processors that share an application’s computational load. Amazon said the A1 instances will deliver up to a 45 percent cost reduction (compared to other Amazon EC2 general purpose instances) for scale-out workloads. A1 instances are supported by several Linux distributions, including Amazon Linux, Red Hat and Ubuntu, as well as container services, including Amazon Elastic Container Service (ECS) and Amazon Elastic Container Service for Kubernetes (EKS).
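For teams weighing whether a workload is tied to x86, a first practical step is knowing which architecture a given host or container actually reports. The following is a minimal Python sketch, not anything AWS published: the `classify_arch` helper and the name sets are hypothetical, and it relies only on the standard library's `platform.machine()`, which typically returns `aarch64` on 64-bit Arm Linux and `x86_64` on Intel and AMD Linux hosts.

```python
import platform

# Hypothetical name sets: common values reported by platform.machine().
ARM64_NAMES = {"aarch64", "arm64"}
X86_64_NAMES = {"x86_64", "amd64"}


def classify_arch(machine: str) -> str:
    """Map a raw machine string to 'arm64', 'x86_64' or 'other'."""
    name = machine.lower()
    if name in ARM64_NAMES:
        return "arm64"
    if name in X86_64_NAMES:
        return "x86_64"
    return "other"


if __name__ == "__main__":
    # Report the architecture of the host this script runs on.
    print(classify_arch(platform.machine()))
```

A deployment script could use a check like this to select an architecture-specific package or container image, assuming builds for both architectures exist.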

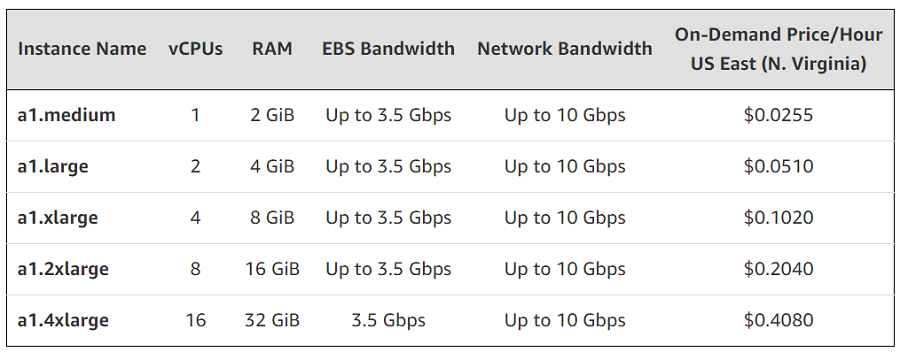

Amazon EC2 A1 instances are available in the US East (N. Virginia), US East (Ohio), US West (Oregon), and Europe (Ireland) regions. The five sizes provide between one and 16 vCPUs, which can be purchased as On-Demand, Reserved or Spot Instances.

On the networking front, AWS said its new C5n instances and GPU-powered P3dn instances are the first to deliver 100 Gbps networking performance “in a secure, scalable, and elastic manner so that customers can use it not just for HPC, but also for analytics, machine learning, big data, and data lake workloads with standard drivers and protocols.”

With P3dn instances, “customers can lower their training times to less than an hour by distributing their machine learning workload across multiple GPU instances,” AWS said, adding that the instances deliver a 4X increase in network throughput compared to existing P3 instances. P3dn instances include custom Intel CPUs with 96 vCPUs and support for AVX-512 instructions, and Nvidia Tesla V100 GPUs each with 32 GB of memory.

“In August 2018, we were able to train Imagenet to 93 percent accuracy in just 18 minutes, using 16 P3 instances for only around $40,” said Jeremy Howard, founding researcher, fast.ai, a deep learning organization.

The new C5n instances offer 100 Gbps of network bandwidth, providing four times the throughput of C5 instances, enabling “previously network bound applications to scale up or scale out effectively on AWS,” the company said. Customers can also use the higher network performance to accelerate data transfer to and from Amazon S3 storage, reducing data ingestion time, according to the company.
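To put the bandwidth figures in rough perspective, the sketch below estimates ideal transfer times at the two rates. It is a back-of-the-envelope illustration only: the 25 Gbps figure is simply 100 Gbps divided by the stated factor of four, the 1 TB dataset size is a hypothetical value, and the calculation ignores protocol overhead, storage limits and any other real-world bottleneck.

```python
def transfer_seconds(size_gigabytes: float, link_gbps: float) -> float:
    """Ideal time in seconds to move size_gigabytes over a link_gbps link."""
    size_gigabits = size_gigabytes * 8  # 1 byte = 8 bits
    return size_gigabits / link_gbps


C5N_GBPS = 100.0         # figure quoted in the announcement
C5_GBPS = C5N_GBPS / 4   # implied by "four times the throughput"
DATASET_GB = 1000.0      # hypothetical 1 TB (decimal) dataset

if __name__ == "__main__":
    for name, gbps in (("C5", C5_GBPS), ("C5n", C5N_GBPS)):
        seconds = transfer_seconds(DATASET_GB, gbps)
        print(f"{name}: {seconds:.0f} s at {gbps:.0f} Gbps")
```

Under these idealized assumptions, the hypothetical 1 TB transfer drops from 320 seconds to 80 seconds; actual results would depend on the workload and on the storage service at the other end.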

AWS also announced Global Accelerator, designed to improve availability and performance of globally distributed applications by making it simpler to direct internet traffic from users to application endpoints running in multiple AWS regions. It uses AWS’s global network backbone and edge locations, directing clients to the right endpoint based on geographic location, application health and customer-configurable routing policies.

And AWS announced Elastic Fabric Adapter (EFA) for migrating HPC workloads to AWS to augment fixed-size, on-premises HPC systems. AWS said EFA enhances inter-instance communications critical for scaling HPC applications, providing elasticity and scalability. EFA is integrated with the Message Passing Interface (MPI), which allows HPC applications to scale to tens of thousands of CPU cores without modifications.

In other announcements, AWS launched Marketplace for Containers, designed to ease deployment of containers on Amazon ECS and EKS, which includes six new AI containers from Nvidia (for a listing, see the Nvidia blog).