Researchers at General Atomics (GA) have achieved a major improvement in processing speed for an important plasma physics code by working with specialists from Nvidia to optimize it for operation on the latest GPU-based supercomputers.

This three-fold increase in processing speed for the latest CGYRO code, used to simulate turbulent behavior of confined plasmas, was made possible by acquiring hardware similar to that used in the Summit supercomputer now being developed at Oak Ridge National Laboratory (ORNL). Working on the system allowed GA researchers to test and validate their approach before deployment, a strategy that could prove valuable for researchers in a variety of fields preparing for work on the next wave of supercomputing.

Physicists tend to be heavy users of Department of Energy (DOE) supercomputer resources. Those working on nuclear fusion rely heavily on modeling to predict plasma conditions inside a fusion reactor. An approach known as gyrokinetics has been used for nearly two decades to investigate turbulence in fusion plasmas because of its ability to model behavior of the ions and electrons in the plasma.

However, the extreme complexity of plasmas – which are heated to temperatures many times that of the sun to cause hydrogen isotopes to fuse into heavier elements – means that even limited simulations require enormous processing power. This is why all current models can simulate only portions of the plasma over limited timescales. As a result, physics researchers are constantly looking for ways to make their simulations more efficient.

Over a decade ago, scientists at GA – which operates the DIII-D National Fusion Facility for DOE at GA’s campus in San Diego – developed GYRO (a predecessor to CGYRO) to model the turbulent motion of confined plasma.

“In a toroidal containment device, the plasma fuel is confined by magnetic fields but suffers a very slow leakage due to turbulent behavior,” said Jeff Candy, manager of the Turbulence and Transport Group in GA’s Magnetic Fusion Energy Division. “GYRO has proved to be very accurate at simulating this complex nonlinear process in the core region of DIII-D and other tokamaks in the U.S. and Europe, but modeling the outside edge of the plasma is more challenging.”

The need to accurately model this more complex regime motivated the development of CGYRO. It was built from the ground up by combining the best algorithms from GYRO with new numerical schemes as well as a cutting-edge approach to parallelization that targeted the upcoming generation of multicore and GPU-based architectures.

“CGYRO was intended for operation on petascale systems,” said Igor Sfiligoi, a high-performance computer software developer at GA. “We’ve run it successfully on Titan for several years. But the original version of CGYRO was optimized for older GPUs, which means we weren’t using all the features of more modern GPUs.”

One goal in particular was optimizing CGYRO for use on Summit, Titan’s successor at ORNL. At a GPU hackathon held at the University of Colorado Boulder in July, GA researchers worked with specialists from Nvidia to optimize CGYRO for the latest generation of GPU systems using the OpenACC programming model.

“A GPU-aware message passing interface is now used to move data directly between GPUs, without the need for staging first to the system memory,” Sfiligoi said. “Most of the remaining physics code execution has also been moved to the GPU, where it can now be efficiently executed without the excessive memory movement penalty.”

Since 2014, the Oak Ridge Leadership Computing Facility and its partners have held GPU hackathons to teach new GPU programmers how to leverage accelerated computing and help existing GPU programmers further optimize their codes. In 2018, there were seven such events at locations around the world, one of which was held at the University of Colorado Boulder. The GA team worked with Brent Leback and Craig Tierney from Nvidia at the hackathon.

To validate and benchmark the resulting code at the hackathon, GA researchers needed access to Summit-like hardware. However, they did not have access to Summit nodes and were not expected to get it until 2019 at the earliest. To get around this problem, GA purchased two nodes in early 2018 that would mimic Summit’s fairly exotic processing environment.

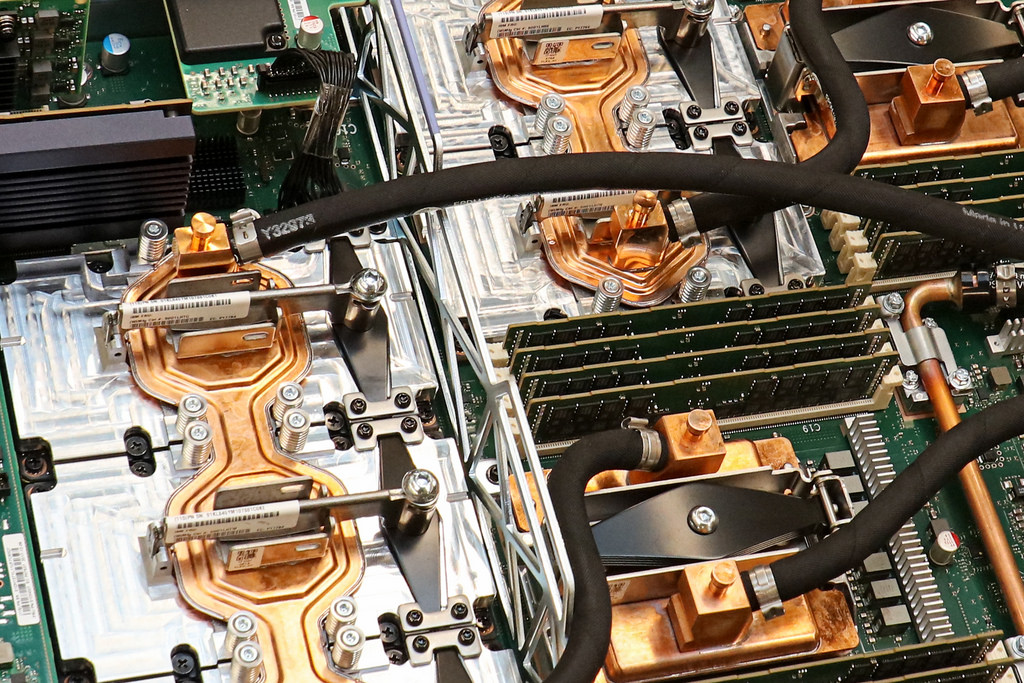

Each node is an IBM AC922 server with two 16-core IBM Power9 CPUs (four hardware threads per core) and four Nvidia Tesla V100 (Volta) GPUs with NVLink, along with 512GB of CPU RAM and 4x16GB of GPU RAM. GA was the first commercial user to acquire a setup like this.
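As a quick tally of what those figures add up to, the short Python sketch below multiplies out the per-node numbers quoted above. The constant and function names are illustrative only and do not come from any GA or IBM tooling.

```python
# Per-node hardware figures as quoted in the article.
CPUS_PER_NODE = 2
CORES_PER_CPU = 16
THREADS_PER_CORE = 4      # four hardware threads per Power9 core
GPUS_PER_NODE = 4
GPU_RAM_GB = 16           # per V100 GPU
CPU_RAM_GB_PER_NODE = 512


def hardware_totals(nodes):
    """Return aggregate resources for the given number of identical nodes."""
    cores = nodes * CPUS_PER_NODE * CORES_PER_CPU
    gpus = nodes * GPUS_PER_NODE
    return {
        "cpu_cores": cores,
        "hardware_threads": cores * THREADS_PER_CORE,
        "gpus": gpus,
        "gpu_ram_gb": gpus * GPU_RAM_GB,
        "cpu_ram_gb": nodes * CPU_RAM_GB_PER_NODE,
    }


# GA's two-node test system.
for name, value in hardware_totals(2).items():
    print(f"{name}: {value}")
```

For the two purchased nodes this works out to 64 CPU cores, 256 hardware threads, 8 GPUs, 128GB of GPU RAM and 1,024GB of CPU RAM.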

The result of the optimization efforts is a three-fold increase in processing speed on those nodes. Because the nodes mirror Summit’s hardware, the new version of CGYRO can be assumed to be optimized for use on Summit, where it should be able to run three times as many simulations in the same amount of time. Given how expensive and competitive supercomputer time is, a three-fold increase in the amount of work that can be done is an important achievement for GA’s plasma physicists.
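To make the arithmetic behind that claim concrete, here is a minimal Python sketch of how a speedup translates into simulations completed within a fixed allocation. The allocation size and per-simulation cost are hypothetical placeholders, not figures from GA; only the three-fold speedup comes from the results described above.

```python
import math


def simulations_in_allocation(allocation_node_hours, node_hours_per_sim, speedup=1.0):
    """Count the whole simulations that fit in a fixed allocation.

    A speedup of s divides the cost of each simulation by s, so the
    same allocation covers s times as many runs.
    """
    if node_hours_per_sim <= 0 or speedup <= 0:
        raise ValueError("cost per simulation and speedup must be positive")
    return math.floor(allocation_node_hours * speedup / node_hours_per_sim)


# Hypothetical numbers, chosen only to illustrate the scaling.
ALLOCATION_NODE_HOURS = 1200.0
NODE_HOURS_PER_SIM = 12.0

before = simulations_in_allocation(ALLOCATION_NODE_HOURS, NODE_HOURS_PER_SIM)
after = simulations_in_allocation(ALLOCATION_NODE_HOURS, NODE_HOURS_PER_SIM, speedup=3.0)
print(before, after)  # prints "100 300"
```

The sketch assumes that the speedup measured on the two test nodes carries over unchanged to a full-scale run, which is the same assumption the estimate above relies on.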

“This improved computational efficiency on next-generation supercomputers will allow CGYRO to precisely compute plasma turbulence levels in the most challenging regions of the device operating space,” Candy said. “That opens up a new range of research that can help get us to commercial fusion power plants.”