Looking for advice on how to deliver HPC to a diverse science user community? MIT’s Lincoln Laboratory has just posted a new paper – Lessons Learned from a Decade of Providing Interactive, On-Demand High Performance Computing to Scientists and Engineers – intended to fill the bill.

“For over a decade, MIT Lincoln Laboratory has been supporting interactive, on-demand high performance computing by seamlessly integrating familiar high productivity tools to provide users with an increased number of design turns, rapid prototyping capability, and faster time to insight. In this paper, we discuss the lessons learned while supporting interactive, on-demand high performance computing from the perspectives of the users and the team supporting the users and the system,” write the authors.

The experiences outlined are derived from Lincoln Lab’s development and operation of “SuperCloud” (specs not given). It is described as “a fusion of the four large computing ecosystems: supercomputing, enterprise computing, big data and traditional databases into a coherent, unified platform. The MIT SuperCloud has spurred the development of a number of cross-ecosystem innovations in high performance databases; database management; data protection; database federation; data analytics; dynamic virtual machines and system monitoring.”

Pointedly, the advice is aimed not at supercomputer centers fixated on maximizing machine utilization but at organizations seeking an ROI that blends ease of use with efficiency in an on-demand environment.

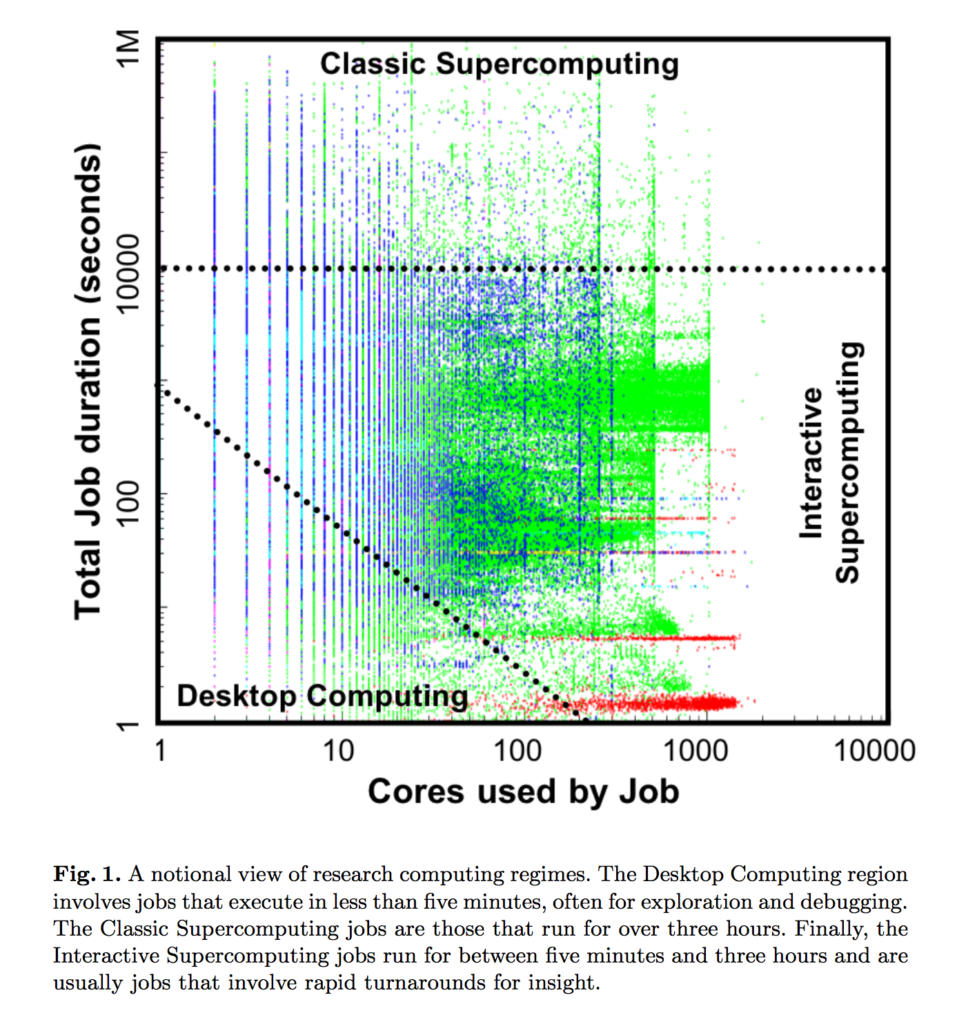

“Virtually all HPC centers report the percentage of system utilization as their metric of success. This choice of metrics often leads to queuing systems and user behavior designed to feed the system with the type of jobs that yield high utilization. However, these utilization-based queuing practices are often at odds with rapid prototyping of algorithms and simulations, exploration of large datasets and real time steering of complicated multi-physics simulations,” according to the report.
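
To make the tension concrete, here is a minimal sketch, not taken from the paper, of how queue wait grows as utilization rises. It assumes a textbook single-server M/M/1 queue, which real HPC schedulers only loosely resemble; the function names and sample numbers are hypothetical and chosen for illustration.

```python
def utilization(busy_node_hours: float, available_node_hours: float) -> float:
    """Fraction of available node-hours that actually ran jobs."""
    if available_node_hours <= 0:
        raise ValueError("available_node_hours must be positive")
    return busy_node_hours / available_node_hours


def mm1_mean_queue_wait(rho: float, mean_service_hours: float) -> float:
    """Mean time a job waits in queue under an M/M/1 model (an assumption).

    Uses the standard result Wq = rho / (1 - rho) * mean service time,
    where rho is the utilization of the single server.
    """
    if not 0 <= rho < 1:
        raise ValueError("rho must satisfy 0 <= rho < 1")
    return rho / (1.0 - rho) * mean_service_hours


if __name__ == "__main__":
    # Hypothetical utilization levels with a one-hour mean job length.
    for rho in (0.50, 0.80, 0.95):
        wait = mm1_mean_queue_wait(rho, mean_service_hours=1.0)
        print(f"utilization {rho:.0%}: mean queue wait {wait:.1f} h")
```

Under this simplified model, a system at 50% utilization makes a job wait about one mean job length, while one at 95% makes it wait about 19, which is the kind of delay that works against interactive prototyping.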

For its own measure of success, Lincoln Lab relied instead on “DARPA’s High Productivity Computing System program where a productivity metric was developed as part of a larger analysis of HPC Return On Investment for a broad range of applications and research domains.”

The report is an interesting blend of history and blueprint. Among the common use cases cited are: algorithm development for sensor signal processing; development of multiple program, multiple data (MPMD) real time signal processing systems; high throughput computing for aircraft collision avoidance system testing; and biomedical analytics to develop medical support techniques for personnel in remote areas.

The authors write, “Unlike traditional HPC applications, most of these capabilities involve prototyping efforts for multi-year, but not multi-decade, mission-driven programs making it even more important that researchers are able to use familiar interactive tools and achieve a greater number of design cycles per day. To enable an interactive high performance development environment, our team turned the traditional HPC paradigm on its head.

“Rather than providing a batch system, training in MPI, and assistance porting serial code to a supercomputer, we developed the tools and training to bring HPC capabilities to the researchers’ desktops and laptops. As common use cases and staff computational preparation change, we routinely update our tools so that we can provide relevant interactive research computing environments.”

You get the general picture. The full report is on arXiv (https://arxiv.org/pdf/1903.01982.pdf).

Reference: “Lessons Learned from a Decade of Providing Interactive, On-Demand High Performance Computing to Scientists and Engineers,” by Julia Mullen, Albert Reuther, William Arcand, Bill Bergeron, David Bestor, Chansup Byun, Vijay Gadepally, Michael Houle, Matthew Hubbell, Michael Jones, Anna Klein, Peter Michaleas, Lauren Milechin, Andrew Prout, Antonio Rosa, Siddharth Samsi, Charles Yee, and Jeremy Kepner. https://arxiv.org/pdf/1903.01982.pdf