When you run compute-bound or memory-bound high performance computing (HPC) applications, or train large, data-intensive machine learning (ML) models on GPU clusters, you need the right mix of compute, storage, and accelerator resources for the job. The vast majority of enterprise workloads run successfully on Google Cloud Platform (GCP) using our general-purpose VMs. However, as more HPC and ML users move to the cloud, we see a need for VMs optimized specifically for HPC and ML workloads, for the latest GPU technology, and for storage partnerships that provide high-performance parallel storage.

Today we are pleased to announce four new capabilities for HPC: new Compute-Optimized VMs and new Memory-Optimized VMs, which expand our Google Compute Engine virtual machine (VM) offerings; a Google Cloud Platform Marketplace solution for Lustre from DataDirect Networks (DDN); and the general availability of NVIDIA T4 GPUs on GCP.

Both new VM types are based on 2nd Generation Intel Xeon Scalable Processors, which we delivered to customers last October, making us the first cloud provider to do so. These processors will also be coming to our general-purpose VMs, giving you a complete portfolio of machine types for workloads across a wide range of memory and compute requirements.

Compute-Optimized VMs

Compute-Optimized VMs (C2) are a new compute family on GCP, offering high per-thread performance and memory speeds that benefit the most compute-intensive workloads, including HPC, electronic design automation (EDA), gaming, and single-threaded applications. The new Compute-Optimized VMs deliver more than 40% better performance than current GCP VMs and can run at a sustained clock speed of 3.8 GHz. C2 VMs also provide full transparency into the architecture of the underlying server platforms, enabling advanced performance tuning. You can choose Compute-Optimized VMs with up to 60 vCPUs, 240 GB of memory, and 3 TB of local storage. Compute-Optimized VMs are currently available in alpha.

Memory-Optimized VMs

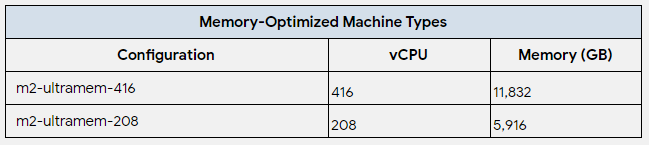

Memory-Optimized VMs (M2) offer the highest memory configurations of any Compute Engine VM. They are well suited for memory-intensive workloads such as large in-memory databases (for example, SAP HANA) and in-memory data analytics. Last July, we announced Memory-Optimized VMs with up to 4 TB of memory. Today's additions to the M2 family offer up to 12 TB of memory and 416 vCPUs, enabling you to run large scale-up workloads on GCP. These VMs are also based on 2nd Generation Intel Xeon Scalable Processors, and the newest Memory-Optimized VMs will be available in the following sizes:

M2 machine types will be available to early access customers this quarter.
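To make the difference between the two families concrete, the short Python sketch below computes memory per vCPU for the largest C2 and M2 configurations quoted in this post. It assumes 12 TB means 12 * 1024 GB; the dictionary and function names are illustrative only and are not part of any GCP API.

```python
# Largest configurations quoted in this post: (vCPUs, memory in GB).
# Assumption: 12 TB is interpreted as 12 * 1024 GB.
MAX_SHAPES = {
    "largest C2 (Compute-Optimized)": (60, 240),
    "largest M2 (Memory-Optimized)": (416, 12 * 1024),
}


def memory_per_vcpu(vcpus: int, memory_gb: float) -> float:
    """Return the GB of memory available per vCPU."""
    return memory_gb / vcpus


if __name__ == "__main__":
    for name, (vcpus, mem_gb) in MAX_SHAPES.items():
        print(f"{name}: {memory_per_vcpu(vcpus, mem_gb):.1f} GB per vCPU")
```

By this rough measure, the largest M2 offers more than seven times as much memory per vCPU as the largest C2, which is what makes it a fit for scale-up, in-memory workloads.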

VM Pricing

The new Compute-Optimized VMs will range from $0.209/hr for a c2-standard-4 instance to $3.13/hr for a c2-standard-60 instance. C2 VMs are also available as Preemptible VMs, starting at $0.0505/hr. Pricing for the newest M2 VMs will be announced at a later date.
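As a rough illustration of how these prices scale, the sketch below derives a per-vCPU hourly rate for each quoted machine type and estimates the cost of a batch job. It assumes simple linear hourly billing with no sustained-use or committed-use discounts, and it assumes the preemptible "starting at" price refers to a c2-standard-4; the job size is hypothetical.

```python
# On-demand hourly prices quoted in this post: machine type -> (vCPUs, USD/hr).
C2_PRICES = {
    "c2-standard-4": (4, 0.209),
    "c2-standard-60": (60, 3.13),
}
# Assumption: the quoted preemptible starting price applies to c2-standard-4.
C2_STANDARD_4_PREEMPTIBLE = 0.0505


def per_vcpu_hour(vcpus: int, price_per_hour: float) -> float:
    """Hourly price divided evenly across the instance's vCPUs."""
    return price_per_hour / vcpus


def job_cost(price_per_hour: float, hours: float, num_vms: int = 1) -> float:
    """Estimated cost assuming linear per-hour billing and no discounts."""
    return price_per_hour * hours * num_vms


if __name__ == "__main__":
    for machine_type, (vcpus, price) in C2_PRICES.items():
        print(f"{machine_type}: ${per_vcpu_hour(vcpus, price):.4f} per vCPU-hour")

    # Hypothetical job: 10 c2-standard-60 VMs running for 8 hours.
    _, price = C2_PRICES["c2-standard-60"]
    print(f"On-demand job estimate: ${job_cost(price, hours=8, num_vms=10):,.2f}")

    ratio = C2_STANDARD_4_PREEMPTIBLE / C2_PRICES["c2-standard-4"][1]
    print(f"Preemptible c2-standard-4 costs about {ratio:.0%} of on-demand")
```

Both quoted on-demand prices work out to roughly $0.052 per vCPU-hour, so under these assumptions cost grows almost linearly with instance size.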

If you’re ready to get started, you can sign up for early access. Once your account is approved for access, you can log in to the Google Cloud Platform Console, use the Google Cloud SDK, or use Google Cloud APIs to launch the new VMs. Stay tuned for updates on beta and general availability.
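For programmatic launches through the Google Cloud APIs, the Compute Engine `instances.insert` REST method accepts a JSON instance resource. The sketch below only builds such a body for a C2 VM; it does not send it. The instance name, zone, and image family are hypothetical placeholders, and your account must already be approved for access to the new machine types.

```python
import json


def c2_instance_body(name: str, zone: str, machine_type: str = "c2-standard-4",
                     preemptible: bool = False) -> dict:
    """Build a request body for the Compute Engine instances.insert method.

    The image family and default network are illustrative choices.
    """
    return {
        "name": name,
        # Machine types are referenced by a zonal partial URL.
        "machineType": f"zones/{zone}/machineTypes/{machine_type}",
        "disks": [
            {
                "boot": True,
                "autoDelete": True,
                "initializeParams": {
                    # Hypothetical choice of a public Debian image family.
                    "sourceImage": "projects/debian-cloud/global/images/family/debian-12",
                },
            }
        ],
        "networkInterfaces": [{"network": "global/networks/default"}],
        # Request a Preemptible VM when preemptible=True.
        "scheduling": {"preemptible": preemptible},
    }


if __name__ == "__main__":
    body = c2_instance_body("hpc-node-1", "us-central1-b", "c2-standard-60",
                            preemptible=True)
    print(json.dumps(body, indent=2))
```

The resulting body would be POSTed to the zone's instances collection (`projects/PROJECT/zones/ZONE/instances`) with an authenticated HTTP client or one of the Google Cloud client libraries.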

DDN Lustre Available on Google Cloud Platform Marketplace

To meet the demand for extremely fast storage for HPC and ML workloads, DataDirect Networks (DDN) has released the Cloud Edition for Lustre in the Google Cloud Platform Marketplace. Simply search for "Lustre" in the GCP Marketplace, configure your Lustre cluster's total size and performance tier, and deploy a Lustre parallel file system with a single click. You can now use the same easily deployed solution that the DDN and Google teams used to capture one of the top spots on IO500, the premier HPC storage benchmark. You can hear more from DDN about their GCP Marketplace solution, and see a demo of it in action, in our Google Cloud Next 19 talk "Technical Deep Dive into Storage for High Performance Computing".

T4 GPUs Now Generally Available

In January, we announced that NVIDIA T4 GPUs were in beta on Google Cloud Platform. Today the T4 GPU is generally available in eight GCP regions, for as little as $0.29 per GPU per hour on preemptible VM instances. The T4 GPU is well suited for many machine learning, visualization, and other GPU-accelerated workloads. Each T4 comes with 16 GB of GPU memory, offers the widest precision support (FP32, FP16, INT8, and INT4), includes NVIDIA Tensor Core and RTX real-time visualization technology, and delivers up to 260 TOPS of INT4 compute performance. A Princeton University researcher had this to say about the T4's price-performance for their cutting-edge neuroscience research:

“We are excited to partner with Google Cloud on a landmark achievement for neuroscience: reconstructing the connectome of a cubic millimeter of neocortex. It’s thrilling to wield thousands of T4 GPUs powered by Kubernetes Engine. These computational resources are allowing us to trace 5 km of neuronal wiring, and identify a billion synapses inside the tiny volume.” — Sebastian Seung, Princeton University
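To put the preemptible T4 price in perspective for a large fleet, the sketch below estimates GPU-only cost. It assumes the quoted $0.29 per GPU-hour rate and excludes the vCPU, memory, and disk charges of the VMs the GPUs are attached to; the fleet size and duration in the example are hypothetical.

```python
# Preemptible T4 price quoted in this post (USD per GPU per hour).
T4_PREEMPTIBLE_PER_GPU_HOUR = 0.29


def t4_fleet_gpu_cost(num_gpus: int, hours: float,
                      price: float = T4_PREEMPTIBLE_PER_GPU_HOUR) -> float:
    """GPU-only cost of a preemptible T4 fleet.

    Assumption: excludes the charges for the VMs the GPUs are attached to.
    """
    return num_gpus * hours * price


if __name__ == "__main__":
    # Hypothetical fleet: 1,000 T4 GPUs running for 24 hours.
    print(f"GPU cost: ${t4_fleet_gpu_cost(1000, 24):,.2f}")
```

Because preemptible VMs can be stopped at any time, a real estimate would also need to account for work lost to preemption and the time spent restarting it.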

You can hear about customer use cases and the latest updates to GPUs on GCP in our Google Cloud Next 19 talk “GPU Infrastructure on GCP for ML and HPC Workloads”. Additional information on our GPU offerings can be found on the Google Cloud Platform GPU page.