So much attention is given to forthcoming exascale hardware – Aurora is scheduled to be the first U.S. exascale system to go live around 2021/22 – that the U.S. Exascale Computing Project’s (ECP) work to develop a robust software ecosystem to coax the most from these exascale machines often gets short shrift. That’s too bad because in many ways there is more to talk about with regard to ECP, which has already released many ‘products’.

On Tuesday, Doug Kothe, midway through his second year as ECP director, provided a high-speed tour of ECP progress in a livestreamed talk for ACM. The talk was officially entitled The Exascale Computing Project and the Future of HPC, and Kothe quipped at the start, “I’ll leave it to the audience to ascertain the future of HPC [and] do my best to get through the depth and breadth of what we’ve been up to.” Good choice. There’s too much to cover.

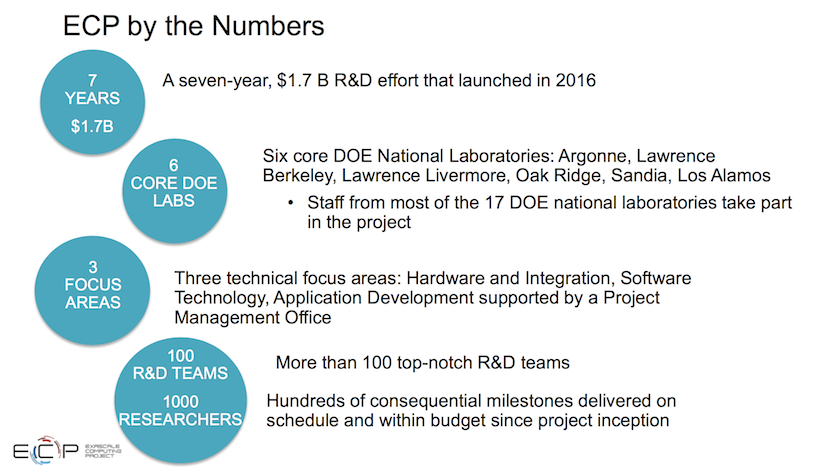

Quick backgrounder: ECP, you may know, was formed in 2016 as part of the overall U.S. Exascale Computing Initiative (ECI) being run by DoE. The ECI, among other things, procures the exascale systems. ECP’s charge is to ensure there’s an exascale-ready software ecosystem to get the most from exascale hardware when it arrives. You may not know that ECP has a finite lifetime and is scheduled to end in 2023. Kothe calls ECP a seven-year sprint.

Organizationally, ECP is overseen by a board of directors chaired by Bill Goldstein, director of Lawrence Livermore National Laboratory, with Thomas Zacharia, director of Oak Ridge National Laboratory, as vice chair. There is also an ECP Industry Council led by General Electric, which weighs in on functional requirements and acts in an advisory capacity but has no formal review authority. Kothe notes that, perhaps unlike past DoE efforts, ECP is trying to produce hardened, production-quality software that is released regularly, and that ECP has firm milestones to keep it on track.

Much of Kothe’s presentation is likely familiar to close watchers of ECP; nevertheless, the scope of ECP activities presented along with pointers to sources for more material was impressive. It was almost too much but as an ECP resource the ACM recording and slide deck is a keeper if you can get it. ECP communication lead Mike Bernhardt says DoE is reviewing the talk now before publicly releasing it but that should happen soon (update: recording plus slides now available).

Kothe zipped through about 70 slides in under an hour. He made clear throughout his presentation that ECP’s various goals specifically supporting DoE missions are matched by the expectation that ECP-developed technologies will also find broad use within HPC. It turns out ECP has already been busy churning out applications, SDKs, contributions to open source, and an extreme-scale software stack.

It’s probably worth restating what constitutes DoE’s definition of ‘capable exascale’ for the new systems since that’s the official goal. Broadly, ECI calls for at least two diverse system architectures. Each should deliver 50x the performance of today’s 20 petaflop systems and 5x the performance of Summit. The systems should function with sufficient resiliency (an average fault rate of ≤1 per week) and include a software stack that meets the needs of a broad spectrum of applications and workloads.
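As a quick sanity check, the arithmetic behind those targets can be sketched in a few lines of Python. The multipliers and the 20-petaflop baseline come from the paragraph above. The implied Summit figure and the mean-time-between-faults value are inferences from those numbers, not figures quoted in the talk, and the variable names are purely illustrative.

```python
# Back-of-the-envelope check of the 'capable exascale' targets quoted above.
PETAFLOPS = 1e15  # floating-point operations per second in one petaflop
EXAFLOPS = 1e18   # floating-point operations per second in one exaflop

baseline_pf = 20          # "today's 20 petaflop systems" (from the article)
speedup_vs_baseline = 50  # 50x target (from the article)
speedup_vs_summit = 5     # 5x target (from the article)

# 50 x 20 petaflops, expressed in exaflops.
target_flops = speedup_vs_baseline * baseline_pf * PETAFLOPS
target_exaflops = target_flops / EXAFLOPS

# Summit performance implied if both targets describe the same machine.
# This is an inference from the two multipliers, not a quoted number.
implied_summit_pf = speedup_vs_baseline * baseline_pf / speedup_vs_summit

# A fault rate of at most one per week means the mean time between faults
# is at least one week, i.e. at least 168 hours.
max_faults_per_week = 1
min_hours_between_faults = (7 * 24) / max_faults_per_week

print(f"Target: {target_exaflops:.1f} exaflops")
print(f"Implied Summit performance: {implied_summit_pf:.0f} petaflops")
print(f"Minimum mean time between faults: {min_hours_between_faults:.0f} hours")
```

Under these assumptions the two multipliers agree with each other: 50 times 20 petaflops is one exaflop, which is also five times a machine in the 200-petaflop class.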

Presented here are just a few of Kothe’s slides and his accompanying comments.

There are six application target areas (shown below), which were selected in 2016 in conjunction with DoE sponsors. Each of the roughly 20 applications addresses a strategic problem of interest to a program office. Kothe said, “It wasn’t easy to downselect to those” and also emphasized ECP “is not waiting for exascale systems [to arrive] but working hard on the current systems (e.g. Summit, Sierra).” So far, he said, performance is exceeding expectations.

Co-design, of course, has been a key component from ECP’s start. One area being focused on is motifs. “Typically each application has a small set of motifs, a common pattern of computation. We’ve chosen in terms of co-design to go after motifs and really see if we can make those motifs perform well on the exascale and pre-exascale systems. Here (below) you see the list of six co-design centers and a proxy application [center],” said Kothe. “[They] all have proven their worth with regard to developing, not just best practices and lessons learned, but libraries and components that we view as sort of next generation middleware that many applications will use.”

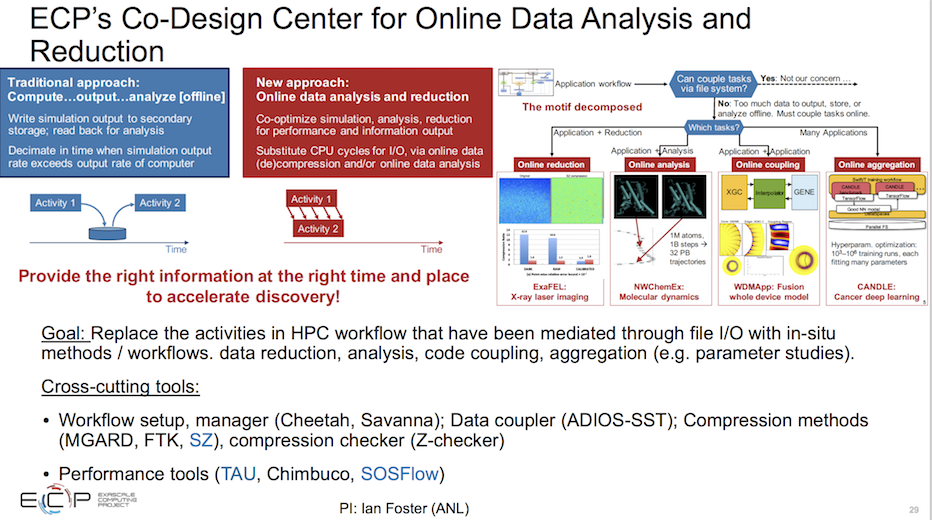

Interestingly, one of the co-design efforts is not motif-focused. It’s the Co-Design Center for Online Data Analysis and Reduction (CODAR) working on approaches to workflow management and data analysis.

“Here (below) you see the traditional approach. An app runs and dumps its data and another app runs and picks it up and does the analysis. We really can’t afford to do that. There is a disparity in the hardware in terms of I/O bandwidth relative to the memory bandwidth. We really want to be able to do online reduction. In other words the application runs, and we’re doing reduction of data as it runs, and processing the data as it runs, and that analysis may be passive or active back on the application. We may [sometimes] need a couple applications running on the hardware at the same time that may need to talk back and forth in some sort of consistent way,” he said.

“This center is essentially releasing an entire workflow management system that a number of applications [in areas such as] fusion, material science, molecular dynamics, and climate, are looking at to leverage,” said Kothe.
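The online-reduction idea Kothe describes can be illustrated with a toy sketch. It does not represent CODAR’s software: `simulate_step` is a hypothetical stand-in for an application timestep, and `RunningStats` is a generic streaming reducer (Welford’s algorithm) chosen only to show how summary statistics can be accumulated while the application runs, without writing every timestep to disk for a second program to read back.

```python
import random


class RunningStats:
    """Streaming mean/variance/min/max via Welford's online algorithm."""

    def __init__(self):
        self.count = 0
        self.mean = 0.0
        self._m2 = 0.0  # running sum of squared deviations from the mean
        self.minimum = float("inf")
        self.maximum = float("-inf")

    def update(self, value):
        """Fold one new sample into the running statistics."""
        self.count += 1
        delta = value - self.mean
        self.mean += delta / self.count
        self._m2 += delta * (value - self.mean)
        self.minimum = min(self.minimum, value)
        self.maximum = max(self.maximum, value)

    @property
    def variance(self):
        """Population variance of all samples seen so far."""
        return self._m2 / self.count if self.count else 0.0


def simulate_step(step, cells=1000, seed=0):
    """Hypothetical stand-in for one timestep of a simulated field."""
    rng = random.Random(seed + step)
    return [rng.gauss(0.0, 1.0) + 0.01 * step for _ in range(cells)]


def run_with_online_reduction(steps=50):
    """Reduce each timestep as it is produced instead of storing it."""
    stats = RunningStats()
    for step in range(steps):
        field = simulate_step(step)
        for value in field:
            stats.update(value)
        # The full field is discarded here; only the summary survives.
    return stats


if __name__ == "__main__":
    summary = run_with_online_reduction()
    print(summary.count, summary.mean, summary.variance)
```

The trade-off in this sketch is the one the quote points at: the reducer keeps a handful of numbers in memory rather than the full output, so the I/O system never has to absorb the raw data, at the cost of deciding in advance which quantities are worth keeping.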

Not surprisingly, ECP, like many in HPC, is scrambling to dive into machine learning. The ExaLearn center was created just last fall.

“There are several use cases of interest to ECP. Obviously we’ll be interested in picking industry frameworks wherever we can. So the goal isn’t to recreate good technologies like TensorFlow that are out there. In particular we are interested in surrogate models for uncertainty quantification and error estimation, control systems, and inverse problems. A good [use case] example for machine learning is looking at our experimental facilities. Here’s a light source (slide below), and I think there are at least five [similar light sources] in the labs,” said Kothe.

“These are multi-million-dollar facilities that are getting great experimental return but we think we can help even more with everything [from] up-stream design of the light source, controlling the beam lines in real-time, to interfacing with the data acquisition system to help understand the data real-time [and] being able to do fast analysis onsite, and ultimately sending data to the exascale system. This is potentially a high return area.”

Clearly developing a wide range of software technology – tools and the stack – is a key imperative for ECP. “The philosophy here is to be prudent and extend current technologies where possible, build a comprehensive software stack, but leverage frankly the hundreds of man-years of that investment that we have,” said Kothe.

In delivering these capabilities Kothe said the emphasis is on delivering high quality, production software – “probably something we haven’t done well in DoE in the past”. Convenient delivery of these tools is also important, “recognizing we can encapsulate these products into a smaller set of development kits that have kind of like products packages that are containerized.” ECP currently supports Docker, Charliecloud, Singularity, and Shifter container technology.

Performance milestones are also part of ECP delivery requirements, and Kothe points to work with hypre to leverage mixed-precision computation as an example: “We were investigating going from 64-bit to 32-bit integer trying to take advantage of the accelerated hardware. In this case we gained about a 25 percent performance increase just by investigating where can we go to lower precision and still do the job. I just want to point out we are a project with milestones kind of every three or four months and this is a good example of a hypre milestone.”
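To make the 64-bit versus 32-bit integer point concrete, here is a minimal sketch of one reason narrower integers can help: index arrays take half the memory, so less data has to move. This is not hypre code and does not reproduce the 25 percent figure. The helper names (`tridiagonal_column_indices`, `pack_indices`) are hypothetical, and the sketch assumes a platform where Python’s `array` typecode `"i"` is 4 bytes and `"q"` is 8 bytes, which it checks before narrowing.

```python
from array import array

INT32_MAX = 2**31 - 1


def tridiagonal_column_indices(n):
    """Column indices of the nonzeros of an n x n tridiagonal matrix, row by row."""
    cols = []
    for row in range(n):
        for col in (row - 1, row, row + 1):
            if 0 <= col < n:
                cols.append(col)
    return cols


def pack_indices(indices):
    """Store indices in 32-bit integers when every value fits, else 64-bit."""
    fits_32bit = all(0 <= i <= INT32_MAX for i in indices)
    # Typecode "i" is a C int; only narrow if it really is 4 bytes here.
    if fits_32bit and array("i").itemsize == 4:
        return array("i", indices)
    return array("q", indices)


cols = tridiagonal_column_indices(1000)
wide = array("q", cols)      # always 64-bit storage
narrow = pack_indices(cols)  # 32-bit storage when it is safe

wide_bytes = wide.itemsize * len(wide)
narrow_bytes = narrow.itemsize * len(narrow)
print(f"{len(cols)} indices: {wide_bytes} bytes wide, {narrow_bytes} bytes narrow")
```

The guard in `pack_indices` reflects the “still do the job” caveat in the quote: narrowing is only safe when every index fits in the smaller type, so very large problems would still need 64-bit storage.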

On balance Kothe’s talk and slides present a reasonably full picture of the scope of ECP activities. HPCwire will provide a link to those resources when they become available. Stay tuned. (update: link to recording and slides now available)

Link to slides: https://on24static.akamaized.net/event/19/82/47/0/rt/1/documents/resourceList1556627172809/dougkotheexascaletechtalkslides1556627165263.pdf