Ongoing research initiatives are showing that artificial intelligence may be able to predict a person’s likelihood of developing Alzheimer’s disease with a high level of accuracy.

As the population ages, the specter of Alzheimer’s disease grows ever more ominous, like a dark cloud on the horizon threatening a massive and devastating storm.

In reality, this storm has already begun. Today, 5.8 million Americans are living with Alzheimer’s, according to the Alzheimer’s Association. By 2050, this number is projected to rise to nearly 14 million unless medical breakthroughs prevent, slow or cure the disease. Beyond its devastating impact on individuals and families, the disease carries astronomical societal costs: in 2019, Alzheimer’s and other dementias will cost the United States $290 billion, and by 2050 these costs could rise as high as $1.1 trillion.[1]

While we do not yet have a cure for Alzheimer’s, more accurate and timely diagnosis is one of the keys to fighting the disease. Earlier diagnosis could help patients fight back by starting treatment sooner and by engaging in non-pharmacologic therapies, such as exercise and cognitive stimulation, that have been shown to benefit people with Alzheimer’s dementia.[2]

There’s good news on this front. Multiple academic research initiatives are showing that artificial intelligence could soon be used to help doctors identify patients likely to develop Alzheimer’s disease.

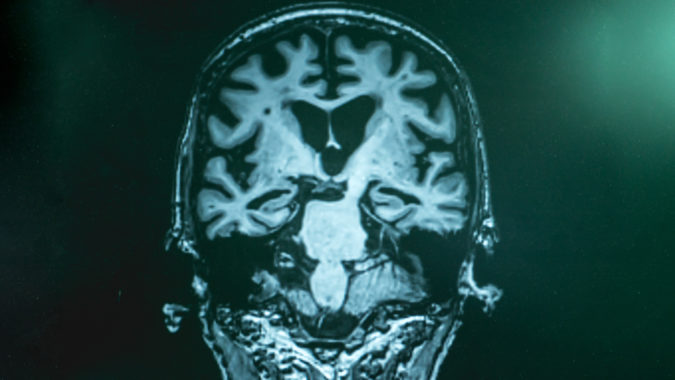

In one of these initiatives, now under way at McGill University in Canada, a team of scientists has trained an AI algorithm to accurately predict cognitive decline leading to Alzheimer’s disease. The algorithm learns signatures from magnetic resonance imaging (MRI), genetic and clinical data, and uses them to help predict whether an individual’s cognitive faculties are likely to deteriorate toward Alzheimer’s within the next five years, according to the team’s findings, published in the journal PLOS Computational Biology.[3]

One of the team members, Dr. Mallar Chakravarty, a computational neuroscientist at the Douglas Mental Health University Institute, notes that this algorithm could help get people on the path to treatment sooner.

“At the moment, there are limited ways to treat Alzheimer’s and the best evidence we have is for prevention,” Chakravarty says in a McGill University news release. “Our AI methodology could have significant implications as a ‘doctor’s assistant’ that would help stream people onto the right pathway for treatment. For example, one could even initiate lifestyle changes that may delay the beginning stages of Alzheimer’s or even prevent it altogether.”[3]

Building better algorithms

From here, a key challenge for the McGill research team is to refine its AI algorithms to improve their predictive capabilities. To that end, Dr. Luke Wilson, a Dell EMC data scientist, and his colleagues in the Dell EMC HPC and AI Innovation Lab are working with McGill researchers to adapt and improve the algorithms for faster disease identification.

Wilson notes that modern 3D medical imaging systems can easily produce hundreds of gigabytes of data per scan, and that training AI models on such massive data objects requires computers with hundreds of gigabytes or even multiple terabytes of memory. In his view, scaling across multiple large-memory systems powered by 2nd Generation Intel® Xeon® Scalable processors is the only way to solve these complex image-processing problems in a reasonable amount of time.

“These AI techniques, which we’re enabling on Dell EMC HPC solutions like the Zenith supercomputer in our HPC and AI Innovation Lab, are critical to developing the high-quality models that will make AI-augmented medicine a reality,” Wilson says. “We’re excited to be working with McGill University to enable the breakthroughs that will improve people’s lives in the future.”

To learn more

- For a close-up look at a reference architecture for an enterprise imaging platform for healthcare organizations, read the Intel white paper “Enterprise Image Platform from Dell EMC and Intel.”

- To explore the computing infrastructure and solutions that power AI-driven applications, including Dell EMC Ready Solutions for HPC and AI, visit an AI Experience Zone in a Dell Technologies Customer Solution Center near you.

Endnotes:

1. Alzheimer’s Association, “Facts and Figures,” accessed August 22, 2019.

2. Alzheimer’s Association, “2019 Alzheimer’s Disease Facts and Figures” report.

3. McGill University, “AI Could Predict Cognitive Decline Leading to Alzheimer’s Disease in the Next 5 Years,” October 4, 2018.