Weather and climate applications are some of the most important uses of HPC – a good model can save lives, as well as billions of dollars. But many weather and climate models struggle to run efficiently in their HPC environments, with users reporting “fundamentally inappropriate” node and CPU designs and job efficiencies below one percent. So when Bob Sorensen, vice president of research and technology at Hyperion Research, reached out to weather and climate organizations, he had a tantalizing question for them to answer: “What’s your dream HPC?”

Finding the dream HPC system for weather and climate

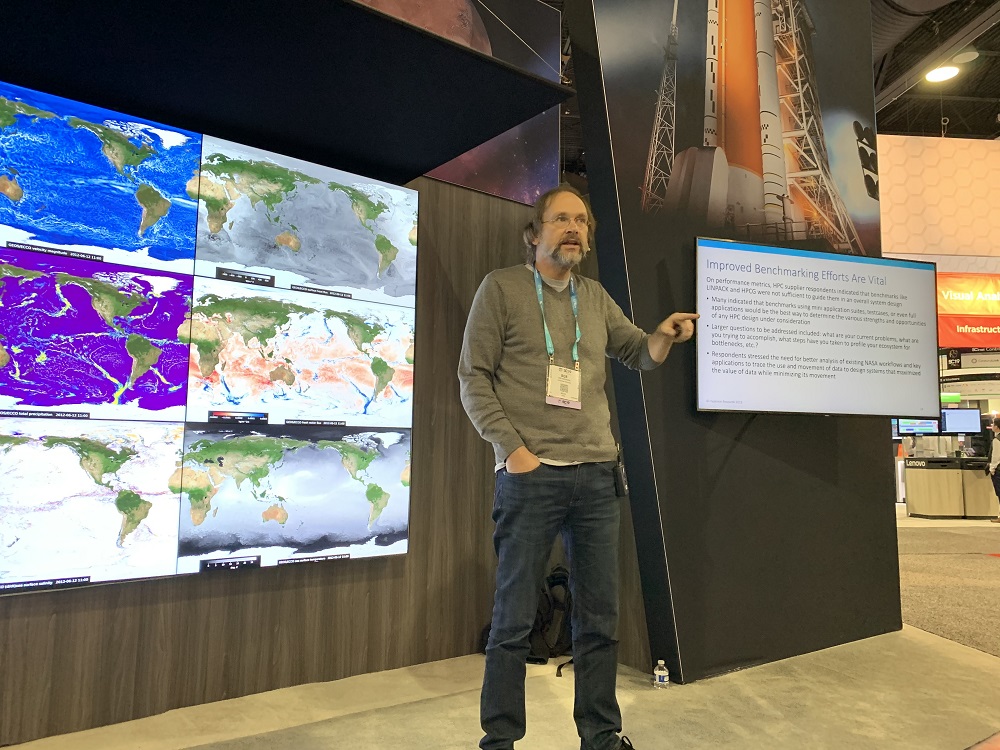

Hyperion Research, Sorensen explained at SC19, had been approached by NASA about a year earlier to explore options for bespoke HPC solutions targeted at weather and climate research. To tackle this question, Hyperion conducted a two-phase study. First, they surveyed 15 weather and climate organizations in the U.S. and Europe, including the European Centre for Medium-Range Weather Forecasts (ECMWF), Los Alamos National Laboratory (LANL), the National Oceanic and Atmospheric Administration (NOAA), Oak Ridge National Laboratory (ORNL), the University Corporation for Atmospheric Research (UCAR), the University of Delaware and more.

“The focus of the study was to gather insights through a series of surveys with not only weather people from around the world,” Sorensen explained, “but also taking what we did in those surveys to understand what their requirements were for producing weather and climate research.”

Then, after asking those organizations about their needs, they approached major HPC suppliers – including Dell EMC, Cray, HPE and IBM – and surveyed them about the challenges and opportunities of developing a system suited to weather and climate applications. Sorensen said they wanted to “talk to HPC vendors and say, ‘what would be some of the options for producing that kind of system if it became available? How would you participate? What would your response be?’”

Phase one: The weather and climate organizations

“The concern we heard most has to do with feeding the beast,” Sorensen said. “Everyone was relatively happy with the CPU capabilities of their system, but bandwidth was considered to be extremely problematic. We heard time and time again that memory and storage latency and bandwidth are the big problems. It’s why the existing weather and climate jobs don’t run as effectively as they could.”

“If you have a data-starved CPU,” he said, “it doesn’t matter how fast your processor is.”
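The "data-starved CPU" point can be illustrated with a simple roofline-style bound, in which attainable performance is the lesser of peak compute and memory bandwidth multiplied by the kernel's arithmetic intensity. The sketch below is not from the Hyperion study: the function name and every hardware and kernel figure in it are hypothetical values chosen only to show the effect.

```python
def attainable_gflops(peak_gflops, mem_bandwidth_gbs, flops_per_byte):
    """Roofline-style bound: capped by compute or by memory traffic, whichever is lower."""
    return min(peak_gflops, mem_bandwidth_gbs * flops_per_byte)


# Hypothetical figures for illustration only (not measurements from the study).
INTENSITY = 0.25   # FLOPs per byte moved; assumed low-intensity, stencil-like kernel
PEAK = 3000.0      # GFLOP/s, assumed node peak
BANDWIDTH = 200.0  # GB/s, assumed memory bandwidth

base = attainable_gflops(PEAK, BANDWIDTH, INTENSITY)
faster_cpu = attainable_gflops(2 * PEAK, BANDWIDTH, INTENSITY)      # double the compute
more_bandwidth = attainable_gflops(PEAK, 2 * BANDWIDTH, INTENSITY)  # double the bandwidth

print(f"baseline:          {base:.0f} GFLOP/s ({100 * base / PEAK:.1f}% of peak)")
print(f"2x faster CPU:     {faster_cpu:.0f} GFLOP/s")
print(f"2x more bandwidth: {more_bandwidth:.0f} GFLOP/s")
```

Under these assumed numbers, doubling the processor's peak leaves the bound unchanged, while doubling memory bandwidth doubles it. That is the pattern the respondents described.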

Organizations cited a lack of diversity in processor design and selection, Sorensen said, and showed eagerness to integrate AI into weather and climate workloads by using machine learning to optimize jobs. They also expressed a general reluctance to introduce GPUs into their workloads in serious ways, arguing that the “pain and suffering” required to integrate GPUs made them currently non-viable. And, after all, the respondents argued, if they were still bottlenecked by interconnect issues, GPUs would just widen the gap between processing power and results.

The weather and climate organizations, Sorensen said, “expressed their opinions very vociferously.” He stressed that the organizations were not united on all fronts (“We didn’t see a general, universal agreement”), and that even within organizations, it was “four, five, six, ten people” arguing in a room about how they should respond to the survey questions.

“Even though you can argue that they’re all modeling the same Earth,” he reflected, “there’s a lot of different ways to do that.”

The respondents were also concerned about the scalability of their code, acknowledging that much of it would need to be rewritten – but, they said, they currently lacked the strong base of software development tools they would need to support rewriting.

Phase two: The vendors

“Everyone said, ‘Hey, we’re really interested in exploring this,’ but the level of commitment varied greatly,” Sorensen said of the vendors surveyed. Even at $10- or $100-million levels of buy-in, he explained, “they said that the opportunity costs of concentrating effort … just simply was too great, unless that particular box had applicability across a wider range of areas.” Instead, the vendors steered their answers toward flexibility: “How can you take that range of issues that are available out there in terms of the design flexibility of HPC, and turn it into a machine that most completely meets your requirements?”

They also expressed confusion at the unclear requirements of the weather and climate sector, a result of its wide and varied workloads. “Vendors,” Sorensen said, “were very unclear on what really matters to these folks.” Furthermore, vendors were worried that if they tried to cater to a wide range of needs, the systems would have “too many cooks.” (“It will respond to everyone’s requirements equally, which means it doesn’t respond to any of those requirements very well,” Sorensen said.)

“Almost every vendor,” Sorensen continued, “said if you could spend a dollar on anything, spend it on software modernization.” Vendors stressed that more performance-per-dollar could be gained from investing in leveraging technology from the last few years: heterogeneous architectures, high-bandwidth memory, burst buffers, SSD/spinning disk combinations and more. They acknowledged that this would be an “exceedingly painful operation,” but, Sorensen said, the choice seemed to be whether to “fight one goal today, or fight two goals tomorrow.”

Moving forward

“Upfront codesign efforts are critical,” Sorensen said, stressing the need to “sit down with the vendors,” who, he said, were probably in the best position to know what would be available in the coming years. Furthermore, he suggested, “any of these codesign efforts should seek to engage the wider weather and climate community” – not just a select few organizations.

Sorensen also highlighted the need for effective benchmarking. “This would be, I think, a critical element in NASA being able to assess the value of particular efforts – to say not ‘We got a system that is 500 petaflops,’ but ‘We got a new system that’s running this application 37 times faster than the machine from two years ago.’”

Sorensen suggested that weather and climate organizations embrace new workload compositions, such as AI for modeling and simulation. “Think about how you can enrich the modeling and simulation environment by using big data analytics to help you better some of your solutions,” he said. Beyond AI, he floated hybrid deployments both as a way to absorb large workload fluctuations and as a testbed for weather and climate researchers to experiment with new hardware and software offerings.

Finally, Sorensen saw opportunities for mutual benefit between sectors. “Find verticals that are in the same position that you’re in,” he urged. “Because if you go to a vendor and say ‘We really like this, is this something you’d be able to supply – oh and by the way, the pharmaceutical guys would love it, the oil and gas guys would love it and the automotive sector would love it too!’, you’re going to get the sales guy sitting up and listening to you.”

“I don’t think this is unique to the NASA environment,” Sorensen concluded. “And I don’t think it’s unique to the weather environment, either. I think that there’s probably an awful lot of use cases out there that haven’t been modernized yet, haven’t really adapted to some of the changes going on in the HPC sector in, say, the last five years.”

“I think it’s admirable that NASA is one of the first groups to step forward and say, ‘Let’s start thinking about this process.’”