Last week, the day before the El Capitan processor disclosures were made at HPE’s new headquarters in San Jose, Steve Scott (CTO for HPC & AI at HPE, and former Cray CTO) was on hand at the Rice Oil & Gas HPC conference in Houston. He was there to discuss the HPE-Cray transition and blended roadmap, as well as his favorite topic: Slingshot, Cray’s eighth-generation networking technology.

HPE announced its intention to acquire Cray last May (2019) for $1.3 billion. At the time, Cray’s Shasta architecture had been selected for two U.S. exascale contracts — Aurora at Argonne and Frontier at Oak Ridge — and it would soon secure the third and final outstanding Department of Energy exascale contract, El Capitan at Livermore. (Note, as we reported last August, the DOE is not seeking a second exascale system for Argonne under the CORAL-2 procurement project.)

With excitement building around its exascale wins, Cray’s profile has only grown stronger as the company was brought into HPE, bucking the trend that we see in many M&As. As Scott said at the Rice event, “Cray went away as an entity, but the Cray systems and Cray brand will definitely persist.”

The companies were fully merged by Jan. 1, 2020.

The way Scott tells it, the transition went smoothly. “The transaction closed in September of 2019, and it literally took one month for organizations to be fully combined. We’re not running as kind of a little subsidiary off the side,” he said.

Pete Ungaro (SVP & GM, HPC & AI at HPE, formerly Cray CEO) runs the combined HPC and AI organization inside HPE. The group has also pulled in additional HPE units: Mission Critical (includes Superdome Flex), the Edge line, and the Moonshot division all report to Ungaro. “Within one month, we had a combined blended leadership team, and we have one organization working as one team,” said Scott.

Consolidation of the product roadmaps was completed inside of two months, in time for SC19, according to Scott. “Within the first month and a half, we had pretty much fully blended our storage and compute roadmaps. It was relatively easy to do because they were somewhat complementary. There were some things that we were doing in both companies, and we chose one,” he said.

Liquid-cooled infrastructure was one of the overlapping technologies, and that choice fell to Cray’s design. Some of the air-cooled commodity cabinets that Cray was developing will be replaced by the HPE Apollo. The storage roadmaps were merged together under ClusterStor.

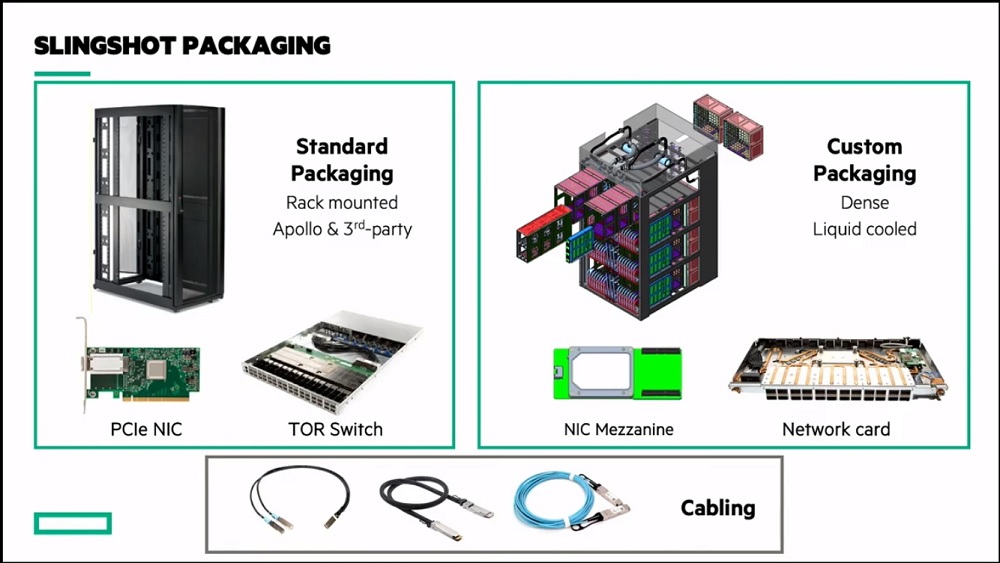

The HPE Apollo line, composed of standard 19″ rack systems, has been brought into the Shasta fold, along with the Cray-developed dense, scale-optimized cabinets, now known as Olympus.

“[Pre-Shasta] from a hardware perspective on the XC systems, you had one liquid-cooled cabinet and then if you wanted to have I/O nodes, you had to have them designed into this customized cabinet,” said Scott. “And if you wanted other stuff that didn’t fit in the customized cabinet, you basically had to buy a separate system and hook it up to the side through the I/O subsystem and share it. That all goes away with Shasta. There’s two physical infrastructures: the Apollo infrastructure coming from HPE, and the Olympus infrastructure, which is the dense-liquid cooled infrastructure. You can kind of get anything under the sun to put into the Apollo infrastructure. But then we do a smaller number of custom blades, high performance blades, that are really intended to optimize for the key computational technologies that go into the Olympus infrastructure and that has very high density and direct warm water cooling.”

Of the combined Shasta lineup, Scott said, “It’s the same interconnect that spans them and it’s the same software environment. It’s literally just a physical infrastructure choice. It makes no difference in terms of the look and feel of the system, in terms of the performance of the software and the user experience–it’s literally just a packaging choice.”

In a follow-up email exchange, Scott clarified that HPE still offers the CS line, the commodity Cray line with Appro roots. Recall that the Fujitsu-Cray A64FX boards are supported by the CS500 architecture, a decision that was driven by time-to-market. Long term, HPE expects the CS line to be supplanted by the Apollo line, which will also provide the expanded physical infrastructure for the Shasta supercomputers. Further, HPE is still selling Cray XC systems even as it introduces the new Shasta systems.

With the Olympus architecture, Scott notes, every cabinet has a stack of four chassis on the left and another four on the right. Each chassis has eight compute blades that plug into the front, for up to 64 compute blades in a single cabinet. From the back, one to eight network cards can be plugged in per chassis, which makes the amount of network bandwidth configurable.

There is direct liquid cooling to all the components, including the optical transceivers for the active optical cables, the memories, and the processors, etc. One cabinet supports up to 512 high wattage GPUs plus 128 CPUs and up to 64 network switches. Currently, Olympus cabinets are shipping with up to 250 kilowatts, and soon that will go to 300 kilowatts, and then 400 kilowatts in a single cabinet, said Scott.
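The cabinet figures Scott cites fit together arithmetically, and a short Python sketch makes that explicit. The chassis, blade, switch, GPU, CPU and kilowatt numbers come from the paragraphs above; the per-blade averages are derived here for illustration and are not a blade specification from HPE. The power figure in particular assumes the whole cabinet budget goes to compute blades, which ignores switches and cooling overhead.

```python
# Olympus cabinet arithmetic, using only figures quoted in the article.
STACKS_PER_CABINET = 2        # one stack on the left, one on the right
CHASSIS_PER_STACK = 4
BLADES_PER_CHASSIS = 8
MAX_SWITCHES_PER_CHASSIS = 8  # one to eight network cards per chassis


def cabinet_capacity(switches_per_chassis=MAX_SWITCHES_PER_CHASSIS):
    """Return chassis, blade and switch counts for one cabinet."""
    if not 1 <= switches_per_chassis <= MAX_SWITCHES_PER_CHASSIS:
        raise ValueError("switches_per_chassis must be between 1 and 8")
    chassis = STACKS_PER_CABINET * CHASSIS_PER_STACK
    return {
        "chassis": chassis,
        "blades": chassis * BLADES_PER_CHASSIS,
        "switches": chassis * switches_per_chassis,
    }


def average_per_blade(total, blades):
    """Derived average per blade (an illustration, not a product spec)."""
    return total / blades


full = cabinet_capacity()
gpus_per_blade = average_per_blade(512, full["blades"])  # 512 GPUs per cabinet
cpus_per_blade = average_per_blade(128, full["blades"])  # 128 CPUs per cabinet

# Rough upper bound: assumes all cabinet power is available to the blades.
kw_per_blade = {kw: average_per_blade(kw, full["blades"]) for kw in (250, 300, 400)}

print(full, gpus_per_blade, cpus_per_blade, kw_per_blade)
```

At the maximum configuration this reproduces the 64 blades and 64 switches quoted above, and it implies an average of eight GPUs and two CPUs per blade in the densest GPU cabinet.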

In discussing the reasons for moving to Shasta, Scott emphasized flexibility and upgradability. “The XC system that we’ve been shipping for a number of years is great in lots of ways but it’s not flexible; you can have any number of nodes you want for your blade as long as it’s four; you can have any amount of network bandwidth per node as long as it’s one PCI Gen3 x16 and the node can be any size you want, as long as it’s that, right? It’s a very inflexible design. And we hit limits there especially as we started looking at hotter and hotter processors, and we just couldn’t accommodate in that system design. So Shasta is designed to have a wide diversity of processors, all shapes and sizes, and particularly, of increasingly higher and higher wattages.”

Cray’s first wins with Shasta reflect this diversity at the node. There’s Aurora at Argonne (two Intel Xeon CPUs and six of the Xe GPUs, connected by Compute Express Link [CXL]); Perlmutter at NERSC (two AMD Epyc Milan CPUs and four Nvidia Volta-Next GPUs connected by PCIe Gen4); Frontier at Oak Ridge (one custom AMD Epyc CPU plus four Radeon GPUs connected by an enhanced Infinity Fabric); and El Capitan at Livermore (AMD’s ‘Genoa’ Zen4 Epyc CPU plus Radeon Instinct GPUs in a one-to-four ratio, connected by AMD’s 3rd Gen Infinity Fabric).

While all of these are heterogeneous systems targeting “big flops,” Scott also discussed the CPU-based compute blades going into NERSC Perlmutter, encompassing four dual-socket AMD Epyc “Milan” nodes.

Cray also supports Marvell ThunderX2 Arm processors in its XC50 architecture, as in the recent refresh win (Isambard2) for GW4/UK Met, and supports the Fujitsu A64FX chips in its CS500 system, as already mentioned.

Scott, who has led the design of several generations of supercomputing interconnects, made sure to save time in his presentation to discuss Cray’s new Slingshot network, a pillar of the Shasta architecture and the interconnect on the three DOE exascale systems that are underway. Scott highlighted Slingshot’s pivot to Ethernet, its feeds and speeds, quality of service and congestion control features.

“We have decided to stop building proprietary networks and instead adopt Ethernet. The world is going to Ethernet, so we decided to stop fighting them and instead join them, but instead of just using a commodity Ethernet, we are redesigning Ethernet and bringing HPC to Ethernet. So we have standard Ethernet connectivity at the edges; we can talk to standard NICs; you can talk to other datacenter switches, etc. But inside it’s…a state of the art HPC fabric,” said Scott.

“Our Rosetta switch is 64 ports times 200 gigabits per second,” Scott continued. “This allows you to build really big systems–like those exascale systems–with a network diameter of just three switch to switch hops. The diameter is three whether it’s two cabinets or 20 cabinets or 200 cabinets, which is the neat thing about this Dragonfly topology and having high-radix switches…. It also turns out that we can build a system the size of Frontier, a multi-exaflop system where 90 percent of the cables in the system are short, cheap, reliable electrical copper cables, and only 10 percent of them have to be optics.”
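To see why a 64-port switch can hold the diameter at three hops while the system grows, here is a minimal Python sketch of textbook Dragonfly sizing. It assumes every switch in a group links directly to every other switch in that group, and every pair of groups shares at least one global link. The 16/31/17 split of the 64 ports between endpoints, local links and global links is an illustrative assumption, not a figure from Scott's talk, and `dragonfly_size` is a hypothetical helper name.

```python
def dragonfly_size(radix, endpoint_ports, local_ports, global_ports):
    """Upper bounds for a fully connected Dragonfly built from one switch type."""
    if endpoint_ports + local_ports + global_ports > radix:
        raise ValueError("port split exceeds the switch radix")
    # All-to-all inside a group: each switch reaches local_ports peers directly.
    switches_per_group = local_ports + 1
    # Each global link leaving a group can reach a different remote group.
    global_links_per_group = switches_per_group * global_ports
    max_groups = global_links_per_group + 1
    return {
        "switches_per_group": switches_per_group,
        "endpoints_per_group": endpoint_ports * switches_per_group,
        "max_groups": max_groups,
        "max_endpoints": endpoint_ports * switches_per_group * max_groups,
        # Worst-case minimal route: local hop, global hop, local hop.
        "diameter_switch_hops": 3,
    }


# Assumed split of the 64 ports (illustrative only).
print(dragonfly_size(radix=64, endpoint_ports=16, local_ports=31, global_ports=17))
```

Under these assumptions the upper bound is a few hundred thousand endpoints, and the worst-case minimal route stays at three switch-to-switch hops however many groups are populated, which is the property Scott describes. The sketch covers minimal routing only.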

“[With Shasta], the way that the network blades and the compute blades are put in the system also gives you the flexibility to put a second generation network, and a third generation network. We will absolutely be going to optics everywhere within a couple of generations without having to worry about redesigning the electrical backplane and that sort of thing. So it gives us a lot of flexibility on the interconnect side as well,” he said.

Watch Scott’s full presentation from the 2020 Rice Oil and Gas conference below for additional details on the Slingshot network, the E1000 ClusterStor system, and the Cray software environment.