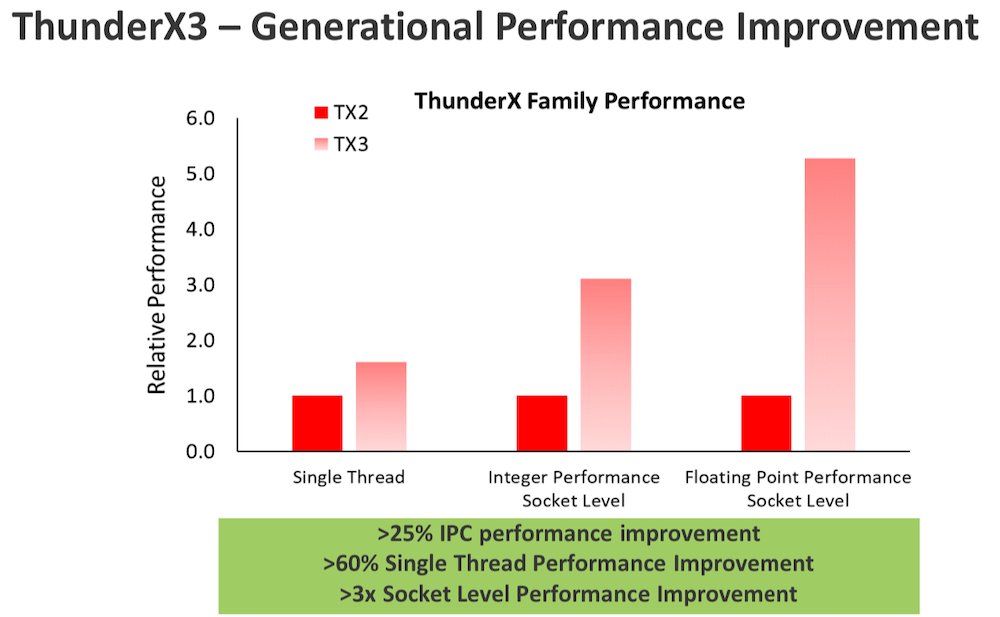

Marvell yesterday released more details about ThunderX3, its next-gen 7nm Arm-based microprocessor, codenamed “Triton,” which it says is now sampling and will be broadly available later in 2020. The new chip will feature up to 96 Arm v8.3+ cores and support four threads per core, thus delivering up to 384 threads per socket. While many details of ThunderX3’s architecture were not disclosed, Marvell says more information will be forthcoming over the next few months. Marvell also took the opportunity to issue a barrage of performance advantage claims over both Intel and AMD CPUs.
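
The per-socket thread figure follows directly from the core count and the number of threads per core. A minimal Python sketch of that arithmetic is shown here; the function name `hardware_threads` and its `sockets` parameter are illustrative assumptions, not anything Marvell has published:

```python
def hardware_threads(cores_per_socket: int, threads_per_core: int, sockets: int = 1) -> int:
    """Return the total number of hardware threads for a system.

    `sockets` is a hypothetical parameter added for illustration; the
    article only states a per-socket figure.
    """
    if min(cores_per_socket, threads_per_core, sockets) < 1:
        raise ValueError("all arguments must be positive integers")
    return cores_per_socket * threads_per_core * sockets


# Top-end ThunderX3 configuration as stated in the article:
# 96 cores per socket, 4 threads per core.
print(hardware_threads(96, 4))  # 384
```

Lower-core-count parts would scale the same way, for example `hardware_threads(48, 4)` for a hypothetical 48-core variant.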

The recent rise of Arm CPUs in servers targeting HPC and the cloud is noteworthy. Arm has long been a force in embedded applications, leveraging its low power consumption attributes. Now, advancing chip features, available silicon, an emerging accelerator strategy, and a significantly expanded ecosystem are invigorating Arm’s server aspirations.

Marvell, through its acquisition of Cavium (announced 2017, completed 2018), is finally starting to enjoy growing success in servers with the ThunderX CPU line. ThunderX2, for example, is being used in high-profile supercomputing projects at Sandia National Laboratories (Astra), Los Alamos National Laboratory, GW4 (the Met Office), and France’s CEA. In the cloud, Microsoft Azure now uses ThunderX2-based clusters for internal purposes, and Marvell says it has deals with 20 other hyperscalers.

Of course Marvell isn’t alone. Fujitsu’s A64FX Arm CPU will power Japan’s “Fugaku” supercomputer to be deployed at RIKEN in 2021, and in November Cray (now HPE) announced a collaboration with Fujitsu to bring out A64FX-based systems.

All things considered, the Arm camp – once thought of as a long shot in mainstream servers, let alone high-end HPC – is making steady headway into server markets. In a pre-briefing on the forthcoming ThunderX3 with HPCwire, Gopal Hegde, vice president and GM, server processors, Marvell, argued that now that the heavy lifting is done, Marvell’s Arm server chip design is inherently better than x86 architecture because it doesn’t need to support legacy architecture or so many diverse device types.

“Intel designed its cores for use in [systems] from laptops and desktops all the way to servers. It’s not optimized for servers. We have no x86 legacy, like 32-bit support and things like that,” said Hegde. “We are able to optimize our code, and our core area is significantly smaller [as a result]. Just to give you an idea, in the previous generation, if you look at ThunderX2, compared to AMD or Skylake, for the same process node technology [we get] roughly 20% to 25% smaller die area. That translates into lower power. When we move to 7nm with ThunderX3, our core compared to AMD Rome’s 7nm is roughly 30% smaller.”

The slides below summarize ThunderX3’s specs, Marvell’s general pitch for ThunderX in advanced computing, and its processor portfolio:

It may be useful to briefly describe Marvell. Founded in 1995, the company reported FY20 revenue of $2.7 billion and has roughly 5,000 employees. Its roots are in storage technology, but the product portfolio and markets served have expanded over the years. Marvell now has three main businesses – processors, networking, and storage – and it bills itself as the largest supplier of Arm server chips.

“Cavium was in the processor business for almost 15 years. Marvell has been shipping Armada (low power SoC) for a similar amount of time. So together, we have shipped hundreds of millions of CPUs over the years. These are multicore CPUs ranging from two cores all the way up to 48 cores now, and even higher (96-core ThunderX3) soon,” said Hegde.

“Octeon and Octeon Fusion are products used in wireless 5G infrastructure. We announced design wins with Samsung and Nokia about a week ago. Octeon processors are [also] very widely used in the embedded market and constitute a pretty significant part of the $2.7 billion in revenue that we did last year. Today we exclusively develop Arm-based processor products,” he said.

The server-oriented ThunderX line targets HPC, the cloud, and, as Marvell puts it, “Arm native applications at cloud and edge.” Hegde contends that Intel’s struggles with process and resulting low core counts have created an opportunity for making gains in single and multi-threaded performance, while AMD’s multi-die-on-a-chip, or chiplet, approach necessarily introduces latency. ThunderX3, said Hegde, is designed to exploit both weaknesses.

Given small die area and the lack of legacy x86 overhead, contends Hegde, it is possible to leverage Arm architecture for lower power consumption, lower cost, and added functionality “into the same monolithic die.” This gives ThunderX3, he argues, improved instructions per cycle (IPC) performance, better thermal design power (TDP), and “really good memory latency and memory bandwidth.” He added, “If you look at the ThunderXs, from that standpoint, you get the best of both worlds. You don’t have to sacrifice core count like x86 Intel, and you don’t have to sacrifice memory latency like AMD.”

It’s true that ThunderX3 still doesn’t have the Arm Scalable Vector Extension (SVE), which Fujitsu’s A64FX has. Hegde said, “It is going to be available in a later processor. The challenge is that compilers needed to take advantage of SVE are still under development. So we’re actually pretty happy to be providing systems so that the compiler toolchain can evolve.”

Below are a few workload and performance comparison slides Hegde presented:

Until fairly recently, the state of the Arm ecosystem – tools, supported OSs, adapter cards, etc. – has been a source of concern among would-be Arm users. That does seem to be changing. One prominent example of change is Nvidia’s decision last summer to support Arm as its accelerator strategy emerged and firmed up.

Hegde noted, “When I started in 2014 [with Cavium], we had two partners. Over the last six years, we have built a very broad ecosystem of over 100 partners across commercial, open source, and industry standard partners. A lot of these are driven through contracts and contracts to platforms that are in the ecosystem. Today there are thousands of ThunderX and ThunderX2 platforms in the ecosystem.

“Not only do we work with several OEMs and ODMs to deliver platforms, but we also have full tools and full collaterals. Pretty much all the operating systems are supported on ThunderX today, ranging from Red Hat, SUSE, and Oracle Linux to Microsoft Windows, VMware, and some of the free community-based operating systems like CentOS and FreeBSD, all the way up to middleware. HPC has been a special area of focus for us. And the number of partners in that space has more than doubled over the last 12 months, and of course cloud, and now also edge computing,” he said.