The Exascale Computing Project (ECP) milestone report issued last week presents a good snapshot of progress in preparing applications for exascale computing. The report covers roughly 30 ECP application development (AD) subprojects (24 applications and six co-design centers), spanning domains such as chemistry, materials, energy, earth and space science, data analytics, and national security. The report is lengthy, so researchers may find it most useful to zero in on application development in their own areas of expertise for directional guidance.

“Each AD application code team must define an application challenge problem that is both scientifically impactful and requires exascale-level resources to execute. Each exascale challenge problem targets a key DOE science or mission need and is the basis for quantitative measurements of success for each of the AD projects,” notes the report.

ECP has established firm goals, settling on three distinct key performance parameters (KPP-1, KPP-2, KPP-3) reflecting the nature of the task, along with specific figures of merit (FOM).

- KPP-1 quantitatively measures the increased capability of applications on exascale platforms compared with their capability on the leadership-class machines available at the start of the project. Each application targeting KPP-1 is required to define a quantitative FOM that represents the rate of science work for their defined exascale challenge problem… For KPP-1, a key concept is the performance baseline, which is a quantitative measure of an application FOM using the fastest computers available at the inception of the ECP against which the final FOM improvement is measured. This includes systems at the ALCF, NERSC, and the OLCF such as Mira, Theta, Cori, and Titan—systems in the 10–20 PFLOP/s range. The expectation is that applications will run at full scale on at least one of these systems to establish the performance baseline.

- KPP-2 is intended to assess the successful creation of new exascale science and engineering DOE mission application capabilities. Applications targeting KPP-2 are required to define an exascale challenge problem that represents a significant capability advance in its area of interest to the DOE… The distinguishing feature of KPP-2 applications relative to those targeting KPP-1 is the amount of new capability that must be developed to enable execution of the exascale challenge problem. Many KPP-2 applications lack sufficient code infrastructure from which to calculate an FOM performance baseline (e.g., they started in the ECP as mere prototypes). Without a well-defined starting point at the 10–20 PFLOP/s scale, it is unclear what FOM improvement would correspond to a successful outcome. A more appropriate measure of success for these applications is whether the necessary capability to execute their exascale challenge problems is in place at the end of the project, not the relative performance improvement throughout the project.

- KPP-3 is used to measure the impact of both co-design software products and the projects in the ECP’s ST (software technology) scope. ECP KPP-3 impact goals and metrics are the primary high-level means of connecting ECP co-design efforts to the ECP effort as a whole. Achieving these KPP-3 impact goals defines how the ECP’s co-design centers are reviewed and how their success is determined.
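The KPP-1 bookkeeping described above comes down to a ratio: an application's measured FOM divided by its baseline FOM. A minimal sketch of that arithmetic follows. The function names, the example numbers, and the `target_factor` threshold are all assumptions made for illustration; none of them are taken from the report.

```python
def fom_improvement(baseline_fom: float, measured_fom: float) -> float:
    """Return the measured FOM as a multiple of the baseline FOM."""
    if baseline_fom <= 0:
        raise ValueError("baseline FOM must be positive")
    return measured_fom / baseline_fom


def meets_target(baseline_fom: float, measured_fom: float, target_factor: float) -> bool:
    """Check a hypothetical improvement target (target_factor is an assumption)."""
    return fom_improvement(baseline_fom, measured_fom) >= target_factor


if __name__ == "__main__":
    # Made-up numbers: a baseline of 2.0 and a later measurement of 10.0,
    # both in the same (application-defined) units of science work per second.
    factor = fom_improvement(2.0, 10.0)
    print(f"improvement factor: {factor:.1f}x")
```

This also shows why KPP-2 applications need a different measure: without a meaningful `baseline_fom`, the ratio is undefined.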

The report is fairly detailed and discusses specific challenges encountered and steps taken to adapt code. Much of the work required is to implement parallelism to make more efficient use of the heterogeneous architectures and varying accelerators (Nvidia, AMD, and Intel) planned for the coming exascale systems.

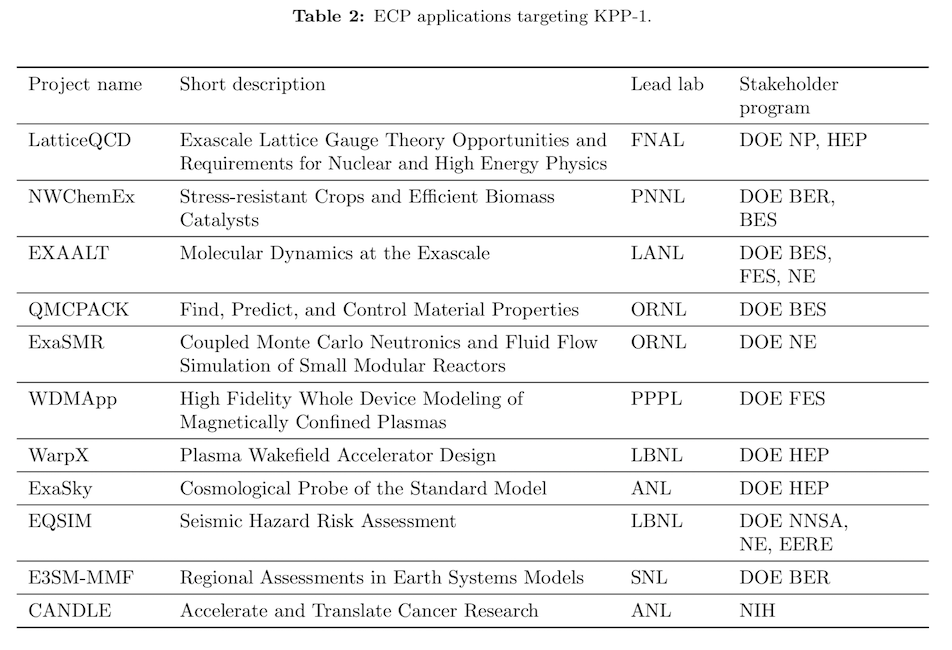

Shown below are lists of applications targeting KPP-1 and KPP-2.

One interesting example is the EXAALT (Exascale Atomistic capability for Accuracy, Length and Time) project; it combines three state-of-the-art codes – LAMMPS, LATTE, and ParSplice – into a unified tool that will leverage exascale platforms across all three dimensions: accuracy, length, and time (ALT).

According to the report, “The new integrated capability will be composed of three software layers. First, a task management layer will enable the creation of MD tasks, their management through task queues, and the storage of results in distributed databases. It will be used to implement various replica-based Accelerated Molecular Dynamics (AMD) techniques, as well as to enable other complex MD workflows. The second layer is a powerful MD engine based on the LAMMPS code. It will offer a uniform interface through which the different physical models can be accessed. The third layer provides a wide range of physical models.”
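To make the first layer more concrete, here is a toy sketch of a task manager that creates MD tasks, holds them in a queue, and stores results in a key-value store. It is not EXAALT or ParSplice code. The names `MDTask`, `TaskManager`, and `run_md_stub` are hypothetical, the "database" is an in-memory dictionary, and the MD engine is a stub that does no physics.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class MDTask:
    task_id: int
    replica: int   # which replica this task belongs to
    n_steps: int   # number of MD steps requested


def run_md_stub(task: MDTask) -> dict:
    """Placeholder for a call into an MD engine; no physics is done here."""
    return {"replica": task.replica, "steps_done": task.n_steps}


class TaskManager:
    def __init__(self) -> None:
        self.queue = deque()   # pending tasks, first in first out
        self.results = {}      # in-memory stand-in for a distributed database
        self._next_id = 0

    def create_task(self, replica: int, n_steps: int) -> int:
        """Create a task, enqueue it, and return its id."""
        task = MDTask(self._next_id, replica, n_steps)
        self._next_id += 1
        self.queue.append(task)
        return task.task_id

    def run_all(self) -> None:
        """Drain the queue serially, storing each result under its task id."""
        while self.queue:
            task = self.queue.popleft()
            self.results[task.task_id] = run_md_stub(task)


if __name__ == "__main__":
    manager = TaskManager()
    for replica in range(4):
        manager.create_task(replica, n_steps=1000)
    manager.run_all()
    print(manager.results)
```

A real implementation would dispatch tasks to many worker processes in parallel; the serial loop here only illustrates the create, queue, and store pattern.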

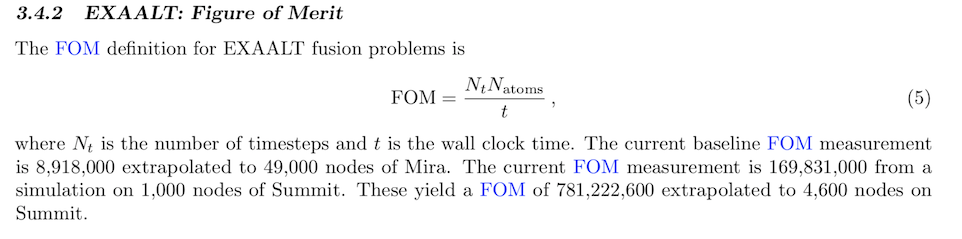

EXAALT’s key performance parameter is tied to a fusion challenge problem: simulating a tungsten surface under conditions typical of plasma-facing materials in fusion reactors. These simulations will use the Parallel Trajectory Splicing technique, as well as a hierarchy of parallelization levels (over coarse domain elements, over replicas, and over fine domain elements).

As noted by ECP, “The task management components of the calculations are very light, so will not have to be ported to the GPUs. The overwhelming majority of the flops in the calculations will be consumed carrying out molecular dynamics simulations on each worker process using the LAMMPS MD code and the SNAP model of interatomic interactions. SNAP is a new generation of machine-learned potential that promises high accuracy in exchange for a rather high computational cost. It is therefore critical to efficiently port the SNAP MD kernels to GPUs in order to achieve optimal performance.”

Here are lightly edited excerpts from the report on EXAALT’s GPU strategy and progress being made:

- GPU Strategy. “Our main approach is to rely on the Kokkos programming model, whose development is also supported by ECP. Kokkos promises portable performance over a wide range of architectures, including Aurora and Frontier. The MD code will be fully ported to Kokkos and will therefore be able to efficiently run on GPUs. In addition, in collaboration with the CoPA (§ 8.2) co-design center and the NERSC Exascale Science Applications Program (NESAP), we are developing a suite of SNAP proxy apps (TestSNAP) implemented using different programming models, including OpenMP, CUDA, and OpenACC. This will allow us the flexibility to assess the relative merits of the different approaches and ensure we have a fall-back solution in place if the deployment of a production-quality Kokkos backend on Aurora and Frontier is delayed. For example, OpenMP will be supported by all upcoming machines and the CUDA version should be convertible to the HIP runtime API relatively easily.”

- Results so far. “Rapid progress on the development of a high-performance implementation of the SNAP kernels has been made over the last year, in preparation for early access to NERSC/Perlmutter and for upcoming exascale machines Aurora and Frontier. This work resulted from a close collaboration between EXAALT, NERSC (through the NESAP program), and CoPA. The development of this new version proceeded by the extraction of a CPU SNAP proxy-app (TestSNAP) from the LAMMPS codebase, its rewrite following the discovery of an algorithmic trick that can reduce the number of executed flops, and the restructuring of its memory layout. These improvements yielded an increase in simulation throughput of roughly 2.4× in CPU performance on the P9s of Summit. This version of TestSNAP formed the basis of the new GPU implementation of the SNAP kernels. This new implementation proceeded from scratch, completely independently of the previous Kokkos implementation. Multiple versions were developed by the team, first using OpenACC, then CUDA, and finally OpenMP offload. TestSNAP was re-engineered throughout the year, resulting in a spectacular increase in performance during the summer of 2019, from about 1 katoms-steps/wall-clock second in April to 40 katoms-steps/s in July. At this point in time, the TestSNAP implementation was ported back to a production version of LAMMPS using Kokkos. This effort yielded an increase of 5.5× in simulation throughput on the V100 of Summit, as compared to the original Kokkos implementation at the beginning of the year.”
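For readers unfamiliar with the throughput unit quoted above, the sketch below works through the arithmetic. It assumes "katoms-steps per wall-clock second" means atoms times timesteps, divided by elapsed seconds and by 1,000; that reading is an assumption, as are the function names and the atom and step counts. Only the "about 1" and "40" figures come from the excerpt.

```python
def katom_steps_per_second(n_atoms: int, n_steps: int, wall_seconds: float) -> float:
    """Throughput in thousands of atom-steps per wall-clock second (assumed definition)."""
    if wall_seconds <= 0:
        raise ValueError("wall_seconds must be positive")
    return n_atoms * n_steps / wall_seconds / 1000.0


def speedup(before: float, after: float) -> float:
    """Ratio of two throughput figures measured in the same units."""
    if before <= 0:
        raise ValueError("before must be positive")
    return after / before


if __name__ == "__main__":
    # Hypothetical run: 1,000 atoms advanced 400 steps in 10 seconds.
    print(katom_steps_per_second(1000, 400, 10.0), "katom-steps/s")

    # Figures quoted in the excerpt: about 1 in April, 40 in July.
    print(f"April to July: roughly {speedup(1.0, 40.0):.0f}x")
```

Because the April figure is given only as "about 1," the resulting ratio is approximate.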

While the latest ECP report focuses on applications, the project’s larger mission is to ensure a full software ecosystem is ready to take advantage of the coming exascale systems.

Link to ECP report: https://www.exascaleproject.org/wp-content/uploads/2020/03/ECP_AD_Milestone-Early-Application-Results_v1.0_20200325_FINAL.pdf