In this bimonthly feature, HPCwire highlights newly published research in the high-performance computing community and related domains. From parallel programming to exascale to quantum computing, the details are here.

Charting the trends of machine learning in Python

“Deep neural networks, along with advancements in classical [machine learning] and scalable general-purpose GPU computing, have become critical components of artificial intelligence,” these authors – a team from the University of Wisconsin-Madison, the University of Maryland, and Nvidia – write. In this paper, they survey the field of machine learning with Python, identifying the core hardware and software paradigms that have made Python the preferred language for much of this activity.

Authors: Sebastian Raschka, Joshua Patterson and Corey Nolet.

Identifying wildfires with HPC and deep learning

As climate change looms, dangerous wildfires have become unfortunately prevalent in many parts of the world. These researchers, a duo from the P.G. Demidov Yaroslavl State University in Russia, used an Nvidia DGX-1 system to train a neural network to identify wildfires in a series of nearly two thousand satellite images. The researchers report that the resulting algorithm is suitable for early wildfire detection in the real world.

Authors: Vladimir Khryashchev and Roman Larionov.

Simulating large-scale models of brain circuits with Google Cloud Platform

More and more, researchers are turning to cloud-based HPC to meet their intensive computing needs. This team of researchers from several universities, two hospitals and Google developed a detailed model of the brain’s motor cortex circuits, including more than 10,000 neurons and 30 million connections, using Google Cloud Platform. Each simulated second, the authors say, required 50 core hours.

Authors: Subhashini Sivagnanam, Wyatt Gorman, Donald Doherty, Samuel A. Neymotin, Stephen Fang, Hermine Hovhannisyan, William W. Lytton and Salvador Dura-Bernal.

Benchmarking Microsoft Azure virtual machines for HPC applications

With cloud HPC on the rise, researchers are turning their attention to the relative performance of different cloud options for HPC applications. In this paper, written by a team from the University of Jordan, the authors conduct a performance analysis of Microsoft Azure virtual machines using NASA’s NAS parallel benchmarks for HPC.

Authors: Rawan Aljamal, Ali El-Mousa and Fahed Jubair.

Simulating 100 million atoms on Summit

These authors, a team from four universities in China and the U.S., present a GPU adaptation of the DeePMD tool, which uses a deep neural network to drive extremely large molecular dynamics simulations. After testing the new version of the tool on Summit – the world’s fastest publicly ranked supercomputer – the authors concluded that “the GPU version is seven times faster than the CPU version with the same power consumption.”

Authors: Denghui Lu, Han Wang, Mohan Chen, Jiduan Liu, Lin Lin, Roberto Car, Weinan E, Weile Jia and Linfeng Zhang.

Scaling neural networks for geophysics on supercomputers

Numerical simulation is often computationally expensive, leading geophysics researchers to search for surrogate modeling techniques through deep learning. In this paper, researchers from Argonne National Laboratory discuss the development of a scalable neural architecture for a temperature forecasting model. The model was scaled up using several different techniques on more than 500 nodes of Argonne’s Theta supercomputer.

Authors: Romain Egele, Bethany Lusch and Prasanna Balaprakash.

Developing an app for smoothed particle hydrodynamics at exascale

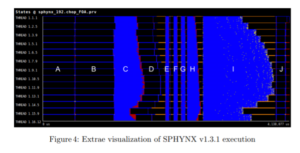

Smoothed particle hydrodynamics (SPH) is used in numerical simulations of fluids in astrophysics, computational fluid dynamics and many other fields. This paper, written by a team from Switzerland, presents the SPH-EXA project, which aims to develop an exascale-ready SPH app. As a starting point, the authors examine three different SPH codes and work to consolidate them into a well-optimized mini-app for exascale.

Authors: Danilo Guerrera, Rubén M. Cabezón, Jean-Guillaume Piccinali, Aurélien Cavelan, Florina M. Ciorba, David Imbert, Lucio Mayer and Darren Reed.

Do you know about research that should be included in next month’s list? If so, send us an email at [email protected]. We look forward to hearing from you.